Abstract

Fact-checking agencies assess and score the truthfulness of politicians’ claims to foster their electoral accountability. Fact-checking is sometimes presented as a quasi-scientific activity, based on reproducible verification protocols that would guarantee an unbiased assessment. We study these verification protocols and discuss the conditions under which fact-checking could achieve effective reproducibility. Through an analysis of the methodological norms in verification protocols, we argue that achieving reproducible fact-checking may do little to render politicians accountable. Political fact-checkers currently deliver neither reproducibility nor accountability, and there are reasons to think that traditional quality journalism may serve liberal democracies better.

Notes

Mark Stencel and Joel Luther are responsible for updating the Duke Reporters’ Lab database of fact-checking sites every year, following criteria available at https://reporterslab.org/how-we-identify-fact-checkers/ (Last visited on July 21, 2023).

Throughout this paper we use quality journalism as an informal shorthand for reporting characterized by features such as trustworthiness; diversity of sources, coverage, etc.; depth and breadth of information; comprehensiveness; or an emphasis on public affairs. See (Lacy & Rosenstiel, 2015).

This paper is the theoretical companion to two empirical surveys examining the actual effects of PFC on political accountability. The first has already been published (Fernández-Roldán et al., 2023).

Galison (2015) explores some analogies in the evolution of the ideal of objectivity in science and journalism. Crucial for our argument below is that public trust no longer seems to depend on the value-free ideal, in either science or journalism (Elliott, 2017). How trust in these two institutions should be rebuilt remains open to discussion; for a preliminary exploration in line with our own view, see (de Melo-Martín & Intemann, 2018).

The IFCN Code of Principles is available at https://ifcncodeofprinciples.poynter.org/know-more/the-commitments-of-the-code-of-principles (Last visited on July 21, 2023).

The relevant passage says: “Signatories want their readers to be able to verify findings themselves. Signatories provide all sources in enough detail that readers can replicate their work, except in cases where a source’s personal security could be compromised. In such cases, signatories provide as much detail as possible.”

Although the IFCN speaks of replicability, its definition is consistent with the way reproducibility is understood in the metaresearch literature (Goodman et al., 2016). It would make no sense, for instance, to speak of the direct or indirect replication of a fact-checking protocol since, unlike most scientific experiments, these protocols do not track causal interventions (see Sect. 4 below).

A reviewer suggests an alternative interpretation: conflict of interest rules aim to secure fair coverage rather than reproducibility. Avoiding conflicts of interest would “screen out biases that could affect the overall picture of political discussions an agency creates over time”, e.g., by keeping a balance in verification across the different parties or by selecting claims according to their reach and importance. Fair coverage would, in fact, be independent of reproducibility. We agree that this is a plausible interpretation of the conflict of interest rules, but readers of the IFCN code are still left wondering how the guidelines will foster reproducibility. In any case, this does not affect our claim, since conflict of interest rules are also said to contribute to reproducibility, via bias correction, in various experimental disciplines; for clinical trials see, for instance, (Lundh et al., 2017).

We do not imply that replicability in science is entirely rule-based, with no role for tacit knowledge. Still, insufficiently explicit experimental protocols have been identified as a relevant factor in the replicability crisis in several disciplines (Andreoletti, 2020).

There is an emerging literature on potential biases in PFC, particularly on how evenly fact-checkers distribute their attention across political parties. The most prominent of these potential biases would be a differential treatment of politicians according to their ideology. Although the evidence is still preliminary, according to the fact-checks of some leading US agencies, right-wing politicians would lie systematically more than their left-wing peers (see Amazeen, 2016; Farnsworth & Lichter, 2019; Marietta et al., 2015). See also (Fernández-Roldán et al., 2023) for a methodological discussion.

A reviewer asks whether the appropriate benchmark for assessing the reproducibility of PFC should not rather be the qualitative social sciences (e.g., history). It has been argued that reproducibility standards differ across disciplines (Leonelli, 2018). In fields with a low degree of control over the environment, where statistical tools are rarely used, reproducibility requires at most that “any skilled experimenter working with same methods and materials would produce similar results”. This is the most that standard-based PFC could achieve, assuming that PFC agencies could operate without the practical constraints of traditional journalism (limited resources and tight publication deadlines). But even in those ideal circumstances, as we argue in Sect. 5, it is doubtful that standard-based PFC would foster political accountability better than standard journalism.

Rational choice models of accountability and the media face some underlying dilemmas. Downs (1957) was the first to show that, although learning about candidates and their policies is beneficial for voters, no individual voter can alter an election outcome with a single ballot, so it is rational for each of them not to invest in any learning. Publishing the information required for rendering politicians accountable can thus be conceived as a problem of privately providing a public good, for which solutions exist only under a limited range of circumstances (Bruns & Himmler, 2016).
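The rational-ignorance argument can be made explicit with a stylized expected-benefit comparison. The notation is ours, not Downs's, and is offered only as an illustration: p is the probability that a single voter's ballot is pivotal, B the benefit to that voter of electing the better candidate thanks to being informed, and C the cost of acquiring the information.

```latex
\text{invest in learning} \iff p\,B > C,
\qquad
p \approx 0 \;\Rightarrow\; p\,B \approx 0 < C \quad \text{for any finite } B \text{ and any } C > 0 .
```

Because the benefit of being informed only materializes if one's vote changes the outcome, the expected benefit is discounted by p, which is vanishingly small in a large electorate; hence no individual voter has an incentive to pay for the information, which is why it takes on the character of a public good.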

This is, of course, nothing but a conjecture, theoretically inspired by the law and economics analysis of avoidance. Criminals engage in avoidance activities to reduce the probability or the magnitude of punishment, for instance by covering up incriminating evidence or abusing evidentiary rules and procedures. Against a naive view of accountability, law and economics scholars have shown that, under standard economic rationality, increasing punishment may lead criminals to more avoidance instead of deterring them (Nussim & Tabbach, 2009). In our case, the cost for a politician of avoiding a rule-based fact-checker is so low (a mere play on words) that we may safely assume they will try it rather than face the electoral penalties of being caught lying.
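The conjecture can be read through a simple decision rule, in the spirit of (but not reproduced from) Nussim and Tabbach (2009). Assume, purely for illustration, that a politician making a false claim faces an electoral penalty S with probability q of being rated false by a rule-based fact-checker, and that rephrasing the claim at cost a lowers that probability to q' < q. These symbols are hypothetical and do not appear in the cited work.

```latex
\text{avoid} \iff a < (q - q')\,S,
\qquad
\frac{\partial}{\partial S}\bigl[(q - q')\,S\bigr] = q - q' > 0 .
```

The right-hand side grows with S, so raising the penalty widens the range of avoidance costs worth paying; and when a is close to zero, as with a mere play on words, the inequality holds for almost any positive penalty.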

Experimental research suggests that the measurable effects of fact-checks on vote change are small (Nyhan et al., 2020).

Newtral’s truth scores and methodology are available at https://www.newtral.es/metodologia-transparencia/ (Last visited on July 21, 2023). The truth scores are described as follows: “True: the claim is rigorous and there is neither context nor relevant additional data missing. Half true: the claim is correct, although it needs clarification, additional information or context. Misleading: the claim contains correct data, but neglects very relevant elements (sic) or mixes incorrect data conveying an impression different (sic), imprecise or false. False: the claim is false” (our translation; the passages marked (sic) are ungrammatical in the original Spanish as well). Newtral has been positively assessed by the IFCN six times over the last seven years: https://ifcncodeofprinciples.poynter.org/profile/newtral (Last visited on July 21, 2023).

References

Amazeen, M. A. (2016). Checking the fact-checkers in 2008: Predicting Political Ad Scrutiny and assessing consistency. Journal of Political Marketing, 15(4), 433–464. https://doi.org/10.1080/15377857.2014.959691.

Amazeen, M. A., Thorson, E., Muddiman, A., & Graves, L. (2018). Correcting political and consumer misperceptions: The Effectiveness and effects of Rating Scale Versus Contextual correction formats. Journalism and Mass Communication Quarterly, 95(1), 28–48. https://doi.org/10.1177/1077699016678186.

Andreoletti, M. (2020). Replicability Crisis and Scientific reforms: Overlooked issues and Unmet challenges. International Studies in the Philosophy of Science, 33(3), 135–151. https://doi.org/10.1080/02698595.2021.1943292.

Andreoletti, M., & Teira, D. (2019). Rules versus standards: What are the costs of epistemic norms in drug regulation? Science Technology and Human Values, 44(6), 1093–1115. https://doi.org/10.1177/0162243919828070.

Birks, J. (2019). Fact-checking journalism and political argumentation: A British perspective. Palgrave. https://doi.org/10.1007/978-3-030-30573-4.

Braun, J. A., & Eklund, J. L. (2019). Fake news, real money: Ad tech platforms, profit-driven hoaxes, and the business of Journalism. Digital Journalism, 7(1), 1–21. https://doi.org/10.1080/21670811.2018.1556314.

Breen, M., & Gillanders, R. (2020). Press freedom and corruption perceptions: Is there a reputational premium? Politics and Governance, 8(2), 103–115. https://doi.org/10.17645/pag.v8i2.2697.

Bruns, C., & Himmler, O. (2016). Mass media, instrumental information, and electoral accountability. Journal of Public Economics, 134, 75–84. https://doi.org/10.1016/j.jpubeco.2016.01.005.

Carson, A., Gibbons, A., Martin, A., & Phillips, J. B. (2022). Does third-party fact-checking increase trust in news stories? An Australian case study using the sports rorts Affair. Digital Journalism, 10(5), 801–822. https://doi.org/10.1080/21670811.2022.2031240.

Chang, H. (2004). Inventing temperature: Measurement and scientific progress. Oxford University Press. https://doi.org/10.1093/0195171276.001.0001.

de Melo-Martín, I., & Intemann, K. (2009). How do disclosure policies fail? Let us count the ways. The FASEB Journal, 23(6), 1638–1642. https://doi.org/10.1096/fj.08-125963.

de Melo-Martín, I., & Intemann, K. (2018). The fight against doubt: How to Bridge the gap between scientists and the Public. Oxford University Press. https://doi.org/10.1093/oso/9780190869229.001.0001.

Downs, A. (1957). An economic theory of political action in a democracy. Journal of Political Economy, 65(2), 135–150. https://doi.org/10.1086/257897.

Elías, C. (2019). Science on the ropes. Springer. https://doi.org/10.1007/978-3-030-12978-1.

Elliott, K. C. (2017). A tapestry of values: An introduction to values in Science. Oxford University Press. https://doi.org/10.1093/acprof:oso/9780190260804.001.0001.

Farnsworth, S., & Lichter, R. (2019). Partisan targets of media fact-checking: Examining President Obama and the 113th Congress. Virginia Social Science Journal, 53, 51–62.

Fernández-Roldán, A., Elías, C., Santiago-Caballero, C., & Teira, D. (2023). Can we detect Bias in political Fact-Checking? Evidence from a Spanish case study. Journalism Practice. https://doi.org/10.1080/17512786.2023.2262444.

Fidler, F., & Wilcox, J. (2018). Reproducibility of scientific results. In E. N. Zalta (Ed.), The Stanford Encyclopedia of Philosophy. Metaphysics Research Lab - Stanford University.

Galison, P. (2015). The Journalist, the Scientist, and Objectivity. In F. Padovani, A. Richardson, & J. Y. Tsou (Eds.), Objectivity in Science (pp. 57–75). Springer. https://doi.org/10.1007/978-3-319-14349-1_4.

Gans, H. J. (2004). Democracy and the News. Oxford University Press.

Goodman, S. N., Fanelli, D., & Ioannidis, J. P. A. (2016). What does research reproducibility mean? Science Translational Medicine, 8(341), 341ps12.

Graves, L. (2016). Deciding what’s true: The rise of political fact-checking in American journalism. Columbia University Press.

Graves, L. (2018). Boundaries not drawn: Mapping the institutional roots of the global fact-checking movement. Journalism Studies, 19(5), 613–631. https://doi.org/10.1080/1461670X.2016.1196602.

Graves, L., & Cherubini, F. (2016). The rise of fact-checking sites in Europe. Reuters Institute for the Study of Journalism, University of Oxford. https://reutersinstitute.politics.ox.ac.uk/our-research/rise-fact-checking-sites-europe.

Graves, L., & Konieczna, M. (2015). Sharing the news: Journalistic collaboration as field repair. International Journal of Communication, 9(1), 1966–1984.

Guo, Z., Schlichtkrull, M., & Vlachos, A. (2022). A survey on automated fact-checking. Transactions of the Association for Computational Linguistics, 10, 1–28. https://doi.org/10.1162/tacl_a_00454.

Hansson, S. O. (2018). How connected are the major forms of irrationality? An analysis of pseudoscience, science denial, fact resistance and alternative facts. Mètode, 8, 125–131. https://doi.org/10.7203/metode.8.10005.

Hodge, D. R. (2007). A systematic review of the empirical literature on intercessory prayer. Research on Social Work Practice, 17(2), 174–187. https://doi.org/10.1177/1049731506296170.

Johnson, R. L., & Morgan, G. B. (2016). Survey scales: A guide to Development, Analysis and Reporting. Guilford Press.

Kaplow, L. (1992). Rules versus standards: An economic analysis. Duke Law Journal, 42(3), 557–629.

Knobel, B. (2018). The Watchdog still barks. Fordham University Press. https://doi.org/10.2307/j.ctt201mpdz.

Lacy, S., & Rosenstiel, T. (2015). Defining and measuring quality journalism. Rutgers School of Communication and Information. http://mpii.rutgers.edu/wp-content/uploads/sites/129/2015/04/Defining-and-Measuring-QualityJournalism.pdf.

Lazarski, E., Al-Khassaweneh, M., & Howard, C. (2021). Using NLP for fact checking: A survey. Designs, 5(3), 1–22. https://doi.org/10.3390/designs5030042.

Leonelli, S. (2018). Rethinking reproducibility as a criterion for research quality. Research in the History of Economic Thought and Methodology, 36(B), 129–146. https://doi.org/10.1108/S0743-41542018000036B009.

Lim, C. (2018). Checking how fact-checkers check. Research and Politics, 5(3), 1–7. https://doi.org/10.1177/2053168018786848.

Lundh, A., Lexchin, J., Mintzes, B., Schroll, J. B., & Bero, L. (2017). Industry sponsorship and research outcome. Cochrane Database of Systematic Reviews, 2(2), MR000033. https://doi.org/10.1002/14651858.MR000033.PUB3.

Mansbridge, J. (2014). A contingency theory of accountability. In M. Bovens, R. Goodin, & T. Schillemans (Eds.), The Oxford Handbook of Public Accountability (pp. 55–68). Oxford University Press.

Mantzarlis, A. (2018). Fact-Checking 101. In Journalism, fake news & disinformation: Handbook for journalism education and training. UNESCO. https://en.unesco.org/node/296054.

Marietta, M., Barker, D. C., & Bowser, T. (2015). Fact-checking polarized politics: Does the fact-check industry provide consistent guidance on disputed realities? The Forum, 13(4), 577–596. https://doi.org/10.1515/for-2015-0040.

Meyers, C. (2020). Partisan news, the myth of objectivity, and the standards of responsible journalism. Journal of Media Ethics: Exploring Questions of Media Morality, 35(3), 180–194. https://doi.org/10.1080/23736992.2020.1780131.

Norton, J. D. (2015). Replicability of experiment. Theoria, 30(2), 229–248. https://doi.org/10.1387/theoria.12691.

Nussim, J., & Tabbach, A. D. (2009). Deterrence and avoidance. International Review of Law and Economics, 29(4), 314–323. https://doi.org/10.1016/j.irle.2009.05.001.

Nyhan, B., Porter, E., Reifler, J., & Wood, T. J. (2020). Taking fact-checks literally but not seriously? The effects of Journalistic Fact-checking on factual beliefs and candidate favorability. Political Behavior, 42(3), 939–960. https://doi.org/10.1007/s11109-019-09528-x.

Stalph, F. (2018). Truth Corrupted: The Role of Fact-Based Journalism in a Post-Truth Society. In O. Hahn & F. Stalph (Eds.), Digital Investigative Journalism: Data, Visual Analytics and Innovative Methodologies in International Reporting (pp. 237–248). Palgrave Macmillan. https://doi.org/10.1007/978-3-319-97283-1_22.

Star, S. L., & Collins, H. M. (1988). Changing Order: Replication and induction in scientific practice. The University of Chicago. https://doi.org/10.2307/3105095.

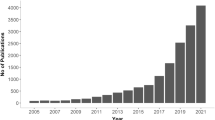

Stencel, M., & Griffin, R. (2018, February 22). Fact-checking triples over four years. In Duke Reporters’ Lab. https://reporterslab.org/fact-checking-triples-over-four-years/.

Stencel, M., & Luther, J. (2020, October 13). Fact-checking count tops 300 for the first time. In Duke Reporters’ Lab. https://reporterslab.org/fact-checking-count-tops-300-for-the-first-time/.

Stencel, M., Ryan, E., & Luther, J. (2022, June 17). Fact-checkers extend their global reach with 391 outlets, but growth has slowed. In Duke Reporters’ Lab. https://reporterslab.org/fact-checkers-extend-their-global-reach-with-391-outlets-but-growth-has-slowed/.

Strömberg, D. (2016). Media Coverage and political accountability: Theory and evidence. In S. P. Anderson, J. Waldfogel, & D. Strömberg (Eds.), Handbook of Media Economics (pp. 595–622). North-Holland.

Teira, D. (2016). Debiasing methods and the acceptability of experimental outcomes. Perspectives on Science, 24(6), 722–743. https://doi.org/10.1162/POSC_a_00230.

Thorne, J., & Vlachos, A. (2018). Automated fact checking: Task formulations, methods and future directions. COLING 2018–27th International Conference on Computational Linguistics, Proceedings. https://doi.org/10.48550/arXiv.1806.07687.

Uscinski, J. E. (2015). The epistemology of fact checking (is still Naìve): Rejoinder to Amazeen. Critical Review, 27(2), 243–252. https://doi.org/10.1080/08913811.2015.1055892.

Uscinski, J. E., & Butler, R. W. (2013). The epistemology of fact checking. Critical Review, 25(2), 162–180. https://doi.org/10.1080/08913811.2013.843872.

Vargo, C. J., Guo, L., & Amazeen, M. A. (2018). The agenda-setting power of fake news: A big data analysis of the online media landscape from 2014 to 2016. New Media and Society, 20(5), 2028–2049. https://doi.org/10.1177/1461444817712086.

Del Vicario, M., Bessi, A., Zollo, F., Petroni, F., Scala, A., Caldarelli, G., Stanley, H. E., & Quattrociocchi, W. (2016). The spreading of misinformation online. Proceedings of the National Academy of Sciences of the United States of America, 113(3), 554–559. https://doi.org/10.1073/pnas.1517441113.

Walter, N., Cohen, J., Holbert, R. L., & Morag, Y. (2020). Fact-Checking: A Meta-analysis of what works and for whom. Political Communication, 37(3), 350–375. https://doi.org/10.1080/10584609.2019.1668894.

Zeng, X., Abumansour, A. S., & Zubiaga, A. (2021). Automated fact-checking: A survey. Language and Linguistics Compass, 15(10), 178–206. https://doi.org/10.1111/lnc3.12438.

Acknowledgements

Two grants from the Spanish Ministry of Science and Innovation funded our research (RTI2018-097709-B-I00 and PID2021-128835NB-I00). This manuscript has benefited from comments by audiences in Copenhagen (The reactivity project), Paris (IHPST) and Twente (Department of Philosophy). Carlos Elías, Javier González de Prado and Ezequiel López Rubio provided useful suggestions.

Ethics declarations

Conflict of interest

We have no conflict of interest to declare.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Fernández-Roldán, A., Teira, D. The epistemic status of reproducibility in political fact-checking. Euro Jnl Phil Sci 14, 12 (2024). https://doi.org/10.1007/s13194-024-00575-8

DOI: https://doi.org/10.1007/s13194-024-00575-8