Abstract

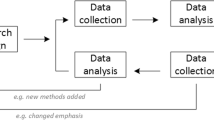

Sequential Multiple Assignment Randomized Trials (SMARTs) are increasing in popularity in behavioral science research, with over 130 federally funded studies currently active. SMARTs use multiple randomizations to experimentally evaluate the impact of different decisions during an ongoing adaptive intervention. While many aspects of SMARTs are similar to more traditional single-randomization trials, the additional complexity and variability introduced by subsequent randomizations require greater specificity in reporting. Given the increase in the application and reporting of SMARTs, this paper serves as a guide to support transparent reporting of adaptive intervention research. Further, these guidelines provide the detail needed to ensure that future meta-analyses examining SMARTs can be robust. We provide recommendations for reporting and describe where reporting in SMARTs warrants additional detail above and beyond that of a traditional randomized trial.

About this article

Cite this article

Hampton, L.H., Chow, J.C., Bhat, B.H. et al. Reporting and Design Considerations for SMART Behavioral Science Research. Educ Psychol Rev 35, 117 (2023). https://doi.org/10.1007/s10648-023-09837-y

DOI: https://doi.org/10.1007/s10648-023-09837-y