Abstract

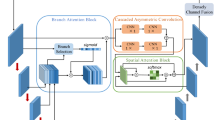

For weed detection in wheat fields, identifying weeds against the complex field background from single-modal red–green–blue (RGB) images is difficult because grass weeds and wheat look very similar. To overcome the limitations of single-modal information in grass weed detection, a dual-path weed detection network (WeedsNet) based on multi-modal information is proposed. First, the single-channel depth image is encoded as a three-channel image with a structure similar to the RGB color space, which makes it suitable for feature extraction with a convolutional neural network (CNN). Then, WeedsNet, which comprises a dual-path feature extraction network, is constructed to extract weed features from RGB and depth images simultaneously. Finally, weights are assigned to the features of each modality by combining the idea of multi-scale object detection with an attention mechanism, so that the multi-modal information is fused effectively. Interpretability analysis of the model shows that depth information helps detect grass weeds and, by complementing RGB image features, effectively improves weed detection accuracy in wheat fields. WeedsNet achieves higher detection accuracy than both traditional machine learning methods and integrated learning-based weight assignment methods. In natural wheat fields, WeedsNet reaches a weed detection accuracy of 62.3%, with a detection time of about 0.5 s per image. Weed detection software based on WeedsNet was designed and developed to output weed distribution information in natural wheat fields in real time.
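The abstract does not say how the single-channel depth image is turned into a three-channel image. A known option in RGB-D work is HHA encoding (horizontal disparity, height above ground, angle with gravity); a simpler option is a colormap. The sketch below assumes a jet-style colormap over a chosen depth range purely to illustrate the idea; `jet`, `encode_depth`, the depth range, and treating a value of 0 as a missing sensor reading are hypothetical choices, not the WeedsNet encoding.

```python
"""Hypothetical depth-to-pseudo-RGB encoding using a jet-style colormap.

Assumption: this is NOT necessarily the encoding used by WeedsNet; it only
illustrates turning a 1-channel depth map into a 3-channel 8-bit image.
"""
from typing import List, Tuple

Pixel = Tuple[int, int, int]


def _clamp01(x: float) -> float:
    return min(max(x, 0.0), 1.0)


def jet(t: float) -> Pixel:
    """Map t in [0, 1] to an (R, G, B) triple with a piecewise-linear jet colormap."""
    t = _clamp01(t)
    r = _clamp01(1.5 - abs(4.0 * t - 3.0))
    g = _clamp01(1.5 - abs(4.0 * t - 2.0))
    b = _clamp01(1.5 - abs(4.0 * t - 1.0))
    return (round(255 * r), round(255 * g), round(255 * b))


def encode_depth(depth: List[List[float]], d_min: float, d_max: float,
                 invalid: float = 0.0) -> List[List[Pixel]]:
    """Normalise depth over [d_min, d_max] and colour-map every pixel.

    Pixels equal to `invalid` (assumed here to mark missing readings) become black.
    """
    if d_max <= d_min:
        raise ValueError("d_max must be greater than d_min")
    span = d_max - d_min
    encoded = []
    for row in depth:
        encoded_row = []
        for d in row:
            if d == invalid:
                encoded_row.append((0, 0, 0))
            else:
                encoded_row.append(jet((d - d_min) / span))
        encoded.append(encoded_row)
    return encoded


if __name__ == "__main__":
    # Toy 2x2 depth map in millimetres; 0.0 stands for a missing reading.
    toy_depth = [[500.0, 750.0], [1000.0, 0.0]]
    print(encode_depth(toy_depth, d_min=500.0, d_max=1000.0))
```

Colormap encoding keeps the output 8-bit and three-channel, so a CNN stem designed for RGB input can consume it without changes; whether it works better than HHA or simply replicating the depth channel three times is an empirical question.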

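The modality-weighting step can be pictured as computing, at each feature-pyramid level, one weight per modality and blending the RGB and depth feature maps with those weights. This sketch assumes flattened, equally sized feature maps per level and uses a softmax over globally average-pooled activations as a parameter-free stand-in for a learned attention gate (the pooling mirrors the squeeze step of squeeze-and-excitation blocks). The names `modality_weights` and `fuse_multiscale` are hypothetical, and this is not the WeedsNet fusion module.

```python
"""Hypothetical multi-scale, attention-style weighting of RGB and depth features.

Assumptions: each pyramid level gives one flattened feature map per modality,
both of equal length; the weights come from pooled activations, not learned layers.
"""
import math
from typing import List

FeatureMap = List[float]  # one flattened feature map (illustrative simplification)


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def modality_weights(rgb: FeatureMap, depth: FeatureMap) -> List[float]:
    """Return [w_rgb, w_depth], summing to 1, from globally average-pooled activations."""
    pooled = [sum(rgb) / len(rgb), sum(depth) / len(depth)]
    return softmax(pooled)


def fuse_multiscale(rgb_pyramid: List[FeatureMap],
                    depth_pyramid: List[FeatureMap]) -> List[FeatureMap]:
    """Blend RGB and depth features level by level with per-level modality weights."""
    if len(rgb_pyramid) != len(depth_pyramid):
        raise ValueError("both pyramids need the same number of levels")
    fused = []
    for rgb, depth in zip(rgb_pyramid, depth_pyramid):
        if len(rgb) != len(depth):
            raise ValueError("feature maps at one level must have equal size")
        w_rgb, w_depth = modality_weights(rgb, depth)
        fused.append([w_rgb * a + w_depth * b for a, b in zip(rgb, depth)])
    return fused


if __name__ == "__main__":
    # Two toy pyramid levels: equal evidence at level 0, RGB-dominated at level 1.
    rgb_levels = [[1.0, 1.0], [2.0, 0.0]]
    depth_levels = [[1.0, 1.0], [0.0, 0.0]]
    print(fuse_multiscale(rgb_levels, depth_levels))
```

In a trained network the pooled descriptors would pass through learned layers before the softmax, so that the weights reflect which modality is informative at each scale rather than which one simply has larger activations.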
Acknowledgements

This work was supported by the National Natural Science Foundation of China (Grant No. 31871524), the Modern Agricultural machinery equipment & technology demonstration and promotion of Jiangsu Province (Grant No. NJ2021-58), the Primary Research & Development Plan of Jiangsu Province of China (Grant No. BE2021304), and the Six Talent Peaks Project in Jiangsu Province (Grant No. XYDXX-049).

Author information

Contributions

KX: Methodology, software, data curation and writing (original draft). PY: Writing, review and editing. QX: Experiment design and data collection. YZ: Supervision and validation. WC: Supervision, review and editing. JN: Supervision, writing, review, editing and funding acquisition.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Xu, K., Yuen, P., Xie, Q. et al. WeedsNet: a dual attention network with RGB-D image for weed detection in natural wheat field. Precision Agric 25, 460–485 (2024). https://doi.org/10.1007/s11119-023-10080-2
