Abstract

Classification techniques are useful for a variety of business applications, but classification algorithms perform poorly on imbalanced data. In this study, we propose a classification technique that improves binary classification performance on both the minority and majority classes of imbalanced structured data. The proposed framework consists of three steps. First, a balanced training set is created via under-sampling. Second, each example is converted into an image depicting a line graph. Third, a Convolutional Neural Network (CNN) is trained on the images. In the experiments, we applied the proposed framework to six datasets selected from the UCI Repository. The proposed model achieved the best receiver operating characteristic (ROC) curve on all six datasets and the best Balanced Accuracy (BA) on five of them. This demonstrates that the combination of under-sampling and CNNs is a viable approach to imbalanced structured data classification.
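
The first two steps of the framework, together with the BA metric, can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract does not say which under-sampling method is used, so random under-sampling is assumed here, and the function names, the 0/1 label encoding, the 16 x 16 image size, and the scaling of feature values to [0, 1] are all hypothetical choices made for illustration. CNN training (the third step) is omitted.

```python
import random


def undersample(X, y, seed=0):
    """Randomly drop rows of the larger class until both classes are equal in size.

    Assumes binary labels encoded as 0 and 1 (an assumption of this sketch).
    """
    rng = random.Random(seed)
    by_class = {0: [], 1: []}
    for features, label in zip(X, y):
        by_class[label].append(features)
    n = min(len(by_class[0]), len(by_class[1]))
    balanced = []
    for label, rows in by_class.items():
        for features in rng.sample(rows, n):
            balanced.append((features, label))
    rng.shuffle(balanced)
    return [f for f, _ in balanced], [l for _, l in balanced]


def line_graph_image(features, height=16, width=16):
    """Rasterize a feature vector as a line graph on a height x width binary grid.

    Feature values are assumed to be pre-scaled to [0, 1]; values outside
    that range are clipped. Row 0 is the top of the image.
    """
    image = [[0] * width for _ in range(height)]
    n = len(features)
    points = []
    for i, value in enumerate(features):
        col = round(i * (width - 1) / (n - 1)) if n > 1 else 0
        clipped = min(max(value, 0.0), 1.0)
        row = (height - 1) - round(clipped * (height - 1))
        points.append((col, row))
        image[row][col] = 1
    # Connect consecutive points with straight pixel segments.
    for (c0, r0), (c1, r1) in zip(points, points[1:]):
        steps = max(abs(c1 - c0), abs(r1 - r0), 1)
        for s in range(steps + 1):
            col = round(c0 + (c1 - c0) * s / steps)
            row = round(r0 + (r1 - r0) * s / steps)
            image[row][col] = 1
    return image


def balanced_accuracy(y_true, y_pred):
    """Mean of the true positive rate and the true negative rate."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    pos = sum(1 for t in y_true if t == 1)
    neg = len(y_true) - pos
    return 0.5 * (tp / pos + tn / neg)
```

In such a pipeline, `undersample` would be applied to the training split only, each balanced row would be passed through `line_graph_image`, and the resulting grids would serve as single-channel inputs to a CNN. BA is reported alongside ROC because it weights the minority and majority classes equally regardless of their sizes.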

Similar content being viewed by others

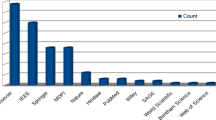



Cite this article

Lee, Y.S., Bang, C.C. Framework for the Classification of Imbalanced Structured Data Using Under-sampling and Convolutional Neural Network. Inf Syst Front 24, 1795–1809 (2022). https://doi.org/10.1007/s10796-021-10195-9


DOI: https://doi.org/10.1007/s10796-021-10195-9