Abstract

The minibatching technique has been extensively adopted to facilitate stochastic first-order methods because of its computational efficiency in parallel computing for large-scale machine learning and data mining. Indeed, increasing the minibatch size decreases the iteration complexity (the number of minibatch queries) required to converge, which reduces the running time when each minibatch is processed in parallel. However, this gain usually saturates when the minibatch size is too large, and the total computational complexity (the number of accesses to individual examples) deteriorates. Hence, determining an appropriate minibatch size, which controls the trade-off between the iteration and total computational complexities, is important for maximizing the performance of the method with as few computational resources as possible. In this study, we define the optimal minibatch size as the minimum minibatch size for which there exists a stochastic first-order method that achieves the optimal iteration complexity, and we call such a method an optimal minibatch method. Moreover, we show that Katyusha (Allen-Zhu, in: Proceedings of annual ACM SIGACT symposium on theory of computing vol 49, pp 1200–1205, ACM, 2017), DASVRDA (Murata and Suzuki, in: Advances in neural information processing systems vol 30, pp 608–617, 2017), and the proposed method, which combines Acc-SVRG (Nitanda, in: Advances in neural information processing systems vol 27, pp 1574–1582, 2014) with APPA (Cotter et al. in: Advances in neural information processing systems vol 27, pp 3059–3067, 2014), are optimal minibatch methods. In experiments, we compare optimal minibatch methods with several competitors on \(L_1\)- and \(L_2\)-regularized logistic regression problems and observe that the iteration complexities of optimal minibatch methods decrease linearly as the minibatch size increases up to a reasonable size, and finally attain the best iteration complexities. This confirms the computational efficiency of optimal minibatch methods suggested by the theory.

Notes

1. In this case, all the methods converged within one or two epochs and performed very similarly to each other.

2. When \(\lambda _2 = 0\), we used a dummy \(L_2\)-regularization with \(\lambda _2 = 10^{-8}\), which was roughly \(\varepsilon \Vert x_*\Vert ^2\), where \(\varepsilon \) is the desired accuracy, set to \(10^{-4}\), and \(x_*\) is the optimal solution.

References

Allen-Zhu Z (2017) Katyusha: the first direct acceleration of stochastic gradient methods. In: Proceedings of annual ACM SIGACT symposium on theory of computing 49, pp 1200–1205. ACM

Murata T, Suzuki T (2017) Doubly accelerated stochastic variance reduced dual averaging method for regularized empirical risk minimization. Adv Neural Inf Process Syst 30:608–617

Nitanda A (2014) Stochastic proximal gradient descent with acceleration techniques. Adv Neural Inf Process Syst 27:1574–1582

Lin Q, Lu Z, Xiao L (2014) An accelerated proximal coordinate gradient method. Adv Neural Inf Process Syst 27:3059–3067

Cotter A, Shamir O, Srebro N, Sridharan K (2011) Better mini-batch algorithms via accelerated gradient methods. Adv Neural Inf Process Syst 24:1647–1655

Shalev-Shwartz S, Zhang T (2013) Accelerated mini-batch stochastic dual coordinate ascent. Adv Neural Inf Process Syst 26:378–385

Kai Z, Liang H (2013) Minibatch and parallelization for online large margin structured learning. In: Proceedings of the 2013 Conference of the North American chapter of the association for computational linguistics: human language technologies, pp 370–379

Martin T, Singh BA, Peter R, Nati S (2013) Mini-batch primal and dual methods for svms. In: ICML (3), pp 1022–1030

Mu L, Tong Z, Yuqiang C, Alexander JS (2014) Efficient mini-batch training for stochastic optimization. In: Proceedings of the 20th ACM SIGKDD international conference on Knowledge discovery and data mining, pp 661–670. ACM

Peilin Z, Tong Z (2014) Accelerating minibatch stochastic gradient descent using stratified sampling. arXiv preprint arXiv:1405.3080

Konečný J, Liu J, Richtárik P, Takáč M (2016) Mini-batch semi-stochastic gradient descent in the proximal setting. IEEE J Select Top Signal Process 10(2):242–255

Martin T, Peter R, Nathan S (2015) Distributed mini-batch sdca. arXiv preprint arXiv:1507.08322

Prateek J, Sham KM, Rahul K, Praneeth N, Aaron S (2016) Parallelizing stochastic approximation through mini-batching and tail-averaging. Stat 1050:12

Nicolas LR, Mark S, Francis RB (2012) A stochastic gradient method with an exponential convergence rate for finite training sets. In: Advances in neural information processing systems, pp 2663–2671

Johnson R, Zhang T (2013) Accelerating stochastic gradient descent using predictive variance reduction. Adv Neural Inf Process Syst 26:315–323

Defazio A, Bach F, Lacoste-Julien S (2014) Saga: A fast incremental gradient method with support for non-strongly convex composite objectives. Adv Neural Inf Process Syst 27:1646–1654

Lin H, Mairal J, Harchaoui Z (2015) A universal catalyst for first-order optimization. Adv Neural Inf Process Syst 28:3384–3392

Frostig R, Ge R, Kakade S, Sidford A (2015) Un-regularizing: Approximate proximal point and faster stochastic algorithms for empirical risk minimization. In: Proceedings of International Conference on Machine Learning vol 32, pp 2540–2548

Blake EW, Srebro N (2016) Tight complexity bounds for optimizing composite objectives. Adv Neural Inf Process Syst 29:3639–3647

Arjevani Y, Shamir O (2016) Dimension-free iteration complexity of finite sum optimization problems. Adv Neural Inf Process Syst 29:3540–3548

Mark S, Le RN (2013) Fast convergence of stochastic gradient descent under a strong growth condition. arXiv preprint arXiv:1308.6370

Lam MN, Liu J, Scheinberg K, Takáč M (2017) Sarah: A novel method for machine learning problems using stochastic recursive gradient. In: Proceedings of international conference on machine learning vol 34, pp 2613–2621

Ofer D, Ran G-B, Ohad S, Lin X (2012) Optimal distributed online prediction using mini-batches. J Mach Learn Res 13:165–202

Nidham G, Robert MG, Joseph S (2019) Optimal mini-batch and step sizes for saga. arXiv preprint arXiv:1902.00071

Yurii N (2004) Introductory lectures on convex optimization: a basic course. Kluwer Academic Publishers, New York

Panda B, Joshua SH, Basu S, Roberto JB (2009) Planet: massively parallel learning of tree ensembles with mapreduce. Proc VLDB Endow 2(2):1426–1437

Chi Z, Feifei L, Jeffrey J (2012) Efficient parallel knn joins for large data in mapreduce. In: Proceedings of the 15th international conference on extending database technology, pp 38–49

Tianyang S, Chengchun S, Feng L, Yu H, Lili M, Yitong F (2009) An efficient hierarchical clustering method for large datasets with map-reduce. In: 2009 International conference on parallel and distributed computing, applications and technologies, pp 494–499

Yaobin H, Haoyu T, Wuman L, Huajian M, Di M, Shengzhong F, Jianping F (2011) Mr-dbscan: an efficient parallel density-based clustering algorithm using mapreduce. In: 2011 IEEE 17th international conference on parallel and distributed systems, pp 473–480

Weizhong Z, Huifang M, Qing H (2009) Parallel k-means clustering based on mapreduce. In: IEEE international conference on cloud computing, pp 674–679

Xin J, Wang Z, Chen C, Ding L, Wang G, Zhao Y (2014) Elm: distributed extreme learning machine with mapreduce. World Wide Web 17(5):1189–1204

Wang B, Huang S, Qiu J, Yu L, Wang G (2015) Parallel online sequential extreme learning machine based on mapreduce. Neurocomputing 149:224–232

Bi X, Zhao X, Wang G, Zhang P, Wang C (2015) Distributed extreme learning machine with kernels based on mapreduce. Neurocomputing 149:456–463

Çatak FO (2017) Classification with boosting of extreme learning machine over arbitrarily partitioned data. Soft Comput 21(9):2269–2281

Xi C, Qihang L, Javier P (2012) Optimal regularized dual averaging methods for stochastic optimization. In: Advances in neural information processing systems, pp 395–403

Hongzhou L, Julien M, Zaid H (2017) Catalyst acceleration for first-order convex optimization: from theory to practice. arXiv preprint arXiv:1712.05654

Acknowledgements

AN was partially supported by JSPS KAKENHI (19K20337) and JST PRESTO. TS was partially supported by JSPS KAKENHI (18H03201 and 20H00576), Japan Digital Design, and JST CREST.

Appendices

Convergence Analysis of Acc-SVRG with APPA

Here we provide a detailed convergence analysis of Acc-SVRG with and without APPA. The following convergence theorem is the special case \(n \ge \sqrt{\kappa }\) of the result in [3].

Theorem A

([3]). Consider Algorithm 1. Assume \(n\ge \sqrt{\kappa }\) and \(g_i\) are L-smooth and \(\mu \)-strongly convex functions. Let \(p\in (0,1)\) satisfy \(\frac{2p(2+p)}{1-p} \le \frac{1}{2}\). Set \(b\ge \frac{8\sqrt{\kappa }n}{\sqrt{2}p(n-1)+8\sqrt{\kappa }}\), \(\eta = \frac{1}{2L}\), \(m = \left\lceil \frac{\sqrt{2\kappa }}{1-p}\log \left( \frac{1-p}{p}\right) \right\rceil \). Then, we get

and we get the following iteration and total complexities by setting \(T=O(\log (1/\epsilon ))\).
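The parameter choices stated in Theorem A can be evaluated numerically. The following is a minimal sketch, assuming \(\kappa = L/\mu \) and using a hypothetical helper name (`theorem_a_parameters`) that does not come from the paper; it only checks the stated assumptions and computes the lower bound on \(b\), the step size \(\eta \), and the inner-loop length \(m\).

```python
import math


def theorem_a_parameters(n, L, mu, p):
    """Evaluate the parameter choices of Theorem A (illustrative helper).

    Assumes kappa = L / mu. Returns the lower bound on the minibatch
    size b, the step size eta, and the inner-loop length m.
    """
    kappa = L / mu
    sqrt_kappa = math.sqrt(kappa)
    if n < sqrt_kappa:
        raise ValueError("Theorem A assumes n >= sqrt(kappa)")
    # Condition on p: p in (0, 1) and 2p(2 + p) / (1 - p) <= 1/2.
    if not 0.0 < p < 1.0 or 2.0 * p * (2.0 + p) / (1.0 - p) > 0.5:
        raise ValueError("p must lie in (0, 1) with 2p(2+p)/(1-p) <= 1/2")
    # b >= 8 sqrt(kappa) n / (sqrt(2) p (n - 1) + 8 sqrt(kappa))
    b_min = 8.0 * sqrt_kappa * n / (math.sqrt(2.0) * p * (n - 1) + 8.0 * sqrt_kappa)
    eta = 1.0 / (2.0 * L)
    # m = ceil( sqrt(2 kappa) / (1 - p) * log((1 - p) / p) )
    m = math.ceil(math.sqrt(2.0 * kappa) / (1.0 - p) * math.log((1.0 - p) / p))
    return {"kappa": kappa, "b_min": b_min, "eta": eta, "m": m}


if __name__ == "__main__":
    params = theorem_a_parameters(n=10_000, L=100.0, mu=1.0, p=0.1)
    print(params)
    # Compare the lower bound on b with n / sqrt(kappa).
    print(params["b_min"], 10_000 / math.sqrt(params["kappa"]))
```

In this example the lower bound on \(b\) falls below \(n/\sqrt{\kappa }\), so a minibatch size of that order satisfies the assumption on \(b\).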

When \(n\ge \kappa \), the minibatch size \(b=O(n/\sqrt{\kappa })\) satisfies the assumption of this theorem. Thus, we can deduce the first claim in the paper concerning Acc-SVRG without APPA:

The following lemma is the key result for deriving the total complexity of an optimization method accelerated by APPA. Concretely, Lemma A gives the iteration complexity of Algorithm 2, ignoring the complexity of the inner solver \({\mathscr {M}}\).

Lemma A

([18]) Suppose that f is \(\mu \)-strongly convex and \(\gamma \ge 3\mu /2\). Consider Algorithm 2. If a generated sequence \(\{w_s\}_{s=1}^{T_c +1}\) satisfies the following

Then, there exists a positive value \(C_0\), depending only on \(w_0\), such that

From Theorem A and Lemma A, we can derive the complexities of Algorithm 1 with APPA in the case \(n < \kappa \). To do so, we specify the complexities of Algorithm 1 for solving the subproblems in APPA to the required accuracy (6). Let \(\gamma = \frac{L}{n} - \mu \). Then, the condition number of each subproblem is \(\frac{L+\gamma }{\mu +\gamma }= O(n)\). When \(\kappa > 3n\), it follows that \(\gamma > 3\mu /2\). From Theorem A, we find that the required inequality (6) is satisfied with the following parameters:

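Therefore, the iteration and total complexities for each run of Acc-SVRG in APPA are \(O(\sqrt{n}\log (\gamma /\mu ))\) and \(O(n\log (\gamma /\mu ))\), respectively. The required number of iterations \(T_c\) of APPA to achieve an \(\epsilon \)-accurate solution is \(O\left( \sqrt{\frac{\gamma }{\mu }}\log \left( \frac{1}{\epsilon }\right) \right) = O\left( \sqrt{\frac{\kappa }{n}}\log \left( \frac{1}{\epsilon }\right) \right) \). Multiplying the per-run complexities by \(T_c\), we get an overall iteration complexity of \(O\left( \sqrt{\kappa }\log \left( \frac{\gamma }{\mu }\right) \log \left( \frac{1}{\epsilon }\right) \right) \) and an overall total complexity of \(O\left( \sqrt{n\kappa }\log \left( \frac{\gamma }{\mu }\right) \log \left( \frac{1}{\epsilon }\right) \right) \).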
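The quantities used in this argument can be checked numerically. The sketch below assumes \(\kappa = L/\mu \) and uses a hypothetical helper name (`appa_subproblem_constants`) that is not from the paper. It computes \(\gamma = L/n - \mu \), the subproblem condition number \((L+\gamma )/(\mu +\gamma )\), which simplifies to \(n + 1 - n/\kappa \), and the factor \(\sqrt{\gamma /\mu }\) that governs the number of outer APPA iterations.

```python
import math


def appa_subproblem_constants(n, L, mu):
    """Constants from the APPA analysis with gamma = L / n - mu (illustrative helper)."""
    kappa = L / mu
    gamma = L / n - mu
    # Each subproblem is (L + gamma)-smooth and (mu + gamma)-strongly convex.
    sub_kappa = (L + gamma) / (mu + gamma)
    return {
        "kappa": kappa,
        "gamma": gamma,
        "sub_kappa": sub_kappa,
        # Lemma A requires gamma >= 3 mu / 2; this holds whenever kappa > 3 n.
        "lemma_a_condition": gamma >= 1.5 * mu,
        # T_c scales as sqrt(gamma / mu) * log(1 / epsilon).
        "outer_factor": math.sqrt(gamma / mu) if gamma > 0 else float("nan"),
    }


if __name__ == "__main__":
    c = appa_subproblem_constants(n=100, L=1000.0, mu=1.0)
    print(c)
    # The subproblem condition number equals n + 1 - n / kappa, i.e. O(n).
    print(math.isclose(c["sub_kappa"], 100 + 1 - 100 / c["kappa"]))
```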

Experimental details

Here, the details of the experiments in Sect. 6 are provided.

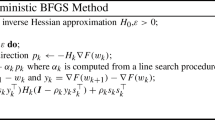

The details of the implemented algorithms and their tuning parameters were as follows:

- SVRG [15]. We tuned the number of inner iterations m and the learning rate \(\eta \).

- Acc-SVRG [3]. We tuned the number of inner iterations m and the learning rate \(\eta \). We fixed the momentum parameter \(\beta \) as \((1 - \sqrt{\eta \lambda _2})/(1 + \sqrt{\eta \lambda _2})\), where \(\lambda _2\) is the \(L_2\)-regularization parameter (see footnote 2).

- SVRG with APPA (SVRG + Algorithm 2). In addition to the tuning parameters described above, we tuned the positive parameter \(\gamma \) described in Algorithm 2. We fixed \(\mu \) as the \(L_2\)-regularization parameter \(\lambda _2\) (see footnote 2).

- Acc-SVRG with APPA (Acc-SVRG + Algorithm 2). In the same manner as for Acc-SVRG and SVRG with APPA, m, \(\eta \) and \(\gamma \) were tuned.

- DASVRDA [2]. We tuned the number of inner iterations m, the learning rate \(\eta \), and, only for strongly convex objectives, the restart interval S.
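The fixed momentum parameter used for Acc-SVRG can be computed as in the following minimal sketch. The function name `acc_svrg_momentum` is hypothetical, and the fallback to \(\lambda _2 = 10^{-8}\) when \(\lambda _2 = 0\) follows the dummy regularization described in footnote 2.

```python
import math

DUMMY_LAMBDA2 = 1e-8  # dummy L2-regularization used when lambda_2 = 0 (footnote 2)


def acc_svrg_momentum(eta, lambda2):
    """Momentum beta = (1 - sqrt(eta * lambda2)) / (1 + sqrt(eta * lambda2))."""
    effective_lambda2 = lambda2 if lambda2 > 0 else DUMMY_LAMBDA2
    root = math.sqrt(eta * effective_lambda2)
    return (1.0 - root) / (1.0 + root)


if __name__ == "__main__":
    # A smaller eta * lambda2 gives a momentum closer to 1.
    for eta in (0.1, 1.0, 10.0):
        print(eta, acc_svrg_momentum(eta, lambda2=1e-4))
```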

To tune the above parameters, we automatically chose the values from appropriate ranges for each setting, selecting those that led to the minimum objective value after 50 passes over the data. The parameter ranges used were as follows:

- Learning rate \(\eta \): \(\{0.1, 0.5, 1.0, 5.0, 10.0\}\).

- Number of inner iterations m: \(\{n/b, 5n/b, 10n/b, 50n/b, 100n/b\}\), where n is the size of the data set and b is the minibatch size.

- APPA parameter \(\gamma \): \(\{10^k \times \lambda _2 \mid k \in \{0, 1, 2, 3, 4\} \}\), where \(\lambda _2\) is the \(L_2\)-regularization parameter (see footnote 2).

- Restart interval S: \(\{1, 5, 10, 50, 100\}\) (only for \(\lambda _2 > 0\)).
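The search space above can be enumerated as a Cartesian product of the listed ranges. The following is a sketch only: the function name `parameter_grid` and its flags are hypothetical, and rounding \(kn/b\) down to an integer (with a minimum of 1) is an assumption, since the text does not say how non-integer values were handled. Each resulting configuration would then be scored by the objective value after 50 passes over the data, which is not implemented here.

```python
import itertools

DUMMY_LAMBDA2 = 1e-8  # dummy L2-regularization used when lambda_2 = 0 (footnote 2)


def parameter_grid(n, b, lambda2, use_appa=False, use_restart=False):
    """Enumerate candidate configurations from the listed parameter ranges."""
    grid = {
        "eta": [0.1, 0.5, 1.0, 5.0, 10.0],
        # Assumption: integer division, with at least one inner iteration.
        "m": [max(1, (k * n) // b) for k in (1, 5, 10, 50, 100)],
    }
    if use_appa:
        lam = lambda2 if lambda2 > 0 else DUMMY_LAMBDA2
        grid["gamma"] = [10 ** k * lam for k in range(5)]
    if use_restart and lambda2 > 0:
        # The restart interval is tuned only for strongly convex objectives.
        grid["S"] = [1, 5, 10, 50, 100]
    names = list(grid)
    return [dict(zip(names, values)) for values in itertools.product(*grid.values())]


if __name__ == "__main__":
    configs = parameter_grid(n=1000, b=10, lambda2=1e-4, use_appa=True)
    print(len(configs), configs[0])
```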

Cite this article

Nitanda, A., Murata, T. & Suzuki, T. Sharp characterization of optimal minibatch size for stochastic finite sum convex optimization. Knowl Inf Syst 63, 2513–2539 (2021). https://doi.org/10.1007/s10115-021-01593-1

DOI: https://doi.org/10.1007/s10115-021-01593-1