Abstract

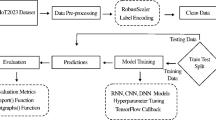

With the advent of the Internet of Things (IoT), network attacks have become more diverse and intelligent, making intrusion detection systems (IDSs) essential to network security. However, an IDS is itself vulnerable to adversarial examples: attackers can raise their success rate by misleading it. It is therefore necessary to improve the robustness of the IDS. In this paper, we use the Fast Gradient Sign Method (FGSM) to generate adversarial examples and test the robustness of three intrusion detection models based on a convolutional neural network (CNN), long short-term memory (LSTM), and a gated recurrent unit (GRU). We employ three training methods: training the models on normal examples; training them directly on adversarial examples; and pretraining them on normal examples and then continuing training on adversarial examples. We evaluate the three models under each training method and find that, under normal training, the CNN is the most robust to adversarial examples. After adversarial training, the robustness of the GRU and LSTM to adversarial examples improves greatly.
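As a rough illustration of the setup described in the abstract, the sketch below applies FGSM, which perturbs an input as x_adv = x + ε · sign(∇ₓJ(θ, x, y)), and compares the three training regimes. It is not the paper's implementation: a NumPy logistic-regression classifier stands in for the CNN, LSTM, and GRU models, the data are synthetic rather than real traffic features, and the names `fgsm`, `train`, and `accuracy` as well as the values of `eps`, `epochs`, and `lr` are assumptions made for this example. Regenerating the adversarial examples against the current model at every step is likewise an assumption.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def loss(w, b, X, y):
    """Mean binary cross-entropy of the logistic-regression stand-in model."""
    p = np.clip(sigmoid(X @ w + b), 1e-12, 1 - 1e-12)
    return float(-np.mean(y * np.log(p) + (1 - y) * np.log(1 - p)))

def fgsm(w, b, X, y, eps):
    """x_adv = x + eps * sign(dJ/dx). For logistic regression, dJ/dx = (p - y) * w."""
    grad_x = (sigmoid(X @ w + b) - y)[:, None] * w[None, :]
    return X + eps * np.sign(grad_x)

def train(X, y, w=None, b=0.0, eps=None, epochs=200, lr=0.5):
    """Plain gradient descent. If eps is set, every step trains on FGSM
    examples regenerated against the current weights (an assumption here)."""
    w = np.zeros(X.shape[1]) if w is None else w.copy()
    for _ in range(epochs):
        Xb = X if eps is None else fgsm(w, b, X, y, eps)
        err = sigmoid(Xb @ w + b) - y
        w -= lr * (Xb.T @ err) / len(y)
        b -= lr * err.mean()
    return w, b

def accuracy(w, b, X, y):
    return float(np.mean((sigmoid(X @ w + b) > 0.5) == y))

# Synthetic two-class data standing in for benign (0) and attack (1) records.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(-1, 1, (200, 5)), rng.normal(1, 1, (200, 5))])
y = np.concatenate([np.zeros(200), np.ones(200)])
eps = 0.3  # hypothetical perturbation budget

w_n, b_n = train(X, y)                          # 1) normal training
w_a, b_a = train(X, y, eps=eps)                 # 2) adversarial training only
w_p, b_p = train(X, y, w=w_n, b=b_n, eps=eps)   # 3) pretrain, then adversarial

models = {"normal": (w_n, b_n),
          "adversarial": (w_a, b_a),
          "pretrain+adversarial": (w_p, b_p)}
for name, (w, b) in models.items():
    X_adv = fgsm(w, b, X, y, eps)  # white-box attack against each model
    print(f"{name:22s} clean={accuracy(w, b, X, y):.3f} "
          f"fgsm={accuracy(w, b, X_adv, y):.3f}")
```

Each model is attacked with examples generated from its own gradients, so the printed pairs compare clean accuracy with accuracy under a white-box FGSM attack. For a linear stand-in like this one, the attack can only lower accuracy; how much each regime recovers depends on `eps` and the data, and says nothing about the paper's reported results.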

Acknowledgements

The authors thank the anonymous reviewers for helpful suggestions.

Funding

This work was supported by Science and Technology on Communication Networks Laboratory under Grant 6142104180413.

About this article

Cite this article

Fu, X., Zhou, N., Jiao, L. et al. The robust deep learning–based schemes for intrusion detection in Internet of Things environments. Ann. Telecommun. 76, 273–285 (2021). https://doi.org/10.1007/s12243-021-00854-y

DOI: https://doi.org/10.1007/s12243-021-00854-y