Abstract

The total least squares (TLS) method is a well-known technique for solving an overdetermined linear system of equations Ax ≈ b, that is appropriate when both the coefficient matrix A and the right-hand side vector b are contaminated by some noise. For ill-posed TLS poblems, regularization techniques are necessary to stabilize the computed solution; otherwise, TLS produces a noise-dominant output. In this paper, we show that the regularized total least squares (RTLS) problem can be reformulated as a nonlinear least squares problem and can be solved by the Gauss–Newton method. Due to the nature of the RTLS problem, we present an appropriate method to choose a good regularization parameter and also a good initial guess. Finally, the efficiency of the proposed method is examined by some test problems.

Similar content being viewed by others

1 Introduction

Many problems in data fitting and application areas such as signal and image processing give rise to an inexact linear system of equations of the form

where the matrix A or the vector b is contaminated by some unknown errors which may stem from modeling, measurement inaccuracies, or problem discretization. In the classical least squares (LS) approach [4, 31] to (1.1), the matrix A is assumed to be free of error, and all errors are confined to the vector b. However, in practical situations, this assumption is frequently unrealistic and the coefficient matrix A is also affected by the errors. For example, in many data fitting problems, not only the right-hand side b but also the entries of A are obtained by measurements. Another case occurs when the matrix A is a discrete approximation of the underlying continuous operator. In such cases, it is more appropriate to consider the total least squares (TLS) problem to find an accurate solution to the system. The TLS problem is defined as follows.

Definition 1

For given an overdetermined linear system of equations Ax ≈ b, where \(A\in \mathbb {R}^{m\times n}\), \(b\in \mathbb {R}^{m}\), and m ≥ n, the TLS problem is to find a matrix \(E\in {{\mathbb {R}}^{m\times n}}\) and a vector \(r\in {\mathbb {R}}^{m}\) such that b + r ∈Range(A + E) and \(\left \|[E,r] \right \|_{F}^{2}=\left \|E \right \|_{F}^{2}+\left \|r\right \|^{2}\) is minimal, i.e.,

If a minimizing pair (E,r) is found, then any x satisfying (A + E)x = b + r is called a TLS solution.

Throughout this paper, \(\left \| \cdot \right \|_{F}\) denotes the Frobenius norm, while \(\left \| \cdot \right \|\) denotes the Euclidean norm. It should be noted that the TLS problem may not have a solution and, when the solution exists, it may not be unique [4, 20]. However, in most problems, it has a unique solution which can be obtained by a simple scaling of the right singular vector of [A,b] corresponding to its smallest singular value, as is described in the following theorem [19, 47].

Theorem 1

Let C = UΣVT be the singular value decomposition of the matrix \(C=[A,b]\in {\mathbb {R}}^{m\times (n+1)}\), where

Let vn+ 1 be the last column of V and let vi,n+ 1 be the i-th component of vn+ 1. If vn+ 1,n+ 1≠ 0 and σn > σn+ 1 then the TLS problem (1.2) has a unique solution xTLS, which is given by

with the corresponding correction matrix \([E,r]=-\sigma _{n+1} u_{n+1} v_{n+1}^{T}\) for which \(\left \|[E,r] \right \|_{F}^{2}=\sigma _{n+1}^{2}\).

In this theorem, the condition vn+ 1,n+ 1≠ 0 implies that a TLS solution exists, and σn > σn+ 1, which means the smallest singular value σn+ 1 is simple, guarantees its uniqueness. It can be seen that these two conditions together are equivalent to \(\sigma _{n}^{\prime }>\sigma _{n+1}\), where \(\sigma _{n}^{\prime }\) is the smallest singular value of A; see [47, Corollary 3.4]. By using the above theorem, it can also be easily seen that the solution xTLS satisfies the following system of equations

and hence it can be determined from \((A^{T}A-\sigma _{n+1}^{2} I)x=A^{T}b\). From the above equation, the vector \(\hat {v}=[x_{TLS}^{T},-1]^{T}\) is an eigenvector corresponding to the smallest eigenvalue \(\lambda _{\min \limits }=\sigma _{n+1}^{2}\) of the matrix M = [A,b]T[A,b]. Therefore, \(\hat {v}\) minimizes the Rayleigh quotient vTMv/vTv of the matrix M. Hence, it follows that the unique solution xTLS to the TLS problem minimizes the following “orthogonal distance” function

The TLS problem was firstly introduced to the numerical linear algebra community by Golub and Van Loan [19] in 1980. However, under the names orthogonal regression and errors-in-variables, this fitting method has a long history in the statistical literature. The univariate problem, i.e., n = 1, was already studied in 1877 by Adcock [1]. See [36, 47] for more historical notes. So far, the TLS problems have been used in various fields of applied science such as signal processing, automatic control, system theory, statistics, physics, economics, biology, and medicine [47]. See also [35] for a comprehensive list of references about the total least squares problem and related methods and their applications.

The goal of this paper is to find the TLS solution of problems where the matrix A is severely ill-conditioned, i.e., the singular values of A decay gradually to zero without a significant gap and the ratio between the largest and the smallest nonzero singular values is large. Such problems arise, for instance, from the discretization of ill-posed problems, such as Fredholm integral equations of the first kind [13, 23]. In these problems, due to ill-conditioning of the matrix A and the presence of noise in the problem’s data, straightforward methods such as least squares, total least squares, or other classical methods often provide unreliable solutions. Therefore, regularization techniques are necessary to obtain an acceptable solution.

There are two main approaches to regularization of ill-posed TLS problems in the literature. The first approach is based on truncating the small singular values of the augmented matrix [A,b] which is called truncated TLS (TTLS) method. In [16], Fierro et al. studied filtering properties of the truncated TLS solution and they proposed an iterative algorithm based on Lanczos bidiagonalization for computing truncated TLS solutions. The second approach reduces the effect of the noise on the computed solution by adding a quadratic constraint as \({{\left \| Lx \right \|}^{2}}\!\le \! \delta ^{2} \) to the TLS problem [18]. By using this approach, the regularized TLS (RTLS) problem is given by

Here, \(L\in \mathbb {R}^{k\times n} (k\leq n)\) is the regularization matrix, which defines a (semi)norm on the solution, and δ > 0 is the regularization parameter, which controls the size of the solution. Typically, L is chosen as the identity matrix or a discrete approximation of the first- or second-order derivative operators. We say that the RTLS problem is in the standard form if L = I, where I is the identity matrix, and in general form if L≠I. As shown before, the problem of finding the solution xTLS to the TLS problem is equivalent to solve the following minimization problem:

Thus, the RTLS problem (1.3) can be reformulated as follows:

A closely related problem, studied in [2], is Tikhonov total least squares problem where regularization of the TLS problem is done by adding the typical Tikhonov term \(\lambda \left \| Lx \right \|^{2}\) to the TLS objective function (1.4):

By comparing the corresponding Lagrangian functions of problems (1.5) and (1.6), it can be easily seen that they are equivalent in the sense that for each value of λ > 0 there exists a corresponding value of δ > 0 such that the solutions of (1.5) and (1.6) are identical.

The following theorem gives a sufficient condition for the attainability of the minimum in (1.5) or (1.6).

Theorem 2

Let \(N\in \mathbb {R}^{n\times k}\) be a matrix whose columns form an orthogonal basis for the nullspace of L. If it holds that \(\sigma _{\min \limits }([AN, b])<\sigma _{\min \limits }(AN)\), then the minimum of the regularized total least squares problem (1.6) is attained with \({F}_{\lambda }^{*} \leq \sigma _{\min \limits }^{2}([AN, b])\), where

Proof

See [2], Theorem 3.1. □

An important difference between the RTLS problem and the Tikhonov regularization of least squares problems, is that the RTLS problem and its different formulations do not have closed-form solutions. However, in [18], Golub et al. proved the following characterization of the RTLS solution.

Theorem 3

If the constraint \(\left \| Lx \right \|^{2}\le \delta ^{2}\) of the RTLS problem (1.3) is active, then the RTLS solution x = xRTLS satisfies the equation

where the parameters λL and λI are given by

and μ > 0 is the Lagrange multiplier. Moreover, we have

The RTLS problem (1.3), and its different formulations, was extensively studied in the literature and is still an engaging source of attention among scholars [2, 3, 18, 21, 26,27,28, 30, 32, 33, 43, 45, 49]. In [18], Golub, Hansen, and O’Leary presented and analyzed the properties of the RTLS problem (1.3). They discussed its relation to the classical regularized least squares problem and also suggested a method for solving it. Utilizing an approach similar to Rayleigh quotient iteration for the TLS problem [5], Guo and Renaut [21, 43] derived an eigenproblem for the RTLS problem and solved it using the iterative inverse power method. Inspired by the fact that a quadratically constrained least squares problem can be solved via a quadratic eigenvalue problem [17], Sima, Van Huffel, and Golub [45] introduced an iterative method for solving the RTLS problem, where the solution is approximated in a few iterations, each consisting in solving a quadratic eigenvalue problem. Lampe and Voss [26, 27] studied the acceleration of Renaut and Guo’s algorithm and of the algorithm from Sima et al. and applied a nonlinear Arnoldi method, which projects the solution onto a lower dimension subspace determined by the algorithm. Lee, Fu, and Barlow [33] solved the RTLS problem by introducing a system of nonlinear equations and by applying a two-parameter Newton method for solving the nonlinear system. Later, in [32], Lee and Barlow applied two projection-based algorithms to this method, in order to solve the large-scale RTLS problems. Recently, Yang et al. [49] have reformulated the standard form of the RTLS problem as a zero finding problem of a univariate parametric function, and have applied the classical Newton method to the reformulated problem.

In this paper, we reformulate the RTLS problem (with a general regularization matrix) as a nonlinear least squares problem and then we apply the Gauss–Newton method to solve it. The rest of this paper is organized as follows. In Section 2, we review some preliminaries. In Section 3, we solve the RTLS problem via Gauss–Newton method. We also present a multi-objective optimization-based method for choosing an appropriate regularization parameter for the RTLS problem. In Section 4, we present some experimental results to show the efficiency of presented method. Finally, we give a conclusion in Section 5.

2 Preliminaries

2.1 The Gauss–Newton method for nonlinear least squares problems

In view of the formulation (1.4), the TLS problem can also be rewritten as the following nonlinear least squares problem:

This is a standard nonlinear optimization problem and thus any optimization technique such as a gradient descent or Newton’s method can be used here. Recently, Fasino and Fazzi [14] have proposed a Gauss–Newton iteration for solving the problem (2.1). The purpose of this paper is to solve the RTLS problems by the Gauss–Newton method. In this subsection, we briefly review the Gauss–Newton method for solving nonlinear least squares problems, then in the next section we will show how the Gauss–Newton method can be applied for solving the RTLS problems.

Consider the nonlinear least squares problem

where \(f:\mathbb {R}^{n}\rightarrow \mathbb {R}^{m}\) is a twice continuously differentiable function with m ≥ n. The i-th component of the m-vector f is denoted by fi. Let the Jacobian matrix of f be denoted by J, and let the matrix Gi denote the Hessian matrix of fi. Then the gradient and Hessian matrix of F are given by

where \(Q(x)={\sum }_{i=1}^{m}f_{i}(x)G_{i}(x)\). Note that the necessary conditions for x∗ to be a local minimizer of F are that g(x∗) = 0, and H(x∗) is positive semi-definite. Moreover, if g(x∗) = 0 and H(x∗) is positive definite, then x∗ is a strong local minimizer. In Newton’s method, for given iterate xk, the next iterate xk+ 1 is given by xk+ 1 = xk + hk, where H(xk)hk = −g(xk) or equivalently

The principal advantage of Newton’s method is that it has a quadratic rate of convergence near the optimal solution. Unfortunately, in spite of this attractive feature, it has several serious drawbacks:

-

At the very least, it may not be defined because of the singularity of H(xk) at a given point xk;

-

It requires that H(xk) be positive definite, otherwise the search direction hk = −H(xk)− 1g(xk) is not a descent direction;

-

We need to compute the full Hessian matrix. In many problems, the term Q(x) of the Hessian is either unavailable or inconvenient to obtain, and it is too expensive to approximate by finite differences;

-

It requires to solve a linear system at each iteration that is costly from a computational point of view.

The Gauss–Newton method [4, Section 9.2] is a modification of Newton’s method in which the term Q(x) is neglected in the Hessian. One motivation for this approach is that Q(x) vanishes for zero residual problems and therefore might be negligible for small residual problems. In the Gauss–Newton method, the next iterate is given by xk+ 1 = xk + hk, where the direction hk is obtained by the solution of the equations

It can be seen that the solution of (2.3) is a solution of the linear least squares problem

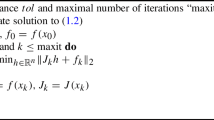

and is unique if J(xk) has full column rank. The linear least squares problem (2.4) can be solved efficiently by means of orthogonal transformations or iterative methods [4]. Algorithm 1 describes the Gauss–Newton method.

In practice, the Gauss–Newton method is used with the line search

which is the so-called damped Gauss–Newton method, where αk is a step length. Here, αk is computed by Armijo backtracking line search [38]. This strategy guarantees the descent of the objective function in each step and makes the Gauss–Newton algorithm locally convergent and often globally convergentFootnote 1. The backtracking procedure is described in Algorithm 2, where the initial step length \(\bar {\alpha }=1\), the contraction factor ρ = 1/2, and the constant c = 10− 4 are usually chosen in practical implementations.

2.2 Some basic concepts of the multi-objective optimization

Multi-objective optimization is a useful tool to optimize a number of conflicting objectives. This approach which is also referred to as multi-criteria or vector optimization is widely used in scientific and engineering applications. In Section 3.2, we present a method for choosing the regularization parameter for RTLS problems that involves solving a multi-objective optimization problem. Therefore, in this section, we review some basic concepts of the multi-objective optimization. For more details on multi-objective optimization problems, see [9, 11, 12, 37].

A multi-objective optimization problem can be formulated as

where \( \mathcal {S} \subseteq \mathbb {R}^{n}\) is a non-empty set and \(f_{i}:\mathbb {R}^{n} \rightarrow \mathbb {R}, i=1,\dots ,k\), are the conflicting objective functions to be minimized simultaneously. The set \(\mathcal {S}\) is called the feasible region and the set

is called the feasible objective region.

As pointed out in [37, p. 11], multi-objective problems are in a sense ill-defined because there is no natural ordering in the objective space. Therefore, the concept of minimization in single-objective optimization does not apply directly in the multi-objective framework. Here, the concept of Pareto optimality has to be introduced. To describe the concept, we define the following partial order relation on the feasible objective region \(\mathcal {Z}\): given two objective vectors z and \(z^{\prime }\), we say that \(z\leq z^{\prime }\) if and only if \( z_{i} \leq z_{i}^{\prime }\) for all \(i=1,2,\dots ,k\). The strict partial order relation < on \(\mathcal {Z}\) is defined in a similar way. Using these relations, the concept of dominance is defined as follows. Given two vectors x and \(x^{\prime }\) in the feasible region \(\mathcal {S}\), we say that:

-

\(x\preceq x^{\prime }\) (x weakly dominates \(x^{\prime }\)) if and only if \(f(x)\leq f(x^{\prime })\).

-

\(x\prec x^{\prime }\) (x dominates \(x^{\prime }\)) if and only if \(x\preceq x^{\prime }\) and at least one component of f(x) is strictly less than the corresponding one of \(f(x^{\prime })\).

-

\(x \sim x^{\prime }\) (x is indifferent to \(x^{\prime }\)) if neither x dominates \(x^{\prime }\) nor \(x^{\prime }\) dominates x.

We can now formally state the concept of Pareto optimality in the multi-objective optimization setting. A point \(x^{*}\in \mathcal {S}\) is called a Pareto optimal or a Pareto minimizer for the problem (2.5), if there is no other point in \(\mathcal {S}\) that dominates it. In other words, \(x^{*}\in \mathcal {S}\) is Pareto optimal if there does not exist \(x\in \mathcal {S}\) such that fi(x) ≤ fi(x∗) for \(i=1,2,\dots ,k\) and fj(x) < fj(x∗) for at least one index j. The set of all the Pareto optimal solutions is denoted by \(\mathcal {P}\). The set \(\mathcal {F}=\{ f(x^{*})|x^{*}\in \mathcal {P}\}\), containing the image of all Pareto optimal solutions, is called the Pareto front. The objective vector \(z^{*}\in \mathbb {R}^{k}\) is called the ideal objective vector if its components \(z_{i}^{*}\) are obtained by minimizing each of the objective functions individually subject to the feasible region, that is by solving,

for \(i=1,\dots ,k\). In general, because of conflicts among the objectives, the ideal objective vector is not attainable (i.e., \(z^{*} \notin \mathcal {Z}\)). However, it can be used as a reference point. Unlike the ideal objective vector which represents the lower bound of each objective in the entire feasible region \(\mathcal {S}\), the nadir objective vector, znad, represents the upper bound of each objective in the entire set of all the Pareto optimal solutions. In other words, the components of the nadir objective vector are given by

for \(i=1,\dots ,k\).

3 The Gauss–Newton method for solving RTLS problems

3.1 Solving RTLS problems by the Gauss–Newton method

In this section, our discussion is based on the formulation (1.6) of the RTLS problem. We notice that the objective function of this problem is not convex, and thus a descent algorithm may fail to converge. However, if the starting point x0 is sufficiently close to the optimal solution x∗, and the regularization parameter λ > 0 is chosen appropriately, one can expect good performance by applying standard minimization techniques. Note that the RTLS problem (1.6) can be rewritten as the following nonlinear least squares problem:

where

and the Jacobian matrix of fλ is given by

Thus, the Gauss–Newton method can also be applied to obtain the optimal solution of (1.6) if we can find an appropriate regularization parameter λ and a good initial guess x0. In this section, we discuss how to choose these parameters appropriately.

For a fixed λ > 0, the first-order optimality condition for the RTLS problem (1.6) yields:

where

From the set of solutions of (3.2), the solution that corresponds to the smallest value of |λI| is the solution xRTLS of (1.6). Note that the matrix \(\left (A^{T}A+\lambda _{L} L^{T}L + \lambda _{I}I\right )\) is symmetric positive definite if \(\sigma _{\min \limits }(A^{T}A+\lambda _{L} L^{T}L)>|\lambda _{I}|\), so this condition ensures that the RTLS problem has a unique solution. Unfortunately, this condition does not hold in general, and, as noted in [30], the matrix \(\left (A^{T}A+\lambda _{L} L^{T}L + \lambda _{I}I\right )\) is often indefinite. It can only be shown that at the solution xRTLS this matrix is positive semi-definite (where in case of a singular matrix, the RTLS solution might be non-unique). Some results on non-unique RTLS solutions can be found in [27]. A sufficient condition for \(\left (A^{T}A+\lambda _{L} L^{T}L + \lambda _{I}I\right )\) to be positive definite is given in [33, Theorem 2.1].

Let x(λL,λI) be a solution of (3.2). Clearly, to guarantee that x(λL,λI) is a stable solution, the parameters λL and λI should be adjusted in such a way that the matrix \(\left (A^{T}A+\lambda _{L} L^{T}L + \lambda _{I}I\right )\) is well-conditioned. On the other hand, from the basic idea of Tikhonov regularization, the solution x(λL,λI) should provide at the same time a small fractional residual \({\left \|Ax-b\right \|}^{2}/({1+\left \|x\right \|^{2})}\) and a moderate value of the penalty term \(\left \| Lx \right \|^{2}\). In the following, we use these two key properties to find a good regularization parameter λ. The main idea of our parameter-choice method is that if an appropriate approximation x(λL,λI) of the optimal regularized solution x∗ (in the sense of a minimal distance to the exact solution) is available, then from (3.3) one can obtain an appropriate approximation of the optimal regularization parameter via the relation \(\lambda = \lambda _{L}/(1+\left \|x(\lambda _{L},\lambda _{I})\right \|^{2})\). Moreover, x0 = x(λL,λI) can be used as an initial guess for the Gauss–Newton method.

We denote by \(x(\lambda _{L}^{*},\lambda _{I}^{*})\) the regularized solution for which \(\|x(\lambda _{L}^{*},\lambda _{I}^{*})-x_{\text {true}}\|\) is smallest. The corresponding optimal regularization parameter is denoted by λ∗. We assume that the matrix \(\left (A^{T}A+\lambda _{L}^{*} L^{T}L + \lambda _{I}^{*}I\right )\) is positive definite and thus \(x(\lambda _{L}^{*},\lambda _{I}^{*})\) is unique. We also assume that the error-free linear system Atruex = btrue associated with the available error-contaminated linear system Ax ≈ b is zero-residual (i.e., btrue ∈Range(Atrue)). As also pointed out in [47, p. 200], the TLS problem typically applies to such cases. This assumption implies that if the perturbations in the input data are sufficiently small then the value of \(|\lambda _{I}^{*}|\) is close to 0 (note that \(|\lambda _{I}^{*}|\) is equal to the fractional residual \({\left \|Ax(\lambda _{L}^{*},\lambda _{I}^{*})-b\right \|}^{2}/({1+\left \|x(\lambda _{L}^{*},\lambda _{I}^{*})\right \|^{2})}\) associated with the solution \(x(\lambda _{L}^{*},\lambda _{I}^{*})\)).

Remark 1

The optimal regularization parameter λ∗ can be accurately computed only in simulation examples, when xtrue is known. In practice, the exact solution xtrue is unknown, and thus any parameter-choice method can only give an approximation of λ∗, using the available data.

We notice that when λI≠ 0, it has a deregularizing effect on the solution of (3.2). Therefore, it seems reasonable to keep the value of |λI| as small as possible. Noting that the value of \(|\lambda _{I}^{*}|\) is expected to be close to 0, we may expect that the solutions \(x(\lambda _{L}^{*},\lambda _{I}^{*})\) and \(x(\lambda _{L}^{*})=x(\lambda _{L}^{*},0)\) to be quite close to each other. This is confirmed by the following theorem.

Theorem 4

Let \(x(\lambda _{L}^{*})\) and \(x(\lambda _{L}^{*},\lambda _{I}^{*})\) be the solutions of

and

respectively, where \(\lambda _{L}^{*}\) and \(\lambda _{I}^{*}\) are optimal parameters satisfying (3.3). Assume that Null(A) ∩Null(L) = {0} and that \(\left (A^{T}A+\lambda _{L}^{*} L^{T}L + \lambda _{I}^{*}I\right )\) is positive definite. Then

where \(\hat {\sigma }_{n}\) is the smallest singular value of \(\left [\begin {array}{cc} A \\ \sqrt {\lambda _{L}^{*}} L \end {array}\right ]\).

Proof

Since \(x(\lambda _{L}^{*})\) is the solution of \(\left (A^{T}A+\lambda _{L}^{*} L^{T}L \right )x=A^{T}b\), we have

Subtracting (3.5) from the equation \(\left (A^{T}A+\lambda _{L}^{*} L^{T}L +\lambda _{I}^{*} I\right )x(\lambda _{L}^{*},\lambda _{I}^{*})=A^{T}b\), we can obtain the relation

and hence by taking norms of the both sides we have

Now, the result follows from the fact that

□

Remark 2

If Ax = b is inconsistent, i.e., b∉Range(A), then from (3.3) the value of \(\lambda _{I}^{*}\) is negative and thus we have

where \(\hat {\sigma }_{1}\) and \(\hat {\sigma }_{n}\) are the largest and smallest singular values of \(\left [\begin {array}{cc} A \\ \sqrt {\lambda _{L}^{*}} L \end {array}\right ]\), respectively. We conclude that the linear system \(\left (A^{T}A+\lambda _{L}^{*} L^{T}L \right )x=A^{T}b\) is better conditioned than the linear system \(\left (A^{T}A+\lambda _{L}^{*} L^{T}L +\lambda _{I}^{*}I\right )x=A^{T}b\).

Remark 3

Under the assumption \(|\lambda _{I}^{*}|\ll \hat {\sigma }_{n}^{2}\), it follows from (3.4) that the solutions \(x(\lambda _{L}^{*},\lambda _{I}^{*})\) and \(x(\lambda _{L}^{*})\) differ only by terms of order \(O(|\lambda _{I}^{*}|)\). This result is similar to that for the difference of the LS and TLS solutions; see, e.g., [4, p. 180] and references therein.

The above discussion motivates us to find a good approximation of the optimal solution \(x(\lambda _{L}^{*},\lambda _{I}^{*})\) among all regularized solutions x(λL). For this purpose, we find the parameter λL such that the regularized least squares (RLS) solution x(λL) = (ATA + λLLTL)− 1ATb provides a good compromise between the smallness of the quantities \({\left \|Ax-b\right \|}^{2}/(1+\left \|x\right \|^{2})\) and \(\left \| Lx \right \|^{2}\). Then, the original regularization parameter λ can be approximated by the relation \(\lambda = \lambda _{L} /(1+\left \|x(\lambda _{L})\right \|^{2})\) and x(λL) can be used as an appropriate initial guess for the Gauss–Newton method. For ill-posed problems where the value of \(|\lambda _{I}^{*}|\) is quite small, the initial guess x0 = x(λL) obtained with this method is very close to the solution \(x(\lambda _{L}^{*},\lambda _{I}^{*})\). Thus, only a few iterations of the Gauss–Newton method are typically required to find the optimal solution. Another advantage of this construction technique for x0 is that the Jacobian J(x) in (3.1) can be approximated by \(\tilde {J}(x)=A/\sqrt {1+x^{T}x}\). In fact, we have

where f(x) is defined as in (2.1). This approximation may be useful in large-scale problems, since \(\tilde {J}(x)\) is obtained by a cheap scalar-matrix multiplication. We summarize the resulting method in Algorithm 3.

3.2 Determination of λ L

The purpose of this section is to present a method for finding an appropriate regularization parameter λL for line 1 of Algorithm 3. For regularized least squares problems (where only the right-hand side b is assumed to be contaminated by noise), there are several well-known methods in order to choose the regularization parameter, such as discrepancy principle, generalized cross-validation (GCV), and L-curve [23]. We refer the reader to [6, 41] and [23, Chapter 7] for surveys and comparative studies of the different parameter choice methods for regularized least squares problems. Some recent studies on choosing the regularization parameter are given in [15, 34, 39, 40, 44]. In principle, it is possible to apply all of the methods mentioned above in the RTLS problems, by considering the fractional residual \({\left \|Ax-b\right \|}^{2}/({1+\left \|x\right \|^{2})}\) instead of the ordinary residual \(\left \|Ax-b\right \|^{2}\). However, the L-curve is perhaps the most widely used strategy for choosing the regularization parameter in the RTLS problems; see e.g., [29, 43]. This method was originally designed for choosing the regularization parameter for regularized least squares problems [25, 31]. It is based on a parametric plot of the (semi)norm ∥Lxλ∥, versus the residual norm ∥Axλ − b∥, where xλ is the regularized solution corresponding to the regularization parameter λ. The scale can be logarithmic or classical, and the norms might be 2-norm or general p-norms [10, 48]. The obtained plot often has a characteristic L-shaped appearance and the chosen parameter is the one which is the closest to the left bottom corner. We note that in the recent decades some other versions of the L-curve have also been introduced in the literature; see e.g., [7, 8, 42].

In [50], inspired by the idea behind the L-curve, a multi-objective optimization-based method was introduced for choosing the regularization parameter. The main idea of this method is to scale the residual norm \(\left \| Ax_{\lambda }-b \right \|\) and the (semi)norm \(\left \| Lx_{\lambda } \right \|\) by a suitable method and then minimizing the sum of them. Here, we use a same technique to find an appropriate regularization parameter λL for the line 1 of Algorithm 3.

Consider the multi-objective optimization problem

where \(g_{1}(\lambda _{L})={\left \|Ax(\lambda _{L})-b\right \|}/\sqrt {1+\left \|x(\lambda _{L})\right \|^{2}}\), \(g_{2}(\lambda _{L})=\left \| Lx(\lambda _{L}) \right \|\) and \(x(\lambda _{L}) ={{(A^{T}A+\lambda _{L} L^{T}L)}^{-1}}{A^{T}}b\). We first investigate the impact of the regularization parameter λL on the objective functions g1 and g2 in the following theorem. This theorem is a straightforward generalization of the Theorem 2 in [50]. For simplicity, throughout this section, we denote the regularization parameter λL and the regularized solution x(λL) by β and xβ, respectively.

Theorem 5

Assume that Null(A) ∩Null(L) = {0}. Given two functions \(g_{1}(\upbeta )={\left \|Ax_{\upbeta }-b\right \|}/\sqrt {1+\left \|x_{\upbeta }\right \|^{2}}\) and \(g_{2}(\upbeta )=\left \| Lx_{\upbeta } \right \|\), where \(x_{\upbeta } ={{(A^{T}A+\upbeta L^{T}L)}^{-1}}{A^{T}}b\) the following statements are hold:

-

(a)

For 0 < β1 < β2 we have g1(β1) < g1(β2) and g2(β2) < g2(β1). In other words, g1 is a strictly increasing function of β whereas g2 is a strictly decreasing function of β.

-

(b)

\(g_{1}(\upbeta ) \rightarrow \left \| Ax_{LS} -b\right \|/\sqrt {1+\left \|x_{LS}\right \|^{2}}\) and \(g_{2}(\upbeta ) \rightarrow \left \| Lx_{LS} \right \| \) as \(\upbeta \rightarrow 0\).

-

(c)

\(g_{2}(\upbeta ) \rightarrow 0\) and \(g_{1}(\upbeta ) \rightarrow \left \|(A(AP_{L})^{\dagger }-I) b \right \|/\sqrt {1+ \left \|(AP_{L})^{\dagger }b\right \|^{2}}\) as \(\upbeta \rightarrow \infty \), where PL = I − L‡L and ‡ denotes the Moore–Penrose pseudoinverse.

Theorem 5 gives the lower and upper bounds of the two objectives g1(β) and g2(β). From this theorem, it can be seen that the vectors

are the ideal objective vector and the nadir objective vector for the problem (3.6), respectively. They will be applied later to normalize the objectives g1(β) and g2(β) such that their values are of approximately the same magnitude. It can be easily shown that composing each objective of the problem (3.6) with a strictly increasing function does not change the set of its Pareto minimizers, as is described in the following theorem. This theorem coincides essentially with Theorem 3 in [50].

Theorem 6

Let

where \(T_{1},T_{2}:\mathbb {R}\rightarrow \mathbb {R}\) are two strictly increasing real functions. Then, \(\tilde {\upbeta }\) is a Pareto minimizer of \(\hat {g}\) if and only if it is a Pareto minimizer of g, where g is the objective function of the problem (3.6). In other words, the two problems \(\underset {\upbeta >0}{\mathop {{\min \limits } }} g(\upbeta )\) and \(\underset {\upbeta >0}{\mathop {{\min \limits } }} \hat {g}(\upbeta )\) are equivalent.

One general approach in order to solve a multi-objective problem is converting the problem to a single-objective problem and then solving the single-objective problem by a standard optimization method. A well-known technique to convert a multi-objective problem to a single-objective problem, assuming that the components of the objective vector are non negative, is replacing the objective vector by the p-norm of the problem. Moreover, the objective function of the new problem, in the case where p is finite, is replaced by its p th power that is a differentiable function. For example, by using this method, the optimization problem (3.6) is replaced by:

In particular, when p = 1, this method is equivalent to another well-known method which is called weighted-sum method when the weights are considered to be uniform [37]. It can be easily seen that if \(\tilde {\upbeta }\) is a global minimizer for the single-objective problem (3.7), then \(\tilde {\upbeta }\) is a Pareto minimizer for the multi-objective problem (3.6). The problem (3.7) is useful for our purpose because it minimizes the distance between the feasible objective region of the problem (3.6) and the reference point (0,0), which is near the ideal objective vector.

Now, we are ready to present a new method for choosing the regularization parameter β = λL. We start from this point that when the regularization parameter β is chosen very small, the value of \(g_{2}(\upbeta )=\left \|Lx_{\upbeta }\right \|\) is very large whereas the value of \(g_{1}(\upbeta )={\left \|Ax_{\upbeta }-b\right \|}/\sqrt {1+\left \|x_{\upbeta }\right \|^{2}}\) is very small. See Fig. 1, for example. Moreover, when β is gradually increasing, the value of g1(β) increases slowly while the value of g2(β) rapidly tends to zero. Also, the functions g1 and g2 have very different ranges. Therefore, it is not easy to find a Pareto minimizer of

without suitable transformations.

The plots of the objective functions g1 and g2 for the baart (100) test problem from the “Regularization tools” package [24]. Here, L = I, and the matrix A and the right-hand side vector b have been perturbed as \(A+0.1 (E/\left \|E \right \|_{F})\) and \(b +0.1(e/\left \|e \right \|)\), respectively, where E and e have been created by using the randn function of MATLAB. The sub-figures from (B) to (D), are magnified forms of the sub-figure (A), respectively [50]

To overcome the above difficulties, we try to (approximately) equalize the ranges of the functions g1 and g2 via introducing some suitable transformations. Let \(T:\mathbb {R}^{+}\rightarrow \mathbb {R}\) be an increasing function and consider the following function:

According to Theorem 6, two problems \(\underset {\upbeta >0}{\mathop {{\min \limits } }} g(\upbeta )\) and \(\underset {\upbeta >0}{\mathop {{\min \limits } }} \hat {g}(\upbeta )\) are equivalent. Noting that generally it is necessary to reduce the range of g2, the function \(T(x)=\arctan (x)\) is a good choice for our purpose. In the next step, we scale \(\hat {g}_{1}\) and \( \hat {g}_{2}\) such that the scaled functions have the same range of values. We define the scaled functions as

where S is the following scaling function

and \(\min \limits \hat {g}_{i}\) and \(\max \limits \hat {g}_{i}\) are lower and upper bounds, respectively, for the objective function \(\hat {g}_{i}\). Note that by using this scaling, the values of \(\hat {g}_{i}\) lie in the interval [0,1]. Again, according to Theorem 6, two problems \(\underset {\upbeta >0}{\mathop {{\min \limits } }} g(\upbeta )\) and \(\underset {\upbeta >0}{\mathop {{\min \limits } }} \hat {g}_{s}(\upbeta )\) are equivalent. Finally, we choose the regularization parameter β∗ by finding the global minimizer of problem \(\underset {\upbeta >0}{\mathop {{\min \limits } }} {\left \| \hat {g}_{s}(\upbeta ) \right \|_{p}^{p}}\). By considering \(T(x)=\arctan (x)\), and p = 1, we need to minimize the following function

Notice that \(\min \limits \arctan (g_{1}(\upbeta ))\) and \(\min \limits \arctan (g_{2}(\upbeta ))\) are considered as zero in the above equation because according to Theorem 5, \(g_{1}(\upbeta ) \rightarrow \left \| Ax_{LS} -b\right \|^{2}/(1+\left \|x_{LS}\right \|^{2})\approx 0\) and \(g_{2}(\upbeta ) \rightarrow 0\), as \(\upbeta \rightarrow 0\) and \(\upbeta \rightarrow \infty \) respectively. To minimize the objective function (3.9), we need the values of \(\max \limits \arctan (g_{1}(\upbeta ))\) and \(\max \limits \arctan (g_{2}(\upbeta ))\). Since g1 is a strictly increasing function of β, by considering Theorem 5, the value of \(\max \limits \arctan (g_{1}(\upbeta ))\) can be approximated by evaluating \(\arctan (g_{1}(\upbeta ))\) at a sufficiently big number (say β = 10100). In a similar way, since g2 is a strictly decreasing function of β, one may get an approximation of \(\max \limits \arctan (g_{2}(\upbeta ))\) by evaluating \(\arctan (g_{2}(\upbeta ))\) at a sufficiently small number (say β = eps). One of the best characteristics of the proposed objective function (3.9) is its uni-modality over a sufficiently big interval containing the minimizer. See Fig. 2, for example. Therefore, the minimizer of (3.9) can be easily found by using a bracketing search procedure such as golden section search method [46].

The plot of the objective function K for the same test problem as in Fig. 1

To find an initial search interval [βf,βℓ] for the golden section search method, suppose that the minimum and the maximum values of the regularization parameter are β(min) and β(max), respectively. We generate a finite number of regularization parameters between β(min) and β(max) such that they form a geometric progression. If the number of all parameters is N, then they are given by

In the next step, we evaluate the function (3.9) at these parameters. If the minimum of these values is attained at βj, then the initial search interval can be chosen as

4 Numerical experiments

In this section, we apply the GNRTLS algorithm to solve a set of test problems, taken from the Hansen’s “Regularization Tools” package [24]. We select the BAART, FOXGOOD, GRAVITY, HEAT, PHILLIPS, and SHAW problems for generating the matrix A, the vector b, and the exact solution x. Each problem is derived from discretization of an integral equation of the first kind. These problems can be found in the manual of the package. For each test problem, we consider the dimensions 400 × 400, 800 × 800, 1000 × 1000, and 1200 × 1200. Using the data, we generate the noise-contaminated matrix A and the noise-contaminated vector b as

where the elements of the noises E and e are created by the MATLAB “randn” function and σ is the level of the noise. In the examples we consider the noise level σ = 1e − 2. We also consider the regularization matrix L as the following approximations to the first and second derivative operators:

For the GNRTLS algorithm, the regularization parameter λ and the initial guess x0 are determined by the relations \(\lambda = \lambda _{L}/(1+\left \|x(\lambda _{L})\right \|^{2})\), and x0 = x(λL) = (ATA + λLLTL)− 1ATb, respectively, where λL is computed by minimizing the function (3.9). We have illustrated a sample run of the presented method in Figs. 3, 4, 5, 6, 7, and 8. More comprehensive numerical results are reported in Tables 1 and 2. For the examples in these tables, we set ε = 1e − 6 and the maximum of iterations maxit = 10 for the GNRTLS algorithm. For finding an initial search interval for the golden section search algorithm, we choose β(min) = 16eps, where eps is the machine epsilon, β(max) = 100, and N = 20 in our numerical examples. Also, the iteration of the golden section search algorithm is terminated when |βℓ −βf| < ε = 10− 4. CPU times for computing the regularization parameter by the golden section search algorithm and computing the RTLS solution by the Gauss–Newton method are reported as an ordered pair (TGSS,TGN). All computations were performed in MATLAB R2015a on a Pentium(R) 2.2 GHz laptop with a 32-bit operating system and 4 GB RAM. Each experiment has been implemented 10 times, and the average of the results is reported. A sample comparison of the L-curve and the presented method for determining the regularization parameter is given in Table 3. To find the corner of the L-curve, the MATLAB routine l_corner in [24] is used. The table shows that the L-curve sometimes gives a starting point x0 that is far from the optimal solution.

Now, we give an image deblurring example to show that the approximated Jacobian \(\tilde {J}(x)=A/\sqrt {1+x^{T}x}\) can be useful in the implementation of the algorithm. We consider the blur test problem from “Regularization Tools” package [24] with the input arguments = 40, = 3 and = 0.7. Via considering \(A\in \mathbb {R}^{1600\times 1600}\) (the blurring matrix) and \(x_{\text {true}}\in \mathbb {R}^{1600}\) (the columnwise stacked version of the uncontaminated image) returned by this test problem, we construct the blurred and noisy image b as follows:

As before, the elements of the noises E and e are created by the MATLAB “randn” function and σ = 0.01 is the level of the noise. We use the following first-order discrete derivative operator for two space dimensions as the regularization matrix:

where \(L_{1}\in \mathbb {R}^{(N-1)\times N}\) is defined as before. Figure 9 shows the result of this example. Also, Fig. 10 shows the convergence history of this example when using J and \(\tilde {J}\).

From Tables 1 and 2 and Fig. 9, it can be seen that if the parameter λL is determined by minimization of the objective function (3.9), then generally the GNRTLS algorithm provides an appropriate approximate solution. Our experiments on the problems with a small or moderate size show that such problems can be effectively solved by the GNRTLS algorithm. However, as the size of problems increases, the computational cost of the GNRTLS algorithm increases. Thus, for large-scale problems, it is necessary to use iterative or projection methods to reduce the requirements of computation and storage. It would be interesting to combine the proposed method with a Krylov subspace method or to use an iteratively regularized Gauss–Newton method for solving large-scale problems.

5 Conclusions

We showed that the Gauss–Newton method can be applied for solving RTLS problems if a good initial guess and a good regularization parameter are available. We presented a multi-objective–based method for finding a regularization parameter λL such that the regularized least squares (RLS) solution x(λL) = (ATA + λLLTL)− 1ATb makes at the same time both \({\left \|Ax-b\right \|}^{2}/(1+\left \|x\right \|^{2})\) and \(\left \| Lx \right \|^{2}\) small, to the extent possible. We showed that if such a λL is available, then the regularization parameter λ for the RTLS problem can be approximated by the relation \(\lambda = \lambda _{L} /(1+\left \|x(\lambda _{L})\right \|^{2})\), and x(λL) can be used as an appropriate initial guess for the Gauss–Newton method. Finally, several numerical experiments showed that the presented method gives accurate approximate solutions for the RTLS problems.

Notes

Global convergence means convergence to a local minimum from any initial point [22].

References

Adcock, R. J.: Note on the method of least squares. Analyst 4 (6), 183–184 (1877)

Beck, A., Ben-Tal, A.: On the solution of the Tikhonov regularization of the total least squares problem. SIAM J. Optim. 17(1), 98–118 (2006)

Beck, A., Ben-Tal, A., Teboulle, M.: Finding a global optimal solution for a quadratically constrained fractional quadratic problem with applications to the regularized total least squares. SIAM J. Matrix Anal. Appl. 28(2), 425–445 (2006)

Björck, Å.: Numerical methods for least squares problems. SIAM (1996)

Björck, Å., Heggernes, P.x, Matstoms, P.: Methods for large scale total least squares problems. SIAM J. Matrix Anal. Appl. 22(2), 413–429 (2000)

Buccini, A., Park, Y., Reichel, L.: Comparison of a-posteriori parameter choice rules for linear discrete ill-posed problems. J. Comput. Appl. Math. 373, 112138 (2020)

Calvetti, D., Golub, G. H., Reichel, L.: Estimation of the L-curve via lanczos bidiagonalization. BIT Numer. Math. 39(4), 603–619 (1999)

Calvetti, D., Reichel, L., Shuibi, A.: L-Curve and curvature bounds for Tikhonov regularization. Numer. Algorithm. 35(2), 301–314 (2004)

Coello, C. A. C., Lamont, G. B., Van Veldhuizen, D. A., et al.: Evolutionary Algorithms for Solving Multi-Objective Problems, vol. 5. Springer (2007)

Cortiella, A., Park, K. -C., Doostan, A.: Sparse identification of nonlinear dynamical systems via reweighted ℓ1-regularized least squares. Comput. Methods Appl. Mech. Eng. 376, 113620 (2021)

Deb, K.: Multi-objective Optimization Using Evolutionary Algorithms, vol. 16. Wiley (2001)

Ehrgott, M.: Multicriteria Optimization, vol. 491. Springer Science & Business Media (2005)

Engl, H. W., Hanke, M., Neubauer, A.: Regularization of Inverse Problems, vol. 375. Springer Science & Business Media (2000)

Fasino, D., Fazzi, A.: A Gauss–Newton iteration for total least squares problems. BIT Numer. Math., 1–19 (2018)

Fenu, C., Reichel, L., Rodriguez, G., Sadok, H.: GCV for Tikhonov regularization by partial SVD. BIT Numer. Math. 57(4), 1019–1039 (2017)

Fierro, R. D., Golub, G. H., Hansen, P. C., O’Leary, D. P.: Regularization by truncated total least squares. SIAM J. Sci. Comput. 18(4), 1223–1241 (1997)

Gander, W., Golub, G. H., von Matt, U.: A constrained eigenvalue problem. Linear Algebra Appl. 114, 815–839 (1989)

Golub, G. H., Hansen, P. C., O’Leary, D. P.: TIkhonov regularization and total least squares. SIAM J. Matrix Anal. Appl. 21(1), 185–194 (1999)

Golub, G. H., Van Loan, C. F.: An analysis of the total least squares problem. SIAM J. Numer. Anal. 17(6), 883–893 (1980)

Golub, G. H., Van Loan, C. F.: Matrix Computations. Johns Hopkins University Press, Baltimore (2013)

Guo, H., Renaut, R. A.: A regularized total least squares algorithm. In Total Least Squares and Errors-in-Variables Modeling, pp. 57–66. Springer (2002)

Hansen, P., Pereyra, V., Scherer, G.: Least squares data fitting with applications. Johns Hopkins University Press (2013)

Hansen, P. C.: Rank-deficient and Discrete Ill-posed Problems: Numerical aspects of linear inversion. SIAM (1998)

Hansen, P. C.: Regularization tools version 4.0 for matlab 7.3. Numer. Algorithm. 46(2), 189–194 (2007)

Hansen, P. C., O’Leary, D. P.: The use of the L-curve in the regularization of discrete ill-posed problems. SIAM J. Sci. Comput. 14(6), 1487–1503 (1993)

Lampe, J., Voss, H.: On a quadratic eigenproblem occurring in regularized total least squares. Comput. Stat. Data Anal. 52(2), 1090–1102 (2007)

Lampe, J., Voss, H.: A fast algorithm for solving regularized total least squares problems. Electron. Trans. Numer Anal. 31, 12–24 (2008)

Lampe, J., Voss, H.: Solving regularized total least squares problems based on eigenproblems. Taiwan. J. Math. 14(3A), 885–909 (2010)

Lampe, J., Voss, H.: Efficient determination of the hyperparameter in regularized total least squares problems. Appl. Numer. Math. 62(9), 1229–1241 (2012)

Lampe, J., Voss, H.: Large-scale Tikhonov regularization of total least squares. J. Comput. Appl. Math. 238, 95–108 (2013)

Lawson, C. L., Hanson, R. J.: Solving Least Squares Problems, vol. 15. SIAM (1995)

Lee, G., Barlow, J. L.: Two projection methods for regularized total least squares approximation. Linear Algebra Appl. 461, 18–41 (2014)

Lee, G., Fu, H., Barlow, J. L.: Fast high-resolution image reconstruction using Tikhonov regularization based total least squares. SIAM J. Sci. Comput. 35(1), B275–B290 (2013)

Levin, E., Meltzer, A. Y.: Estimation of the regularization parameter in linear discrete ill-posed problems using the Picard parameter. SIAM J. Sci. Comput. 39(6), A2741–A2762 (2017)

Markovsky, I.: Bibliography on total least squares and related methods. Stat. Interface 3(3), 329–334 (2010)

Markovsky, I., Van Huffel, S.: Overview of total least-squares methods. Signal Process. 87(10), 2283–2302 (2007)

Miettinen, K.: Nonlinear Multiobjective Optimization, vol. 12. Springer Science & Business Media (2012)

Nocedal, J., Wright, S.: Numerical optimization. Springer Science & Business Media (2006)

Park, Y., Reichel, L., Rodriguez, G., Yu, X.: Parameter determination for Tikhonov regularization problems in general form. J. Comput. Appl. Math. 343, 12–25 (2018)

Reddy, G.: The parameter choice rules for weighted Tikhonov regularization scheme. Comput. Appl. Math. 37(2), 2039–2052 (2018)

Reichel, L., Rodriguez, G.: Old and new parameter choice rules for discrete ill-posed problems. Numer. Algorithm. 63(1), 65–87 (2013)

Reichel, L., Sadok, H.: A new L-curve for ill-posed problems. J. Comput. Appl. Math. 219(2), 493–508 (2008)

Renaut, R. A., Guo, H.: Efficient algorithms for solution of regularized total least squares. SIAM J. Matrix Anal. Appl. 26(2), 457–476 (2005)

Renaut, R. A., Horst, M., Wang, Y., Cochran, D., Hansen, J.: Efficient estimation of regularization parameters via downsampling and the singular value expansion. BIT Numer. Math. 57(2), 499–529 (2017)

Sima, D. M., Van Huffel, S., Golub, G. H.: Regularized total least squares based on quadratic eigenvalue problem solvers. BIT Numer. Math. 44 (4), 793–812 (2004)

Snyman, J. A.: Practical mathematical optimization. Springer (2005)

Van Huffel, S., Vandewalle, J.: The Total Least Squares Problem: Computational Aspects and Analysis, vol. 9. SIAM (1991)

Wang, F., Zhao, X. -L., Ng, M. K.: Multiplicative noise and blur removal by framelet decomposition and l1-based L-curve method. IEEE Trans. Image Process. 25(9), 4222–4232 (2016)

Yang, M., Xia, Y., Wang, J., Peng, J.: Efficiently solving total least squares with Tikhonov identical regularization. Comput. Optim. Appl. 70(2), 571–592 (2018)

Zare, H., Hajarian, M.: Determination of regularization parameter via solving a multi-objective optimization problem. Appl. Numer. Math. 156, 542–554 (2020)

Acknowledgements

The authors would like to express their heartfelt thanks to the editor and anonymous referees for their useful comments and constructive suggestions which substantially improved the quality and presentation of this paper.

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

About this article

Cite this article

Zare, H., Hajarian, M. An efficient Gauss–Newton algorithm for solving regularized total least squares problems. Numer Algor 89, 1049–1073 (2022). https://doi.org/10.1007/s11075-021-01145-2

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11075-021-01145-2