Abstract

This study concerns the problem of compatibility of state constraints with a multiagent control system. Such a system deals with a number of agents so large that only a statistical description is available. For this reason, the state variable is described by a probability measure on \({\mathbb {R}}^d\) representing the density of the agents and evolving according to the so-called continuity equation which is an equation stated in the Wasserstein space of probability measures. The aim of the paper is to provide a necessary and sufficient condition for a given constraint (a closed subset of the Wasserstein space) to be compatible with the controlled continuity equation. This new condition is characterized in a viscosity sense as follows: the distance function to the constraint set is a viscosity supersolution of a suitable Hamilton–Jacobi–Bellman equation stated on the Wasserstein space. As a byproduct and key ingredient of our approach, we obtain a new comparison theorem for evolutionary Hamilton–Jacobi equations in the Wasserstein space.

1 Introduction

In classical control theory, a single agent controls a dynamics (here represented by a differential inclusion)

$$\begin{aligned} \dot{x}(t) \in F(x(t)),&\text{ for } \text{ a.e. } t>0\, , \end{aligned}$$(1.1)

where \(F:{\mathbb {R}}^d \rightrightarrows {\mathbb {R}}^d\) is a set valued map, associating with each \(x\in {\mathbb {R}}^d\) the subset F(x) of \({\mathbb {R}}^d\) of the admissible velocities from x. A multiagent system involves a large number of agents, all having a dynamics of the form (1.1). In this model, the number of agents is so large that at each time only a statistical (macroscopic) description of the state is available. We thus describe the configuration of the system at time t by a Borel measure \(\mu _t\) on \({\mathbb {R}}^d\), where for every Borel set \(A \subseteq {\mathbb {R}}^d\) the quotient \(\dfrac{\mu _t(A)}{\mu _t({\mathbb {R}}^d)}\) represents the fraction of the total amount of agents that are present in A at time t. Since the total amount of agents is supposed to be fixed in time, \(\mu _t({\mathbb {R}}^d)\) is constant, and thus, we choose to normalize the measure \(\mu _t\) assuming \(\mu _t({\mathbb {R}}^d)=1\), i.e., \(\mu _t\in {\mathscr {P}}({\mathbb {R}}^d)\), the set of Borel probability measures on \({\mathbb {R}}^d\).

Hence, the evolution of the controlled multi-agent system can be represented by the following two-scale dynamics

- Microscopic dynamics: each agent’s position at time t is given by x(t), which evolves according to the dynamical system

  $$\begin{aligned} \dot{x}(t) \in F(\mu _t,x(t)),&\text{ for } \text{ a.e. } t>0\, , \end{aligned}$$(1.2)

  where F is a set-valued map. It is worth pointing out that each agent’s dynamics is nonlocal since it depends also on the instantaneous configuration \(\mu _t\) of the crowd of agents at time t, described by a probability measure on \({\mathbb {R}}^d\).

- Macroscopic dynamics: the configuration of the crowd of agents at time t is given by a time-dependent measure \(\mu _t\in {\mathscr {P}}({\mathbb {R}}^d)\) whose evolution satisfies the following continuity equation (to be understood in the sense of distributions)

  $$\begin{aligned} \partial _t \mu _t+\mathrm {div}(v_t \mu _t)=0,&t>0, \end{aligned}$$(1.3)

  coupled with the control constraint

  $$\begin{aligned} v_t(x) \in F(\mu _t, x) \text{ for } \mu _t\text{-a.e. } x \in {\mathbb {R}}^d \text{ and } \text{ for } \text{ a.e. } t \ge 0. \end{aligned}$$(1.4)

  Here \(v_t(x)\) represents the possible (Eulerian) velocity chosen by an external planner for an agent at time t and position x.
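To make the two-scale picture concrete, the following minimal sketch approximates \(\mu _t\) by the empirical measure of finitely many particles on the real line and advances them with an explicit Euler step. The nonlocal velocity \(f(\mu ,x,u)=u\,(\mathrm {mean}(\mu )-x)\) with a constant control \(u\in [0,1]\), and the names `velocity`, `euler_step` and `simulate`, are illustrative assumptions of ours and are not taken from the paper.

```python
from statistics import fmean


def velocity(positions, x, u):
    """Hypothetical nonlocal velocity f(mu, x, u) = u * (mean(mu) - x).

    `positions` lists the atoms of the empirical measure mu (equal weights).
    """
    return u * (fmean(positions) - x)


def euler_step(positions, u, dt):
    """One explicit Euler step of the microscopic dynamics for every particle."""
    return [x + dt * velocity(positions, x, u) for x in positions]


def simulate(positions, u, dt, steps):
    """Return the list of particle configurations at times 0, dt, ..., steps*dt."""
    trajectory = [list(positions)]
    for _ in range(steps):
        trajectory.append(euler_step(trajectory[-1], u, dt))
    return trajectory
```

With this choice of velocity every Euler step multiplies each particle's deviation from the mean by \(1-u\,\mathrm {d}t\) and leaves the mean unchanged, so the empirical measure contracts towards a Dirac mass at its barycenter.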

The investigation of (deterministic) optimal control problems in the space of measures has attracted increasing interest from the mathematical community in recent years, due to the potential applications in the study of complex systems, or multi-agent systems (see, e.g., [16, 18, 19]). Indeed, in the framework of mean field approximation of multi-agent systems, starting from a control problem for a large number of (discrete) agents, the problem is recast in the framework of probability measures (see the recent [15] or the preprint [12] for \(\Gamma \)-convergence results for optimal control problems with nonlocal dynamics). This procedure reduces the dimensionality and the number of equations, possibly leading to a simpler and more tractable problem from the point of view of numerics. The reader can find a comprehensive overview of the literature about such formulations and applications, together with some insights on research perspectives, in the recent survey [1], and references therein. We refer to [7] for further results on mean field control problems.

The problem we address in this paper is the compatibility of the above dynamical system (1.3)–(1.4) with a given closed constraint \({\mathscr {K}}\subseteq {\mathscr {P}}_2({\mathbb {R}}^d)\). Here, \({\mathscr {P}}_2({\mathbb {R}}^d)\) is the set of Borel probability measures with finite second moment; this set is equipped with the 2-Wasserstein distance (see Sect. 2). This compatibility property could be understood in two ways

- \({\mathscr {K}}\) is viable for the dynamics F if and only if for any \(\mu \in {\mathscr {K}}\) there exists a solution \( t \mapsto \mu _t \) of the controlled continuity Eqs. (1.3)–(1.4) with \(\mu _0 =\mu \) such that \(\mu _t \in {\mathscr {K}}\) for all \(t \ge 0\);

- \({\mathscr {K}}\) is invariant for the dynamics F if and only if for any \(\mu \in {\mathscr {K}}\) and for any solution \( t \mapsto \mu _t \) of the controlled continuity Eqs. (1.3)–(1.4) with \(\mu _0 =\mu \) we have \(\mu _t \in {\mathscr {K}}\) for all \(t \ge 0\).

Inspired by a characterization of the viability property via supersolution of Hamilton–Jacobi–Bellman equations, which was first obtained in [9] in the framework of stochastic control, we develop an approach for the present multiagent control problem with deterministic dynamics (1.3)–(1.4).

The main result of our paper (Theorems 6.6 and 6.7) can be roughly summarized as follows

Theorem 1.1

Let \({\mathscr {K}}\subseteq {\mathscr {P}}_2({\mathbb {R}}^d)\) be a closed set and \(d_{{\mathscr {K}}}\) the associated distance function. Assume that the set valued map F is L-Lipschitz.

- \({\mathscr {K}}\) is viable iff the function \(\mu \mapsto d_{{\mathscr {K}}}(\mu )\) is a viscosity supersolution of

  $$\begin{aligned} (L+2) u(\mu ) +{\mathscr {H}}_F^{\mathrm {viab}}(\mu ,D_\mu u(\mu ))=0, \end{aligned}$$

  where, for all \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\), \(p\in L^2_\mu ({\mathbb {R}}^d;{\mathbb {R}}^d)\),

  $$\begin{aligned} {\mathscr {H}}_F^{\mathrm {viab}}(\mu ,p):=-d_{{\mathscr {K}}}(\mu )-\mathop {{{\,\mathrm{inf}\,}}}\limits _{\begin{array}{c} v(\cdot )\in L^2_\mu ({\mathbb {R}}^d)\\ v(x)\in F(\mu ,x)\,\mu -\text {a.e.}\,x \end{array}}\int _{{\mathbb {R}}^\mathrm{d}}\langle v(x),p(x)\rangle \,\mathrm{d}\mu (x). \end{aligned}$$

- \({\mathscr {K}}\) is invariant iff the function \(\mu \mapsto d_{{\mathscr {K}}}(\mu )\) is a viscosity supersolution of

  $$\begin{aligned} (L+2) u(\mu ) +{\mathscr {H}}_F^{\mathrm {inv}}(\mu ,D_\mu u(\mu ))=0, \end{aligned}$$

  where, for all \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\), \(p\in L^2_\mu ({\mathbb {R}}^d;{\mathbb {R}}^d)\),

  $$\begin{aligned}{\mathscr {H}}_F^{\mathrm {inv}}(\mu ,p):=-d_{{\mathscr {K}}}(\mu )-\sup _{\begin{array}{c} v(\cdot )\in L^2_\mu ({\mathbb {R}}^d)\\ v(x)\in F(\mu ,x)\,\mu -\text {a.e.}\,x \end{array}}\int _{{\mathbb {R}}^\mathrm{d}}\langle v(x),p(x)\rangle \,\mathrm{d}\mu (x).\end{aligned}$$
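For a finitely supported measure, the infimum and the supremum over selections appearing in the two Hamiltonians can be taken atom by atom, which makes them easy to evaluate. The sketch below does this in dimension one under assumptions that are ours and not the paper's: the dynamics is parametrized as \(F(\mu ,x)=\{f(\mu ,x,u):u\in U\}\) with U replaced by a finite grid `controls` (so the result is exact only when the extrema are attained on the grid), and `hamiltonians`, `root_second_moment` and all argument names are hypothetical.

```python
import math


def hamiltonians(atoms, p, f, controls, dist_to_K):
    """Evaluate (H_viab, H_inv) at a discrete measure mu = sum_i w_i * delta_{x_i}.

    atoms     : list of (x_i, w_i) pairs with weights summing to one
    p         : function x -> p(x), the "momentum" evaluated at the atoms
    f         : function (atoms, x, u) -> velocity, an assumed parametrization of F
    controls  : finite list of control values standing in for U
    dist_to_K : function atoms -> d_K(mu)
    """
    inf_term = 0.0
    sup_term = 0.0
    for x, w in atoms:
        # Pointwise optimization of <v(x), p(x)> over the admissible velocities.
        values = [f(atoms, x, u) * p(x) for u in controls]
        inf_term += w * min(values)
        sup_term += w * max(values)
    d = dist_to_K(atoms)
    return -d - inf_term, -d - sup_term


def root_second_moment(atoms):
    """d_K(mu) for the assumed constraint K = {delta_0}, i.e. W_2(mu, delta_0)."""
    return math.sqrt(sum(w * x * x for x, w in atoms))
```

As an illustration, take \({\mathscr {K}}=\{\delta _0\}\), \(\mu =\tfrac{1}{2}\delta _{1}+\tfrac{1}{2}\delta _{-1}\), \(f(\mu ,x,u)=-ux\) with controls 0, 1/2, 1, and \(p(x)=x\). Then \(d_{{\mathscr {K}}}(\mu )=1\), the infimum term equals \(-1\) and the supremum term equals 0, so the function returns \({\mathscr {H}}_F^{\mathrm {viab}}=0\) and \({\mathscr {H}}_F^{\mathrm {inv}}=-1\).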

For a completely different approach to the viability problem, we refer to [5], where the author provides a characterization of the viability property for a closed set \({\mathscr {K}}\subseteq {\mathscr {P}}_1({\mathbb {T}}^d)\) by means of a condition involving a suitable notion of tangent cone to \({\mathscr {K}}\) in the Wasserstein space \({\mathscr {P}}_1({\mathbb {T}}^d)\), where \({\mathbb {T}}^d\) denotes the d-dimensional torus.

The paper is organized as follows: in Sect. 2, we fix the notations and provide some background results; Sect. 3 is devoted to the properties of the set of solutions of the controlled continuity Eqs. (1.3)–(1.4); Sect. 4 establishes the link between the viability/invariance problem with the value function of a suitable control problem in Wasserstein space; Sect. 5 introduces the viscosity solutions of Hamilton–Jacobi–Bellman equations in the Wasserstein space, together with a uniqueness result; in Sect. 6, we apply the results of Sect. 5 to the problem outlined in Sect. 4 deriving our main characterization of viability/invariance. Finally, in Sect. 7 we provide an example illustrating the main results. Some proofs of technical results are postponed to “Appendix.”

2 Notations

Throughout the paper, we will use the following notation, and we refer to [2] as a relevant resource for preliminaries on measure theory.

- \(B(x,r)\): the open ball of radius r of a metric space \((X,d_X)\), i.e., \(B(x,r):=\{y\in X:\,d_X(y,x)<r\}\);

- \({\overline{K}}\): the closure of a subset K of a topological space X;

- \(d_K(\cdot )\): the distance function from a subset K of a metric space (X, d), i.e., \(d_K(x):={{\,\mathrm{inf}\,}}\{d(x,y):\,y\in K\}\);

- \(C^0_b(X;Y)\): the set of continuous bounded functions from a Banach space X to Y, endowed with \(\Vert f\Vert _{\infty }=\displaystyle \sup _{x\in X}|f(x)|\) (if \(Y={\mathbb {R}}\), Y will be omitted);

- \(C^0_c(X;Y)\): the set of compactly supported functions of \(C^0_b(X;Y)\), with the topology induced by \(C^0_b(X;Y)\);

- \(BUC(X;{\mathbb {R}})\): the space of bounded real-valued uniformly continuous functions defined on X;

- \(\Gamma _I\): the set of continuous curves from a real interval I to \({\mathbb {R}}^d\);

- \(\Gamma _T\): the set of continuous curves from [0, T] to \({\mathbb {R}}^d\);

- \(e_t\): the evaluation operator \(e_t:{\mathbb {R}}^d\times \Gamma _I\rightarrow {\mathbb {R}}^d\) defined by \(e_t(x,\gamma )=\gamma (t)\) for all \(t\in I\);

- \({\mathscr {P}}(X)\): the set of Borel probability measures on a Banach space X, endowed with the \(\hbox {weak}^*\) topology induced from \(C^0_b(X)\);

- \({\mathscr {M}}({\mathbb {R}}^d;{\mathbb {R}}^d)\): the set of vector-valued Borel measures on \({\mathbb {R}}^d\) with values in \({\mathbb {R}}^d\), endowed with the \(\hbox {weak}^*\) topology induced from \(C^0_c({\mathbb {R}}^d;{\mathbb {R}}^d)\);

- \(|\nu |\): the total variation of a measure \(\nu \in {\mathscr {M}}({\mathbb {R}}^d;{\mathbb {R}}^d)\);

- \(\ll \): the absolute continuity relation between measures defined on the same \(\sigma \)-algebra;

- \(\mathrm {m}_2(\mu )\): the second moment of a probability measure \(\mu \in {\mathscr {P}}(X)\);

- \(r\sharp \mu \): the push-forward of the measure \(\mu \) by the Borel map r;

- \(\mu \otimes \pi _x\): the product measure of \(\mu \in {\mathscr {P}}(X)\) with the Borel family of measures \(\{\pi _x\}_{x\in X}\subseteq {\mathscr {P}}(Y)\) (see Theorem 2.1);

- \(\mathrm {pr}_i\): the i-th projection map \(\mathrm {pr}_i(x_1,\dots ,x_N)=x_i\);

- \(\Pi (\mu ,\nu )\): the set of admissible transport plans from \(\mu \) to \(\nu \);

- \(\Pi _o(\mu ,\nu )\): the set of optimal transport plans from \(\mu \) to \(\nu \);

- \(W_2(\mu ,\nu )\): the 2-Wasserstein distance between \(\mu \) and \(\nu \);

- \({\mathscr {P}}_2(X)\): the subset of elements of \({\mathscr {P}}(X)\) with finite second moment, endowed with the 2-Wasserstein distance;

- \(\dfrac{\nu }{\mu }\): the Radon–Nikodym derivative of the measure \(\nu \) w.r.t. the measure \(\mu \);

- \(\mathrm {Lip}(f)\): the Lipschitz constant of a function f;

- \((f)^+\): the positive part of a real valued function f, i.e., \((f)^+=\max \{0,f\}\).

Given Banach spaces X, Y, we denote by \({\mathscr {P}}(X)\) the set of Borel probability measures on X endowed with the \(\hbox {weak}^*\) topology induced by the duality with the Banach space \(C^0_b(X)\) of the real-valued continuous bounded functions on X with the uniform convergence norm. The second moment of \(\mu \in {\mathscr {P}}(X)\) is defined by \(\displaystyle \mathrm {m}_2(\mu )=\int _{X}\Vert x\Vert _X^2\,\mathrm{d}\mu (x)\), and we set \({\mathscr {P}}_2(X)=\{\mu \in {\mathscr {P}}(X):\, \mathrm {m}_2(\mu )<+\infty \}\). For any Borel map \(r:X\rightarrow Y\) and \(\mu \in {\mathscr {P}}(X)\), we define the push-forward measure \(r\sharp \mu \in {\mathscr {P}}(Y)\) by setting \(r\sharp \mu (B)=\mu (r^{-1}(B))\) for any Borel set B of Y. In other words,

$$\begin{aligned} \int _Y\varphi (y)\,\mathrm{d}(r\sharp \mu )(y)=\int _X\varphi (r(x))\,\mathrm{d}\mu (x), \end{aligned}$$

for any bounded Borel measurable function \(\varphi :Y\rightarrow {\mathbb {R}}\).
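For a finitely supported measure the push-forward simply relabels atoms and merges the weights of atoms with the same image. The following minimal sketch illustrates this and the associated change-of-variables rule; the dictionary representation of a discrete measure and the helper names `push_forward` and `integrate` are assumptions made for illustration.

```python
from collections import defaultdict


def push_forward(atoms, r):
    """Push-forward of a discrete measure {point: weight} under the map r.

    Atoms with the same image under r have their weights added together.
    """
    image = defaultdict(float)
    for x, w in atoms.items():
        image[r(x)] += w
    return dict(image)


def integrate(phi, atoms):
    """Integral of phi against a discrete measure {point: weight}."""
    return sum(w * phi(x) for x, w in atoms.items())
```

Integrating a function \(\varphi \) against `push_forward(mu, r)` gives the same value as integrating \(\varphi \circ r\) against `mu`, which is the discrete counterpart of the identity characterizing \(r\sharp \mu \).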

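We denote by \({\mathscr {M}}(X;Y)\) the set of Y-valued Borel measures defined on X. The total variation measure of \(\nu \in {\mathscr {M}}(X;Y)\) is defined for every Borel set \(B\subseteq X\) as

$$\begin{aligned} |\nu |(B):=\sup \left\{ \sum _{i\in {\mathbb {N}}}\Vert \nu (B_i)\Vert _Y:\ B=\bigcup _{i\in {\mathbb {N}}}B_i\right\} , \end{aligned}$$

where the sup ranges over countable Borel partitions of B.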

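We now recall the definitions of transport plans and Wasserstein distance (cf. for instance Chapter 6 in [2]). Let X be a complete separable Banach space, \(\mu _1,\mu _2\in {\mathscr {P}}(X)\). The set of admissible transport plans between \(\mu _1\) and \(\mu _2\) is

$$\begin{aligned} \Pi (\mu _1,\mu _2):=\left\{ {\varvec{\pi }}\in {\mathscr {P}}(X\times X):\ \mathrm {pr}_i\sharp {\varvec{\pi }}=\mu _i,\ i=1,2\right\} , \end{aligned}$$

where for \(i=1,2\), \(\mathrm {pr}_i:X\times X\rightarrow X\) is the projection \(\mathrm {pr}_i(x_1,x_2)=x_i\). The Wasserstein distance between \(\mu _1\) and \(\mu _2\) is

$$\begin{aligned} W_2^2(\mu _1,\mu _2):=\mathop {{{\,\mathrm{inf}\,}}}\limits _{{\varvec{\pi }}\in \Pi (\mu _1,\mu _2)}\int _{X\times X}\Vert x_1-x_2\Vert _X^2\,\mathrm{d}{\varvec{\pi }}(x_1,x_2). \end{aligned}$$

If \(\mu _1,\mu _2\in {\mathscr {P}}_2(X)\), then the above infimum is actually a minimum, and the set of minima is denoted by

$$\begin{aligned} \Pi _o(\mu _1,\mu _2):=\left\{ {\varvec{\pi }}\in \Pi (\mu _1,\mu _2):\ W_2^2(\mu _1,\mu _2)=\int _{X\times X}\Vert x_1-x_2\Vert _X^2\,\mathrm{d}{\varvec{\pi }}(x_1,x_2)\right\} . \end{aligned}$$

Recall that \({\mathscr {P}}_2(X)\) endowed with the \(W_2\)-Wasserstein distance is a complete separable metric space.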
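On the real line the 2-Wasserstein distance between two empirical measures with the same number of equally weighted atoms can be computed explicitly, since the monotone (sorted) pairing is an optimal transport plan for the quadratic cost. The sketch below relies on this one-dimensional fact; the function name `w2_empirical_1d` and the restriction to equal sample sizes are choices made for illustration only.

```python
import math


def w2_empirical_1d(xs, ys):
    """W_2 between the empirical measures of xs and ys (equal sizes, real line).

    Sorting both samples realizes the monotone coupling, which is optimal
    for the quadratic cost in dimension one.
    """
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes samples of equal size")
    n = len(xs)
    squared_cost = sum((a - b) ** 2 for a, b in zip(sorted(xs), sorted(ys)))
    return math.sqrt(squared_cost / n)
```

For instance, translating a sample by a constant c produces a measure at distance \(|c|\) from the original one, and the distance from \(\delta _0\) equals the square root of the second moment.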

The following result is Theorem 5.3.1 in [2].

Theorem 2.1

(Disintegration) Let \({\mathbb {X}},X\) be complete separable metric spaces. Given a measure \(\mu \in {\mathscr {P}}({\mathbb {X}})\) and a Borel map \(r:{\mathbb {X}}\rightarrow X\), there exists a Borel family of probability measures \(\{\mu _x\}_{x\in X}\subseteq {\mathscr {P}}({\mathbb {X}})\), uniquely defined for \(r\sharp \mu \)-a.e. \(x\in X\), such that \(\mu _x({\mathbb {X}}\setminus r^{-1}(x))=0\) for \(r\sharp \mu \)-a.e. \(x\in X\), and for any Borel map \(\varphi :{\mathbb {X}}\rightarrow [0,+\infty ]\) we have

$$\begin{aligned} \int _{{\mathbb {X}}}\varphi (z)\,\mathrm{d}\mu (z)=\int _X\left[ \int _{r^{-1}(x)}\varphi (z)\,\mathrm{d}\mu _x(z)\right] \mathrm{d}(r\sharp \mu )(x). \end{aligned}$$

We will write \(\mu =(r\sharp \mu )\otimes \mu _x\). If \({\mathbb {X}}=X\times Y\) and \(r^{-1}(x)\subseteq \{x\}\times Y\) for all \(x\in X\), we can identify each measure \(\mu _x\in {\mathscr {P}}(X\times Y)\) with a measure on Y.

3 Admissible trajectories

The goal of this section is to give a precise definition of the macroscopic dynamics (1.3)–(1.4) and to study its trajectories. To maintain the flow of the paper, the proofs of the results of this section are postponed to “Appendix A.”

Definition 3.1

(Admissible trajectories) Let \(I=[a,b]\) be a closed real interval, \({\varvec{\mu }}=\{\mu _t\}_{t\in I}\subseteq {\mathscr {P}}_2({\mathbb {R}}^d)\), \({\varvec{\nu }}=\{\nu _t\}_{t\in I}\subseteq {\mathscr {M}}({\mathbb {R}}^d;{\mathbb {R}}^d)\), \(F:{\mathscr {P}}_2({\mathbb {R}}^d)\times {\mathbb {R}}^d \rightrightarrows {\mathbb {R}}^d\) be a set-valued map.

We say that \({\varvec{\mu }}\) is an admissible trajectory driven by \({\varvec{\nu }}\) defined on I with underlying dynamics F if

- \(|\nu _t|\ll \mu _t\) for a.e. \(t\in I\);

- \(v_t(x):=\dfrac{\nu _t}{\mu _t}(x)\in F(\mu _t,x)\) for a.e. \(t\in I\) and \(\mu _t\)-a.e. \(x\in {\mathbb {R}}^d\);

- \(\partial _t\mu _t+\mathrm {div}\,\nu _t=0\) in the sense of distributions in \([a,b]\times {\mathbb {R}}^d\).

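Given \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\), we define the set of admissible trajectories as

$$\begin{aligned} {\mathcal {A}}_{[a,b]}(\mu ):=\left\{ {\varvec{\mu }}=\{\mu _t\}_{t\in [a,b]}:\ {\varvec{\mu }} \text{ is an admissible trajectory defined on } [a,b] \text{ with underlying dynamics } F \text{ and } \mu _a=\mu \right\} . \end{aligned}$$
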
We make the following assumptions on the set-valued map F:

- \(({\varvec{F}}_1)\): \(F:{\mathscr {P}}_2({\mathbb {R}}^d)\times {\mathbb {R}}^d\rightrightarrows {\mathbb {R}}^d\) is continuous with convex, compact and nonempty images, where on \({\mathscr {P}}_2({\mathbb {R}}^d)\times {\mathbb {R}}^d\) we consider the metric

  $$\begin{aligned}d_{{\mathscr {P}}_2({\mathbb {R}}^d)\times {\mathbb {R}}^d}((\mu _1,x_1),(\mu _2,x_2))=W_2(\mu _1,\mu _2)+|x_1-x_2|.\end{aligned}$$

- \(({\varvec{F}}_2)\): there exist \(L>0\), a compact metric space U and a continuous map \(f:{\mathscr {P}}_2({\mathbb {R}}^d)\times {\mathbb {R}}^d\times U\rightarrow {\mathbb {R}}^d\) satisfying

  $$\begin{aligned}|f(\mu _1,x_1,u)-f(\mu _2,x_2,u)|\le L (W_2(\mu _1,\mu _2)+|x_1-x_2|),\end{aligned}$$

  for all \(\mu _i\in {\mathscr {P}}_2({\mathbb {R}}^d)\), \(x_i\in {\mathbb {R}}^d\), \(i=1,2\), \(u\in U\), such that the set-valued map F can be represented as

  $$\begin{aligned}F(\mu ,x)=\left\{ f(\mu ,x,u):u\in U\right\} .\end{aligned}$$

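As pointed out also in Remark 2 of [16], from the Lipschitz continuity of the set-valued map F coming from assumption \(({\varvec{F}}_2)\), we easily get

$$\begin{aligned} F(\nu ,y)\subseteq F(\mu ,x)+L\left( W_2(\mu ,\nu )+|x-y|\right) \overline{B(0,1)}, \end{aligned}$$
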
for all \(\mu ,\nu \in {\mathscr {P}}_2({\mathbb {R}}^d)\) and \(x,y\in {\mathbb {R}}^d\). From which, for all \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\) and \(x\in {\mathbb {R}}^d\), we have

where \(C:=\max \{1,L\,\max \{|y|\,:\,y\in F(\delta _0,0)\}\}\).

Definition 3.2

Let \(\{\varvec{\mu }^{(n)}\}_{n\in {\mathbb {N}}}\subseteq \mathrm {AC}([a,b];{\mathscr {P}}_2({\mathbb {R}}^d))\). We say that \(\{{\varvec{\mu }}^{(n)}\}_{n\in {\mathbb {N}}}\) uniformly \(W_2\)-converges to \({\varvec{\mu }}\), \(\varvec{\mu }^{(n)}\rightrightarrows {\varvec{\mu }}\), if we have

$$\begin{aligned} \lim _{n\rightarrow +\infty }\sup _{t\in [a,b]}W_2(\mu ^{(n)}_t,\mu _t)=0. \end{aligned}$$

We recall the following result taken from [16].

Lemma 3.3

(Grönwall-like estimate (Prop. 2 in [16])) Assume \(\varvec{(F_1)-(F_2)}\). Let \(\mu _0,\theta _0\in {\mathscr {P}}_2({\mathbb {R}}^d)\), and \(\varvec{\mu }=\{\mu _t\}_{t\in [a,b]}\in {\mathcal {A}}_{[a,b]}(\mu _0)\) an admissible trajectory. Then, there exists an admissible trajectory \(\varvec{\theta }=\{\theta _t\}_{t\in [a,b]}\in {\mathcal {A}}_{[a,b]}(\theta _0)\), such that for all \(t\in [a,b]\) we have

where L is as in \(\varvec{(F_2)}\).

Proposition 3.4

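Assume \(\varvec{(F_1)-(F_2)}\). Let \({\varvec{\mu }}=\{\mu _t\}_{t\in [a,b]}\) be an admissible trajectory, with \(0\le a< b<+\infty \). Then, there exists \({\varvec{\eta }}\in {\mathscr {P}}({\mathbb {R}}^d\times \Gamma _{[a,b]})\) such that \(e_t\sharp {\varvec{\eta }}=\mu _t\) for all \(t\in [a,b]\), and for \({\varvec{\eta }}\)-a.e. \((x,\gamma )\)

$$\begin{aligned} {\left\{ \begin{array}{ll} {\dot{\gamma }}(t)\in F(\mu _t,\gamma (t)), &{} \text{ for } \text{ a.e. } t\in [a,b],\\ \gamma (a)=x. \end{array}\right. } \end{aligned}$$
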
Moreover, for any \({\varvec{\eta }}\) as above and for all \(t,s\in [a,b]\) with \(s<t\), we have

- (1) for \({\varvec{\eta }}\)-a.e. \((x,\gamma )\in {\mathbb {R}}^d\times \Gamma _{[a,b]}\),

  $$\begin{aligned}&\dfrac{e_t-e_s}{t-s}(x,\gamma )\in F(\mu _s,\gamma (s))+\\&\qquad +\left[ \dfrac{L}{t-s}\int _s^t\left[ W_2(\mu _\tau ,\mu _s)+|(e_\tau -e_s)(x,\gamma )|\right] \,\mathrm {d}\tau \right] \cdot \overline{B(0,1)}; \end{aligned}$$

- (2) \(\Vert e_t-e_s\Vert _{L^2_{{\varvec{\eta }}}}\le e^{L(t-s)}\left[ (t-s)(K+2L \mathrm {m}^{1/2}_2(\mu _s))+L\displaystyle \int _s^tW_2(\mu _\tau ,\mu _s)\,\mathrm{d}\tau \right] =:h(t,s)\);

- (3) \(\lim _{t\rightarrow s^+}\left\| \dfrac{e_t-e_s}{t-s}\right\| _{L^2_{{\varvec{\eta }}}}=K+2 L\mathrm {m}_2^{1/2}(\mu _s)\),

where \(L=\mathrm {Lip}(F)\) and \(K=\max \{|y|:\,y\in F(\delta _0,0)\}\).

In particular, there exists a Borel map \(w:{\mathbb {R}}^d\times \Gamma _{[a,b]}\rightarrow {\mathbb {R}}^d\), with \(w(x,\gamma )\in F(\mu _s,\gamma (s))\) for \({\varvec{\eta }}\)-a.e. \((x,\gamma )\in {\mathbb {R}}^d\times \Gamma _{[a,b]}\), such that

Proposition 3.5

(Compactness of \({\mathcal {A}}_{[a,b]}(\mu _0)\)) Assume \(({\varvec{F}}_1)-({\varvec{F}}_2)\) and let \(0\le a<b<+\infty \) and \(\mu _0\in {\mathscr {P}}_2({\mathbb {R}}^d)\). Then, the set of admissible trajectories \({\mathcal {A}}_{[a,b]}(\mu _0)\) is nonempty and compact w.r.t. uniform \(W_2\)-convergence (see Definition 3.2).

4 Viability problem and the value function

Throughout the paper, let \({\mathscr {K}}\subseteq {\mathscr {P}}_2({\mathbb {R}}^d)\) be closed w.r.t. the metric \(W_2\). We are interested in the definitions of compatibility of our dynamics w.r.t. the state constraint given by \({\mathscr {K}}\) (cf. introduction of the present paper).

Notice that, since concatenation of admissible trajectories is an admissible trajectory (see the note before Prop. 3 in [16]), if \({\mathscr {K}}\) is viable (resp. invariant) in \([t_0,T]\) then it is viable (resp. invariant) in \([0,{\hat{T}}]\) for any \({\hat{T}}>T\).

As we will investigate in Sect. 5, the viability and invariance properties of a closed set \({\mathscr {K}}\subseteq {\mathscr {P}}_2({\mathbb {R}}^d)\) are closely related to the following optimal control problems, with fixed time-horizon \(T>0\).

Definition 4.1

(Value functions) Given \({\mathscr {K}}\subseteq {\mathscr {P}}_2({\mathbb {R}}^d)\) closed, \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\) and \(t_0\in [0,T]\), we set

- (1) \(V^{\mathrm {viab}}:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow [0,+\infty )\) as follows

  $$\begin{aligned} V^{\mathrm {viab}}(t_0,\mu ):=\mathop {{{\,\mathrm{inf}\,}}}\limits _{{\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )}\int _{t_0}^T d_{{\mathscr {K}}}(\mu _t)\,\mathrm{d}t, \end{aligned}$$(4.1)

  where \(d_{{\mathscr {K}}}:{\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow [0,+\infty )\), \(d_{{\mathscr {K}}}(\mu ):=\mathop {{{\,\mathrm{inf}\,}}}\limits _{\sigma \in {\mathscr {K}}} W_2(\mu ,\sigma )\). We say that \({\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\) is an optimal trajectory for \(V^{\mathrm {viab}}\) starting from \(\mu \) at time \(t_0\) if it achieves the minimum in (4.1).

- (2) \(V^{\mathrm {inv}}:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow [0,+\infty )\) as follows

  $$\begin{aligned} V^{\mathrm {inv}}(t_0,\mu ):=\sup _{{\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )}\int _{t_0}^T d_{{\mathscr {K}}}(\mu _t)\,\mathrm{d}t. \end{aligned}$$(4.2)

  We say that \({\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\) is an optimal trajectory for \(V^{\mathrm {inv}}\) starting from \(\mu \) at time \(t_0\) if it achieves the maximum in (4.2).
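As a sanity check on the cost functional appearing in (4.1) and (4.2), the following sketch evaluates \(\int _0^T d_{{\mathscr {K}}}(\mu _t)\,\mathrm{d}t\) by the trapezoidal rule along one explicitly known trajectory. Everything specific here is an assumption made for illustration: the constraint is \({\mathscr {K}}=\{\delta _0\}\), so that \(d_{{\mathscr {K}}}(\mu )=W_2(\mu ,\delta _0)=\mathrm {m}_2^{1/2}(\mu )\); the dynamics is the one-dimensional \(F(\mu ,x)=\{-ux:\,u\in [0,1]\}\); the control u is held constant, so that a particle starting at x sits at \(x e^{-ut}\) at time t; and the function names are hypothetical. The code only computes the cost of this particular trajectory, not the value functions themselves.

```python
import math


def root_second_moment(points):
    """m_2^{1/2} of the empirical measure of `points`, i.e. its W_2-distance to delta_0."""
    return math.sqrt(sum(x * x for x in points) / len(points))


def cost_constant_control(points, u, horizon, steps=2000):
    """Trapezoidal approximation of the integral of d_K(mu_t) over [0, horizon].

    Assumes K = {delta_0} and the explicit flow x -> x * exp(-u * t)
    generated by the constant control u.
    """
    dt = horizon / steps
    total = 0.0
    for k in range(steps + 1):
        t = k * dt
        moved = [x * math.exp(-u * t) for x in points]
        weight = 0.5 if k in (0, steps) else 1.0  # trapezoidal end-point weights
        total += weight * root_second_moment(moved) * dt
    return total
```

For a constant control \(u>0\) the exact cost of this trajectory is \(\mathrm {m}_2^{1/2}(\mu )\,(1-e^{-uT})/u\), while \(u=0\) gives \(T\,\mathrm {m}_2^{1/2}(\mu )\); starting from \(\mu =\delta _0\) the cost vanishes for every u, in agreement with the fact that \(\{\delta _0\}\) is kept fixed by this dynamics.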

The main interest in the above value functions lies in the fact that they give a characterization of the viability/invariance as explained in Proposition 4.3. We first state a regularity result of the above value functions and the existence of optimal trajectories.

Proposition 4.2

Assume \(\varvec{(F_1)-(F_2)}\). Given \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\), \(t_0\in [0,T]\), there exist an optimal trajectory \({\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\) for \(V^{\mathrm {viab}}\) and an optimal trajectory \({\varvec{\mu }}'\in {\mathcal {A}}_{[t_0,T]}(\mu )\) for \(V^{\mathrm {inv}}\).

Proof

We prove the existence of an optimal trajectory for \(V^{\mathrm {viab}}\). Take any \(\mu ^1,\mu ^2\in {\mathscr {P}}_2({\mathbb {R}}^d)\). By passing to the infimum over \(\sigma \in {\mathscr {K}}\) in the triangle inequality

$$\begin{aligned} W_2(\mu ^1,\sigma )\le W_2(\mu ^1,\mu ^2)+W_2(\mu ^2,\sigma ), \end{aligned}$$

we have \(d_{{\mathscr {K}}}(\mu ^1)\le W_2(\mu ^1,\mu ^2)+d_{{\mathscr {K}}}(\mu ^2)\). Reversing the roles of \(\mu ^1\) and \(\mu ^2\), we get the 1-Lipschitz continuity of \(d_{{\mathscr {K}}}\). Hence, by Fatou’s Lemma, we get the l.s.c. of the cost functional, i.e.,

$$\begin{aligned} \liminf _{n\rightarrow +\infty }\int _{t_0}^T d_{{\mathscr {K}}}(\mu ^{(n)}_t)\,\mathrm{d}t\ge \int _{t_0}^T d_{{\mathscr {K}}}(\mu _t)\,\mathrm{d}t, \end{aligned}$$

for any sequence \(\{{\varvec{\mu }}^{(n)}\}_{n\in {\mathbb {N}}}\subseteq \mathrm {AC}([t_0,T];{\mathscr {P}}_2({\mathbb {R}}^d))\) uniformly \(W_2\)-converging to \({\varvec{\mu }}\). Combining this with the \(W_2\)-compactness property of Proposition 3.5, we get the desired result.

We prove the existence of an optimal trajectory for \(V^{\mathrm {inv}}\). We fix \(\{{\varvec{\mu }}^{(n)}\}_{n\in {\mathbb {N}}}\subset {\mathcal {A}}_{[t_0,T]}(\mu )\) and \({\hat{\sigma }}\in {\mathscr {K}}\). For any \(t\in [t_0,T]\), by the triangle inequality and recalling that, by definition, \(\mathrm {m}_2^{1/2}(\rho )=W_2(\rho ,\delta _0)\), we get the following uniform bound

for some constant \({\tilde{C}}>0\) coming from estimate (A.2) proved in “Appendix A”. Thus, as for the proof of the existence of a minimizer for \(V^{\mathrm {viab}}\), we can apply Fatou’s Lemma to get the u.s.c. of the cost functional and conclude. \(\square \)

We state here a first characterization of viability/invariance in terms of the optimal control problems introduced in Definition 4.1.

Proposition 4.3

Assume \(\varvec{(F_1)-(F_2)}\). Let \({\mathscr {K}}\subseteq {\mathscr {P}}_2({\mathbb {R}}^d)\) be closed in the \(W_2\)-topology, \(t_0\in [0,T]\). Then,

- (1) \({\mathscr {K}}\) is viable for F if and only if \(V^{\mathrm {viab}}(t_0,\mu _0)=0\) for all \(\mu _0\in {\mathscr {K}}\);

- (2) \({\mathscr {K}}\) is invariant for F if and only if \(V^{\mathrm {inv}}(t_0,\mu _0)=0\) for all \(\mu _0\in {\mathscr {K}}\).

Proof

We just prove (1), since the proof of (2) is similar. One implication follows directly by definition, so we prove the other direction assuming \(V^{\mathrm {viab}}(t_0,\mu _0)=0\) for all \(\mu _0\in {\mathscr {K}}\). By Proposition 4.2, for all \(\mu _0\in {\mathscr {K}}\), there exists an optimal trajectory \(\varvec{{\bar{\mu }}}\in {\mathcal {A}}_{[t_0,T]}(\mu _0)\) such that

$$\begin{aligned} V^{\mathrm {viab}}(t_0,\mu _0)=\int _{t_0}^T d_{{\mathscr {K}}}({\bar{\mu }}_t)\,\mathrm{d}t=0. \end{aligned}$$

This implies that \(d_{{\mathscr {K}}}({\bar{\mu }}_t)=0\) for a.e. \(t\in [t_0,T]\). By continuity of \(\varvec{{\bar{\mu }}}\) and by closedness of \({\mathscr {K}}\) w.r.t. \(W_2\)-topology, we obtain the viability property for \({\mathscr {K}}\). \(\square \)

As usual, the value function satisfies a Dynamic Programming Principle.

Lemma 4.4

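(DPP) The function \(V^{\mathrm {viab}}:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow [0,+\infty )\) satisfies

$$\begin{aligned} V^{\mathrm {viab}}(t_0,\mu )=\mathop {{{\,\mathrm{inf}\,}}}\limits _{\begin{array}{c} {\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\\ t\in [t_0,T] \end{array}}\left\{ \int _{t_0}^t d_{{\mathscr {K}}}(\mu _s)\,\mathrm{d}s+V^{\mathrm {viab}}(t,\mu _t)\right\} . \end{aligned}$$(4.3)

Furthermore, for any \({\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\), the map

$$\begin{aligned} t\mapsto g_{{\varvec{\mu }}}(t):=\int _{t_0}^t d_{{\mathscr {K}}}(\mu _s)\,\mathrm{d}s+V^{\mathrm {viab}}(t,\mu _t) \end{aligned}$$

is nondecreasing in \([t_0,T]\), and it is constant if and only if \({\varvec{\mu }}\) is an optimal trajectory.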

Proof

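We prove one inequality (\(\ge \)). By definition of \(V^{\mathrm {viab}}(t_0,\mu )\), for any \(\varepsilon >0\) there exists \({\varvec{\mu }}^\varepsilon \in {\mathcal {A}}_{[t_0,T]}(\mu )\) s.t.

$$\begin{aligned} V^{\mathrm {viab}}(t_0,\mu )+\varepsilon \ge \int _{t_0}^T d_{{\mathscr {K}}}(\mu ^\varepsilon _s)\,\mathrm{d}s\ge \int _{t_0}^t d_{{\mathscr {K}}}(\mu ^\varepsilon _s)\,\mathrm{d}s+V^{\mathrm {viab}}(t,\mu ^\varepsilon _t), \end{aligned}$$
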
for any \(t\in [t_0,T]\), since the truncated trajectory \(\varvec{{\hat{\mu }}}:={\varvec{\mu }}^\varepsilon _{|[t,T]}\) belongs to \({\mathcal {A}}_{[t,T]}(\mu ^\varepsilon _t)\). We conclude by passing to the infimum on \({\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\) and \(t\in [t_0,T]\) on the right-hand side and then letting \(\varepsilon \rightarrow 0^+\).

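Concerning the other inequality, fix any \({\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\) and \(t\in [t_0,T]\). By definition of \(V^{\mathrm {viab}}(t,\mu _t)\), for all \(\varepsilon >0\) there exists \({\varvec{\mu }}^\varepsilon \in {\mathcal {A}}_{[t,T]}(\mu _t)\) s.t. \(V^{\mathrm {viab}}(t,\mu _t)+\varepsilon \ge \int _t^T d_{{\mathscr {K}}}(\mu ^\varepsilon _s)\,\mathrm{d}s\). Now, defining

$$\begin{aligned} {\hat{\mu }}_s:={\left\{ \begin{array}{ll} \mu _s, &{} \text{ if } s\in [t_0,t],\\ \mu ^\varepsilon _s, &{} \text{ if } s\in (t,T], \end{array}\right. } \end{aligned}$$

we see that \(\varvec{{\hat{\mu }}}\in {\mathcal {A}}_{[t_0,T]}(\mu )\). Thus,

$$\begin{aligned} V^{\mathrm {viab}}(t_0,\mu )\le \int _{t_0}^T d_{{\mathscr {K}}}({\hat{\mu }}_s)\,\mathrm{d}s=\int _{t_0}^t d_{{\mathscr {K}}}(\mu _s)\,\mathrm{d}s+\int _t^T d_{{\mathscr {K}}}(\mu ^\varepsilon _s)\,\mathrm{d}s\le \int _{t_0}^t d_{{\mathscr {K}}}(\mu _s)\,\mathrm{d}s+V^{\mathrm {viab}}(t,\mu _t)+\varepsilon . \end{aligned}$$
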
By passing to the \({{\,\mathrm{inf}\,}}\) on \({\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\), and then letting \(\varepsilon \rightarrow 0^+\), we conclude.

The proof of the second part of the statement is standard and follows straightforwardly from (4.3) (see for instance Prop. 3 in [16]). \(\square \)

We come now to the formulation of a Dynamic Programming Principle for the value function \(V^{\mathrm {inv}}\) whose proof is omitted since it is similar to that of Lemma 4.4.

Lemma 4.5

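(DPP) The function \(V^{\mathrm {inv}}:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow [0,+\infty )\) satisfies

$$\begin{aligned} V^{\mathrm {inv}}(t_0,\mu )=\sup _{\begin{array}{c} {\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\\ t\in [t_0,T] \end{array}}\left\{ \int _{t_0}^t d_{{\mathscr {K}}}(\mu _s)\,\mathrm{d}s+V^{\mathrm {inv}}(t,\mu _t)\right\} . \end{aligned}$$

Furthermore, for any \({\varvec{\mu }}\in {\mathcal {A}}_{[t_0,T]}(\mu )\), the map

$$\begin{aligned} t\mapsto \int _{t_0}^t d_{{\mathscr {K}}}(\mu _s)\,\mathrm{d}s+V^{\mathrm {inv}}(t,\mu _t) \end{aligned}$$

is nonincreasing in \([t_0,T]\), and it is constant if and only if \({\varvec{\mu }}\) is an optimal trajectory.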

As in the classical case, the infinitesimal version of the Dynamic Programming Principle gives rise to a Hamilton–Jacobi–Bellman equation. The next section is devoted to such a Hamilton–Jacobi equation.

Proposition 4.6

Assume \(\varvec{(F_1)-(F_2)}\). The value functions \(V^{\mathrm {viab}}(t,\mu )\) and \(V^{\mathrm {inv}}(t,\mu )\) are uniformly continuous in \(t\in [0,T]\) and Lipschitz continuous in \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\) w.r.t. the \(W_2\)-metric.

Proof

We prove the statement for \(V^{\mathrm {viab}}\), since the proof for \(V^{\mathrm {inv}}\) is analogous. Fix \(t_0\in [0,T]\) and take any \(\mu ^1,\mu ^2\in {\mathscr {P}}_2({\mathbb {R}}^d)\). By Proposition 4.2, there exists an optimal trajectory \(\varvec{{\bar{\mu }}}^2\in {\mathcal {A}}_{[t_0,T]}(\mu ^2)\) starting from \(\mu ^2\). Thus, for any admissible \({\varvec{\mu }}^1\in {\mathcal {A}}_{[t_0,T]}(\mu ^1)\), we have

We can now choose \({\varvec{\mu }}^1\in {\mathcal {A}}_{[t_0,T]}(\mu ^1)\) such that the Grönwall-like inequality of Lemma 3.3 holds, thus getting

We now prove the uniform continuity in time of \(V^{\mathrm {viab}}\). Let \(0\le t_1\le t_2\le T\), \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\) and \({\varvec{\mu }}\in {\mathcal {A}}_{[t_1,T]}(\mu )\) an optimal trajectory. Then by the second part of the statement of Lemma 4.4, noticing that in particular \(g_{{\varvec{\mu }}}(t_1)=V^{\mathrm {viab}}(t_1,\mu )\), we have

By continuity of \(d_{{\mathscr {K}}}(\cdot )\) and of \(t\mapsto \mu _t\) we have the convergence to zero of the right-hand-side as \(t_2\rightarrow t_1\). Reversing the roles of \(t_1\) and \(t_2\) we conclude. \(\square \)

5 Hamilton–Jacobi–Bellman equation

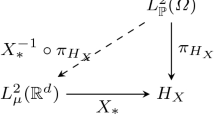

As reported in p. 352 in [11] and at the beginning of Sec. 6.1 in [10], we recall the following crucial fact. Throughout the paper, let \((\Omega ,{\mathcal {B}},{\mathbb {P}})\) be a sufficiently “rich” probability space, i.e., \(\Omega \) is a complete, separable metric space, \({\mathcal {B}}\) is the Borel \(\sigma \)-algebra on \(\Omega \), and \({\mathbb {P}}\) is an atomless Borel probability measure. We use the notation \(L^2_{{\mathbb {P}}}(\Omega )=L^2_{{\mathbb {P}}}(\Omega ;{\mathbb {R}}^d)\). Then, given any \(\mu _1,\mu _2\in {\mathscr {P}}_2({\mathbb {R}}^d)\), there exist \(X_1,X_2\in L^2_{{\mathbb {P}}}(\Omega )\) such that \(\mu _i=X_i\sharp {\mathbb {P}}\), \(i=1,2\), and \(W_2(\mu _1,\mu _2)=\Vert X_1-X_2\Vert _{L^2_{{\mathbb {P}}}}\).

Definition 5.1

- (1) Given a function \(u:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow {\mathbb {R}}\), we define its lift \(U:[0,T]\times L^2_{{\mathbb {P}}}(\Omega )\rightarrow {\mathbb {R}}\) by setting \(U(t,X)=u(t,X\sharp {\mathbb {P}})\) for all \(X\in L^2_{{\mathbb {P}}}(\Omega )\).

- (2) Let \({\mathscr {H}}={\mathscr {H}}(\mu ,p)\) be a Hamiltonian function mapping \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\), \(p\in L^2_\mu ({\mathbb {R}}^d)\) into \({\mathbb {R}}\). We say that the Hamiltonian function \(H:L^2_{{\mathbb {P}}}(\Omega )\times L^2_{{\mathbb {P}}}(\Omega )\rightarrow {\mathbb {R}}\) is a lift of \({\mathscr {H}}\), if \(H(X,p\circ X)={\mathscr {H}}(X\sharp {\mathbb {P}},p)\), for all \(X\in L^2_{{\mathbb {P}}}(\Omega )\), \(p\in L^2_{X\sharp {\mathbb {P}}}({\mathbb {R}}^d)\).
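The defining feature of a lift is that it depends on a random variable X only through its law \(X\sharp {\mathbb {P}}\). The toy sketch below illustrates this on a finite sample space with the uniform probability, which is an assumption made purely for computability (the space used in the paper is atomless); a random variable is then just a list of values, and `law`, `second_moment` and `lift` are hypothetical helper names.

```python
from collections import Counter
from fractions import Fraction


def law(X):
    """Push-forward X#P for the uniform probability P on {0, ..., len(X) - 1}."""
    n = len(X)
    return {value: Fraction(count, n) for value, count in Counter(X).items()}


def second_moment(mu):
    """u(mu) = m_2(mu) for a discrete measure {point: weight}."""
    return sum(weight * x * x for x, weight in mu.items())


def lift(u):
    """Lift U(X) = u(X#P) of a function u defined on discrete measures."""
    return lambda X: u(law(X))
```

Two random variables that are rearrangements of each other have the same law, hence the same lifted value; for \(u=\mathrm {m}_2\) the lift is the squared \(L^2_{{\mathbb {P}}}\) norm of X.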

Definition 5.2

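(Viscosity solution) Let \({\mathscr {H}}\) and H be as in Definition 5.1(2). Given \(\lambda \ge 0\), we consider a first-order HJB equation of the form

$$\begin{aligned} -\partial _t u(t,\mu )+\lambda u(t,\mu )+{\mathscr {H}}(\mu ,D_\mu u(t,\mu ))=0, \end{aligned}$$(5.1)

and its lifted form

$$\begin{aligned} -\partial _t U(t,X)+\lambda U(t,X)+H(X,DU(t,X))=0. \end{aligned}$$(5.2)
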
We say that \(u:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow {\mathbb {R}}\) is a viscosity subsolution (resp. supersolution) of (5.1) in \([0,T)\times {\mathscr {P}}_2({\mathbb {R}}^d)\) if and only if its lift is a viscosity subsolution (resp. supersolution) of (5.2) in \([0,T)\times L^2_{{\mathbb {P}}}(\Omega )\). We recall that \(U:[0,T]\times L^2_{{\mathbb {P}}}(\Omega )\rightarrow {\mathbb {R}}\) is a

- viscosity subsolution of (5.2) if for any test function \(\phi \in C^1([0,T]\times L^2_{{\mathbb {P}}}(\Omega ))\) such that \(U-\phi \) has a local maximum at \((t_0,X_0)\in [0,T)\times L^2_{{\mathbb {P}}}(\Omega )\) it holds \(-\partial _t \phi (t_0,X_0)+\lambda U(t_0,X_0)+H(X_0,D\phi (t_0,X_0))\le 0\);

- viscosity supersolution of (5.2) if for any test function \(\phi \in C^1([0,T]\times L^2_{{\mathbb {P}}}(\Omega ))\) such that \(U-\phi \) has a local minimum at \((t_0,X_0)\in [0,T)\times L^2_{{\mathbb {P}}}(\Omega )\) it holds \(-\partial _t \phi (t_0,X_0)+\lambda U(t_0,X_0)+H(X_0,D\phi (t_0,X_0))\ge 0\);

- viscosity solution of (5.2) if it is both a supersolution and a subsolution.

Remark 5.3

Assume \(u:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow {\mathbb {R}}\) is constant in time, i.e., with slight abuse of notation we can identify \(u(t,\mu )=u(\mu )\) for any \((t,\mu )\in [0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\), with \(u:{\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow {\mathbb {R}}\). Then, (5.1) and (5.2) become, respectively

$$\begin{aligned} \lambda u(\mu )+{\mathscr {H}}(\mu ,D_\mu u(\mu ))=0,\qquad \lambda U(X)+H(X,DU(X))=0, \end{aligned}$$(5.3)

where \(U:L^2_{{\mathbb {P}}}(\Omega )\rightarrow {\mathbb {R}}\) is the lift of u. Moreover, the test functions in Definition 5.2 can be taken independent of t, i.e.,

-

U is a viscosity subsolution of (5.3) if for any test function \(\phi \in C^1(L^2_{{\mathbb {P}}}(\Omega ))\) such that \(U-\phi \) has a local maximum at \(X_0\in L^2_{{\mathbb {P}}}(\Omega )\) it holds \(\lambda U(X_0)+H(X_0,D\phi (X_0))\le 0\);

-

U is a viscosity supersolution of (5.3) if for any test function \(\phi \in C^1(L^2_{{\mathbb {P}}}(\Omega ))\) such that \(U-\phi \) has a local minimum at \(X_0\in L^2_{{\mathbb {P}}}(\Omega )\) it holds \(\lambda U(X_0)+H(X_0,D\phi (X_0))\ge 0\).

-

U is a viscosity solution of (5.3) if it is both a supersolution and a subsolution.

Theorem 5.4

(Comparison principle) Assume that there exist \(L,C>0\) such that the Hamiltonian function \(H:L^2_{{\mathbb {P}}}(\Omega )\times L^2_{{\mathbb {P}}}(\Omega )\rightarrow {\mathbb {R}}\) satisfies the following assumption:

- \(\varvec{(H)}\):

-

for any \(X,Y\in L^2_{{\mathbb {P}}}(\Omega )\), any \(a,b_1,b_2>0\) and \(C_1,C_2\in L^2_{{\mathbb {P}}}(\Omega )\),

$$\begin{aligned} \begin{aligned}&H(Y,a(X-Y)-b_1 Y-C_1)-H(X,a(X-Y)+b_2 X+C_2)\\ {}&\le \Vert X-Y\Vert _{L^2_{{\mathbb {P}}}}+2aL\Vert X-Y\Vert ^2_{L^2_{{\mathbb {P}}}}+\\ {}&\quad +C(1+\mathrm {m}_2^{1/2}(Y\sharp {\mathbb {P}}))\,(1+\Vert Y\Vert _{L^2_{{\mathbb {P}}}})\,(\Vert C_1\Vert _{L^2_{{\mathbb {P}}}}+b_1\Vert Y\Vert _{L^2_{{\mathbb {P}}}})+\\ {}&\quad +C(1+\mathrm {m}_2^{1/2}(X\sharp {\mathbb {P}}))\,(1+\Vert X\Vert _{L^2_{{\mathbb {P}}}})\,(\Vert C_2\Vert _{L^2_{{\mathbb {P}}}}+b_2\Vert X\Vert _{L^2_{{\mathbb {P}}}}). \end{aligned} \end{aligned}$$

Let \(\lambda \ge 0\). Then, if \(u_1,u_2\in UC([0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d))\) are a subsolution and a supersolution of (5.1), respectively, we have

Proof

The proof follows the lines of the corresponding classical finite-dimensional argument (see, e.g., Theorem II.2.12, p. 107 in [6]). In the following, we define \({\mathbb {G}}:={\mathbb {R}}\times L^2_{{\mathbb {P}}}(\Omega )\) and, for any \((t,X)\in {\mathbb {G}}\), we set \(\Vert (t,X)\Vert ^2_{{\mathbb {G}}}:=|t|^2+\Vert X\Vert ^2_{L^2_{{\mathbb {P}}}}\). We denote \({\mathbb {A}}:=[0,T]\times L^2_{{\mathbb {P}}}(\Omega )\subset {\mathbb {G}}\), which is a complete metric space with the distance induced by the norm \(\Vert \cdot \Vert _{{\mathbb {G}}}\) of \({\mathbb {G}}\).

Let \(U_1,U_2:{\mathbb {A}}\rightarrow {\mathbb {R}}\) be, respectively, the lift functionals for \(u_1\) and \(u_2\) as in Definition 5.1(1). We define the functional \(\Phi :{\mathbb {A}}^2\rightarrow {\mathbb {R}}\) by setting

where \(\varepsilon ,\beta ,m,\eta >0\) are positive constants which will be chosen later. Notice that since \(u_i\in UC([0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d))\), \(i=1,2\), we have \(U_i\in UC([0,T]\times L^2_{{\mathbb {P}}}(\Omega ))\). Indeed, for all \(X,Y\in L^2_{{\mathbb {P}}}(\Omega )\), \(t,s\in [0,T]\),

where \(\omega _{u_i}(\cdot )\) is the modulus of continuity of \(u_i\) and where we used the fact that \(W_2(X\sharp {\mathbb {P}},Y\sharp {\mathbb {P}})\le \Vert X-Y\Vert _{L^2_{{\mathbb {P}}}}\). Set

For \(R'>0\), \(i=1,2\), set

by uniform continuity we have

Thus,

for all \((t,X,s,Y)\in {\mathbb {A}}^2\). By (5.5), there exists \({\mathscr {C}}>0\) such that

The proof proceeds by contradiction: assume that there exist \(({\tilde{t}},{\tilde{\mu }})\in [0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\) and \(\delta >0\) such that \(u_1({\tilde{t}},{\tilde{\mu }})-u_2({\tilde{t}},{\tilde{\mu }})=A+\delta \). In particular, for any \({\tilde{X}}\in L^2_{{\mathbb {P}}}(\Omega )\) such that \({\tilde{X}}\sharp {\mathbb {P}}={\tilde{\mu }}\), it holds \(U_1({\tilde{t}},{\tilde{X}})-U_2({\tilde{t}},{\tilde{X}})=A+\delta \).

Select \(\beta ,\eta >0\) such that

Noting that \(\Phi \in C^0({\mathbb {A}}^2)\), by taking \(\varepsilon <\frac{1}{2{\mathscr {C}}}\) and recalling (5.6), we have

for any \(t,s\in [0,T]\). Therefore, there exists \(R>0\) such that

Thus, by Stegall’s Variational Principle (see, e.g., Theorem 6.3.5 in [8]) for any fixed \(\xi >0\), there exists a linear and continuous functional \(\Lambda :{\mathbb {G}}^2\rightarrow {\mathbb {R}}\) with \(\Vert \Lambda \Vert _{{{\mathbb {G}}^2}^*}<\xi \) and such that \(\Phi -\Lambda \) attains a strong maximum in \(([0,T]\times \overline{B_{L^2_{{\mathbb {P}}}}(0,R)})^2\). Moreover, on \(([0,T]\times \overline{B_{L^2_{{\mathbb {P}}}}(0,R)})^2\), we have

and so

Let \(({\bar{t}},{\bar{X}},{\bar{s}},{\bar{Y}})\in ([0,T]\times \overline{B_{L^2_{{\mathbb {P}}}}(0,R)})^2\) be a maximizer of \(\Phi -\Lambda \) on \(([0,T]\times \overline{B_{L^2_{{\mathbb {P}}}}(0,R)})^2\), obtained by choosing \(\xi >0\) s.t. \(2\xi \sqrt{T^2+R^2}\le \frac{\delta }{8}\). In particular, we get

and so

leading to

By choosing \(0<\eta <1\), we get for all \(\varepsilon >0\), \(m\in (0,1]\)

By Riesz’ representation theorem, there exist unique \((\lambda _1,\lambda _2,\lambda _3,\lambda _4)\in {\mathbb {G}}^2\) such that

From (5.7), we have

and so

which leads to

Take \(0<\xi<\varepsilon <1\). From the previous inequality, the boundedness of \(U_1, U_2\) in \([0,T]\times \overline{B_{L^2_{{\mathbb {P}}}}(0,R)}\) gives

for suitable constants \(B',B>0\) independent of \(\varepsilon \).

By uniform continuity of \(U_i\), \(i=1,2\), and by plugging the previous relation in (5.10), we can build a modulus of continuity \(\omega (\cdot )\) such that

We show that neither \({\bar{t}}\) nor \({\bar{s}}\) can be equal to T. Indeed, if \({\bar{t}}=T\),

by definition of A. We thus get a contradiction with (5.8) by choosing \(\varepsilon \) and \(\eta \) small enough s.t. \(\omega _{u_2}(B\sqrt{\varepsilon })+2\eta T<\frac{\delta }{4}\). The same reasoning applies for proving \({\bar{s}}<T\).

We define the \(C^1({\mathbb {A}})\) test functions

Notice that \((U_1-\phi )(t,X)=(\Phi -\Lambda )(t,X,{\bar{s}},{\bar{Y}})\), hence, \(U_1-\phi \) attains its maximum at \(({\bar{t}},{\bar{X}})\in [0,T)\times \overline{B_{L^2_{{\mathbb {P}}}}(0,R)}\) and, similarly, \(U_2-\psi \) attains its minimum at \(({\bar{s}},{\bar{Y}})\in [0,T)\times \overline{B_{L^2_{{\mathbb {P}}}}(0,R)}\). We have

Since \({\bar{t}},{\bar{s}}\in [0,T)\), by definition of viscosity sub/supersolution, we have

Now, by (5.8), we have

and we can choose \(\eta \) sufficiently small so that \(A+\frac{\delta }{4}-2T\eta \ge 0\). Then, we get

We can now invoke assumption \(\varvec{(H)}\) with

recalling that \(\lambda _1,\lambda _3\le \varepsilon \) and \(\Vert \lambda _2\Vert _{L^2_{{\mathbb {P}}}},\Vert \lambda _4\Vert _{L^2_{{\mathbb {P}}}}\le \varepsilon \) by the bound on the dual norm of the operator \(\Lambda \). We get

By (5.11), (5.12) and recalling that \({\bar{X}},{\bar{Y}}\in \overline{B_{L^2_{{\mathbb {P}}}}(0,R)}\), we have

where we defined \(D_R:=D\,(1+R) \,R\), with \(D:=\max \{ C(1+\mathrm {m}_2^{1/2}({\bar{Y}}\sharp {\mathbb {P}})),\,C(1+\mathrm {m}_2^{1/2}({\bar{X}}\sharp {\mathbb {P}}))\}>0\). Finally, by (5.9) we get

where in the last step we choose \(m\le \frac{\eta }{D_R d}\), and o(1) is a function of \(\varepsilon \) going to 0 as \(\varepsilon \rightarrow 0^+\). This leads to a contradiction as \(\varepsilon \rightarrow 0^+\). \(\square \)

Remark 5.5

As highlighted also in Remark 3.8 p. 154 of [6], if \(\lambda =0\) in (5.1), we can drop the symbol of the positive part in (5.4) and conclude that

6 Viscosity characterization of viability and invariance

We now provide the main results of the paper: Theorems 6.6 and 6.7. As pointed out also in Remark 4.2 in [18], by Theorem 8.2.11 in [4], the Hamiltonian \({\mathscr {H}}_F^{\mathrm {viab}}\) defined in Theorem 1.1 satisfies

Definition 6.1

(Lifted Hamiltonian for viability) We define the lifted Hamiltonian in \(L^2_{{\mathbb {P}}}(\Omega )\) associated with \({\mathscr {H}}_F^{\mathrm {viab}}\)

for all \(X,Q\in L^2_{{\mathbb {P}}}(\Omega )\). Note that \(H_F^{\mathrm {viab}}\) is a lift of \({\mathscr {H}}_F^{\mathrm {viab}}\) according to Definition 5.1.

By disintegrating \({\mathbb {P}}=(X\sharp {\mathbb {P}})\otimes {\mathbb {P}}_x\) (see Theorem 2.1), we have

where in the last equality we used Theorem 8.2.11 in [4] (or Theorem 6.31 in [14]).

Definition 6.2

(Lifted Hamiltonian for invariance) For the invariance problem, we define the following lifted Hamiltonian in \(L^2_{{\mathbb {P}}}(\Omega )\), associated with \({\mathscr {H}}_F^{\mathrm {inv}}\),

for all \(X,Q\in L^2_{{\mathbb {P}}}(\Omega )\). Notice that \(H_F^{\mathrm {inv}}\) is a lift of \({\mathscr {H}}_F^{\mathrm {inv}}\) according to Definition 5.1. Moreover, the equivalences (6.1) and (6.2) hold also in this case replacing, respectively, \({\mathscr {H}}_F^{\mathrm {viab}}\), \(H_F^{\mathrm {viab}}\) with \({\mathscr {H}}_F^{\mathrm {inv}}\), \(H_F^{\mathrm {inv}}\), and \({{\,\mathrm{inf}\,}}\) with \(\sup \).

Lemma 6.3

Assume \(\varvec{(F_1)-(F_2)}\). Then, both the Hamiltonian functions \(H_F^{\mathrm {viab}}\) and \(H_F^{\mathrm {inv}}\) satisfy assumption \(\varvec{(H)}\) with L and C, respectively, as in \(\varvec{(F_2)}\) and (3.1).

Proof

We prove here the assertion for \(H_F^{\mathrm {viab}}\) since the assertion for \(H_F^{\mathrm {inv}}\) can be proved in the same way. Fix any \(X,Y\in L^2_{{\mathbb {P}}}\), \(a,b_1,b_2>0\) and \(C_1,C_2\in L^2_{{\mathbb {P}}}\), and denote \(\mu _1:=X\sharp {\mathbb {P}}\), \(\mu _2:=Y\sharp {\mathbb {P}}\). We have

Let \(p\in {\mathbb {R}}^d\). For any \(x,y\in {\mathbb {R}}^d\), define \(\delta _{x,y}:=L(W_2(\mu _1,\mu _2)+|x-y|)\). Given any \(\varepsilon >0\), there exists \(z_{\varepsilon ,p}\in F(\mu _1,x)+\delta _{x,y}\overline{B(0,1)}\) such that

where the first inequality comes from Lipschitz continuity of the set-valued map F. In particular, we can write \(z_{\varepsilon ,p}={\hat{w}}_{\varepsilon ,p}+\delta _{x,y}w_{\varepsilon ,p}\), with \({\hat{w}}_{\varepsilon ,p}\in F(\mu _1,x)\) and \(w_{\varepsilon ,p}\in \overline{B(0,1)}\), thus getting

Hence, we have

Thus, for any \(x,y,c_1, c_2\in {\mathbb {R}}^d\) and by choosing \(p=x-y\), it holds

where we used the Cauchy–Schwarz’s inequality. Integrating with respect to the measure \((X,Y,C_1,C_2)\sharp {\mathbb {P}}\) on the variables \((x,y,c_1,c_2)\) and by (3.1), we get

recalling that \(W_2(X\sharp {\mathbb {P}},Y\sharp {\mathbb {P}})\le \Vert X-Y\Vert _{L^2_{{\mathbb {P}}}}\).

We conclude from (6.3), thanks to the Lipschitz continuity of \(d_{{\mathscr {K}}}(\cdot )\). \(\square \)

Remark 6.4

Assume \(\varvec{(F_1)-(F_2)}\). Let \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\) be fixed. Then, the set of continuous selections of \(F(\mu ,\cdot )\) is dense, with respect to the \(L^2_{\mu }({\mathbb {R}}^d)\) norm, in the set of Borel selections of \(F(\mu ,\cdot )\). Indeed, let \(v(\cdot )\) be a Borel selection of \(F(\mu ,\cdot )\). By Lusin’s Theorem, for any \(\varepsilon >0\) there exist a compact set \(K_\varepsilon \subseteq {\mathbb {R}}^d\) and a continuous map \(w_\varepsilon :{\mathbb {R}}^d\rightarrow {\mathbb {R}}^d\) such that \(v=w_\varepsilon \) on \(K_\varepsilon \) and \(\mu ({\mathbb {R}}^d\setminus K_\varepsilon )<\varepsilon \). By Corollary 9.1.3 in [4], we can extend \(w_{\varepsilon |K_\varepsilon }\) to a continuous selection \(v_\varepsilon \) of \(F(\mu ,\cdot )\). Moreover, we have

Since \(|F(\mu ,x)|\le |F(\delta _0,0)|+L\mathrm {m}^{1/2}_2(\mu )+L|x|\), we have that

and the right hand side tends to 0 as \(\varepsilon \rightarrow 0^+\).

Now, we deduce that the value functions \(V^{\mathrm {viab}}\) and \(V^{\mathrm {inv}}\) satisfy the following Hamilton–Jacobi equations.

Proposition 6.5

Assume \(\varvec{(F_1)-(F_2)}\). Then,

-

(1)

\(V^{\mathrm {viab}}\) is a viscosity solution of

$$\begin{aligned} -\partial _t u(t,\mu )+{\mathscr {H}}_F^{\mathrm {viab}}(\mu ,D_\mu u(t,\mu ))=0;\end{aligned}$$(6.5) -

(2)

\(V^{\mathrm {inv}}\) is a viscosity solution of

$$\begin{aligned} -\partial _t u(t,\mu )+{\mathscr {H}}_F^{\mathrm {inv}}(\mu ,D_\mu u(t,\mu ))=0.\end{aligned}$$(6.6)

Proof

We prove (1). Let \(U:[0,T]\times L^2(\Omega ;{\mathbb {R}}^d)\rightarrow {\mathbb {R}}\) be the lift of \(V^{\mathrm {viab}}\) according to Definition 5.1.

Claim 1

U is a viscosity supersolution of \(-\partial _t U(t,X)+H_F^{\mathrm {viab}}(X,DU(t,X))=0\).

Proof of Claim 1

Let \(\phi :[0,T]\times L^2_{{\mathbb {P}}}(\Omega ;{\mathbb {R}}^d)\rightarrow {\mathbb {R}}\) be a \(C^1\) map such that \(U-\phi \) attains its minimum at (s, X), and define \(\mu =X\sharp {\mathbb {P}}\). Let \({\varvec{\mu }}=\{\mu _t\}_{t\in [s,T]}\) be an optimal trajectory defined on [s, T] with \(\mu _s=\mu \), its existence being assured by Proposition 4.2, and let \({\varvec{\eta }}\in {\mathscr {P}}({\mathbb {R}}^d\times \Gamma _{[s,T]})\) such that \(e_t\sharp {\varvec{\eta }}=\mu _t\) for all \(t\in [s,T]\). Fix \(\varepsilon >0\) and choose a family \(\{Y^\varepsilon _t\}_{t\in [s,T]}\subseteq L^2_{{\mathbb {P}}}(\Omega )\) of random variables satisfying the properties of Corollary A.3 related to \({\varvec{\mu }}\). Then, by the Dynamic Programming Principle in Lemma 4.4 and optimality of \({\varvec{\mu }}\),

where the equality \(U(s,Y^\varepsilon _s)=U(s,X)\) holds since \(Y^\varepsilon _s\sharp {\mathbb {P}}=X\sharp {\mathbb {P}}=\mu \) and since U, as a lift, is law dependent. Therefore, there exists a continuous increasing function \(\varrho :[0,+\infty [\rightarrow [0,+\infty [\) with \(\varrho (k)/k\rightarrow 0\) as \(k\rightarrow 0^+\) such that we have

Dividing by \(t-s>0\), by Corollary A.3(3), we have

By letting \(\varepsilon \rightarrow 0^+\), we obtain

Recalling the boundedness of \(\left\| \dfrac{e_t-e_s}{t-s}\right\| _{L^2_{{\varvec{\eta }}}}\) coming from Proposition 3.4, by letting \(t\rightarrow s^+\), we have

i.e., \(-\partial _t\phi (s,X)+H_F^{\mathrm {viab}}(X,D\phi (s,X))\ge 0\), where, as already discussed, we have

Thus, U is a viscosity supersolution of \(-\partial _t U(t,X)+H_F^{\mathrm {viab}}(X,DU(t,X))=0\).

Claim 2

U is a viscosity subsolution of \(-\partial _t U(t,X)+H_F^{\mathrm {viab}}(X,DU(t,X))=0\).

Proof of Claim 2

Let \(\phi :[0,T]\times L^2_{{\mathbb {P}}}(\Omega ;{\mathbb {R}}^d)\rightarrow {\mathbb {R}}\) be a \(C^1\) map such that \(U-\phi \) attains its maximum at (s, X) and define \(\mu =X\sharp {\mathbb {P}}\). Fix \(\varepsilon >0\), and let \(v_\varepsilon \in L^2_{\mu }({\mathbb {R}}^d)\) be such that \(v_\varepsilon (x)\in F(\mu ,x)\) for \(\mu \)-a.e. \(x\in {\mathbb {R}}^d\) and

By Remark 6.4, we can suppose that \(v_\varepsilon \in C^0\), and by Lemma A.4 there exists an admissible trajectory \(\varvec{\mu ^\varepsilon }=\{\mu ^\varepsilon _t\}_{t\in [s,T]}\) defined on [s, T] with \(\mu ^\varepsilon _s=\mu \), and \(\varvec{\eta ^\varepsilon }\in {\mathscr {P}}({\mathbb {R}}^d\times \Gamma _{[s,T]})\) such that \(e_t\sharp \varvec{\eta ^\varepsilon }=\mu ^\varepsilon _t\) for all \(t\in [s,T]\) and

By density, we can find \({\hat{v}}_\varepsilon \in C^0_b({\mathbb {R}}^d)\) such that \(\Vert v_\varepsilon -{\hat{v}}_\varepsilon \Vert _{L^2_{\mu }}\le \varepsilon \).

Denote by \({\mathscr {V}}_\varepsilon :\Omega \rightarrow {\mathbb {R}}^d\times \Gamma _{[s,T]}\) a Borel map satisfying \(\varvec{\eta ^\varepsilon }={\mathscr {V}}_\varepsilon \sharp {\mathbb {P}}\). Recalling Lemma A.2, since for all \(\varepsilon >0\) we have \(\mu =\mu ^\varepsilon _s=e_s\sharp \varvec{\eta ^\varepsilon }=(e_s\circ {\mathscr {V}}_\varepsilon )\sharp {\mathbb {P}}=X\sharp {\mathbb {P}}\), we can find a sequence of measure-preserving Borel maps \(\{r^\varepsilon _{n}(\cdot )\}_{n\in {\mathbb {N}}}\) such that

and we set \(Y^{\varepsilon ,n}_t=e_t\circ {\mathscr {V}}_\varepsilon \circ r^\varepsilon _{n}\) for all \(t\in [s,T]\). In particular, \(Y^{\varepsilon ,n}_t\sharp {\mathbb {P}}=\mu ^\varepsilon _t\) for all \(t\in [s,T]\). We then have

Recalling the choice of \({\hat{v}}_\varepsilon \), we have also

Since, by Lemma A.2, \(\Vert Y^{\varepsilon ,n}_s-X\Vert _{L^2_{{\mathbb {P}}}}\le \frac{1}{n}\), we can find a subsequence \(\{Y^{\varepsilon ,n_h}_s\}_{h\in {\mathbb {N}}}\) such that for \({\mathbb {P}}\)-a.e. \(\omega \in \Omega \) it holds \(\lim _{h\rightarrow +\infty }Y^{\varepsilon ,n_h}_s(\omega )=X(\omega )\). Therefore,

where we used the Dominated Convergence Theorem to pass to the limit under the integral sign, exploiting the global boundedness of \({\hat{v}}_\varepsilon \).

From the Dynamic Programming Principle, for all \(t\in [s,T]\) we have

Therefore, there exists a continuous increasing function \(\varrho :[0,+\infty [\rightarrow [0,+\infty [\) with \(\varrho (k)/k\rightarrow 0\) as \(k\rightarrow 0^+\) such that we have

Dividing by \(t-s>0\), and recalling the choice of \(v_\varepsilon \), we have

By letting \(h\rightarrow +\infty \) and thanks to (6.7), we have

By letting \(t\rightarrow s^+\) and recalling the boundedness of \(\left\| \dfrac{e_t-e_s}{t-s}\right\| _{L^2_{\varvec{\eta ^\varepsilon }}}\) coming from Proposition 3.4, we have

Finally, letting \(\varepsilon \rightarrow 0^+\) yields

i.e., in view of Definition 6.1, \(-\partial _t\phi (s,X)+H_F^{\mathrm {viab}}(X,D\phi (s,X))\le 0\).

The proof of item (2) is omitted since it is a straightforward adaptation of the argument just provided for item (1). We specify that, in this case, the proofs of the assertions regarding subsolutions and supersolutions are swapped, minimum has to be replaced by maximum and vice versa, the inequality signs are reversed and the signs of the terms involving \(\varrho \) and \(\varepsilon \) need to be changed accordingly. \(\square \)

We finish the section with our main results: a viscosity characterization of viability (Theorem 6.6) and invariance (Theorem 6.7).

Theorem 6.6

(Characterization of viability) Assume \(\varvec{(F_1)-(F_2)}\) and let \(L=\mathrm {Lip}(F)\) and \({\mathscr {H}}_F^{\mathrm {viab}}\) as in Definition 6.1. Consider a \(W_2\)-closed subset \({\mathscr {K}}\subseteq {\mathscr {P}}_2({\mathbb {R}}^d)\). The following are equivalent:

-

(1)

the function \(z:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow {\mathbb {R}}\), defined by \(z(t,\mu ):=d_{{\mathscr {K}}}(\mu )\), is a viscosity supersolution of

$$\begin{aligned} (L+2) u(t,\mu ) +{\mathscr {H}}_F^{\mathrm {viab}}(\mu ,D_\mu u(t,\mu ))=0,\quad \text {in }[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d); \end{aligned}$$(6.8) -

(2)

there exists \(T>0\) such that the function \(w:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow {\mathbb {R}}\), defined by

$$\begin{aligned} w(t,\mu ):=\dfrac{e^{-(L+1)(t-T)}-1}{L+1}d_{{\mathscr {K}}}(\mu ), \end{aligned}$$(6.9)is a viscosity supersolution of

$$\begin{aligned} -\partial _t u(t,\mu )+{\mathscr {H}}_F^{\mathrm {viab}}(\mu ,D_\mu u(t,\mu ))=0,\quad \text {in }[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d); \end{aligned}$$(6.10) -

(3)

\({\mathscr {K}}\) is viable for the dynamics F.

Proof

For any \(T>0\), consider the decreasing function \(\alpha :[0,T]\rightarrow {\mathbb {R}}\) defined as

$$\begin{aligned} \alpha (t):=\dfrac{e^{-(L+1)(t-T)}-1}{L+1}. \end{aligned}$$(6.11)

We denote by \(W(t,X):=w(t,X\sharp {\mathbb {P}})\) the lift of \(w(\cdot )\) according to Definition 5.1(1).
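For the reader's convenience, the elementary properties of the time factor used below can be checked directly. This is a sketch added for illustration, assuming that \(\alpha \) is the time-dependent coefficient of \(d_{{\mathscr {K}}}\) in (6.9), so that \(w(t,\mu )=\alpha (t)\,d_{{\mathscr {K}}}(\mu )\).

```latex
% Assumption: \alpha(t) = (e^{-(L+1)(t-T)} - 1)/(L+1), the coefficient in (6.9).
\begin{aligned}
\alpha '(t) &= -e^{-(L+1)(t-T)} < 0
  &&\text{(so } \alpha \text{ is decreasing on } [0,T]\text{)},\\
\alpha (T) &= 0, \qquad \alpha (t)\ge 0 \ \text{ for all } t\in [0,T],\\
-\alpha '(t) &= (L+1)\,\alpha (t)+1 .
\end{aligned}
```

In particular, \(\partial _t w(t,\mu )=\alpha '(t)\,d_{{\mathscr {K}}}(\mu )\) and \(w(T,\mu )=0\) for every \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\).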

Proof of \((1\Rightarrow 2)\). Let \(d_{{\mathscr {K}}}\) be a supersolution to (6.8) (cf. Remark 5.3). Fix \(t\in [0,T)\), \(\mu \) and \(X \in L^2 _{ {\mathbb {P}}}(\Omega )\). Let \(\Psi :[0,T]\times L^2_{{\mathbb {P}}}(\Omega )\rightarrow {\mathbb {R}}\) be a \(C^1\) test function such that \(W-\Psi \) has a local minimum at (t, X). We want to prove that

Since \(s\mapsto \alpha (s) d_{{\mathscr {K}}}(Y\sharp {\mathbb {P}})=W(s,Y)\) is regular for any \(Y\in L^2_{{\mathbb {P}}}(\Omega )\), then by the minimality we should have

Hence, for all \((s,Y) \in [0,T]\times L^2 _{ {\mathbb {P}}}(\Omega )\) in a small enough neighborhood \(I_{t,X}\) of (t, X),

and \(\varphi , g\) such that

by local minimality of (t, X). By definition of W and (6.13), we get

for any \((s,Y)\in I_{t,X}\). In particular, by choosing \(s=t\), we obtain

with equality holding when \(Y=X\). Thus, denoting with \(\Phi _t:L^2_{{\mathbb {P}}}(\Omega )\rightarrow {\mathbb {R}}\) the function given by

we notice that \(\Phi _t\in C^1(L^2_{{\mathbb {P}}}(\Omega ))\) and that the map \(Y\mapsto d_{{\mathscr {K}}}(Y\sharp {\mathbb {P}})-\Phi _t(Y)\) attains a local minimum in X. Thus, recalling also Remark 5.3, we can employ \(\Phi _t\) as a test function for \(d_{{\mathscr {K}}}\) to get

Notice that by (6.12),

Recalling the definition of the lifted Hamiltonian \(H_F^{\mathrm {viab}}\), by (6.14) we obtain

Multiplying by \(\alpha (t)\), we finally get

thus

which shows that w is a supersolution of (6.10).

Proof of \((2\Rightarrow 3)\). Let \(T>0\) and assume that \(w(t,\mu )=\alpha (t)d_{{\mathscr {K}}}(\mu )\) is a viscosity supersolution of (6.10). We recall that \(H_F^{\mathrm {viab}}\), given in Definition 6.1, satisfies the assumptions of Theorem 5.4 as proved in Lemma 6.3. In particular, if we denote by \(U(t,X):=V^{\mathrm {viab}}(t,X\sharp {\mathbb {P}})\) the lift of the value function of Definition 4.1, we have

Therefore, since both w and \(V^{\mathrm {viab}}\) are uniformly continuous (see Proposition 4.6), by Theorem 5.4 and Proposition 6.5, we have \(U(t,X)\le W(t,X)\) for all \((t,X)\in [0,T]\times L^2_{{\mathbb {P}}}(\Omega )\). Thus, for all \(\mu \in {\mathscr {K}}\) and all \(X\in L^2_{{\mathbb {P}}}(\Omega )\) with \(X\sharp {\mathbb {P}}=\mu \) we obtain \(V^{\mathrm {viab}}(t,\mu )=U(t,X)=W(t,X)=0\) for all \(t\in [0,T]\). By Proposition 4.3, we conclude that there exists an admissible trajectory starting from \(\mu \) and defined on [0, T], which is entirely contained in \({\mathscr {K}}\). So \({\mathscr {K}}\) is viable.

Proof of \((3\Rightarrow 1)\). Assume that \({\mathscr {K}}\) is viable. Set \({\hat{d}}_{{\mathscr {K}}}(Y):=d_{{\mathscr {K}}}(Y\sharp {\mathbb {P}})\) for all \(Y\in L^2_{{\mathbb {P}}}(\Omega )\), i.e., \({\hat{d}}_{{\mathscr {K}}}\) is the lift of \(d_{{\mathscr {K}}}\). Let \(\phi \in C^1(L^2_{{\mathbb {P}}}(\Omega ))\) and \(X\in L^2_{{\mathbb {P}}}(\Omega )\) be such that \({\hat{d}}_{{\mathscr {K}}}-\phi \) has a local minimum at X, and set \(\mu =X\sharp {\mathbb {P}}\in {\mathscr {P}}_2({\mathbb {R}}^d)\).

For any \(\varepsilon >0\) and \(T>0\), there exist \({\bar{\mu }}^\varepsilon \in {\mathscr {K}}\), and \(\varvec{{\bar{\mu }}}^\varepsilon \in {\mathcal {A}}_{[0,T]}({\bar{\mu }}^\varepsilon )\) satisfying \(W_2(\mu ,{\bar{\mu }}^\varepsilon )\le d_{{\mathscr {K}}}(\mu )+\varepsilon \) and \(\varvec{{\bar{\mu }}}^\varepsilon \subseteq {\mathscr {K}}\). By Grönwall’s inequality (Lemma 3.3), there exists \(\varvec{\mu }^\varepsilon \in {\mathcal {A}}_{[0,T]}(\mu )\), \(\varvec{\eta }^\varepsilon \in {\mathscr {P}}({\mathbb {R}}^d\times \Gamma _{[0,T]})\) such that \(\mu ^\varepsilon _t=e_t\sharp \varvec{\eta }^\varepsilon \), and

for all \(t\in [0,T]\).

According to Corollary A.3 applied to \(\varvec{\mu }^\varepsilon \), set

there exists a family \(\{Y^\varepsilon _t\}_{t\in [0,T]}\subseteq L^2_{{\mathbb {P}}}(\Omega )\) satisfying \(Y^\varepsilon _t\sharp {\mathbb {P}}=\mu _t^\varepsilon \) for all \(t\in [0,T]\) and

for any \(p\in L^2_{{\mathbb {P}}}(\Omega )\) (recall that \(\mu =\mu _0=X\sharp {\mathbb {P}}=Y^\varepsilon _0\sharp {\mathbb {P}}\)). According to the choice of X, we have

We estimate the first term as follows

Concerning the right hand side of (6.16), we have that there exists a continuous increasing map \(\varrho :[0,+\infty )\rightarrow [0,+\infty )\) with \(\varrho (r)/r\rightarrow 0\) as \(r\rightarrow 0^+\) such that

where in the third inequality we employed the definition of \(Y_t^\varepsilon \) provided in the proof of Corollary A.3, i.e., \(Y_t^\varepsilon =e_t\circ {\mathscr {W}}_\varepsilon \) for any \(t\in [0,T]\), for some \({\mathscr {W}}_\varepsilon :\Omega \rightarrow {\mathbb {R}}^d\times \Gamma _{[0,T]}\) s.t. \({\mathscr {W}}_\varepsilon \sharp {\mathbb {P}}=\varvec{\eta }^\varepsilon \). Recalling now the uniform boundedness in \(\varepsilon \) of \(\Vert \frac{e_t-e_0}{t}\Vert _{L^2_{\varvec{\eta }^\varepsilon }}\) coming from Proposition 3.4(3), by letting \(\varepsilon \rightarrow 0^+\) and \(t\rightarrow 0^+\), and by setting

we have

This leads to \((L+2)d_{{\mathscr {K}}}(X\sharp {\mathbb {P}})+H_F^{\mathrm {viab}}(X,D\phi (X))\ge 0\), i.e., \(d_{{\mathscr {K}}}(\mu )\) is a supersolution of (6.8). \(\square \)

Theorem 6.7

(Characterization of invariance) Assume \(\varvec{(F_1)-(F_2)}\) and let \(L=\mathrm {Lip}(F)\) and \({\mathscr {H}}_F^{\mathrm {inv}}\) as in Definition 6.2. Consider a \(W_2\)-closed subset \({\mathscr {K}}\subseteq {\mathscr {P}}_2({\mathbb {R}}^d)\). The following are equivalent:

-

(1)

the function \(z:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow {\mathbb {R}}\), defined by \(z(t,\mu ):=d_{{\mathscr {K}}}(\mu )\), is a viscosity supersolution of

$$\begin{aligned} (L+2) u(t,\mu ) +{\mathscr {H}}_F^{\mathrm {inv}}(\mu ,D_\mu u(t,\mu ))=0\quad \text {in }[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d); \end{aligned}$$(6.17) -

(2)

there exists \(T>0\) such that the function \(w:[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d)\rightarrow {\mathbb {R}}\), defined by (6.9), is a viscosity supersolution of

$$\begin{aligned} -\partial _t u(t,\mu )+{\mathscr {H}}_F^{\mathrm {inv}}(\mu ,D_\mu u(t,\mu ))=0\quad \text {in }[0,T]\times {\mathscr {P}}_2({\mathbb {R}}^d); \end{aligned}$$(6.18) -

(3)

\({\mathscr {K}}\) is invariant for the dynamics F.

Proof

For any \(T>0\), consider the decreasing function \(\alpha :[0,T]\rightarrow {\mathbb {R}}\) defined as in (6.11).

We denote by \(W(t,X):=w(t,X\sharp {\mathbb {P}})\) the lift of \(w(\cdot )\) defined in (6.9) according to Definition 5.1(1).

Proof of \((1\Rightarrow 2)\). This part of the proof is the same as the one developed in Theorem 6.6 with \(H_F^{\mathrm {inv}}\) in place of \(H_F^{\mathrm {viab}}\).

Proof of \((2\Rightarrow 3)\). Same as in Theorem 6.6, with \(V^{\mathrm {viab}}\) replaced by \(V^{\mathrm {inv}}\).

Proof of \((3\Rightarrow 1)\). Assume that \({\mathscr {K}}\) is invariant. Set \(\hat{d}_{{\mathscr {K}}}(Y)=d_{{\mathscr {K}}}(Y\sharp {\mathbb {P}})\) for all \(Y\in L^2_{{\mathbb {P}}}(\Omega )\), i.e., \(\hat{d}_{{\mathscr {K}}}\) is the lift of \(d_{{\mathscr {K}}}\). Let \(\phi \in C^1(L^2_{{\mathbb {P}}}(\Omega ))\) and \(X\in L^2_{{\mathbb {P}}}(\Omega )\) be such that \(\hat{d}_{{\mathscr {K}}}-\phi \) has a local minimum at X, and set \(\mu =X\sharp {\mathbb {P}}\in {\mathscr {P}}_2({\mathbb {R}}^d)\).

Fix \(\varepsilon >0\), and let \(v_\varepsilon \in L^2_{\mu }({\mathbb {R}}^d)\) be such that \(v_\varepsilon (x)\in F(\mu ,x)\) for \(\mu \)-a.e. \(x\in {\mathbb {R}}^d\) and

By Remark 6.4, we can suppose that \(v_\varepsilon \in C^0\), and by Lemma A.4 there exists an admissible trajectory \(\varvec{\mu }^\varepsilon =\{\mu ^\varepsilon _t\}_{t\in [0,T]}\) defined on [0, T] with \(\mu ^\varepsilon _0=\mu \), and \(\varvec{\eta }^\varepsilon \in {\mathscr {P}}({\mathbb {R}}^d\times \Gamma _{[0,T]})\) such that \(e_t\sharp \varvec{\eta }^\varepsilon =\mu ^\varepsilon _t\) for all \(t\in [0,T]\) and

By density, we can find \({\hat{v}}_\varepsilon \in C^0_b({\mathbb {R}}^d)\) such that \(\Vert v_\varepsilon -{\hat{v}}_\varepsilon \Vert _{L^2_{\mu }}\le \varepsilon \).

Denote by \({\mathscr {V}}_\varepsilon :\Omega \rightarrow {\mathbb {R}}^d\times \Gamma _{[0,T]}\) a Borel map satisfying \(\varvec{\eta }^\varepsilon ={\mathscr {V}}_\varepsilon \sharp {\mathbb {P}}\). Recalling Lemma A.2, since for all \(\varepsilon >0\) we have \(\mu =\mu ^\varepsilon _0=e_0\sharp \varvec{\eta }^\varepsilon =(e_0\circ {\mathscr {V}}_\varepsilon )\sharp {\mathbb {P}}=X\sharp {\mathbb {P}}\), we can find a sequence of measure-preserving Borel maps \(\{r^\varepsilon _{n}(\cdot )\}_{n\in {\mathbb {N}}}\) such that

and we set \(Y^{\varepsilon ,n}_t=e_t\circ {\mathscr {V}}_\varepsilon \circ r^\varepsilon _{n}\) for all \(t\in [0,T]\). In particular, \(Y^{\varepsilon ,n}_t\sharp {\mathbb {P}}=\mu ^\varepsilon _t\) for all \(t\in [0,T]\). We then have

Recalling the choice of \({\hat{v}}_\varepsilon \), we have also

Since, by Lemma A.2, \(\Vert Y^{\varepsilon ,n}_0-X\Vert _{L^2_{{\mathbb {P}}}}\le \frac{1}{n}\), we can find a subsequence \(\{Y^{\varepsilon ,n_h}_0\}_{h\in {\mathbb {N}}}\) such that for \({\mathbb {P}}\)-a.e. \(\omega \in \Omega \) it holds \(\lim _{h\rightarrow +\infty }Y^{\varepsilon ,n_h}_0(\omega )=X(\omega )\). Therefore,

where we used the Dominated Convergence Theorem to pass to the limit under the integral sign, exploiting the global boundedness of \({\hat{v}}_\varepsilon \).

Now, let \({\bar{\mu }}^{n_h}\in {\mathscr {K}}\) be such that \(W_2(\mu ,{\bar{\mu }}^{n_h})\le d_{{\mathscr {K}}}(\mu )+\dfrac{1}{n_h}\). By Grönwall’s inequality (Lemma 3.3), given \(\varvec{\mu }^\varepsilon \) as before, there exists \(\varvec{{\bar{\mu }}}^{\varepsilon ,n_h}\in {\mathcal {A}}_{[0,T]}({\bar{\mu }}^{n_h})\) such that

for all \(t\in [0,T]\), where we used the fact that \(\varvec{{\bar{\mu }}}^{\varepsilon ,n_h}\subseteq {\mathscr {K}}\) by invariance of the set \({\mathscr {K}}\) and since \({\bar{\mu }}^{\varepsilon ,n_h}_0={\bar{\mu }}^{n_h}\in {\mathscr {K}}\). According to the choice of X, we have

We estimate the first term as follows

Concerning the right hand side of (6.20), we have that there exists a continuous increasing map \(\varrho :[0,+\infty )\rightarrow [0,+\infty )\) with \(\varrho (r)/r\rightarrow 0\) as \(r\rightarrow 0^+\) such that

Recalling the choice of \(v_\varepsilon \), we have

Recalling now the uniform boundedness in \(\varepsilon \) of \(\Vert \frac{e_t-e_0}{t}\Vert _{L^2_{\varvec{\eta }^\varepsilon }}\) coming from Proposition 3.4(3), by letting \(h\rightarrow +\infty \), \(t\rightarrow 0^+\) and \(\varepsilon \rightarrow 0^+\), and by setting

we have, thanks also to (6.19),

Thus, by passing to the limit also in (6.21) and combining that estimate with (6.22), we get

This leads to \((L+2)d_{{\mathscr {K}}}(X\sharp {\mathbb {P}})+H_F^{\mathrm {inv}}(X,D\phi (X))\ge 0\), i.e., \(d_{{\mathscr {K}}}(\mu )\) is a supersolution of (6.17) (cf. Remark 5.3). \(\square \)

7 An example

Given \(\mu \in {\mathscr {P}}_2({\mathbb {R}}^d)\), \(x\in {\mathbb {R}}^d\), \(u\in {\mathbb {R}}\), let \(U=[1/2,3/2]\), \(U'=[-3/2,3/2]\) and define the functions \(f,g:{\mathscr {P}}_2({\mathbb {R}}^d)\times {\mathbb {R}}^d\times {\mathbb {R}}\rightarrow {\mathbb {R}}^d\) as

Define the set-valued maps \(F,G:{\mathscr {P}}_2({\mathbb {R}}^d)\times {\mathbb {R}}^d\rightrightarrows {\mathbb {R}}^d\) as

and the closed set

Notice that F, G satisfy the assumptions \((\varvec{F_1})-(\varvec{F_2})\) and \(G(\mu ,x)\supseteq F(\mu ,x)\). In particular,

We have

Indeed, to prove that \(d_{{\mathscr {K}}}(\mu )\le \mathrm {m}^{1/2}_2(\mu )-1\) for all \(\mu \notin {\mathscr {K}}\), take a \(W_2\)-geodesic \(\{\xi _t\}_{t\in [0,\mathrm {m}^{1/2}_2(\mu )]}\) with constant speed joining \(\delta _0\) to \(\mu \notin {\mathscr {K}}\). We have \(\mathrm {m}^{1/2}_2(\xi _1)=W_2(\delta _0,\xi _1)=1\), and \(W_2(\mu ,\delta _0)=W_2(\mu ,\xi _1)+1\). So \(\xi _1\in {\mathscr {K}}\) and \(d_{{\mathscr {K}}}(\mu )\le \mathrm {m}^{1/2}_2(\mu )-1\). Conversely, fix \(\varepsilon >0\) and let \(\mu _\varepsilon \in {\mathscr {K}}\) be such that \(d_{{\mathscr {K}}}(\mu )\ge W_2(\mu ,\mu _\varepsilon )-\varepsilon \). Then, recalling that \(W_2(\mu _\varepsilon ,\delta _0)\le 1\), we have

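$$\begin{aligned}\mathrm {m}^{1/2}_2(\mu )=W_2(\mu ,\delta _0)\le W_2(\mu ,\mu _\varepsilon )+W_2(\mu _\varepsilon ,\delta _0)\le d_{{\mathscr {K}}}(\mu )+\varepsilon +1.\end{aligned}$$

By letting \(\varepsilon \rightarrow 0^+\), we have the desired inequality.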
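As an informal numerical sanity check of the upper bound (not part of the proof), the following sketch works with an empirical measure \(\mu =\frac{1}{N}\sum _i\delta _{x_i}\) on \({\mathbb {R}}^2\). It assumes, as the argument above suggests, that \({\mathscr {K}}\) is the closed unit \(W_2\)-ball centred at \(\delta _0\); the helper names and the sample are hypothetical choices made only for illustration. Rescaling every atom by \(1/\mathrm {m}^{1/2}_2(\mu )\) gives a measure with unit second moment, and the coupling that pairs each atom with its rescaled copy has cost \(\mathrm {m}^{1/2}_2(\mu )-1\), which bounds \(d_{{\mathscr {K}}}(\mu )\) from above.

```python
import math
import random

def second_moment_root(points):
    """m_2^{1/2}(mu) for the empirical measure with equal weights on `points`."""
    return math.sqrt(sum(x * x + y * y for x, y in points) / len(points))

def radial_projection(points):
    """Rescale the atoms so that the new empirical measure has m_2^{1/2} = 1."""
    m = second_moment_root(points)
    return [(x / m, y / m) for x, y in points]

def pairing_cost(points, targets):
    """L^2 cost of the coupling pairing atom i with target i (an upper bound on W_2)."""
    total = sum((x - u) ** 2 + (y - v) ** 2
                for (x, y), (u, v) in zip(points, targets))
    return math.sqrt(total / len(points))

random.seed(0)  # hypothetical sample, chosen so that mu lies outside the unit ball
mu = [(random.gauss(0.0, 2.0), random.gauss(0.0, 2.0)) for _ in range(1000)]
m = second_moment_root(mu)
nu = radial_projection(mu)
cost = pairing_cost(mu, nu)
print(m, second_moment_root(nu), cost, m - 1.0)
```

The last two printed values agree up to rounding, which mirrors the geodesic argument: the rescaled measure plays the role of \(\xi _1\). The matching lower bound is not tested here, since it follows from the triangle inequality alone.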

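The lift of \(d_{{\mathscr {K}}}(\cdot )\) is the convex function \({\hat{U}}:L^2_{{\mathbb {P}}}(\Omega )\rightarrow {\mathbb {R}}\) defined as

$$\begin{aligned}{\hat{U}}(X):=\max \left\{ 0,\Vert X\Vert _{L^2_{{\mathbb {P}}}}-1\right\} .\end{aligned}$$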

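The function \({\hat{U}}(\cdot )\) is \(C^1\) in the open set \(D:=\{X\in L^2_{{\mathbb {P}}}:\, \Vert X\Vert _{L^2_{{\mathbb {P}}}}\ne 1\}\). Thus, if \(\psi \in C^1(L^2_{{\mathbb {P}}}(\Omega ))\) is such that \({\hat{U}}-\psi \) attains a local minimum at \(X\in D\) then

$$\begin{aligned}D\psi (X)=D{\hat{U}}(X)={\left\{ \begin{array}{ll} 0,&{}\quad \text {if } \Vert X\Vert _{L^2_{{\mathbb {P}}}}<1,\\ \dfrac{X}{\Vert X\Vert _{L^2_{{\mathbb {P}}}}},&{}\quad \text {if } \Vert X\Vert _{L^2_{{\mathbb {P}}}}>1. \end{array}\right. }\end{aligned}$$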

Let \(\psi \in C^1(L^2_{{\mathbb {P}}})\) be such that \({\hat{U}}-\psi \) attains a local minimum at \(X\in L^2_{{\mathbb {P}}}\) with \(\Vert X\Vert _{L^2_{{\mathbb {P}}}}=1\). By Propositions 1.2 and 1.5 in [17], we have that

$$\begin{aligned}D\psi (X)\in \partial {\hat{U}}(X).\end{aligned}$$

Conversely, given \(\xi \in \partial {\hat{U}}(X)\), set \(\psi (Y)={\hat{U}}(X)+\langle \xi ,Y-X\rangle _{L^2_{{\mathbb {P}}}}\). Then, \(\psi \in C^1\), \({\hat{U}}-\psi \) has a minimum at X, and \(D\psi (X)=\xi \).

We want to prove that if \(\Vert X\Vert _{L^2_{{\mathbb {P}}}}=1\), then \(\partial {\hat{U}}(X)=\{\lambda X:\, \lambda \in [0,1]\}\).

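We prove \(\supseteq \). Given \(X,Y\in L^2_{{\mathbb {P}}}\) with \(\Vert X\Vert _{L^2_{{\mathbb {P}}}}=1\), and \(\lambda \in [0,1]\), it holds

$$\begin{aligned}\langle \lambda X,Y-X\rangle _{L^2_{{\mathbb {P}}}}=\lambda \left( \langle X,Y\rangle _{L^2_{{\mathbb {P}}}}-1\right) \le \lambda \left( \Vert Y\Vert _{L^2_{{\mathbb {P}}}}-1\right) \le {\left\{ \begin{array}{ll} 0,&{}\quad \text {if } \Vert Y\Vert _{L^2_{{\mathbb {P}}}}\le 1,\\ \Vert Y\Vert _{L^2_{{\mathbb {P}}}}-1,&{}\quad \text {if } \Vert Y\Vert _{L^2_{{\mathbb {P}}}}>1. \end{array}\right. }\end{aligned}$$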

Thus, in any case \(\langle \lambda X,Y-X\rangle _{L^2_{{\mathbb {P}}}}\le {\hat{U}}(Y)-{\hat{U}}(X)\), proving \(\supseteq \).

Conversely, we prove \(\subseteq \). Let \(X\in L^2_{{\mathbb {P}}}(\Omega )\), \(\Vert X\Vert _{L^2_{{\mathbb {P}}}}=1\), so \({\hat{U}}(X)=0\). Assume that \(\xi =\lambda X+\hat{\lambda } Z\in \partial {\hat{U}}(X)\), with \(\Vert Z\Vert _{L^2_{{\mathbb {P}}}}=1\), \(\langle Z,X\rangle _{L^2_{{\mathbb {P}}}}=0\) and \(\lambda ,\hat{\lambda }\in {\mathbb {R}}\). We want to prove that \(\lambda \in [0,1]\) and \(\hat{\lambda }=0\). Indeed, for all \(Y\in L^2_{{\mathbb {P}}}(\Omega )\) it holds

$$\begin{aligned}\langle \lambda X+\hat{\lambda } Z,Y-X\rangle _{L^2_{{\mathbb {P}}}}\le {\hat{U}}(Y)-{\hat{U}}(X)={\hat{U}}(Y).\end{aligned}$$

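By taking \(Y=aX+bZ\), we have \({\hat{U}}(Y)=\max \{0,\sqrt{|a|^2+|b|^2}-1\}\), and so

$$\begin{aligned}\lambda (a-1)+\hat{\lambda }\, b\le \max \left\{ 0,\sqrt{|a|^2+|b|^2}-1\right\} \quad \text {for all } a,b\in {\mathbb {R}}.\end{aligned}$$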

- Choosing \((a,b)=(2,0)\) leads to \(\lambda \le 1\). Choosing \((a,b)=(1/2,0)\) leads to \(\lambda \ge 0\). Therefore, \(0\le \lambda \le 1\).

- Choose \(a=1\). Then for all \(b>0\), we have \(\dfrac{\sqrt{1+b^2}-1}{b}\ge \hat{\lambda }\), and by passing to the limit as \(b\rightarrow 0^+\) we have \(0\ge \hat{\lambda }\). For all \(b<0\), we have \(\dfrac{\sqrt{1+b^2}-1}{b}\le \hat{\lambda }\), and by passing to the limit as \(b\rightarrow 0^-\) we have \(0\le \hat{\lambda }\). Therefore, \(\hat{\lambda }=0\).
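The two choices above can also be explored numerically on the plane spanned by \(X\) and \(Z\). The sketch below is only an illustration: it assumes the reduced inequality \(\lambda (a-1)+\hat{\lambda }b\le \max \{0,\sqrt{a^2+b^2}-1\}\) obtained by testing the subgradient inequality on \(Y=aX+bZ\), and the function names, grid and tolerance are hypothetical choices. A grid search cannot prove that a pair \((\lambda ,\hat{\lambda })\) is admissible, but it does exhibit explicit violations when it is not.

```python
import math

def u_hat(a, b):
    """Lift of the distance function restricted to Y = a X + b Z, X and Z orthonormal."""
    return max(0.0, math.hypot(a, b) - 1.0)

def is_subgradient(lam, lam_hat, half_width=3.0, steps=121, tol=1e-12):
    """Test lam*(a-1) + lam_hat*b <= u_hat(a, b) on a uniform grid of (a, b)."""
    for i in range(steps):
        a = -half_width + 2.0 * half_width * i / (steps - 1)
        for j in range(steps):
            b = -half_width + 2.0 * half_width * j / (steps - 1)
            if lam * (a - 1.0) + lam_hat * b > u_hat(a, b) + tol:
                return False  # found a point (a, b) violating the inequality
    return True

# Candidates of the form lambda * X with lambda in [0, 1]
print(is_subgradient(0.0, 0.0), is_subgradient(0.5, 0.0), is_subgradient(1.0, 0.0))
# Candidates with lambda outside [0, 1] or with a nonzero component along Z
print(is_subgradient(1.2, 0.0), is_subgradient(-0.1, 0.0), is_subgradient(0.5, 0.1))
```

On this grid the first line reports no violation for \(\lambda \in \{0,1/2,1\}\) with \(\hat{\lambda }=0\), while each candidate on the second line is rejected, at \((a,b)=(2,0)\), at \((a,b)=(1/2,0)\) and at a point with \(a=1\) and small \(b>0\), respectively.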

We prove now that \({\mathscr {K}}\) is invariant for the dynamics F. Thanks to Theorem 6.7, we have to prove that for every \(\psi \in C^1(L^2_{{\mathbb {P}}})\) such that \({\hat{U}}-\psi \) attains a local minimum at \(X\in L^2_{{\mathbb {P}}}\) it holds

$$\begin{aligned}(L+2)d_{{\mathscr {K}}}(X\sharp {\mathbb {P}})+H^{\mathrm {inv}}_F(X,D\psi (X))\ge 0.\end{aligned}$$

We distinguish two cases:

- when \(\Vert X\Vert _{L^2_{{\mathbb {P}}}}<1\), we have \(d_{{\mathscr {K}}}(X\sharp {\mathbb {P}})=0\) and \(D\psi (X)=0\), which implies \(H^{\mathrm {inv}}_F(X,D\psi (X))=0\), so the equation is trivially satisfied.

- when \(\Vert X\Vert _{L^2_{{\mathbb {P}}}}\ge 1\), we have \(d_{{\mathscr {K}}}(X\sharp {\mathbb {P}})=\Vert X\Vert _{L^2_{{\mathbb {P}}}}-1\) and \(D\psi (X)=\lambda \dfrac{X}{\Vert X\Vert _{L^2_{{\mathbb {P}}}}}\), with \(\lambda =1\) if \(\Vert X\Vert _{L^2_{{\mathbb {P}}}}> 1\), and \(\lambda \in [0,1]\) otherwise, which implies

$$\begin{aligned} H^{\mathrm {inv}}_F(X,D\psi (X))=&1-\Vert X\Vert _{L^2_{{\mathbb {P}}}}-\dfrac{1}{2}\lambda \int _{\Omega }\arctan (1-\Vert X\Vert _{L^2_{{\mathbb {P}}}})e^{-|X(\omega )|^2}|X(\omega )|^2\,\mathrm{d}{\mathbb {P}}(\omega )\\ \ge&1-\Vert X\Vert _{L^2_{{\mathbb {P}}}}, \end{aligned}$$

So, also in this case, we have

$$\begin{aligned}(L+2)d_{{\mathscr {K}}}(X\sharp {\mathbb {P}})+H^{\mathrm {inv}}_F(X,D\psi (X))\ge (L+2)(\Vert X\Vert _{L^2_{{\mathbb {P}}}}-1)+1-\Vert X\Vert _{L^2_{{\mathbb {P}}}}\ge 0,\end{aligned}$$

from which we get the invariance, and thus the viability, of the set \({\mathscr {K}}\) for the dynamics F. Since all the admissible trajectories for F are also admissible for G, we have that \({\mathscr {K}}\) is viable for G.

We prove now that \({\mathscr {K}}\) is not invariant for G. Indeed, take \(X\in L^2_{{\mathbb {P}}}(\Omega )\) with \(\Vert X\Vert _{L^2_{{\mathbb {P}}}}=1\). Then, we can consider \(\psi \in C^1(L^2_{{\mathbb {P}}}(\Omega ))\) such that \(\psi (Y)=\Vert Y\Vert _{L^2_{{\mathbb {P}}}}\) in a neighborhood V of X. Given \(Y\in V\), we have \({\hat{U}}(Y)-\psi (Y)=-1\) if \(\Vert Y\Vert _{L^2_{{\mathbb {P}}}}\ge 1\) and \({\hat{U}}(Y)-\psi (Y)=-\Vert Y\Vert _{L^2_{{\mathbb {P}}}}\ge -1\) if \(\Vert Y\Vert _{L^2_{{\mathbb {P}}}}<1\). In particular, \({\hat{U}}(X)-\psi (X)=-1\), so \({\hat{U}}-\psi \) attains in V a minimum at X, and \(D\psi (X)=X\). Set

we obtain (recalling that \(\Vert X\Vert _{L^2_{{\mathbb {P}}}}=1\))

Thus,

and therefore \({\hat{U}}(\cdot )\) is not a supersolution of the invariance equation.

On the other hand, set (see Definition 6.1)

For every \(v\in L^2_{{\mathbb {P}}}(\Omega )\) with \(v(\cdot )\in F(Y\sharp {\mathbb {P}},Y(\cdot ))\subseteq G(Y\sharp {\mathbb {P}},Y(\cdot ))\), it holds

and by taking the supremum in the right-hand side over the set

we obtain

and therefore for every \(\psi \in C^1(L^2_{{\mathbb {P}}})\) such that \({\hat{U}}-\psi \) attains a local minimum at \(X\in L^2_{{\mathbb {P}}}\) it holds

Thus, \({\mathscr {K}}\) is viable for G, as already noticed.

References

G. Albi, N. Bellomo, L. Fermo, S.-Y. Ha, J. Kim, L. Pareschi, D. Poyato, and J. Soler, Vehicular traffic, crowds, and swarms: From kinetic theory and multiscale methods to applications and research perspectives, Mathematical Models and Methods in Applied Sciences 29 (2019), no. 10, 1901–2005, https://doi.org/10.1142/S0218202519500374.

Luigi Ambrosio, Nicola Gigli, and Giuseppe Savaré, Gradient flows in metric spaces and in the space of probability measures, 2nd ed., Lectures in Mathematics ETH Zürich, Birkhäuser Verlag, Basel, 2008.

Luigi Ambrosio and Bernd Kirchheim, Rectifiable sets in metric and Banach spaces, Math. Ann. 318 (2000), no. 3, 527–555, https://doi.org/10.1007/s002080000122.

Jean-Pierre Aubin and Hélène Frankowska, Set-valued analysis, Modern Birkhäuser Classics, Birkhäuser Boston Inc., Boston, MA, 2009. Reprint of the 1990 edition.

Yurii Averboukh, Viability theorem for deterministic mean field type control systems, Set-Valued Var. Anal. 26 (2018), no. 4, 993–1008.

Martino Bardi and Italo Capuzzo-Dolcetta, Optimal control and viscosity solutions of Hamilton-Jacobi-Bellman equations, Systems & Control: Foundations & Applications, Birkhäuser Boston Inc., Boston, MA, 1997. With appendices by Maurizio Falcone and Pierpaolo Soravia.

Alain Bensoussan, Jens Frehse, and Phillip Yam, Mean field games and mean field type control theory, SpringerBriefs in Mathematics, Springer, New York, 2013.

Jonathan M. Borwein and Qiji J. Zhu, Techniques of variational analysis, CMS Books in Mathematics/Ouvrages de Mathématiques de la SMC, vol. 20, Springer-Verlag, New York, 2005.

Rainer Buckdahn, Shige Peng, Marc Quincampoix, and Catherine Rainer, Existence of stochastic control under state constraints, C. R. Acad. Sci. Paris Sér. I Math. 327 (1998), no. 1, 17–22.

Pierre Cardaliaguet, Notes on Mean Field Games, 2013. From P.-L. Lions’ lectures at Collège de France.

René Carmona and François Delarue, Probabilistic Theory of Mean Field Games with Applications, vol I, Probability Theory and Stochastic Modeling, vol. 84, Springer International Publishing, 2018.

G. Cavagnari, S. Lisini, C. Orrieri, and G. Savaré, Lagrangian, Eulerian and Kantorovich formulations of multi-agent optimal control problems: equivalence and Gamma-convergence, Preprint: arXiv:2011.07117 [math.OC].

Giulia Cavagnari, Antonio Marigonda, and Benedetto Piccoli, Superposition principle for differential inclusions, Large-scale scientific computing, Lecture Notes in Comput. Sci., vol. 10665, Springer, Cham, 2018, pp. 201–209.

Francis Clarke, Functional analysis, calculus of variations and optimal control, Graduate Texts in Mathematics, vol. 264, Springer, London, 2013.

M. Fornasier, S. Lisini, C. Orrieri, and G. Savaré, Mean-field optimal control as Gamma-limit of finite agent controls, European J. Appl. Math. 30 (2019), no. 6, 1153–1186, https://doi.org/10.1017/s0956792519000044.

Chloé Jimenez, Antonio Marigonda, and Marc Quincampoix, Optimal Control of Multiagent Systems in the Wasserstein Space, Calculus of Variations and Partial Differential Equations 59 (2020), https://doi.org/10.1007/s00526-020-1718-6.

Alexander Kruger, On Fréchet Subdifferentials, Journal of Mathematical Sciences 116 (2003), no. 3, 3325–3358, https://doi.org/10.1023/A:1023673105317.

Antonio Marigonda and Marc Quincampoix, Mayer control problem with probabilistic uncertainty on initial positions, J. Differential Equations 264 (2018), no. 5, 3212–3252.

Nikolay Pogodaev, Optimal control of continuity equations, NoDEA Nonlinear Differential Equations Appl. 23 (2016), no. 2, Art. 21, 24, https://doi.org/10.1007/s00030-016-0357-2.

Acknowledgements

G.C. thanks LMBA, Univ Brest, where this research started. G.C. is also indebted to the University of Pavia where this research has been partially carried out, in particular G.C. has been supported by Cariplo Foundation and Regione Lombardia via project Variational Evolution Problems and Optimal Transport, and by MIUR PRIN 2015 project Calculus of Variations, together with FAR funds of the Department of Mathematics of the University of Pavia. G.C. thanks also the support of the INdAM-GNAMPA Project 2019 Optimal transport for dynamics with interaction (“Trasporto ottimo per dinamiche con interazione”). M.Q. benefited from the support of the FMJH Program Gaspard Monge in optimization and operation research (PGMO 2016-1570H) and of the Air Force Office of Scientific Research under Award Number FA9550-18-1-0254.

Funding

Open access funding provided by Politecnico di Milano within the CRUI-CARE Agreement.

Appendix A: Essential technical results

Here, we report the proofs of the preliminary results presented in Sect. 3, as well as other technical results that are used extensively in the proofs of the main propositions and theorems of the present paper. In our opinion, these results may also be of independent interest.

A.1 Proof of Proposition 3.4

Let \({\varvec{\mu }}=\{\mu _t\}_{t\in [a,b]}\) be an admissible trajectory defined in [a, b]. According to the superposition principle (Theorem 8.2.1 in [2] or Theorem 1 in [16]), there exists \({\varvec{\eta }}\in {\mathscr {P}}({\mathbb {R}}^d\times \Gamma _{[a,b]})\) such that \(\mu _t=e_t\sharp {\varvec{\eta }}\) for all \(t\in [a,b]\) and, for \({\varvec{\eta }}\)-a.e. \((x,\gamma )\), \({\dot{\gamma }}(t)\in F(\mu _t,\gamma (t))\), \(\gamma (a)=x\).

Set \(|F(\mu _s,x)|=\max \{|y|:\,y\in F(\mu _s,x)\}\). For \({\varvec{\eta }}\)-a.e. \((x,\gamma )\in {\mathbb {R}}^d\times \Gamma _{[a,b]}\), we have

Grönwall’s inequality yields

where

By taking the \(L^2_{{\varvec{\eta }}}\)-norm, \(\Vert e_t-e_s\Vert _{L^2_{{\varvec{\eta }}}}\le g(t,s,\mathrm {m}^{1/2}_2(\mu _s))\), and so

By continuity, the right-hand side tends to \(K+2L \mathrm {m}^{1/2}_2(\mu _s)\) as \(t\rightarrow s^+\), and so \(t\mapsto \dfrac{e_t-e_s}{t-s}\) is uniformly bounded in \(L^2_{{\varvec{\eta }}}\) in a right neighborhood of s.

Let \(p\in {\mathbb {R}}^d\), \(t\in ]s,b]\). For \({\varvec{\eta }}\)-a.e. \((x,\gamma )\in {\mathbb {R}}^d\times \Gamma _{[a,b]}\), reasoning as in the first part of the proof of Lemma 6.3, we have

Therefore,

By Filippov’s theorem (Theorem 8.2.10 in [4]), there exists a Borel map \(w:{\mathbb {R}}^d\times \Gamma _{[a,b]}\rightarrow {\mathbb {R}}^d\), satisfying \(w(x,\gamma )\in F(\mu _s,\gamma (s))\) for \({\varvec{\eta }}\)-a.e. \((x,\gamma )\in {\mathbb {R}}^d\times \Gamma _{[a,b]}\) such that

Thus,

A.2 Proof of Proposition 3.5

For a proof of the nonemptiness of the set \({\mathcal {A}}_{[a,b]}(\mu )\), we refer the reader to Theorem 1 in [16], where the authors perform a fixed point argument.

Let \(\{{\varvec{\mu }}^{(n)}\}_{n\in {\mathbb {N}}}\subseteq {\mathcal {A}}_{[a,b]}(\mu _0)\). By Proposition 3.4(2), for any \(n\in {\mathbb {N}}\) there exists \(\varvec{\eta }^{(n)}\in {\mathscr {P}}({\mathbb {R}}^d\times \Gamma _{[a,b]})\) such that \(e_t\sharp \varvec{\eta }^{(n)}=\mu _t^{(n)}\) for all \(t\in [a,b]\). Moreover, for \(s\in [a,b]\) with \(s<t\), we have

Notice that \(W_2(\mu _t^{(n)},\mu _s^{(n)})\le \Vert e_t-e_s\Vert _{L^2_{\varvec{\eta }^{(n)}}}\). Indeed, it suffices to consider the admissible plan \(\sigma :=(e_t,e_s)\sharp \varvec{\eta }^{(n)}\in \Pi (\mu ^{(n)}_t,\mu ^{(n)}_s)\). Thus,