Abstract

Materials and machines are often designed with particular goals in mind, so that they exhibit desired responses to given forces or constraints. Here we explore an alternative approach, namely physical coupled learning. In this paradigm, the system is not initially designed to accomplish a task, but physically adapts to applied forces to develop the ability to perform the task. Crucially, we require coupled learning to be facilitated by physically plausible learning rules, meaning that learning requires only local responses and no explicit information about the desired functionality. We show that such local learning rules can be derived for any physical network, whether in equilibrium or in steady state, with specific focus on two particular systems, namely disordered flow networks and elastic networks. By applying and adapting advances in statistical learning theory to the physical world, we demonstrate the plausibility of new classes of smart metamaterials capable of adapting to users’ needs in situ.

- Received 10 November 2020

- Revised 2 April 2021

- Accepted 5 April 2021

DOI: https://doi.org/10.1103/PhysRevX.11.021045

Published by the American Physical Society under the terms of the Creative Commons Attribution 4.0 International license. Further distribution of this work must maintain attribution to the author(s) and the published article’s title, journal citation, and DOI.

Popular Summary

The design of physical materials or machines to have particular properties or functionalities often requires detailed knowledge about microscopic aspects of the system and a solution to the “inverse problem” of how to modify those aspects to achieve desired properties. Machine learning offers one way to solve such inverse problems. For example, a neural network learns by observing examples, while the computer adjusts the network's microscopic properties by penalizing incorrect performance. While such frameworks have proven extremely powerful for computer-based learning, they cannot be applied directly to physical materials without knowing and manipulating microscopic details. Inspired by advances in neuroscience and machine learning, we propose learning rules that could enable us to “teach” physical networks desired functions by showing them examples while they modify their microscopic properties on their own.

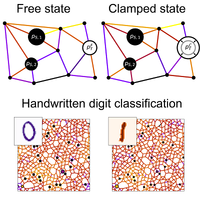

Systems such as elastic spring networks or flow networks could potentially be designed to implement a general class of physically plausible “coupled learning rules.” We show numerically how such networks can be taught by example to learn complex user-defined functions, such as the classification of handwritten digits. We further discuss limitations for applying the coupled learning rules experimentally and consider how they can be overcome.

Our work clarifies how physically plausible learning rules can be used to create learning machines that can autonomously adapt to external influence and gain desired functionality. Such machines are essentially rudimentary brains, composed of simple mechanical parts. Adaptable “learning machines” are expected to be particularly useful if desired physical properties or functionalities are not known in advance or need to adapt to changing circumstances.