Abstract

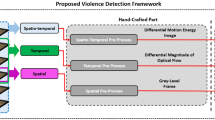

Surveillance cameras are now deployed in many public places to monitor human activity, and detecting violence in video through automatic analysis is significant for law enforcement. Most monitoring systems, however, still require operators to identify violent scenes manually, which leads to slow response. Violence detection is also a challenging problem because violence is so broadly defined. In this work, we focus on physical violence involving two or more persons. We propose a novel method that uses an automated mobile neural architecture search network and convolutional long short-term memory to extract spatiotemporal features from video. Two types of pooling layers, max pooling and average pooling, are then added to capture richer features. The features are standard-scaled, and their dimensionality is reduced with linear discriminant analysis to remove redundant features and allow the classifiers to work well in a low-dimensional space. For classification, we trained and tested several machine learning models: random forest, support vector machine (SVM), and K-nearest neighbor classifiers. We also built a combined dataset of violent and non-violent scenes drawn from three public datasets: hockey, movie, and violent flow. The proposed method is evaluated in terms of detection accuracy on the combined dataset and on each of the three benchmark datasets. With the SVM classifier, our model achieved accuracies of 97.5%, 100%, and 96% on the combined, movie, and violent flow datasets, respectively; on the hockey dataset, the best result, 99.3%, was obtained with the random forest classifier.
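The pooling and scaling steps described above can be illustrated with a minimal sketch. It is not the authors' implementation: the toy activations, the function names `pool_features` and `standard_scale`, and the choice of global pooling per channel with population standard deviation are all assumptions made for illustration. The deep feature extractor, the linear discriminant analysis step, and the classifiers are omitted.

```python
from statistics import fmean, pstdev


def pool_features(feature_maps):
    """Concatenate global max and global average pooling per channel.

    feature_maps: list of channels, each a flat list of activations
    (hypothetical stand-in for one clip's spatiotemporal feature maps).
    """
    max_part = [max(channel) for channel in feature_maps]
    avg_part = [fmean(channel) for channel in feature_maps]
    return max_part + avg_part


def standard_scale(rows):
    """Scale each column to zero mean and unit variance.

    Uses the population standard deviation (an assumption);
    constant columns are mapped to 0 to avoid division by zero.
    """
    columns = list(zip(*rows))
    means = [fmean(col) for col in columns]
    stds = [pstdev(col) or 1.0 for col in columns]
    return [
        [(value - m) / s for value, m, s in zip(row, means, stds)]
        for row in rows
    ]


# Toy data: three clips, two channels each, four activations per channel.
clips = [
    [[0.0, 1.0, 2.0, 3.0], [4.0, 4.0, 4.0, 4.0]],
    [[2.0, 2.0, 6.0, 6.0], [0.0, 8.0, 0.0, 8.0]],
    [[1.0, 1.0, 1.0, 9.0], [2.0, 2.0, 6.0, 6.0]],
]

pooled = [pool_features(clip) for clip in clips]  # one feature vector per clip
scaled = standard_scale(pooled)                   # input to a later reduction step

for row in scaled:
    print([round(value, 3) for value in row])
```

Each clip yields a vector twice as long as its channel count, because the max-pooled and average-pooled values are kept side by side. In the pipeline the abstract describes, the scaled vectors would then be passed to linear discriminant analysis and on to a classifier.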

Acknowledgements

The authors are grateful for the technical and financial support of the Deanship of Scientific Research (DSR) at King Abdulaziz University. This project was funded by the DSR, King Abdulaziz University, Jeddah, under Grant No. DF-421-165-1441.

Cite this article

Jahlan, H.M.B., Elrefaei, L.A. Mobile Neural Architecture Search Network and Convolutional Long Short-Term Memory-Based Deep Features Toward Detecting Violence from Video. Arab J Sci Eng 46, 8549–8563 (2021). https://doi.org/10.1007/s13369-021-05589-5

DOI: https://doi.org/10.1007/s13369-021-05589-5