Abstract

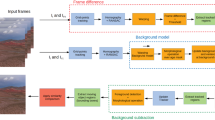

This paper presents a moving object detection method based on a fast background subtraction technique suitable for real-time operation on a wide range of platforms. An intermittent background update using adaptive blocks calculates the learning rate of each block individually from expected difference values. Coupled with a fast background subtraction process, the design achieves high throughput with well-rounded detection performance. To compensate for the lagging effects of the intermittent background update, an adaptation bias is devised to improve precision and recall. Experiments show versatile performance across varying scenes, with overall results better than those of conventional techniques. The proposed method achieved execution speeds of up to 56 fps on a PC with Full HD video. At the minimum input resolution of 320 × 240, it achieved 655 fps on a PC and 83 fps on an ARM core-based embedded platform. Overall, it is suitable for real-time applications.
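The abstract only outlines the pipeline, so the following is a minimal sketch under stated assumptions, not the authors' algorithm. It assumes grayscale frames stored as NumPy arrays, a running-average background model, a fixed update period, and a hypothetical per-block learning rate alpha = alpha_max · exp(−E/τ), where E is the block's mean absolute difference from the background (used here as a stand-in for the paper's expected difference value). The adaptation bias is not modeled. All names (`block_learning_rates`, `IntermittentBackgroundSubtractor`, `period`, `tau`, `alpha_max`) are hypothetical.

```python
import numpy as np


def block_learning_rates(frame, background, block=16, alpha_max=0.05, tau=20.0):
    """Per-pixel map of per-block learning rates (hypothetical rule).

    E is the block's mean absolute difference from the background, a stand-in
    for the paper's expected difference value; alpha = alpha_max * exp(-E / tau),
    so blocks that differ strongly (likely foreground) are absorbed more slowly.
    """
    h, w = frame.shape
    diff = np.abs(frame.astype(np.float32) - background)
    # Pad to a whole number of blocks; padded pixels repeat the edge values.
    diff = np.pad(diff, ((0, -h % block), (0, -w % block)), mode="edge")
    hb, wb = diff.shape[0] // block, diff.shape[1] // block
    # E per block: mean over each block x block tile.
    e = diff.reshape(hb, block, wb, block).mean(axis=(1, 3))
    alpha = alpha_max * np.exp(-e / tau)
    # Broadcast each block's rate to its pixels and crop away the padding.
    return alpha.repeat(block, axis=0).repeat(block, axis=1)[:h, :w]


class IntermittentBackgroundSubtractor:
    """Running-average background refreshed every `period` frames (assumed scheme)."""

    def __init__(self, first_frame, period=4, threshold=25.0, block=16):
        self.bg = first_frame.astype(np.float32)
        self.period = period
        self.threshold = threshold
        self.block = block
        self.count = 0

    def apply(self, frame):
        f = frame.astype(np.float32)
        # Fast subtraction: one absolute difference and one comparison per pixel.
        mask = np.abs(f - self.bg) > self.threshold
        self.count += 1
        # Intermittent update: block statistics are computed only every `period` frames.
        if self.count % self.period == 0:
            alpha = block_learning_rates(f, self.bg, self.block)
            self.bg += alpha * (f - self.bg)
        return mask


# Usage: a flat background with a bright 16 x 16 "object".
bg = np.full((48, 64), 100, dtype=np.uint8)
frame = bg.copy()
frame[16:32, 16:32] = 200
sub = IntermittentBackgroundSubtractor(bg, period=2)
print(sub.apply(frame).sum())  # 256 foreground pixels
```

Updating only every `period` frames is what keeps the per-frame cost low in this sketch: most frames need just one absolute difference and one comparison per pixel, while the block statistics are computed intermittently. The price is that the model lags behind scene changes, which is the effect the paper's adaptation bias is intended to offset.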

Acknowledgements

This work was supported by Kwangwoon University and by the MISP Korea under the National Program for Excellence in SW (2017-0-00096) supervised by IITP.

About this article

Cite this article

Montero, V.J., Jung, WY. & Jeong, YJ. Fast background subtraction with adaptive block learning using expectation value suitable for real-time moving object detection. J Real-Time Image Proc 18, 967–981 (2021). https://doi.org/10.1007/s11554-020-01058-8

DOI: https://doi.org/10.1007/s11554-020-01058-8