Abstract

In this paper, we develop statistical inference procedures for the functional quadratic quantile regression model, in which the response is a scalar and the predictor is a random function defined on a compact subset of \(\mathbb{R}\). The functional coefficients are estimated by functional principal components, and the asymptotic properties of the resulting estimators are established under mild conditions. To test the significance of the nonlinear term in the model, we propose a rank score test procedure and establish the asymptotic properties of the proposed test statistic. The proposed method provides a highly efficient and robust alternative to the least squares method, and can be conveniently implemented using existing R software packages. Finally, we examine the finite-sample performance of the proposed method by Monte Carlo simulation studies and illustrate it with a real data example.

References

Aneiros G, Vieu P (2015) Partial linear modelling with multi-functional covariates. Comput Stat 30:1–25

Cai T, Hall P (2006) Prediction in functional linear regression. Ann Stat 34:2159–2179

Cai T, Yuan M (2012) Minimax and adaptive prediction for functional linear regression. J Am Stat Assoc 107:1201–1216

Cardot H, Ferraty F, Sarda P (2003) Spline estimators for the functional linear model. Stat Sin 13:571–591

Cardot H, Crambes C, Sarda P (2005) Quantile regression when the covariates are functions. Nonparametr Stat 17(7):841–856

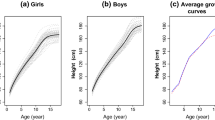

Chen K, Müller H (2012) Conditional quantile analysis when covariates are functions, with application to growth data. J R Stat Soc Ser B 74:67–89

Ferraty F, Vieu P (2006) Nonparametric functional data analysis: theory and practice. Springer, New York

Hall P, Horowitz JL (2007) Methodology and convergence rates for functional linear regression. Ann Stat 35:70–91

He X, Shi PD (1996) Bivariate tensor-product B-spline in a partly linear model. J Multivar Anal 58:162–181

He X, Zhu ZY, Fung WK (2002) Estimation in a semiparametric model for longitudinal data with unspecified dependence structure. Biometrika 89:579–590

Horváth L, Kokoszka P (2012) Inference for functional data with applications. Springer, New York

Horváth L, Reeder R (2013) A test of significance in functional quadratic regression. Bernoulli 19:2120–2151

Huang L, Wang H, Zheng A (2014) The M-estimator for functional linear regression model. Stat Probab Lett 88:165–173

Huang L, Wang H et al (2015) Sieve M-estimator for semi-functional linear model. Sci China Math 58:2421–2434

Huang L, Zhao J, Wang H, Wang S (2016) Robust shrinkage estimation and selection for functional multiple linear model through LAD loss. Comput Stat Data Anal 103:384–400

Kato K (2012) Estimation in functional linear quantile regression. Ann Stat 40:3108–3136

Kong D, Staicu A, Maity A (2013) Classical testing in functional linear models. N C State Univ Dept Stat Tech Rep 2647:1–23

Li M, Wang KH, Maity A, Staicu A (2016) Inference in functional linear quantile regression. arXiv preprint arXiv:1602.08793

Lu Y, Du J, Sun Z (2014) Functional partially linear quantile regression model. Metrika 77:317–332

Ramsay JO, Silverman BW (1997) Functional data analysis. Springer series in statistics. Springer, Berlin

Ramsay JO, Silverman BW (2005) Functional data analysis, 2nd edn. Springer, New York

Riesz F, Nagy BS (1955) Functional analysis. Dover Publications, New York

Wang H, Zhu ZY, Zhou JH (2009) Quantile regression in partially linear varying coefficient models. Ann Stat 37:3841–3866

Wei Y, He X (2006) Conditional growth charts (with discussions). Ann Stat 34:2069–2097

Newey WK (1997) Convergence rates and asymptotic normality for series estimators. J Econom 79:147–168

Yao F, Müller HG (2010) Functional quadratic regression. Biometrika 97:49–64

Yu P, Du J, Zhang ZZ (2017) Varying-coefficient partially functional linear quantile regression models. J Korean Stat Soc 46:462–475

Yuan M, Cai TT (2010) A reproducing kernel Hilbert space approach to functional linear regression. Ann Stat 38:3412–3444

Acknowledgements

The authors would like to thank the editor and the two referees for their important comments and suggestions, which led to the improvement of an earlier version of this paper.

Xie’s work is supported by the Science and Technology Project of Beijing Municipal Education Commission (KM201710005032, KM201910005015) and the National Natural Science Foundation of China (Nos. 11571340, 11971045). Zhang’s work is partly supported by the National Natural Science Foundation of China (Nos. 11271039, 11771032), and Education Ministry Funds for Doctor Supervisors (20131103110027).

Appendix

In this section, we present the proofs of the results stated in Sect. 3. In what follows, for two sequences of positive numbers \(a_n\) and \(b_n\), \(a_n\lesssim b_n\) means that \(a_n/b_n\) is uniformly bounded, and \(a_n \asymp b_n\) means that \(a_n \lesssim b_n\) and \(b_n\lesssim a_n\). Let C denote a positive constant whose value may differ from place to place. First, we give several lemmas that are useful for proving the main results.

Lemma 1

Let \(\eta _1\) and \(\eta _n\) be the smallest and largest eigenvalues of \(\frac{1}{n}{\varvec{X}}{\varvec{X}}^T.\) Under conditions C1–C6, we have \( \eta _1=\hat{\lambda }_m, \eta _n=\hat{\lambda }_1. \)

Lemma 2

Let \(\tau _1\) and \(\tau _n\) be the smallest and largest eigenvalues of \(\frac{1}{n}{\varvec{Z}}{\varvec{Z}}^T.\) Under conditions C1–C6, we have \( \tau _1=\lambda _m^2+o_p(1), \tau _n=\lambda _1^2+o_p(1). \)

The proofs of Lemmas 1 and 2 are similar to the proof of Lemma 2 in Yu et al. (2017).

Lemma 3

Let

Under conditions C1–C4 and \(C6'\), we have \( \Vert R_{i}\Vert ^2=O_p(\delta _n^2), \) where

Proof of Lemma 3

Invoking the fact \(\Vert \phi _{j}-{\hat{\phi }}_{j}\Vert ^2=O_p(n^{-1}j^2)\) and (4)–(6), one has

where

and

First, we consider \(R_{i1}.\)

Next, we consider \(R_{i2}\).

Thus \(R_{i21}=O_p\left( \sum _{k=1}^m\sum _{l=1}^m \varphi ^2_{kl} \frac{k^2}{n}\right) \). Similarly, \(R_{i22}=R_{i23}=O_p\left( \sum _{k=1}^m \sum _{l=m+1}^\infty {\varphi }^2_{kl}\right) ,\) and \(R_{i24}=O_p\left( \sum _{k=m+1}^\infty \sum _{l=m+1}^\infty {\varphi }^2_{kl}\right) .\) Therefore, we have

According to the convergence rates of \(R_{i1}\) and \(R_{i2}\), one has \(\Vert R_{i}\Vert ^2=O_{p}\left( \delta _n^2\right) .\) \(\square \)

Lemma 4

Under \(H_0\) and conditions C1–C5 and \(C6'\), we have

where \( {\varvec{V}}_n^*=(1-\tau )\tau {\varvec{H}}^T {\varvec{H}}. \)

The proof of Lemma 4 is similar to that of He et al. (2002).

Lemma 5

Under \(H_0\) and conditions C1–C5 and \(C6'\), we have

where \( {\varvec{S}}_n^*=(I-{\varvec{P}}){\varvec{Z}} {\varvec{\psi }}({\varvec{\varepsilon }}). \)

The proof of Lemma 5 is similar to that of Theorem 4.1 in Wei and He (2006).

Proof of Theorem 1

Denote \({\varvec{P}}_Z= {\varvec{Z}}({\varvec{Z}}^T{\varvec{Z}})^{-1}{\varvec{Z}}^T, {\varvec{X}}^*=({\varvec{I}}-{\varvec{P}}_Z){\varvec{X}}\), \({\varvec{X}}^* =({\varvec{X}}^*_1,\dots ,{\varvec{X}}^*_n)^T\), \({\varvec{S}}_n={{\varvec{X}}^*}^T{\varvec{X}}^*\), \({\varvec{\beta }}=(\beta _1,\dots ,\beta _m)^T\), \({\varvec{\gamma }}=\text {vech}(\{\varphi _{kl}(2-\delta _{kl}),1\le k \le l\le m \}^T).\)
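To make the role of the factor \((2-\delta _{kl})\) in \({\varvec{\gamma }}\) concrete, the following Python sketch checks numerically that, for a symmetric coefficient array \(\varphi _{kl}\), the full double sum \(\sum _{k,l}\varphi _{kl}\xi _k\xi _l\) equals the inner product of \({\varvec{\gamma }}\) with the products \(\xi _k\xi _l\), \(k\le l\). The helper names, the numerical values, and the reading of \(\delta _{kl}\) as the Kronecker delta and of \(\xi _k\) as principal component scores are assumptions made for illustration only; the ordering of the stacked pairs is a convention and may differ from the one used in the paper.

```python
from itertools import combinations_with_replacement


def vech_weighted(phi):
    """Stack phi[k][l] * (2 - delta_kl) over index pairs k <= l."""
    m = len(phi)
    return [phi[k][l] * (1 if k == l else 2)
            for k, l in combinations_with_replacement(range(m), 2)]


def quadratic_features(xi):
    """Products xi_k * xi_l over the same pairs k <= l, in the same order."""
    m = len(xi)
    return [xi[k] * xi[l]
            for k, l in combinations_with_replacement(range(m), 2)]


def full_double_sum(phi, xi):
    """Direct evaluation of sum over all (k, l) of phi_kl * xi_k * xi_l."""
    m = len(xi)
    return sum(phi[k][l] * xi[k] * xi[l] for k in range(m) for l in range(m))


# Illustrative symmetric coefficients and scores (made-up numbers).
phi = [[1.0, 0.5, -0.2],
       [0.5, 2.0, 0.3],
       [-0.2, 0.3, 0.7]]
xi = [0.4, -1.1, 2.0]

gamma = vech_weighted(phi)          # length m(m+1)/2
z = quadratic_features(xi)          # matching quadratic regressors
reduced = sum(g * f for g, f in zip(gamma, z))
direct = full_double_sum(phi, xi)
print(len(gamma), abs(reduced - direct) < 1e-12)
```

Doubling the off-diagonal entries lets the quadratic part be written with only \(m(m+1)/2\) regressors instead of \(m^2\), which is why the symmetric coefficient enters the finite-dimensional problem through \({\varvec{\gamma }}\).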

Let

where \({\varvec{H}}_{m}={\varvec{Z}}^{T}{\varvec{Z}}\). Let \(\hat{{\varvec{\theta }}}={\varvec{\theta }}({\hat{\mu }},\hat{{\varvec{\beta }}},\hat{{\varvec{\gamma }}})=({\hat{\theta }}_1,\hat{{\varvec{\theta }}}_{1}^{T},\hat{{\varvec{\theta }}}_{2}^{T})^{T}.\) Note that the subscript 0 denotes the true value of a parameter; for simplicity, we omit it where no confusion can arise. We now show that \(\Vert \hat{{\varvec{\theta }}}\Vert =O_{p}\left( \frac{1}{\lambda _m}\right) \). To do so, we standardize \(\tilde{{\varvec{X}}}_{i}={\varvec{S}}_n^{-\frac{1}{2}} {\varvec{X}}_i^*\), \(\tilde{{\varvec{Z}}}_{i}={\varvec{H}}_m^{-\frac{1}{2}} {\varvec{Z}}_i\), and let \(R_i\) be as defined in Lemma 3. Then one has

which is minimized at \(\hat{{\varvec{\theta }}}\).

Invoking Lemmas 1 and 2 and using arguments similar to those in Lemma 1 of Cardot et al. (2005), for any \(\kappa >0\) there exists \(L_{\kappa }\) such that

On the other hand, we have

Thus, we have

Combining Eq. (16), one has

Thus, \(\Vert \hat{{\varvec{\theta }}}\Vert =O_{p}\left( \frac{1}{\lambda _m}\right) .\) By the definition of \({\varvec{\theta }}\), we have \(\Vert \hat{\mu }-\mu _0\Vert =O_{p}\left( {\frac{\lambda _m}{\sqrt{n}}} \right) .\)

Combining Lemma 1 and the definition of \(\hat{{\varvec{\theta }}}\), one has

Now, we consider the convergence rate of the proposed estimators \({\hat{\beta }}(t)\) and \({\hat{\varphi }}(t,s).\)

We first consider \(R_{n1}\). Since the sequence \(\{{\hat{\phi }}_j\}\) forms an orthonormal basis of \(L^2([0, 1])\), one has

Next we consider \(R_{n2}\) and \(R_{n3}\),

and

Therefore, one has

Note that

Firstly, we consider \(T_{n1}.\)

For \(T_{n11},\) by the orthogonality of \(\{\hat{\phi }_{k}\},\) we have

For \(T_{n12},\) according to the Cauchy–Schwarz inequality, we have

Similarly to \(T_{n12},\) we have \(T_{n13}=O_p\left( \frac{m^3}{n} \right) .\) Therefore, we conclude that

Via some elementary but tedious algebra, we can conclude that

Thus, we have

Next we show the asymptotic normality of \(\hat{ \theta }_1\). Let \( \theta _{1}^{*}=\frac{1}{f(0)\sqrt{n}} \sum _{i=1}^{n} \psi _{\tau }(\varepsilon _{i})\). According to the Lindeberg–Feller theorem, \(\theta _{1}^{*}\) is asymptotically normal with variance \(\frac{\tau (1-\tau )}{f^{2}(0)}\).
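As a numerical sanity check on this variance, the following Python sketch simulates errors whose \(\tau \)th quantile is zero and compares the empirical variance of \(\theta _{1}^{*}\) with \(\frac{\tau (1-\tau )}{f^{2}(0)}\). It assumes the usual quantile score \(\psi _{\tau }(u)=\tau -I(u<0)\) and shifted standard normal errors; the sample size, number of replications, seed, and function names are illustrative choices, not settings taken from the paper.

```python
import math
import random
from statistics import NormalDist, pvariance


def psi(u, tau):
    """Quantile score function psi_tau(u) = tau - I(u < 0) (assumed form)."""
    return tau - (1.0 if u < 0 else 0.0)


def theta_star(eps, tau, f0):
    """Normalized score sum: sum_i psi_tau(eps_i) / (f(0) * sqrt(n))."""
    n = len(eps)
    return sum(psi(e, tau) for e in eps) / (f0 * math.sqrt(n))


tau = 0.25
std_normal = NormalDist()
q_tau = std_normal.inv_cdf(tau)   # tau-quantile of N(0, 1)
f0 = std_normal.pdf(q_tau)        # density at 0 of the shifted error N(0, 1) - q_tau

rng = random.Random(2020)         # fixed seed so the run is reproducible
n, reps = 200, 2000
draws = []
for _ in range(reps):
    # Shift the errors so that their tau-th quantile is exactly zero.
    eps = [rng.gauss(0.0, 1.0) - q_tau for _ in range(n)]
    draws.append(theta_star(eps, tau, f0))

empirical = pvariance(draws)
theoretical = tau * (1 - tau) / f0 ** 2
print(round(empirical, 3), round(theoretical, 3))
```

Because \(\psi _{\tau }(\varepsilon _i)\) takes the values \(\tau \) and \(\tau -1\) with probabilities \(1-\tau \) and \(\tau \), its mean is zero and its variance is \(\tau (1-\tau )\), so the two printed numbers should agree up to Monte Carlo error.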

On the other hand, following arguments similar to those used in He and Shi (1996), we can establish that \(\Vert \theta _{1}^{*}-\hat{ \theta }_{1}\Vert =o_{p}(1).\) Combining this, we conclude that

This completes the proof of Theorem 1. \(\square \)

Proof of Theorem 2

We first define

By the central limit theorem, we have

where \(A_n\) is a \(q\times m\) matrix such that \(A_nA^T_n\rightarrow G\), and G is a \(q\times q\) nonnegative definite symmetric matrix. By the definition of the chi-squared distribution, one has

Combining (17) with the continuous mapping theorem, one has

According to Lemmas 4 and 5, we have

Combining this with (18), we can conclude that

\(\square \)

Cite this article

Shi, G., Xie, T. & Zhang, Z. Statistical inference for the functional quadratic quantile regression model. Metrika 83, 937–960 (2020). https://doi.org/10.1007/s00184-020-00763-5

DOI: https://doi.org/10.1007/s00184-020-00763-5