Abstract

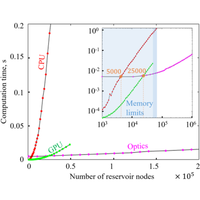

Reservoir computing is a relatively recent computational paradigm that originates from recurrent neural networks and is known for its wide range of implementations using different physical technologies. Large reservoirs are very hard to realize on conventional computers, as both the computational complexity and the memory usage grow quadratically with the number of nodes. We propose an optical scheme that performs reservoir computing over very large networks, potentially able to host several million fully connected photonic nodes thanks to its intrinsic parallelism and scalability. Our experimental studies confirm that, in contrast to conventional computers, the computation time of our optical scheme depends only linearly on the number of photonic nodes of the network. This linear dependence is due to electronic overheads, while the optical part of the computation remains fully parallel and independent of the reservoir size. To demonstrate the scalability of our optical scheme, we perform, for the first time, predictions on large spatiotemporal chaotic datasets obtained from the Kuramoto-Sivashinsky equation using optical reservoirs with up to 50 000 optical nodes. Such results are extremely challenging to obtain on conventional von Neumann machines, and they significantly advance the state of the art of unconventional reservoir computing approaches in general.

- Received 24 January 2020

- Revised 4 September 2020

- Accepted 5 October 2020

DOI: https://doi.org/10.1103/PhysRevX.10.041037

Published by the American Physical Society under the terms of the Creative Commons Attribution 4.0 International license. Further distribution of this work must maintain attribution to the author(s) and the published article’s title, journal citation, and DOI.

Popular Summary

Machine learning and artificial neural networks—algorithms that tackle complex problems by mimicking how the brain processes data—have started to revolutionize society. But learning about and predicting large, complex natural phenomena remains a major challenge hindered by the limits of conventional computing technologies in realizing large neural networks. Light-based technologies, however, present a promising alternative to scale up neural networks. Here, we present an example of such an implementation to solve complex problems, namely, predicting the outcome of spatiotemporal chaotic behavior.

A popular approach to realizing large and efficient neural networks is known as “reservoir computing,” a technique that maps input signals to a higher-dimensional computational space. We propose an optical scheme that performs reservoir computing over very large networks of up to one million fully connected photonic nodes. Our experimental studies confirm that the computation time of this scheme grows only linearly with the number of photonic nodes, in contrast to conventional computers, whose computation time grows quadratically. To demonstrate the scalability of our optical scheme, we perform, for the first time, predictions on large spatiotemporal nonlinear chaotic systems close to instabilities.
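To make the quadratic cost on a conventional computer concrete, the following is a minimal software sketch of a fully connected reservoir. It is not the optical scheme described here. The `tanh` nonlinearity, the Gaussian random weights, the ridge-regression readout, and all function names are assumptions chosen for illustration. The point is only that a dense reservoir of N nodes stores an N × N weight matrix and multiplies by it at every time step.

```python
import numpy as np


def make_reservoir(n_nodes, n_inputs, seed=0):
    """Draw fixed random weights (illustrative choice: Gaussian entries)."""
    rng = np.random.default_rng(seed)
    # Dense node-to-node coupling: n_nodes * n_nodes numbers to store.
    w_res = rng.normal(0.0, 1.0 / np.sqrt(n_nodes), size=(n_nodes, n_nodes))
    w_in = rng.normal(0.0, 1.0, size=(n_nodes, n_inputs))
    return w_res, w_in


def run_reservoir(w_res, w_in, inputs):
    """Map an input sequence of shape (T, n_inputs) to states of shape (T, n_nodes)."""
    n_nodes = w_res.shape[0]
    state = np.zeros(n_nodes)
    states = np.empty((len(inputs), n_nodes))
    for t, u in enumerate(inputs):
        # The matrix-vector product costs O(n_nodes**2) operations per step.
        state = np.tanh(w_res @ state + w_in @ u)
        states[t] = state
    return states


def train_readout(states, targets, ridge=1e-6):
    """Fit a linear readout by ridge regression; only this layer is trained."""
    n_nodes = states.shape[1]
    gram = states.T @ states + ridge * np.eye(n_nodes)
    return np.linalg.solve(gram, states.T @ targets)


def dense_weight_count(n_nodes):
    """Number of reservoir weights a conventional computer must store."""
    return n_nodes * n_nodes
```

In this sketch, doubling the number of nodes quadruples `dense_weight_count`, which is the growth in memory and per-step computation that makes very large reservoirs impractical in software.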

We believe that this work will pave the way toward neurologically based technologies that can outperform current systems in terms of size, scalability, connectivity, power consumption, and ease of training.