Abstract

Minimax lower bounds determine the complexity of a given statistical problem by providing a fundamental limit on the performance of any procedure. This paper reviews various aspects of obtaining minimax lower bounds, focusing on recent developments. We first introduce classical methods; we then present more involved lower bound constructions, such as testing two mixtures, the two-directional method, and the global metric entropy method, with various examples including manifold learning, approximation sets and neural nets. In addition, we consider two different types of restrictions on the set of estimators: lower bounds when the estimators are required to be linear, and a private version of minimax lower bounds.

References

Assouad, P. (1983). Deux remarques sur l’estimation. Comptes Rendus de l’Académie des Sciences, Paris, Série I Mathématique, 296, 1021–1024.

Bickel, P. J., & Ritov, Y. (1988). Estimating integrated squared density derivatives: Sharp best order of convergence estimates. Sankhyā: The Indian Journal of Statistics, Series A (1961–2002), 50(3), 381–393. http://www.jstor.org/stable/25050710

Cai, T. T., Jin, J., & Low, M. G. (2007). Estimation and confidence sets for sparse normal mixtures. The Annals of Statistics, 35(6), 2421–2449. https://doi.org/10.1214/009053607000000334.

Cai, T. T., Liu, W., & Zhou, H. H. (2016). Estimating sparse precision matrix: Optimal rates of convergence and adaptive estimation. The Annals of Statistics, 44(2), 455–488. https://doi.org/10.1214/13-AOS1171.

Cai, T. T., & Low, M. G. (2004). Minimax estimation of linear functionals over nonconvex parameter spaces. The Annals of Statistics, 32(2), 552–576. https://doi.org/10.1214/009053604000000094.

Cai, T. T., & Low, M. G. (2005). Nonquadratic estimators of a quadratic functional. The Annals of Statistics, 33(6), 2930–2956. https://doi.org/10.1214/009053605000000147.

Cai, T. T., & Low, M. G. (2015). A framework for estimation of convex functions. Statistica Sinica, 25, 423–456.

Cai, T. T., & Zhou, H. H. (2012). Optimal rates of convergence for sparse covariance matrix estimation. The Annals of Statistics, 40(5), 2389–2420. https://doi.org/10.1214/12-AOS998.

Collier, O., Comminges, L., & Tsybakov, A. B. (2017). Minimax estimation of linear and quadratic functionals on sparsity classes. The Annals of Statistics, 45(3), 923–958. https://doi.org/10.1214/15-AOS1432.

Cover, T. M., & Thomas, J. A. (2006). Elements of Information Theory. New York: Wiley.

Cule, M., Samworth, R., & Stewart, M. (2010). Maximum likelihood estimation of a multi-dimensional log-concave density. Journal of the Royal Statistical Society: Series B (Statistical Methodology), 72(5), 545–607. https://doi.org/10.1111/j.1467-9868.2010.00753.x.

Donoho, D. L., & Johnstone, I. M. (1994). Minimax risk over \(l_p\) balls and \(l_q\) error. Probability Theory and Related Fields, 99, 277–303.

Donoho, D. L., & Johnstone, I. M. (1998). Minimax estimation via wavelet shrinkage. The Annals of Statistics, 26, 879–921.

Donoho, D. L., & Liu, R. C. (1991a). Geometrizing rates of convergence, II. The Annals of Statistics, 19(2), 633–667. https://doi.org/10.1214/aos/1176348114.

Donoho, D. L., & Liu, R. C. (1991b). Geometrizing rates of convergence, III. The Annals of Statistics, 19(2), 668–701. https://doi.org/10.1214/aos/1176348115.

Donoho, D. L., Liu, R. C., & MacGibbon, B. (1990). Minimax risk over hyperrectangles, and implications. The Annals of Statistics, 18(3), 1416–1437.

Donoho, D. L., & Nussbaum, M. (1990). Minimax quadratic estimation of a quadratic functional. Journal of Complexity, 6(3), 290–323. http://www.sciencedirect.com/science/article/pii/0885064X90900259

Duchi, J. C., Jordan, M. I., & Wainwright, M. J. (2018). Minimax optimal procedures for locally private estimation. Journal of the American Statistical Association, 113(521), 182–215.

Duchi, J. C., & Ruan, F. (2018). The right complexity measure in locally private estimation: It is not the Fisher information. arXiv:1806.05756

Dwork, C., & Lei, J. (2009). Differential privacy and robust statistics. In: Proceedings of the Forty-First Annual ACM Symposium on the Theory of Computing (pp. 371–380)

Dwork, C., McSherry, F., Nissim, K., & Smith, A. (2006). Calibrating noise to sensitivity in private data analysis. In S. Halevi & T. Rabin (Eds.), Theory of Cryptography (pp. 265–284). Berlin, Heidelberg: Springer.

Fan, J. (1991a). On the estimation of quadratic functionals. The Annals of Statistics, 19, 1273–1294.

Fan, J. (1991b). On the optimal rates of convergence for nonparametric deconvolution problems. The Annals of Statistics, 19(3), 1257–1272. https://doi.org/10.1214/aos/1176348248.

Fano, R. M. (1961). Transmission of Information: A Statistical Theory of Communication. New York: M.I.T Press and Cambridge and Wiley.

Gao, C., Ma, Z., Ren, Z., & Zhou, H. H. (2015). Minimax estimation in sparse canonical correlation analysis. The Annals of Statistics, 43(5), 2168–2197. https://doi.org/10.1214/15-AOS1332.

Genovese, C. R., Perone-Pacifico, M., Verdinelli, I., & Wasserman, L. (2012). Manifold estimation and singular deconvolution under Hausdorff loss. The Annals of Statistics, 40(2), 941–963. https://doi.org/10.1214/12-AOS994.

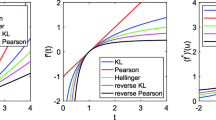

Guntuboyina, A. (2011). Lower bounds for the minimax risk using f-divergences, and applications. IEEE Transactions on Information Theory, 57(4), 2386–2399.

Haff, L. R., Kim, P. T., Koo, J.-Y., & Richards, D. S. P. (2011). Minimax estimation for mixtures of Wishart distributions. The Annals of Statistics, 39(6), 3417–3440. https://doi.org/10.1214/11-AOS951.

Ibragimov, I., & Has’minskii, R. (1984). Nonparametric estimation of the value of a linear functional in Gaussian white noise. Theory of Probability & Its Applications, 29, 18–32.

Kim, A. K. H. (2014). Minimax bounds for estimation of normal mixtures. Bernoulli, 20, 1802–1818.

Kim, A. K. H., & Samworth, R. J. (2016). Global rates of convergence in log-concave density estimation. The Annals of Statistics, 44(6), 2756–2779. https://doi.org/10.1214/16-AOS1480.

Kim, A. K. H., & Zhou, H. H. (2015). Tight minimax rates for manifold estimation under Hausdorff loss. Electronic Journal of Statistics, 9(1), 1562–1582. https://doi.org/10.1214/15-EJS1039.

Klusowski, J. M., & Barron, A. R. (2017). Minimax lower bounds for ridge combinations including neural nets. In: 2017 IEEE International Symposium on Information Theory (ISIT) (pp. 1376–1380)

Le Cam, L. (1973). Convergence of estimates under dimensionality restrictions. The Annals of Statistics, 1, 38–53.

Le Cam, L. M. (1986). Asymptotic Methods in Statistical Decision Theory. New York: Springer.

Lehmann, E. L., & Casella, G. (1998). Theory of Point Estimation (Springer Texts in Statistics), 2nd Edn. New York: Springer.

Loh, P.-L. (2017). On lower bounds for statistical learning theory. Entropy, 19, 617–643.

Pinsker, M. S. (1980). Optimal filtering of square-integrable signals in Gaussian noise. Problems of Information Transmission, 16(2), 120–133.

Raimondo, M. (1998). Minimax estimation of sharp change points. The Annals of Statistics, 26(4), 1379–1397.

Raskutti, G., Wainwright, M. J., & Yu, B. (2011). Minimax rates of estimation for high-dimensional linear regression over \(\ell _{q}\) -balls. IEEE Transactions on Information Theory, 57(10), 6976–6994.

Sadhanala, V., Wang, Y.-X., & Tibshirani, R. J. (2016). Total variation classes beyond 1d: Minimax rates, and the limitations of linear smoothers. In: Proceedings of the 30th International Conference on Neural Information Processing Systems. NIPS’16 (pp. 3521–3529). Curran Associates Inc. http://dl.acm.org/citation.cfm?id=3157382.3157491

Tao, M., Wang, Y., & Zhou, H. H. (2013). Optimal sparse volatility matrix estimation for high-dimensional Itô processes with measurement errors. The Annals of Statistics, 41(4), 1816–1864.

Tsybakov, A. B. (2009). Introduction to Nonparametric Estimation. New York: Springer.

Wainwright, M. J. (2014). Constrained forms of statistical minimax: Computation, communication and privacy. In: Proceedings of the International Congress of Mathematicians

Walther, G. (2002). Detecting the presence of mixing with multiscale maximum likelihood. Journal of the American Statistical Association, 97(458), 508–513. https://doi.org/10.1198/016214502760047032.

Wasserman, L., & Zhou, S. (2010). A statistical framework for differential privacy. Journal of the American Statistical Association, 105(489), 375–389. https://doi.org/10.1198/jasa.2009.tm08651.

Yang, Y., & Barron, A. R. (1999). Information-theoretic determination of minimax rates of convergence. The Annals of Statistics, 27, 1564–1599.

Yu, B. (1997). Assouad, Fano, and Le Cam. In D. Pollard, E. Torgersen, & G. L. Yang (Eds.), A Festschrift for Lucien Le Cam (pp. 423–435). New York: Springer.

Zhu, Y., Chatterjee, S., Duchi, J., & Lafferty, J. (2016). Local minimax complexity of stochastic convex optimization. In: Proceedings of the 30th International Conference on Neural Information Processing Systems. NIPS’16 (pp. 3431–3439). Curran Associates Inc. http://dl.acm.org/citation.cfm?id=3157382.3157481

Acknowledgements

The author is very grateful to the anonymous reviewer for his/her constructive feedback, which significantly improved the quality of the paper. Arlene K. H. Kim’s work is supported by National Research Foundation of Korea (NRF) grant 2017R1C1B5017344 and by a Korea University Grant K1922101.

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix A: Supplementary materials

Lemma Appendix A.1

(Lemma 4 of Cai and Zhou, 2012) Let \({\bar{{{\mathbb {P}}}}}_m = \sum _{i=1}^m w_i {{\mathbb {P}}}_i\) and \({\bar{{{\mathbb {Q}}}}}_m = \sum _{i=1}^m w_i {{\mathbb {Q}}}_i\) where \(w_i \ge 0\) and \(\sum _{i=1}^m w_i = 1\). Then
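The display completing this statement is not reproduced in this copy. As a hedged reminder, and not a quotation of Cai and Zhou (2012), one standard inequality for mixtures of this form is the concavity of the testing affinity. The notation \(\Vert P \wedge Q \Vert = \int \min (p, q)\, d\nu \), for densities p, q with respect to a common dominating measure \(\nu \), is our assumption:

```latex
% Assumed notation: p_i, q_i are densities of P_i, Q_i w.r.t. a common measure \nu,
% and \| P \wedge Q \| = \int \min(p, q) \, d\nu denotes the testing affinity.
\[
\bigl\| \bar{\mathbb{P}}_m \wedge \bar{\mathbb{Q}}_m \bigr\|
= \int \min\Bigl( \sum_{i=1}^m w_i p_i ,\ \sum_{i=1}^m w_i q_i \Bigr) \, d\nu
\ \ge\ \sum_{i=1}^m w_i \int \min(p_i, q_i) \, d\nu
\ \ge\ \min_{1 \le i \le m} \bigl\| \mathbb{P}_i \wedge \mathbb{Q}_i \bigr\| .
\]
```

The middle step uses \(\min (\sum _i a_i, \sum _i b_i) \ge \sum _i \min (a_i, b_i)\), and the last step uses that the weights are nonnegative and sum to one.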

Lemma Appendix A.2

Let \({\bar{{{\mathbb {P}}}}}_{k,i} = \frac{1}{2^{m-1}(n/m)^m} \sum _{\alpha \in vec(A_{k,i} \otimes B)} {{\mathbb {P}}}_{\theta _\alpha }\) for \(i=0,1\) where \({{\mathbb {P}}}_{\theta _\alpha } = P_{\theta _\alpha }^n\) is a product measure with a density \(\theta _\alpha \in \Theta _F\). Then

Proof of Lemma Appendix A.2

By symmetry, we fix \(k=1\). By the Cauchy–Schwarz inequality,

where \((1) := \sum _{\alpha \in A_{1,1}} \prod _{i=1}^n \theta _\alpha (x_i)\) and \((2) := \sum _{\alpha \in A_{1,0} }\prod _{i=1}^n \theta _\alpha (x_i)\). Note that

since \(\int \phi _j(x)^2 = 1\) and \(\int \phi _j(x) \phi _{j'}(x) = 0\) when \(j \ne j'\). Similarly,

and

By construction, (A.1) and (A.2) are equal. This yields

For computational convenience, we rewrite \({\underline{\alpha }} = (\alpha _1, \ldots , \alpha _n) = (a_1 \cdot b_1, \ldots , a_m \cdot b_m)\) where \(a_k \in \{0,1\}\) and \(b_k \in \{(1,0,\ldots ,0), (0,1,0, \ldots , 0), \ldots , (0,\ldots ,0,1)\}\) where \((a_1 \cdot b_1) = (a_1 b_{11}, \ldots , a_1 b_{1p})\) with \(p=n/m\). When \(\alpha \in A_{1,1}\), we have \(a_1 = 1\), and when \(\alpha \in A_{1,0}\), we have \(a_1 = 0\). This implies

It is clear that \( (a_k \cdot b_k)^T (a'_k \cdot b'_k) = (a_k a'_k) (b_k^T b'_k)\), and in fact, this is nonzero if and only if \(a_k = a'_k = 1\) and \(b_k = b'_k\). Based on this observation, we consider new Bernoulli random variables

Then it suffices to show that the following is bounded above by 1.

When \(j=m-1\), all \(A_k\)’s (for \(k=2, \ldots , m\)) must have value 1. Thus, \(P(B_1 = 1, \sum _{k=2}^m A_k = m-1) = (1/4)^{m-1} (m^2/n^2)^m\). Note that \(X:= \sum _{k=2}^m A_k \sim B\left( m-1, \frac{m^2}{4n^2}\right) \), where \(B(m-1,p)\) denotes the binomial distribution with \(m-1\) trials and success probability p. Let \(p_j = P(X = j)\); then \(p_j = \binom{m-1}{j} \left( \frac{m^2}{4n^2} \right) ^j \left( 1- \frac{m^2}{4n^2} \right) ^{m-1-j} \). By the form of the moment generating function of X,

Choose \(c < \frac{4s-1}{2s+1}\) so that

Thus (A.3) \(\le 1/2\).

\(\square \)
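As a reminder of the fact invoked for the binomial variable X in the proof above, the following records the textbook moment generating function of a binomial distribution together with a common exponential upper bound. The symbols t and p are ours (with \(p = m^2/(4n^2)\) as in the proof), and the way the proof applies this fact is not reconstructed here.

```latex
% X ~ B(m-1, p) with p_j = P(X = j); t is an arbitrary real number (our notation).
% The equality is the binomial theorem; the inequality uses 1 + u \le e^{u}
% with u = p (e^{t} - 1).
\[
\mathbb{E}\, e^{tX}
= \sum_{j=0}^{m-1} p_j \, e^{tj}
= \bigl( 1 - p + p\, e^{t} \bigr)^{m-1}
\le \exp\bigl\{ (m-1)\, p\, (e^{t} - 1) \bigr\},
\qquad t \in \mathbb{R}.
\]
```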

Proof (sketch) of Lemma 4.3

For any probability measures P and Q with \(P\ll Q\) and \(Q \ll P\), both dominated by a measure \(\nu \), note that

where the inequality follows since, for \(a > 0\) and \(b > 0\), \(|\log (a/b)| \le |a-b|/\min (a,b)\). This gives

Splitting the probability into two parts, taking the supremum with respect to x first, and then applying the definition of the total variation distance,

Note that

Also we have

For any \(x,x'\), \(q(s|x)/q(s|x') \in [e^{-\eta }, e^\eta ]\), which implies that

Now we consider the denominator in (A.4). That is,

Combining (A.5), (A.6), and (A.7), we have the desired bound. \(\square \)
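The elementary inequality used in the first step of this sketch can be verified directly. The derivation below assumes \(a, b > 0\), which is needed for the ratio to be defined, and also records a consequence of the likelihood-ratio condition; the shorthand \(r = q(s|x)/q(s|x')\) is ours, and this is a sketch of the underlying facts, not a reconstruction of the missing displays.

```latex
% Step 1: for a \ge b > 0, apply \log(1 + u) \le u with u = (a - b)/b \ge 0.
\[
0 \le \log\frac{a}{b}
= \log\Bigl( 1 + \frac{a-b}{b} \Bigr)
\le \frac{a-b}{b}
= \frac{|a-b|}{\min(a,b)} .
\]
% The case b > a follows by exchanging a and b, since |\log(a/b)| = |\log(b/a)|.

% Step 2: if r = q(s|x)/q(s|x') lies in [e^{-\eta}, e^{\eta}], then
% |r - 1| \le \max(e^{\eta} - 1, 1 - e^{-\eta}) = e^{\eta} - 1, so
\[
\bigl| q(s|x) - q(s|x') \bigr|
= q(s|x') \, |r - 1|
\le (e^{\eta} - 1)\, q(s|x') .
\]
% By symmetry in x and x', the right-hand side may be replaced by
% (e^{\eta} - 1) \min\{ q(s|x), q(s|x') \}.
```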

Cite this article

Kim, A.K.H. Obtaining minimax lower bounds: a review. J. Korean Stat. Soc. 49, 673–701 (2020). https://doi.org/10.1007/s42952-019-00027-7

DOI: https://doi.org/10.1007/s42952-019-00027-7