Abstract

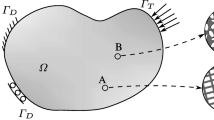

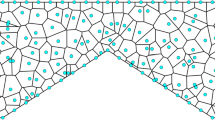

Uncertainty in the material properties and geometry of a structure is ubiquitous. The design of robust engineering structures therefore needs to incorporate uncertainty in the optimization process. The stochastic gradient descent (SGD) method can alleviate the cost of optimization under uncertainty, in which statistical moments of quantities of interest appear in the objective and constraints. However, the design may change considerably during the initial iterations of the optimization process, which impedes the convergence of the traditional SGD method and its variants. In this paper, we present two SGD-based algorithms in which the computational cost is reduced by employing a low-fidelity model in the optimization process. In the first algorithm, most of the stochastic gradient calculations are performed on the low-fidelity model and only a handful of gradients from the high-fidelity model are used per iteration, resulting in improved convergence. In the second algorithm, gradients from the low-fidelity model are used as a control variate, a variance reduction technique, to reduce the variance in the search direction. These two bi-fidelity algorithms are illustrated first with a conceptual example. Then, the convergence of the proposed bi-fidelity algorithms is studied with two numerical examples of shape and topology optimization and compared to popular variants of the SGD method that do not use low-fidelity models. The results show that the proposed bi-fidelity approach can improve the convergence of the SGD method. Two analytical proofs are also provided that show linear convergence of these two algorithms under appropriate assumptions.

Notes

A function \(J({{\varvec{\theta }}})\) is strongly convex with a constant \(\mu \) if \(J({{\varvec{\theta }}})-\frac{\mu }{2}\Vert {{\varvec{\theta }}}\Vert ^2\) is convex.
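As a simple illustration of this definition (an example added here; the matrix \({\mathbf {A}}\) and vector \({\mathbf {b}}\) are introduced only for this purpose), consider a quadratic objective with a symmetric positive definite \({\mathbf {A}}\):

\[
J({{\varvec{\theta }}}) = \tfrac{1}{2}{{\varvec{\theta }}}^T{\mathbf {A}}{{\varvec{\theta }}} - {\mathbf {b}}^T{{\varvec{\theta }}}, \qquad
J({{\varvec{\theta }}}) - \frac{\mu }{2}\Vert {{\varvec{\theta }}}\Vert ^2 = \tfrac{1}{2}{{\varvec{\theta }}}^T({\mathbf {A}}-\mu {\mathbf {I}}){{\varvec{\theta }}} - {\mathbf {b}}^T{{\varvec{\theta }}}.
\]

The right-hand expression is convex exactly when \({\mathbf {A}}-\mu {\mathbf {I}}\) is positive semidefinite, so this \(J\) is strongly convex with any constant \(0<\mu \le \lambda _{\min }({\mathbf {A}})\), where \(\lambda _{\min }({\mathbf {A}})\) is the smallest eigenvalue of \({\mathbf {A}}\).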

References

Allaire D, Willcox K, Toupet O (2010) A Bayesian-based approach to multifidelity multidisciplinary design optimization. In: 13th AIAA/ISSMO multidisciplinary analysis optimization conference, p 9183

Alnæs MS, Blechta J, Hake J, Johansson A, Kehlet B, Logg A, Richardson C, Ring J, Rognes ME, Wells GN (2015) The FEniCS project version 1.5. Arch Numer Softw 3(100):9–23

Andreassen E, Clausen A, Schevenels M, Lazarov BS, Sigmund O (2011) Efficient topology optimization in Matlab using 88 lines of code. Struct Multidiscip Optim 43(1):1–16

Babuška I, Nobile F, Tempone R (2007) A stochastic collocation method for elliptic partial differential equations with random input data. SIAM J Numer Anal 45(3):1005–1034

Bakr MH, Bandler JW, Madsen K, Søndergaard J (2000) Review of the space mapping approach to engineering optimization and modeling. Optim Eng 1(3):241–276

Bakr MH, Bandler JW, Madsen K, Søndergaard J (2001) An introduction to the space mapping technique. Optim Eng 2(4):369–384

Bandler JW, Biernacki RM, Chen SH, Grobelny PA, Hemmers RH (1994) Space mapping technique for electromagnetic optimization. IEEE Trans Microw Theory Tech 42(12):2536–2544

Bendsøe MP, Sigmund O (2003) Topology optimization: theory, methods and applications. Springer, Berlin. ISBN: 3-540-42992-1

Bendsøe MP (1989) Optimal shape design as a material distribution problem. Struct Optim 1(4):193–202

Blatman G, Sudret B (2010) An adaptive algorithm to build up sparse polynomial chaos expansions for stochastic finite element analysis. Probab Eng Mech 25(2):183–197

Booker AJ, Dennis JE, Frank PD, Serafini DB, Torczon V, Trosset MW (1999) A rigorous framework for optimization of expensive functions by surrogates. Struct Optim 17(1):1–13

Bottou L (2010) Large-scale machine learning with stochastic gradient descent. In: Proceedings of COMPSTAT’2010. Springer, pp 177–186

Bottou L, Curtis FE, Nocedal J (2018) Optimization methods for large-scale machine learning. SIAM Rev 60(2):223–311

Bourdin B (2001) Filters in topology optimization. Int J Numer Methods Eng 50(9):2143–2158

Boyd S, Vandenberghe L (2004) Convex optimization. Cambridge University Press, Cambridge

Bruns TE, Tortorelli DA (2001) Topology optimization of non-linear elastic structures and compliant mechanisms. Comput Methods Appl Mech Eng 190(26–27):3443–3459

Bulleit WM (2008) Uncertainty in structural engineering. Pract Period Struct Des Construct 13(1):24–30

Calafiore GC, Dabbene F (2008) Optimization under uncertainty with applications to design of truss structures. Struct Multidiscip Optim 35(3):189–200

Chen SH, Yang XW, Wu BS (2000) Static displacement reanalysis of structures using perturbation and Padé approximation. Commun Numer Methods Eng 16(2):75–82

Choi S, Alonso JJ, Kroo IM, Wintzer M (2008) Multifidelity design optimization of low-boom supersonic jets. J Aircr 45(1):106–118

Christensen DE (2012) Multifidelity methods for multidisciplinary design under uncertainty. Master’s thesis, Massachusetts Institute of Technology

De S, Hampton J, Maute K, Doostan A (2020) Topology optimization under uncertainty using a stochastic gradient-based approach. Struct Multidiscip Optim (accepted)

De S, Wojtkiewicz SF, Johnson EA (2017) Efficient optimal design and design-under-uncertainty of passive control devices with application to a cable-stayed bridge. Struct Control Health Monit 24(2):e1846

Defazio A, Bottou L (2018) On the ineffectiveness of variance reduced optimization for deep learning. ArXiv preprint arXiv:1812.04529

Diwekar U (2008) Optimization under uncertainty. In: Introduction to applied optimization. Springer, pp 1–54

Diwekar UM, Kalagnanam JR (1997) Efficient sampling technique for optimization under uncertainty. AIChE J 43(2):440–447

Doostan A, Geraci G, Iaccarino G (2016) A bi-fidelity approach for uncertainty quantification of heat transfer in a rectangular ribbed channel. In: ASME turbo expo 2016: turbomachinery technical conference and exposition. American Society of Mechanical Engineers, p V02CT45A031

Doostan A, Owhadi H (2011) A non-adapted sparse approximation of PDE with stochastic inputs. J Comput Phys 230(8):3015–3034

Doostan A, Owhadi H, Lashgari A, Iaccarino G (2009) Non-adapted sparse approximation of PDEs with stochastic inputs. Technical report annual research brief, Center for Turbulence Research, Stanford University

Duchi J, Hazan E, Singer Y (2011) Adaptive subgradient methods for online learning and stochastic optimization. J Mach Learn Res 12(7):2121–2159

Eldred M, Dunlavy D (2006) Formulations for surrogate-based optimization with data fit, multifidelity, and reduced-order models. In: 11th AIAA/ISSMO multidisciplinary analysis and optimization conference, p 7117

Eldred MS, Elman HC (2011) Design under uncertainty employing stochastic expansion methods. Int J Uncertain Quantif 1(2):119–146

Fairbanks HR, Doostan A, Ketelsen C, Iaccarino G (2017) A low-rank control variate for multilevel Monte Carlo simulation of high-dimensional uncertain systems. J Comput Phys 341:121–139

Fairbanks HR, Jofre L, Geraci G, Iaccarino G, Doostan A (2018) Bi-fidelity approximation for uncertainty quantification and sensitivity analysis of irradiated particle-laden turbulence. ArXiv preprint arXiv:1808.05742

Fernández-Godino MG, Park C, Kim N-H, Haftka RT (2016) Review of multi-fidelity models. ArXiv preprint arXiv:1609.07196

Fischer CC, Grandhi RV, Beran PS (2017) Bayesian low-fidelity correction approach to multi-fidelity aerospace design. In: 58th AIAA/ASCE/AHS/ASC structures, structural dynamics, and materials conference, p 0133

Forrester AI, Sóbester A, Keane AJ (2007) Multi-fidelity optimization via surrogate modelling. Proc R Soc Lond A Math Phys Eng Sci 463(2088):3251–3269

Ghanem RG, Spanos PD (2003) Stochastic finite elements: a spectral approach. Dover publications, New York

Gorodetsky AA, Geraci G, Eldred MS, Jakeman JD (2020) A generalized approximate control variate framework for multifidelity uncertainty quantification. J Comput Phys 408:109257

Hammersley J (2013) Monte Carlo methods. Springer, Berlin

Hampton J, Doostan A (2016) Compressive sampling methods for sparse polynomial chaos expansions. Handbook of uncertainty quantification, pp 1–29

Hampton J, Doostan A (2018) Basis adaptive sample efficient polynomial chaos (BASE-PC). J Comput Phys 371:20–49

Hampton J, Fairbanks HR, Narayan A, Doostan A (2018) Practical error bounds for a non-intrusive bi-fidelity approach to parametric/stochastic model reduction. J Comput Phys 368:315–332

Hasselman T (2001) Quantification of uncertainty in structural dynamic models. J Aerosp Eng 14(4):158–165

Henson VE, Briggs WL, McCormick SF (2000) A multigrid tutorial. Society for Industrial and Applied Mathematics, Philadelphia

Holmberg E, Torstenfelt B, Klarbring A (2013) Stress constrained topology optimization. Struct Multidiscip Optim 48(1):33–47

Huang D, Allen TT, Notz WI, Miller RA (2006) Sequential kriging optimization using multiple-fidelity evaluations. Struct Multidiscip Optim 32(5):369–382

Hurtado JE (2002) Reanalysis of linear and nonlinear structures using iterated Shanks transformation. Comput Methods Appl Mech Eng 191(37–38):4215–4229

Jin R, Du X, Chen W (2003) The use of metamodeling techniques for optimization under uncertainty. Struct Multidiscip Optim 25(2):99–116

Johnson R, Zhang T (2013) Accelerating stochastic gradient descent using predictive variance reduction. In: Advances in neural information processing systems, pp 315–323

Keane A (2003) Wing optimization using design of experiment, response surface, and data fusion methods. J Aircr 40(4):741–750

Keane AJ (2012) Cokriging for robust design optimization. AIAA J 50(11):2351–2364

Kennedy MC, O’Hagan A (2001) Bayesian calibration of computer models. J R Stat Soc Ser B Stat Methodol 63(3):425–464

Kingma D, Ba J (2014) Adam: a method for stochastic optimization. ArXiv preprint arXiv:1412.6980

Kirsch U (2000) Combined approximations-a general reanalysis approach for structural optimization. Struct Multidiscip Optim 20(2):97–106

Koutsourelakis P-S (2009) Accurate uncertainty quantification using inaccurate computational models. SIAM J Sci Comput 31(5):3274–3300

Koziel S, Tesfahunegn Y, Amrit A, Leifsson LT (2016) Rapid multi-objective aerodynamic design using co-kriging and space mapping. In: 57th AIAA/ASCE/AHS/ASC structures, structural dynamics, and materials conference, p 0418

Kroo I, Willcox K, March A, Haas A, Rajnarayan D, Kays C (2010) Multifidelity analysis and optimization for supersonic design. Technical report CR-2010-216874, NASA

Logg A, Mardal K-A, Wells GN et al (2012) Automated solution of differential equations by the finite element method. Springer, Berlin

Luenberger DG, Ye Y (1984) Linear and nonlinear programming, vol 2. Springer, Berlin

March A, Willcox K (2012a) Constrained multifidelity optimization using model calibration. Struct Multidiscip Optim 46(1):93–109

March A, Willcox K (2012b) Provably convergent multifidelity optimization algorithm not requiring high-fidelity derivatives. AIAA J 50(5):1079–1089

March A, Willcox K, Wang Q (2011) Gradient-based multifidelity optimisation for aircraft design using Bayesian model calibration. Aeronaut J 115(1174):729–738

Martin JD, Simpson TW (2005) Use of kriging models to approximate deterministic computer models. AIAA J 43(4):853–863

Maute K, Pettit CL (2006) Uncertainty quantification and design under uncertainty of aerospace systems. Struct Infrastruct Eng 2(3–4):159–159

Myers DE (1982) Matrix formulation of co-kriging. J Int Assoc Math Geol 14(3):249–257

Narayan A, Gittelson C, Xiu D (2014) A stochastic collocation algorithm with multifidelity models. SIAM J Sci Comput 36(2):A495–A521

Nemirovski A, Juditsky A, Lan G, Shapiro A (2009) Robust stochastic approximation approach to stochastic programming. SIAM J Optim 19(4):1574–1609

Ng LW, Willcox KE (2014) Multifidelity approaches for optimization under uncertainty. Int J Numer Methods Eng 100(10):746–772

Ng LW-T, Eldred M (2012) Multifidelity uncertainty quantification using non-intrusive polynomial chaos and stochastic collocation. In: 53rd AIAA/ASME/ASCE/AHS/ASC structures, structural dynamics and materials conference 20th AIAA/ASME/AHS adaptive structures conference 14th AIAA, p 1852

Nobile F, Tempone R, Webster CG (2008) A sparse grid stochastic collocation method for partial differential equations with random input data. SIAM J Numer Anal 46(5):2309–2345

Nocedal J, Wright S (2006) Numerical optimization. Springer, Berlin

Padron AS, Alonso JJ, Eldred MS (2016) Multi-fidelity methods in aerodynamic robust optimization. In: 18th AIAA non-deterministic approaches conference, p 0680

Park C, Haftka RT, Kim NH (2017) Remarks on multi-fidelity surrogates. Struct Multidiscip Optim 55(3):1029–1050

Parussini L, Venturi D, Perdikaris P, Karniadakis GE (2017) Multi-fidelity Gaussian process regression for prediction of random fields. J Comput Phys 336:36–50

Pasupathy R, Schmeiser BW, Taaffe MR, Wang J (2012) Control-variate estimation using estimated control means. IIE Trans 44(5):381–385

Peherstorfer B, Cui T, Marzouk Y, Willcox K (2016) Multifidelity importance sampling. Comput Methods Appl Mech Eng 300:490–509

Peherstorfer B, Willcox K, Gunzburger M (2018) Survey of multifidelity methods in uncertainty propagation, inference, and optimization. SIAM Rev 60(3):550–591

Perdikaris P, Venturi D, Royset JO, Karniadakis GE (2015) Multi-fidelity modelling via recursive co-kriging and Gaussian–Markov random fields. Proc R Soc A Math Phys Eng Sci 471(2179):20150018

Qian PZ, Wu CJ (2008) Bayesian hierarchical modeling for integrating low-accuracy and high-accuracy experiments. Technometrics 50(2):192–204

Robbins H, Monro S (1951) A stochastic approximation method. Ann Math Stat 22:400–407

Robinson T, Eldred M, Willcox K, Haimes R (2008) Surrogate-based optimization using multifidelity models with variable parameterization and corrected space mapping. AIAA J 46(11):2814–2822

Ross SM (2013) Simulation, 5th edn. Academic Press, Cambridge

Roux NL, Schmidt M, Bach FR (2012) A stochastic gradient method with an exponential convergence rate for finite training sets. In: Advances in neural information processing systems, pp 2663–2671

Rubinstein RY, Kroese DP (2016) Simulation and the Monte Carlo method, vol 10. Wiley, New York

Ruder S (2016) An overview of gradient descent optimization algorithms. ArXiv preprint arXiv:1609.04747

Sahinidis NV (2004) Optimization under uncertainty: state-of-the-art and opportunities. Comput Chem Eng 28(6–7):971–983

Sandgren E, Cameron TM (2002) Robust design optimization of structures through consideration of variation. Comput Struct 80(20–21):1605–1613

Sandridge CA, Haftka RT (1989) Accuracy of eigenvalue derivatives from reduced-order structural models. J Guid Control Dyn 12(6):822–829

Schmidt M, Le Roux N, Bach F (2017) Minimizing finite sums with the stochastic average gradient. Math Program 162(1–2):83–112

Senior A, Heigold G, Ranzato M, Yang K (2013) An empirical study of learning rates in deep neural networks for speech recognition. In: 2013 IEEE international conference on acoustics, speech and signal processing. IEEE, pp 6724–6728

Sigmund O (2001) A 99 line topology optimization code written in Matlab. Struct Multidiscip Optim 21(2):120–127

Sigmund O (2007) Morphology-based black and white filters for topology optimization. Struct Multidiscip Optim 33(4–5):401–424

Sigmund O, Maute K (2013) Topology optimization approaches: a comparative review. Struct Multidiscip Optim 48(6):1031–1055

Skinner RW, Doostan A, Peters EL, Evans JA, Jansen KE (2019) Reduced-basis multifidelity approach for efficient parametric study of NACA airfoils. AIAA J 57:1481–1491

Spillers WR, MacBain KM (2009) Structural optimization. Springer, Berlin

Wang C, Chen X, Smola AJ, Xing EP (2013) Variance reduction for stochastic gradient optimization. In: Advances in neural information processing systems, pp 181–189

Weickum G, Eldred M, Maute K (2006) Multi-point extended reduced order modeling for design optimization and uncertainty analysis. In: 47th AIAA/ASME/ASCE/AHS/ASC structures, structural dynamics, and materials conference 14th AIAA/ASME/AHS adaptive structures conference 7th, p 2145

Xiu D, Karniadakis GE (2002) The Wiener-Askey polynomial chaos for stochastic differential equations. SIAM J Sci Comput 24(2):619–644

Yamazaki W, Rumpfkeil M, Mavriplis D (2010) Design optimization utilizing gradient/hessian enhanced surrogate model. In: 28th AIAA applied aerodynamics conference, p 4363

Zang C, Friswell M, Mottershead J (2005) A review of robust optimal design and its application in dynamics. Comput Struct 83(4–5):315–326

Zeiler MD (2012) ADADELTA: an adaptive learning rate method. ArXiv preprint arXiv:1212.5701

Zhou M, Rozvany G (1991) The COC algorithm, part II: topological, geometrical and generalized shape optimization. Comput Methods Appl Mech Eng 89(1):309–336

Acknowledgements

The authors acknowledge the support of the Defense Advanced Research Projects Agency’s (DARPA) TRADES project under agreement HR0011-17-2-0022. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of DARPA.

Appendices

Proof of Theorem 1

Assume \({{\varvec{\theta }}}_{k}\) is the vector of optimization parameters after k iterations of Algorithm 4. \({\mathbf {h}}_\mathrm {low}({{\varvec{\theta }}}_k)\) and \({\mathbf {h}}_\mathrm {high}({{\varvec{\theta }}}_k)\) are gradients of the objective with respect to \({{\varvec{\theta }}}_k\) using the low- and high-fidelity models, respectively. Under the assumption of strong convexity (see the footnote under Notes) of the low-fidelity and high-fidelity objectives,

where \(\mu _\mathrm {low}\) and \(\mu _\mathrm {high}\) are constants. Similarly, if the low- and high-fidelity gradients are Lipschitz continuous,

where \(L_\mathrm {low},L_\mathrm {high}\) are the Lipschitz constants for low- and high-fidelity gradients, respectively. The parameters are updated in Algorithm 4 using

The expected value of the search direction \({\widehat{{\mathbf {h}}}}_k\) at iteration k is

where \(p_l = N_l/N\) and \(p_h = N_h/N\).

Next, we evaluate the following expectation

where \(L^2 = \max \left\{ p_l(1-p_l-p_h)^{(k-j)}\frac{L_\mathrm {low}^2\Vert {{\varvec{\theta }}}_j - {{\varvec{\theta }}}^* \Vert ^2}{\Vert {{\varvec{\theta }}}_k - {{\varvec{\theta }}}^* \Vert ^2},p_h(1-p_l-p_h)^{(k-j)}\frac{L_\mathrm {high}^2\Vert {{\varvec{\theta }}}_j - {{\varvec{\theta }}}^* \Vert ^2}{\Vert {{\varvec{\theta }}}_k - {{\varvec{\theta }}}^* \Vert ^2}\right\} \) for \(j=1,\ldots ,k\). Using the strong convexity property of \(J({{\varvec{\theta }}})\),

where \(\mu = \min \left\{ p_l(1-p_l-p_h)^{(k-j)}\frac{\mu _\mathrm {low}\Vert {{\varvec{\theta }}}_j - {{\varvec{\theta }}}^* \Vert ^2}{\Vert {{\varvec{\theta }}}_k - {{\varvec{\theta }}}^* \Vert ^2},p_h(1-p_l-p_h)^{(k-j)}\frac{\mu _\mathrm {high}\Vert {{\varvec{\theta }}}_j - {{\varvec{\theta }}}^* \Vert ^2}{\Vert {{\varvec{\theta }}}_k - {{\varvec{\theta }}}^* \Vert ^2}\right\} \) for \(j=1,\ldots ,k\), and the learning rate is chosen as \(\eta = \mu /L^2\) subject to \(\mu ^2/L^2\le 1\). This completes the proof of Theorem 1.
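The role of the learning rate \(\eta = \mu /L^2\) can be seen from a standard one-step contraction argument. The sketch below is a textbook derivation, not a reproduction of the displayed equations of this proof. It assumes a descent update \({{\varvec{\theta }}}_{k+1}={{\varvec{\theta }}}_k-\eta {\widehat{{\mathbf {h}}}}_k\) and two bounds in the constants defined above, \({\mathbb {E}}[{\widehat{{\mathbf {h}}}}_k^T({{\varvec{\theta }}}_k-{{\varvec{\theta }}}^*)]\ge \mu \Vert {{\varvec{\theta }}}_k-{{\varvec{\theta }}}^*\Vert ^2\) and \({\mathbb {E}}[\Vert {\widehat{{\mathbf {h}}}}_k\Vert ^2]\le L^2\Vert {{\varvec{\theta }}}_k-{{\varvec{\theta }}}^*\Vert ^2\), with expectations taken conditionally on \({{\varvec{\theta }}}_k\):

\[
\begin{aligned}
{\mathbb {E}}\left[ \Vert {{\varvec{\theta }}}_{k+1}-{{\varvec{\theta }}}^*\Vert ^2\right]
&= \Vert {{\varvec{\theta }}}_k-{{\varvec{\theta }}}^*\Vert ^2 - 2\eta \,{\mathbb {E}}\left[ {\widehat{{\mathbf {h}}}}_k^T({{\varvec{\theta }}}_k-{{\varvec{\theta }}}^*)\right] + \eta ^2\,{\mathbb {E}}\left[ \Vert {\widehat{{\mathbf {h}}}}_k\Vert ^2\right] \\
&\le \left( 1-2\eta \mu +\eta ^2L^2\right) \Vert {{\varvec{\theta }}}_k-{{\varvec{\theta }}}^*\Vert ^2 .
\end{aligned}
\]

The factor \(1-2\eta \mu +\eta ^2L^2\) is minimized at \(\eta =\mu /L^2\), where it equals \(1-\mu ^2/L^2\). The condition \(\mu ^2/L^2\le 1\) keeps this factor non-negative, and any \(\mu >0\) makes it strictly less than one, which is the mechanism behind a linear convergence rate under these assumptions.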

The constants \(\mu \) and \(L^2\) in (47) are affected by the parameter update history as mentioned in Sect. 3.1. To see this, let us define

Hence, the constants \(\mu \) and \(L^2\) can be written as

Note that, if \(p_l\) and \(p_h\) are fixed, \(\mu \) depends on \(c^k_{\min }\), i.e., on \(\min \left\{ (1-p_l-p_h)^{(k-j)} \Vert {\varvec{\theta }}_j-{\varvec{\theta }}^* \Vert ^2 \right\} \) for \(j=1,\ldots ,k\). Further, \((1-p_l-p_h)^{(k-j)}\) increases with j since \(1-p_l-p_h<1\), but \(\Vert {\varvec{\theta }}_j-{\varvec{\theta }}^* \Vert ^2\) depends on the parameter updates \(\left\{ {{\varvec{\theta }}}_j\right\} _{j=1}^k\). Similarly, \(L^2\) depends on \(c^k_{\max }\) and in turn on \(\left\{ {{\varvec{\theta }}}_j\right\} _{j=1}^k\). Hence, the parameter update history affects \(\mu \) and \(L^2\).
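The way stale gradients enter the search direction can be illustrated with a small stochastic-average-gradient style loop. The sketch below is not Algorithm 4 itself. It assumes a table of \(N\) stored gradients, of which \(N_l\) are refreshed with low-fidelity gradients and \(N_h\) with high-fidelity gradients at each iteration, so that an entry written at iteration j is still in the table at iteration k with probability \((1-p_l-p_h)^{(k-j)}\). The toy gradients `h_high` and `h_low`, the function name `bf_sag_sketch`, and all parameter values are hypothetical choices made for this illustration.

```python
import numpy as np


def h_high(theta, xi):
    # Hypothetical high-fidelity stochastic gradient of 0.5 * ||theta - xi||^2.
    return theta - xi


def h_low(theta, xi):
    # Hypothetical low-fidelity gradient: same minimizer, different scaling.
    return 0.8 * (theta - xi)


def bf_sag_sketch(theta0, n_table=50, n_low=10, n_high=2,
                  eta=0.2, n_iter=500, seed=0):
    """SAG-style loop with a gradient table refreshed from two fidelities."""
    rng = np.random.default_rng(seed)
    theta = np.asarray(theta0, dtype=float)
    dim = theta.size

    # Fill the table with low-fidelity gradients at the initial design.
    table = h_low(theta, rng.standard_normal((n_table, dim)))

    for _ in range(n_iter):
        # Choose distinct table slots to refresh; all other slots stay stale.
        idx = rng.choice(n_table, size=n_low + n_high, replace=False)
        low_idx, high_idx = idx[:n_low], idx[n_low:]

        table[low_idx] = h_low(theta, rng.standard_normal((n_low, dim)))
        table[high_idx] = h_high(theta, rng.standard_normal((n_high, dim)))

        # Search direction: average of fresh and stale stored gradients.
        theta = theta - eta * table.mean(axis=0)
    return theta


theta_final = bf_sag_sketch([5.0, 5.0])
print(np.linalg.norm(theta_final))
```

In this toy problem the minimizer of the expected objective is the origin, so the printed norm indicates how far the final iterate is from it. Because each slot is refreshed with probability \(p_l+p_h\) per iteration, older iterates contribute to the averaged direction with geometrically decaying weight, which is the history dependence discussed above.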

Proof of Theorem 2

Using the assumption of strong convexity of the objective obtained from the high-fidelity model,

where \(\mu _\mathrm {high}\) is a constant. Similarly, if the high-fidelity gradients are Lipschitz continuous,

where \(L_\mathrm {high}\) is the Lipschitz constant. For the inner iterations, we can evaluate the following expectation

where \({h}_q\) is the gradient with respect to \(\theta _q\) and \(\mathrm {Var}(\cdot )\) denotes variance of its argument. Note that, if \({\widehat{{\mathbf {h}}}}_\mathrm {low}={\mathbb {E}}[{\mathbf {h}}_\mathrm {low}({{\varvec{\theta }}};{\varvec{\xi }})]\) exactly,

where \(\alpha _{qq}=\mathrm {Cov}(h_{\mathrm {low},q}({{\varvec{\theta }}}_\mathrm {prev};{\varvec{\xi }}),h_{\mathrm {high},q}({{\varvec{\theta }}}_k;{\varvec{\xi }}))/\mathrm {Var}(h_{\mathrm {low},q}({{\varvec{\theta }}}_\mathrm {prev};{\varvec{\xi }}))\) and the correlation coefficient \(\rho _{hl,q}=\mathrm {Cov}(h_{\mathrm {low},q}({{\varvec{\theta }}}_\mathrm {prev};{\varvec{\xi }}),h_{\mathrm {high},q}({{\varvec{\theta }}}_k;{\varvec{\xi }}))/\sqrt{\mathrm {Var}(h_{\mathrm {low},q}({{\varvec{\theta }}}_\mathrm {prev};{\varvec{\xi }}))\mathrm {Var}(h_{\mathrm {high},q}({{\varvec{\theta }}}_{k};{\varvec{\xi }}))}\). On the other hand, if we use \(N_l\) samples to estimate \({\widehat{{\mathbf {h}}}}_\mathrm {low}\), i.e., \({\widehat{{\mathbf {h}}}}_\mathrm {low}=\frac{1}{N_l}\sum _{i=1}^{N_l}{\mathbf {h}}_\mathrm {low}({\varvec{\theta }}_\mathrm {prev};{\varvec{\xi }}_i)\) then we can write

where the coefficient \(\alpha _{qq}\) is obtained by minimizing the mean-squared error in \({\widehat{h}}_{\mathrm {high},q}\) [33, 76], i.e.,

and the correlation coefficient \(\rho _{hl,q}\) is the same as before. Next, let us assume

for some constants \(\delta _{k,q}\). Further, assume \(\delta _k = \max \{1,\delta _{k,q} \}\) for \(q=1,\ldots ,n_{{\varvec{\theta }}}\). Hence,

At the kth inner iteration, let us use the learning rate \(\eta = \frac{\mu _\mathrm {high}}{2L^2_\mathrm {high}\delta _k}\). This leads to

where \({\underline{\delta }}=\min \{\delta _i\}_{i=1}^k\) and \({{\varvec{\theta }}}_1={{\varvec{\theta }}}_\mathrm {prev}\). Similarly, for the jth outer iteration,

where \(\delta = \min \{{{\underline{\delta }}}_i\}_{i=1}^j\) subject to \(\frac{\mu ^2_\mathrm {high}}{2L^2_\mathrm {high}\delta }\le 1\). This proves Theorem 2.

Note that, if \(\rho _{hl}\) is close to 1, i.e., the low- and the high-fidelity models are highly correlated, then \(\delta _k\) can be assumed small. This implies that \(\delta \) will be close to 1 and, thus, we can use a larger learning rate \(\eta \). This, in turn, leads to a smaller right-hand side in (59) and a tighter bound on \({\mathbb {E}}[\Vert {{\widetilde{{{\varvec{\theta }}}}}}_{j} - {{\varvec{\theta }}}^*\Vert ^2|{{\varvec{\theta }}}_0]\).
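The link between correlation and variance can be checked numerically with a scalar control variate. The sketch below is a simplified illustration rather than the estimator analyzed in the proof: it evaluates both fidelities at the same parameter value, assumes the low-fidelity mean is known exactly, and uses the coefficient \(\alpha =\mathrm {Cov}(h_\mathrm {low},h_\mathrm {high})/\mathrm {Var}(h_\mathrm {low})\). The toy gradient expressions, the function name `control_variate_demo`, and the sample size are hypothetical.

```python
import numpy as np


def control_variate_demo(theta=1.5, n=100_000, seed=0):
    """Compare the variance of a gradient sample with and without a control variate."""
    rng = np.random.default_rng(seed)
    xi = rng.standard_normal(n)

    # Hypothetical scalar gradients of two correlated models.
    h_high = theta - xi + 0.1 * xi**2
    h_low = 0.8 * (theta - xi)
    mean_low = 0.8 * theta  # exact, because E[xi] = 0 (an assumption of this toy)

    cov = np.cov(h_low, h_high)  # 2x2 sample covariance matrix
    alpha = cov[0, 1] / cov[0, 0]
    rho = cov[0, 1] / np.sqrt(cov[0, 0] * cov[1, 1])

    # Control-variate corrected high-fidelity gradient samples.
    h_cv = h_high - alpha * (h_low - mean_low)
    return cov[1, 1], np.var(h_cv, ddof=1), rho


var_high, var_cv, rho = control_variate_demo()
print(var_high, var_cv, rho)
```

With this choice of \(\alpha \), the corrected samples have variance \((1-\rho ^2)\) times the high-fidelity variance, so a correlation close to 1 removes most of the variance in the search direction. When the low-fidelity mean is itself estimated from \(N_l\) samples, the reduction is smaller, as the proof accounts for.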

Cite this article

De, S., Maute, K. & Doostan, A. Bi-fidelity stochastic gradient descent for structural optimization under uncertainty. Comput Mech 66, 745–771 (2020). https://doi.org/10.1007/s00466-020-01870-w

DOI: https://doi.org/10.1007/s00466-020-01870-w