Abstract

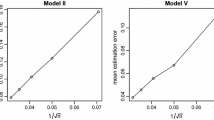

Recently, Yoo (Statistics 50:1086–1099, 2016) defined an informative predictor subspace that contains the central subspace. The method for estimating the informative predictor subspace does not require any of the conditions usually assumed in sufficient dimension reduction methodologies. However, like sliced inverse regression (Li in J Am Stat Assoc 86:316–342, 1991) and sliced average variance estimation (Cook and Weisberg in J Am Stat Assoc 86:328–332, 1991), its non-asymptotic estimation behavior is sensitive to how the predictors and response are categorized. This paper develops an estimation approach that is robust to the choice of categorization. To this end, sample kernel matrices are combined in two ways. Numerical studies and a real data analysis are presented to confirm the potential usefulness of the proposed approach in practice.

References

An, H., Won, S., & Yoo, J. K. (2017). Fused sliced inverse regression in survival analysis. Journal of the Korean Statistical Society, 46, 623–628.

Brown, P. J., Fearn, T., & Vannucci, M. (2001). Bayesian wavelet regression on curves with application to a spectroscopic calibration problem. Journal of the American Statistical Association, 96, 398–408.

Cook, R. D. (1998a). Principal Hessian directions revisited. Journal of the American Statistical Association, 93, 84–100.

Cook, R. D. (1998b). Regression graphics: Ideas for studying regressions through graphics. New York: Wiley.

Cook, R. D., & Weisberg, S. (1991). Discussion of Sliced inverse regression for dimension reduction. Journal of the American Statistical Association, 86, 328–332.

Cook, R. D., & Zhang, X. (2014). Fused estimators of the central subspace in sufficient dimension reduction. Journal of the American Statistical Association, 109, 815–827.

Li, B., & Dong, Y. (2009). Dimension reduction for nonelliptically distributed predictors. Annals of Statistics, 37, 1272–1298.

Li, K. C. (1991). Sliced inverse regression for dimension reduction. Journal of the American Statistical Association, 86, 316–342.

Li, L., Cook, R. D., & Nachtsheim, C. J. (2004). Cluster-based estimation for sufficient dimension reduction. Computational Statistics & Data Analysis, 47, 175–193.

Weisberg, S. (2002). Dimension reduction regression in R. Journal of Statistical Software, 7, 1–22.

Yoo, J. K. (2016). Sufficient dimension reduction through informative predictor subspace. Statistics, 50, 1086–1099.

Yoo, J. K., & Im, Y. (2014). Multivariate seeded dimension reduction. Journal of the Korean Statistical Society, 43, 559–566.

Acknowledgements

For Jae Keun Yoo, this work was supported by the Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Korean Ministry of Education (NRF-2019R1F1A1050715/2019R1A6A1A11051177).

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Um, H.Y., Yoo, J.K. Fused clustering mean estimation of central subspace. J. Korean Stat. Soc. 49, 350–363 (2020). https://doi.org/10.1007/s42952-019-00015-x

DOI: https://doi.org/10.1007/s42952-019-00015-x

Keywords

- Clustering mean method

- Fused estimation

- Informative predictor subspace

- K-means clustering

- Sufficient dimension reduction