Abstract

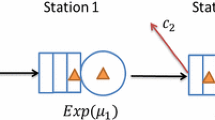

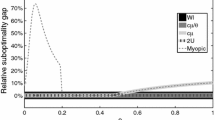

Motivated by service systems, such as telephone call centers and emergency departments, we consider admission control for a two-class multi-server loss system with periodically varying parameters and customers who may abandon from service. Assuming mild conditions for the parameters, a dynamic programming formulation is developed. We show that under the infinite horizon discounted problem, there exists an optimal threshold policy and provide conditions for a customer class to be preferred for each fixed time, extending stationary results to the non-stationary setting. We approximate the non-stationary problem by discretizing the time horizon into equally spaced intervals and examine how policies for this approximation change as a function of time and parameters numerically. We compare the performance of these approximations with several admission policies used in practice in a discrete-event simulation study. We show that simpler admission policies that ignore non-stationarity or abandonments lead to significant losses in rewards.

Similar content being viewed by others

References

Altman, E., Jiménez, T., Koole, G.: On optimal call admission control in resource-sharing system. IEEE Trans. Commun. 49(9), 1659–1668 (2001)

Blackwell, D.: Discrete dynamic programming. Ann. Math. 33, 719–726 (1962)

Buyukkoc, C., Varaiya, P., Walrand, J.: The \(c\mu \)-rule revisited. Adv. Appl. Probab. 17(1), 237–238 (1985)

Chavis, J.T., Cochran, A.L., Kocher, K.E., Washington, V.N., Zayas-Caban, G.: A simulation model of patient flow through the emergency department to determine the impact of a short stay unit on hospital congestion. In: Roeder, T.M.K., Frazier, P.I., Szechtman, R., Zhou, E., Huschka, T., Chick, S.E. (eds.) Proceedings of the 2016 Winter Simulation Conference, pp. 1982–1993. Institute of Electrical and Electronics Engineers Inc, Piscataway, NJ (2016)

Cudina, M., Ramanan, K.: Asymptotically optimal controls for time-inhomogeneous networks. SIAM J. Control Optim. 49(2), 611–645 (2011)

Feinberg, E.A., Reiman, M.I.: Optimality of randomized trunk reservation. Probab. Eng. Inf. Sci. 8(04), 463–489 (1994)

Fischer, W., Meier-Hellstern, K.: The Markov-modulated Poisson process (MMPP) cookbook. Perform. Eval. 18(2), 149–171 (1993)

Fu, M.C., Marcus, S.I., Wang, I.-J.: Monotone optimal policies for a transient queueing staffing problem. Oper. Res. 48(2), 327–331 (2000)

Green, L., Kolesar, P.: The pointwise stationary approximation for queues with nonstationary arrivals. Manag. Sci. 37(1), 84–97 (1991)

Green, L.V., Kolesar, P.J.: On the accuracy of the simple peak hour approximation for Markovian queues. Manag. Sci. 41(8), 1353–1370 (1995)

Green, L.V., Kolesar, P.J.: The lagged PSA for estimating peak congestion in multiserver Markovian queues with periodic arrival rates. Manag. Sci. 43(1), 80–87 (1997)

Green, L.V., Kolesar, P.J.: A note on approximating peak congestion in \(M_t/G/\infty \) queues with sinusoidal arrivals. Manag. Sci. 44(11–part–2), S137–S144 (1998)

Green, L.V., Kolesar, P.J., Soares, J.: Improving the SIPP approach for staffing service systems that have cyclic demands. Oper. Res. 49(4), 549–564 (2001)

Hampshire, R.C., Massey, W.A.: Dynamic Optimization with Applications to Dynamic Rate Queues. TUTORIALS in Operations Research, pp. 210–247. INFORMS Society, Catonsville (2010)

Hordijk, A., Puterman, M.L.: On the convergence of policy iteration in finite state undiscounted Markov decision processes: the unichain case. Math. Oper. Res. 12(1), 163–176 (1987)

Ingolfsson, A., Akhmetshina, E., Budge, S., Li, Y., Wu, X.: A survey and experimental comparison of service-level-approximation methods for nonstationary M (t)/M/s (t) queueing systems with exhaustive discipline. INFORMS J. Comput. 19(2), 201–214 (2007)

Jain, A., Elmaraghy, H.: Production scheduling/rescheduling in flexible manufacturing. Int. J. Prod. Res. 35(1), 281–309 (1997)

Jaśkiewicz, A.: A fixed point approach to solve the average cost optimality equation for semi-Markov decision processes with Feller transition probabilities. Commun. Stat. Theory Methods 36(14), 2559–2575 (2007)

Kim, S.-H., Whitt, W.: Are call center and hospital arrivals well modeled by nonhomogeneous Poisson processes? Manuf. Serv. Oper. Manag. 16(3), 464–480 (2014)

Koole, G.: Structural results for the control of queueing systems using event-based dynamic programming. Queueing Syst. 30(3–4), 323–339 (1998)

Koole, G., Mandelbaum, A.: Queueing models of call centers: an introduction. Ann. Oper. Res. 113(1–4), 41–59 (2002)

Lewis, M.E., Ayhan, H., Foley, R.D.: Bias optimal admission control policies for a multiclass nonstationary queueing system. J. Appl. Probab. 39, 20–37 (2002)

Lewis, P.A.: Some results on tests for Poisson processes. Biometrika 52, 67–77 (1965)

Lippman, S.: Applying a new device in the optimization of exponential queuing systems. Oper. Res. 23(4), 687–710 (1975)

Martin-Löf, A.: Optimal control of a continuous-time Markov chain with periodic transition probabilities. Oper. Res. 15(5), 872–881 (1967)

Miller, B.L.: A queueing reward system with several customer classes. Manag. Sci. 16(3), 234–245 (1969)

Örmeci, E.L., Burnetas, A., van der Wal, J.: Admission policies for a two class loss system. Stoch. Model 17(4), 513–539 (2001)

Örmeci, E.L., van der Wal, J.: Admission policies for a two class loss system with general interarrival times. Stoch. Models 22(1), 37–53 (2006)

Puterman, M.L.: Markov Decision Processes: Discrete Stochastic Dynamic Programming. Wiley Series in Probability and Mathematical Statistics. Wiley, New York (1994)

Puterman, M.L., Shin, M.C.: Modified policy iteration algorithms for discounted Markov decision problems. Manag. Sci. 24(11), 1127–1137 (1978)

Roubos, A., Bhulai, S., Koole, G.: Flexible staffing for call centers with non-stationary arrival rates In: Markov decision processes in practice, pp. 487–503. Springer, Cham (2017)

Roubos, D., Bhulai, S.: Approximate dynamic programming techniques for the control of time-varying queuing systems applied to call centers with abandonments and retrials. Probab. Eng. Inf. Sci. 24(01), 27–45 (2010)

Rowe, B.H., Channan, P., Bullard, M., Blitz, S., Saunders, L.D., Rosychuk, R.J., Lari, H., Craig, W.R., Holroyd, B.R.: Characteristics of patients who leave emergency departments without being seen. Acad. Emerg. Med. 13(8), 848–852 (2006)

Savin, S.V., Cohen, M.A., Gans, N., Katalan, Z.: Capacity management in rental businesses with two customer bases. Oper. Res. 53(4), 617–631 (2005)

Stidham Jr., S.: Optimal control of admission to a queueing system. IEEE Trans. Autom. Control 30(8), 705–713 (1985)

Wakuta, K.: Arbitrary state semi-Markov decision processes. Optimization 18(3), 447–454 (1987)

Wei, Q., Guo, X.: New average optimality conditions for semi-Markov decision processes in Borel spaces. J. Optim. Theory Appl. 153(3), 709–732 (2012)

Yoon, S., Lewis, M.E.: Optimal pricing and admission control in a queueing system with periodically varying parameters. Queueing Syst. 47(3), 177–199 (2004)

Zayas-Cabán, G., Xie, J., Green, L.V., Lewis, M.E.: Dynamic control of a tandem system with abandonments. Queueing Syst. 84(3–4), 279–293 (2016)

Acknowledgements

We would like to thank the Associate Editor and the two referees for several and insightful comments.

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

A Appendix

A Appendix

This section is dedicated to showing that the discounted reward optimality equations have a solution and that these solutions can be computed via successive approximations. The result is an application of Theorems 4.1 and 4.2 in Wakuta [36]. There are minor adjustments to the proofs since the distribution of the state change and the time between decision points is not separable. The following notation and definitions are used throughout this Appendix.

Let \(\mathbb {X}\) and \(\mathbb {A}\), respectively, denote the state and action space for a generic semi-Markov decision process (SMDP).

Let the Euclidean distance between \(\ell = \left( \left( i,j,k,z\right) ,a\right) \), \(\ell ' = \left( \left( i',j',k',z'\right) ,a'\right) \in \text {Gr}(\mathbb {A})\) be given by

$$\begin{aligned} \Vert \ell -\ell '\Vert&:= \sqrt{(i-i')^2 + (j-j')^2 + (k-k')^2 + (z-z')^2 + (a-a')^2}. \end{aligned}$$

Definition A1

The transition kernel \(Q(\cdot |x,a)\) is called strongly continuous provided the mapping \((x,a) \rightarrow \int _{x' \in \mathbb {X}} \! f(x') \, Q(\mathrm {d}x'|x,a)\) is continuous and bounded whenever f is measurable and bounded on \(\mathbb {X}\).

1.1 A.1 Discounted reward optimality equations (DROE)

In this section, we show that the DROE have a solution and that the solution can be computed via the (contraction) mappings defined in Sect. 4. Before doing so we note that because the rewards are bounded below, it suffices (and is in fact equivalent) to consider the case with nonnegative rewards. That is to say that adding the lower bound on rewards to all rewards does not change the optimal control. Let this lower bound be denoted L and, throughout this section, assume \(r(x,a) \ge 0\).

Lemma 2

The following hold:

- 1.

There exist a Borel measurable function w on \(\mathbb {X}\) with \(w(x) \ge 1, x \in \mathbb {X}\), a number \(\xi \) (\(0< \xi < 1\)), and a number \(M > 0\) such that

- (a)

for any \((x,a) \in Gr(\mathbb {A})\)

$$\begin{aligned} \Vert r(x,a)\Vert \le M w(x). \end{aligned}$$ - (b)

for any policy, the sequence of decision times \(\{t_n, n \ge 0\}\) are such that \(\limsup _{n \rightarrow \infty } t_n = \infty \) almost surely.

- (a)

- 2.

\(\mathbb {X}\) and \(\mathbb {A}\) are non-empty Borel subsets of a complete separable metric space.

- 3.

A(x) is a Borel measurable compact-valued multi-function from \(\mathbb {X}\) into \(\mathbb {A}\).

- 4.

The one-stage reward function r(x, a) is upper-semicontinuous on \(\mathbb {A}\) for each fixed \(x \in \mathbb {X}\).

- 5.

The transition kernel Q is strongly continuous.

We remark that it is usual that Statement 1(b) (see Proposition 3.1 of [36]) is proved under the assumption of the existence of a Lyapunov function (see inequality (3.2) in [36]).

Proof

Statement 1(a) holds with \(w(x) \equiv 1\) and for any M that maximizes the one-stage reward functions r(x, a) (below and above). For Statement 1(b), notice that if the initial state is (i, j, k, 0), the expected number of transitions before the dummy transition at time T is \({{\,\mathrm{\mathbb {E}}\,}}N(T) < \infty \) (in fact \(\varPsi T\)), where N(T) is a Poisson process of rate \(\varPsi \). This implies that \(N(T) < \infty \) almost surely. At time T, there is a renewal. Since there are deterministic renewals at times \((T, 2T, \ldots )\), we have that it requires an infinite time to see an infinite number of transitions (almost surely).

Statements 2, 3 and 4 hold by construction and by the finiteness of A(x) for each x. For Statement 5, it is enough to show that

is continuous for any bounded, real-valued measurable function f. To this end, fix \(\epsilon > 0\) and let \(x = (i,j,k,z), x' = (i',j',k',z') \in \mathbb {X}\) with \(z < z'\). Next, choose \(\delta < 1\) so that \(\Vert (x,a) - (x',a')\Vert < \delta \) implies that \(i = i', j = j', k = k'\) and \(a = a'\). It follows that

which simplifies to

Let \(M < \infty \) be such that \(\sup _{x \in \mathbb {X}} |f(x)| \le M < \infty \). The convexity of the function \(x^2\) for \(0< x < \infty \) implies that \(\Big ( \frac{f+g}{2} \Big )^2 \le \frac{1}{2} \big (f^2 + g^2 \big )\), and hence, that \((f+g)^2 \le 2f^2 + 2g^2\) for any two functions f, g. It follows that the previous expression is bounded above by

This last expression is bounded above by \(\sqrt{2} M (1 - e^{-(1+\alpha )(z'-z)})\). The continuity of \(e^{-x}\) on \(-\infty< x < \infty \) implies that we can choose \(\delta \) so that \(z'-z < \delta \) implies \(\sqrt{2} M (1 - e^{-(1+\alpha )(z'-z)}) < \epsilon \). This proves the last statement. \(\square \)

The previous lemma implies that Theorem 4.1 in [36] holds with some minor adjustments. We include the statement and proof of necessity (for the current model) for completeness. Sufficiency holds with almost no changes to that in [36] since A(x) is finite for each \(x \in \mathbb {X}\).

Theorem 3

A policy \(\pi \) is optimal if and only if its reward \(v^{\pi }_{\alpha }(x)\) satisfies the discounted reward optimality equations (see (4.1)).

Proof

Suppose \(v^{\pi '}_{\alpha }(x)\) satisfies the DROE. Fix \(n \ge 1\). Denote the history under a generic policy \(\pi = \{d_0, d_1, \ldots \}\) by

and note that

for any \(n \ge 2\). For ease of notation, for any fixed \(s \in \mathbb {X}\), let p(y|s, a) denote the probability of next entering state y given that the current state is s and action a is chosen. Let \(\{\tau _n, n \ge 1\}\) be the inter-event times so that \(\tau _n = t_n - t_{n-1}\). Consider the second term above,

where we have denoted by t(y) the discount time associated with next transition and the last inequality holds by taking the maximum in the DROE and the assumption that \(v_{\alpha }^{\pi '}\) satisfies it. Thus, using (8.2) yields

The first two terms in the sum telescope, yielding the following inequality:

Statement 1(a) of Lemma 2 implies that \(v_{\alpha }^{\pi '}\) is bounded. The Dominated Convergence Theorem and Statement 1(b) of Lemma 2 yield that \({{\,\mathrm{\mathbb {E}}\,}}_{\pi ,x}e^{-\alpha t_{n}} v_{\alpha }^{\pi '}(x_{n}) \rightarrow 0\) as \(n \rightarrow \infty \). The last term converges to the total discounted reward of \(\pi \) so that we have \(v_{\alpha }^{\pi '} \ge v_{\alpha }^{\pi }\), which yields the result. \(\square \)

We conclude this section by stating Lemma 5.7 and Theorem 5.8 of [36], which follow in the same manner.

Theorem 4

The following hold for the discounted reward model:

- 1.

The mapping \(U_{n, \alpha }\) maps the set of bounded functions on \(\mathbb {X}\) back into the same set and is a contraction mapping. This implies that \(v_{\alpha }\) is the unique solution to the DROE.

- 2.

There exists a Borel measurable function f that achieves the maximum in the DROE.

- 3.

There exists an optimal stationary policy.

Rights and permissions

About this article

Cite this article

Zayas-Cabán, G., Lewis, M.E. Admission control in a two-class loss system with periodically varying parameters and abandonments. Queueing Syst 94, 175–210 (2020). https://doi.org/10.1007/s11134-019-09620-3

Received:

Revised:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11134-019-09620-3