Abstract

Modern problems in AI or in numerical analysis require nonsmooth approaches with a flexible calculus. We introduce generalized derivatives called conservative fields, for which we develop a calculus and provide representation formulas. Functions having a conservative field are called path differentiable: convex, concave, Clarke regular, and all semialgebraic Lipschitz continuous functions are path differentiable. Using Whitney stratification techniques for semialgebraic and definable sets, our model provides variational formulas for nonsmooth automatic differentiation oracles, such as the well-known backpropagation algorithm in deep learning. Our differential model is applied to establish the convergence in values of nonsmooth stochastic gradient methods as they are implemented in practice.
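To make the phrase "nonsmooth automatic differentiation oracle" concrete, here is a minimal sketch in plain Python. It is an illustration only and is not taken from the article. The function names (`relu_selection`, `identity_via_relu`, `chain_rule_derivative`) and the parameter `s` are hypothetical. The sketch writes the identity map as relu(x) - relu(-x) and applies the chain rule formally, as a backpropagation routine would, with an arbitrary value `s` in [0, 1] chosen for the derivative of relu at 0.

```python
def relu(x):
    """Rectified linear unit, nonsmooth at 0."""
    return max(x, 0.0)


def relu_selection(x, s):
    """One element of the Clarke subgradient of relu at x.

    The subgradient is {1} for x > 0, {0} for x < 0 and [0, 1] at 0.
    The value s in [0, 1] used at 0 is an assumption of this sketch;
    software libraries make their own fixed choice.
    """
    if x > 0:
        return 1.0
    if x < 0:
        return 0.0
    return s


def identity_via_relu(x):
    """The identity function, written with two relu calls."""
    return relu(x) - relu(-x)


def chain_rule_derivative(x, s):
    """Formal chain rule applied to relu(x) - relu(-x).

    The inner map x -> -x contributes the factor -1, so the second
    term is -(-1) * relu'(-x).
    """
    return relu_selection(x, s) - (-1.0) * relu_selection(-x, s)


if __name__ == "__main__":
    for s in (0.0, 0.5, 1.0):
        print(s, chain_rule_derivative(0.0, s))
```

Away from 0 the formal derivative equals 1, the true derivative of the identity. At 0 it equals 2s, which lies in the Clarke subgradient {1} only when s = 0.5. The output is wrong at a single point only, which is the kind of behaviour a conservative field is meant to capture.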

Notes

Although all results we provide are generalizable to complete Riemannian manifolds.

Which is possible thanks to Lemma 1.

Valadier’s terminology finds here a surprising justification, since “saine”, healthy in English, is chosen as an antonym to “pathological”.

We only consider embedded submanifolds.

In [12] the authors assume f to be arbitrary and obtain a similar result; for simplicity, we restrict ourselves to the Lipschitz case.

From a practical point of view, qualification is hard to enforce or even check.

Which we shall not define formally since it is not essential to our purpose.

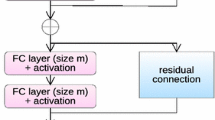

If a single \(\sigma :{\mathbb {R}}\rightarrow {\mathbb {R}}\) is applied to each coordinate of each layer, this amounts to considering a conservative field for \(\sigma \), for example its Clarke subgradient.

References

Abadi, M., Barham, P., Chen, J., Chen, Z., Davis, A., Dean, J., Devin, M., Ghemawat, S., Irving, G., Isard, M., Kudlur, M., Levenberg, J., Monga, R., Moore, S., Murray, D., Steiner, B., Tucker, P., Vasudevan, V., Warden, P., Wicke, M., Yu, Y., Zheng, X.: Tensorflow: a system for large-scale machine learning. In: Symposium on Operating Systems Design and Implementation, OSDI, vol. 6, pp. 265–283 (2016)

Adil, S.: Opérateurs monotones aléatoires et application à l’optimisation stochastique. PhD Thesis, Paris Saclay (2018)

Aliprantis, C.D., Border, K.C.: Infinite Dimensional Analysis, 3rd edn. Springer, Berlin (2005)

Attouch, H., Goudou, X., Redont, P.: The heavy ball with friction method, I. The continuous dynamical system: global exploration of the local minima of a real-valued function by asymptotic analysis of a dissipative dynamical system. Commun. Contemp. Math. 2(1), 1–34 (2000)

Aubin, J.P., Cellina, A.: Differential Inclusions: Set-valued Maps and Viability Theory, vol. 264. Springer, Berlin (1984)

Aubin, J.-P., Frankowska, H.: Set-Valued Analysis. Springer, Berlin (2009)

Barakat, A., Bianchi, P.: Convergence and Dynamical Behavior of the Adam Algorithm for Non Convex Stochastic Optimization (2018). arXiv preprint arXiv:1810.02263

Baydin, A., Pearlmutter, B., Radul, A., Siskind, J.: Automatic differentiation in machine learning: a survey. J. Mach. Learn. Res. 18(1), 5595–5637 (2018)

Benaïm, M.: Dynamics of stochastic approximation algorithms. In: Séminaire de Probabilités XXXIII, pp. 1–68. Springer, Berlin, Heidelberg (1999)

Benaïm, M., Hofbauer, J., Sorin, S.: Stochastic approximations and differential inclusions. SIAM J. Control Optim. 44(1), 328–348 (2005)

Bianchi, P., Hachem, W., Salim, A.: Constant step stochastic approximations involving differential inclusions: stability, long-run convergence and applications. Stochastics 91(2), 288–320 (2019)

Bolte, J., Daniilidis, A., Lewis, A., Shiota, M.: Clarke subgradients of stratifiable functions. SIAM J. Optim. 18(2), 556–572 (2007)

Bolte, J., Sabach, S., Teboulle, M.: Proximal alternating linearized minimization for nonconvex and nonsmooth problems. Math. Program. 146(1–2), 459–494 (2014)

Borkar, V.: Stochastic Approximation: A Dynamical Systems Viewpoint, vol. 48. Springer, Berlin (2009)

Borwein, J., Lewis, A.S.: Convex Analysis and Nonlinear Optimization: Theory and Examples. Springer, Berlin (2010)

Borwein, J.M., Moors, W.B.: Essentially smooth Lipschitz functions. J. Funct. Anal. 149(2), 305–351 (1997)

Borwein, J.M., Moors, W.B.: A chain rule for essentially smooth Lipschitz functions. SIAM J. Optim. 8(2), 300–308 (1998)

Borwein, J., Moors, W., Wang, X.: Generalized subdifferentials: a Baire categorical approach. Trans. Am. Math. Soc. 353(10), 3875–3893 (2001)

Bottou, L., Bousquet, O.: The tradeoffs of large scale learning. In: Platt, J.C., Koller, D., Singer, Y., Roweis, S.T. (eds.) Advances in Neural Information Processing Systems, vol. 20, pp. 161–168. Curran Associates, Inc. (2008)

Bottou, L., Curtis, F.E., Nocedal, J.: Optimization methods for large-scale machine learning. SIAM Rev. 60(2), 223–311 (2018)

Castera, C., Bolte, J., Févotte, C., Pauwels, E.: An inertial Newton algorithm for deep learning (2019). arXiv preprint arXiv:1905.12278

Clarke, F.H.: Optimization and Nonsmooth Analysis. SIAM, Philadelphia (1983)

Chizat, L., Bach, F.: On the global convergence of gradient descent for over-parameterized models using optimal transport. In: Bengio, S., Wallach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., Garnett, R. (eds.) Advances in Neural Information Processing Systems, vol. 31, pp. 3036–3046. Curran Associates, Inc. (2018)

Corliss, G., Faure, C., Griewank, A., Hascoet, L., Naumann, U. (eds.): Automatic Differentiation of Algorithms: From Simulation to Optimization. Springer, Berlin (2002)

Correa, R., Jofre, A.: Tangentially continuous directional derivatives in nonsmooth analysis. J. Optim. Theory Appl. 61(1), 1–21 (1989)

Coste, M.: An Introduction to O-Minimal Geometry. RAAG notes, Institut de Recherche Mathématique de Rennes, p. 81 (1999)

Davis, D., Drusvyatskiy, D., Kakade, S., Lee, J.D.: Stochastic subgradient method converges on tame functions. Found. Comput. Math. 20(1), 119–154 (2020)

Evans, L.C., Gariepy, R.F.: Measure Theory and Fine Properties of Functions, Revised edn. Chapman and Hall/CRC, London (2015)

Glorot, X., Bordes, A., Bengio, Y.: Deep sparse rectifier neural networks. In: Proceedings of the Fourteenth International Conference on Artificial Intelligence and Statistics, pp. 315–323 (2011)

Griewank, A., Walther, A.: Evaluating Derivatives: Principles and Techniques of Algorithmic Differentiation, vol. 105. SIAM, Philadelphia (2008)

Griewank, A.: On stable piecewise linearization and generalized algorithmic differentiation. Optim. Methods Softw. 28(6), 1139–1178 (2013)

Griewank, A., Walther, A., Fiege, S., Bosse, T.: On Lipschitz optimization based on gray-box piecewise linearization. Math. Program. 158(1–2), 383–415 (2016)

Ioffe, A.D.: Nonsmooth analysis: differential calculus of nondifferentiable mappings. Trans. Am. Math. Soc. 266(1), 1–56 (1981)

Ioffe, A.D.: Variational Analysis of Regular Mappings. Springer Monographs in Mathematics. Springer, Cham (2017)

Kakade, S.M., Lee, J.D.: Provably correct automatic sub-differentiation for qualified programs. In: Bengio, S., Wallach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., Garnett, R. (eds.) Advances in Neural Information Processing Systems, vol. 31, pp. 7125–7135. Curran Associates, Inc. (2018)

Kurdyka, K.: On gradients of functions definable in o-minimal structures. Ann. l’inst. Fourier 48(3), 769–783 (1998)

Kurdyka, K., Mostowski, T., Parusinski, A.: Proof of the gradient conjecture of R. Thom. Ann. Math. 152(3), 763–792 (2000)

Kushner, H., Yin, G.G.: Stochastic Approximation and Recursive Algorithms and Applications, vol. 35. Springer, Berlin (2003)

Le Cun, Y., Bengio, Y., Hinton, G.: Deep learning. Nature 521(7553), 436–444 (2015)

Ljung, L.: Analysis of recursive stochastic algorithms. IEEE Trans. Autom. Control 22(4), 551–575 (1977)

Majewski, S., Miasojedow, B., Moulines, E.: Analysis of nonsmooth stochastic approximation: the differential inclusion approach (2018). arXiv preprint arXiv:1805.01916

Mohammadi, B., Pironneau, O.: Applied Shape Optimization for Fluids. Oxford University Press, Oxford (2010)

Moulines, E., Bach, F.R.: Non-asymptotic analysis of stochastic approximation algorithms for machine learning. In: Shawe-Taylor, J., Zemel, R.S., Bartlett, P.L., Pereira, F., Weinberger, K.Q. (eds.) Advances in Neural Information Processing Systems, vol. 24, pp. 451–459. Curran Associates, Inc. (2011)

Moreau, J.-J.: Fonctionnelles sous-différentiables. Séminaire Jean Leray (1963)

Mordukhovich, B.S.: Variational Analysis and Generalized Differentiation I: Basic Theory. Springer, Berlin (2006)

Paszke, A., Gross, S., Chintala, S., Chanan, G., Yang, E., DeVito, Z., Lin, Z., Desmaison, A., Antiga, L., Lerer, A.: Automatic differentiation in Pytorch. In: NIPS Workshops (2017)

Robbins, H., Monro, S.: A stochastic approximation method. Ann. Math. Stat. 22, 400–407 (1951)

Rockafellar, R.T.: Convex functions and dual extremum problems. Doctoral dissertation, Harvard University (1963)

Rockafellar, R.: On the maximal monotonicity of subdifferential mappings. Pacific J. Math. 33(1), 209–216 (1970)

Rockafellar, R.T., Wets, R.J.B.: Variational Analysis. Springer, Berlin (1998)

Rumelhart, D.E., Hinton, G.E., Williams, R.J.: Learning representations by back-propagating errors. Nature 323, 533–536 (1986)

Speelpenning, B.: Compiling fast partial derivatives of functions given by algorithms (No. COO-2383-0063; UILU-ENG-80-1702; UIUCDCS-R-80-1002). Illinois Univ., Urbana (USA). Dept. of Computer Science (1980)

Thibault, L., Zagrodny, D.: Integration of subdifferentials of lower semicontinuous functions on Banach spaces. J. Math. Anal. Appl. 189(1), 33–58 (1995)

Thibault, L., Zlateva, N.: Integrability of subdifferentials of directionally Lipschitz functions. In: Proceedings of the American Mathematical Society, pp. 2939–2948 (2005)

Valadier, M.: Entraînement unilatéral, lignes de descente, fonctions lipschitziennes non pathologiques. C. R. l’Acad. Sci. 308, 241–244 (1989)

van den Dries, L., Miller, C.: Geometric categories and o-minimal structures. Duke Math. J. 84(2), 497–540 (1996)

Wang, X.: Pathological Lipschitz functions in \({\mathbb{R}}^n\). Master thesis, Simon Fraser University (1995)

Acknowledgements

The authors acknowledge the support of AI Interdisciplinary Institute ANITI funding, through the French “Investing for the Future - PIA3” program under the Grant Agreement ANR-19-PI3A-0004, Air Force Office of Scientific Research, Air Force Material Command, USAF, under Grant Numbers FA9550-19-1-7026, FA9550-18-1-0226, and ANR MasDol. J. Bolte acknowledges the support of ANR Chess, Grant ANR-17-EURE-0010 and ANR OMS. The authors would like to thank Lionel Thibault and Sylvain Sorin for a careful reading of an early version of this work, and Gersende Fort for her very valuable comments and discussions on stochastic approximation.

About this article

Cite this article

Bolte, J., Pauwels, E. Conservative set valued fields, automatic differentiation, stochastic gradient methods and deep learning. Math. Program. 188, 19–51 (2021). https://doi.org/10.1007/s10107-020-01501-5

DOI: https://doi.org/10.1007/s10107-020-01501-5

Keywords

- Deep learning

- Automatic differentiation

- Backpropagation algorithm

- Nonsmooth stochastic optimization

- Definable sets

- o-Minimal structures

- Stochastic gradient

- Clarke subdifferential

- First order methods