Abstract

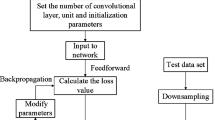

The huge amount of time series data across domains such as engineering, biomedicine and finance demands efficient methods for time series classification. Classifying both univariate and multivariate time series with a single architecture is a very difficult task. In this work, a bio-inspired convolutional spiking neural network (CSNN) is proposed for both univariate and multivariate time series. To this end, we first develop a simple transformation that converts raw time series sequences into matrices. The CSNN is a three-stage framework that includes convolutional feature extraction, spike encoding using soft leaky integrate-and-fire (Soft-LIF) neurons, and classification. Because the generated spikes are differentiable, the learning algorithm for the CSNN uses error backpropagation with cyclical learning rates (CLR) and the RMSprop optimizer. Additionally, validation-based stopping rules are employed to counter overfitting; they also provide a set of parameters associated with low validation-set loss. To demonstrate the accuracy and robustness of the proposed CSNN model, we perform experiments on the University of California, Riverside (UCR) univariate datasets and the University of East Anglia (UEA) multivariate datasets. Moreover, we conduct a comparative empirical performance evaluation against benchmark methods and recent deep networks proposed for time series classification. Our results reveal that the proposed CSNN improves on the baseline methods, achieving higher accuracy on both univariate and multivariate datasets. CLR with the RMSprop optimizer is shown to achieve faster convergence, although CLR and adaptive rates remain competitive with each other. In addition, we address optimal model selection and study the effects of different factors on the performance of the proposed CSNN.
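To make the cyclical learning rate idea concrete, the following is a minimal sketch of the triangular CLR policy from Smith (2017), on which the training scheme named in the abstract is based. The function name `triangular_clr` and the default values for `base_lr`, `max_lr` and `step_size` are illustrative assumptions, not the settings used for the CSNN.

```python
import math


def triangular_clr(iteration, base_lr=1e-4, max_lr=1e-2, step_size=100):
    """Triangular cyclical learning rate (Smith, 2017).

    The rate rises linearly from base_lr to max_lr over step_size
    iterations, then falls back to base_lr over the next step_size
    iterations, and the cycle repeats.
    All default values here are hypothetical, chosen for illustration.
    """
    # Index of the current cycle (1-based); one cycle spans 2 * step_size.
    cycle = math.floor(1 + iteration / (2 * step_size))
    # Normalised distance from the peak of the current cycle, in [0, 1].
    x = abs(iteration / step_size - 2 * cycle + 1)
    return base_lr + (max_lr - base_lr) * max(0.0, 1.0 - x)


if __name__ == "__main__":
    # Print the rate at the start, quarter, half and end of the first cycle.
    for it in (0, 50, 100, 200):
        print(it, triangular_clr(it))
```

In a training loop, the returned value would be assigned to the optimizer's learning rate (RMSprop in the abstract) before each parameter update.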

Acknowledgments

The authors are very grateful to the Indian Institute of Information Technology Allahabad for providing the required resources. We would also like to thank the UCR archive for allowing access to the datasets used in this research.

Cite this article

Gautam, A., Singh, V. CLR-based deep convolutional spiking neural network with validation based stopping for time series classification. Appl Intell 50, 830–848 (2020). https://doi.org/10.1007/s10489-019-01552-y

DOI: https://doi.org/10.1007/s10489-019-01552-y