Abstract

We obtain estimation and excess risk bounds for Empirical Risk Minimizers (ERM) and minmax Median-Of-Means (MOM) estimators based on loss functions that are both Lipschitz and convex. Results for the ERM are derived under weak assumptions on the outputs and subgaussian assumptions on the design, as in Alquier et al. (Estimation bounds and sharp oracle inequalities of regularized procedures with Lipschitz loss functions. arXiv:1702.01402, 2017). The difference with Alquier et al. (2017) is that the global Bernstein condition of that paper is relaxed here into a local assumption. We also obtain estimation and excess risk bounds for minmax MOM estimators under similar assumptions on the output and only moment assumptions on the design. Moreover, the dataset may also contain outliers in both input and output variables without deteriorating the performance of the minmax MOM estimators. Unlike alternatives based on MOM’s principle (Lecué and Lerasle in Ann Stat, 2017; Lugosi and Mendelson in JEMS, 2016), the analysis of minmax MOM estimators is not based on the small ball assumption (SBA) of Koltchinskii and Mendelson (Int Math Res Not IMRN 23:12991–13008, 2015). In particular, the basic example of nonparametric statistics where the learning class is the linear span of localized bases, which does not satisfy SBA (Saumard in Bernoulli 24(3):2176–2203, 2018), can now be handled. Finally, minmax MOM estimators are analysed in a setting where the local Bernstein condition is also dropped. They are shown to achieve excess risk bounds with exponentially large probability under minimal assumptions ensuring only the existence of all objects.


Notes

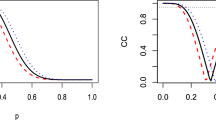

All figures can be reproduced from the code available at https://github.com/lecueguillaume/MOMpower.

References

Alon, N., Matias, Y., Szegedy, M.: The space complexity of approximating the frequency moments. J. Comput. Syst. Sci. 58(1, part 2), 137–147 (1999). Twenty-eighth Annual ACM Symposium on the Theory of Computing (Philadelphia, PA, 1996)

Alquier, P., Cottet, V., Lecué, G.: Estimation bounds and sharp oracle inequalities of regularized procedures with Lipschitz loss functions (2017). arXiv:1702.01402

Audibert, J.-Y., Catoni, O.: Robust linear least squares regression. Ann. Stat. 39(5), 2766–2794 (2011)

Bach, F., Jenatton, R., Mairal, J., Obozinski, G., et al.: Convex optimization with sparsity-inducing norms. Optim. Mach. Learn. 5, 19–53 (2011)

Baraud, Y., Birgé, L., Sart, M.: A new method for estimation and model selection: \(\rho \)-estimation. Invent. Math. 207(2), 425–517 (2017)

Bartlett, P.L., Bousquet, O., Mendelson, S.: Local Rademacher complexities. Ann. Stat. 33(4), 1497–1537 (2005)

Bartlett, P.L., Bousquet, O., Mendelson, S., et al.: Local Rademacher complexities. Ann. Stat. 33(4), 1497–1537 (2005)

Bartlett, P.L., Mendelson, S.: Empirical minimization. Probab. Theory Relat. Fields 135(3), 311–334 (2006)

Birgé, L.: Stabilité et instabilité du risque minimax pour des variables indépendantes équidistribuées. Ann. Inst. H. Poincaré Probab. Stat. 20(3), 201–223 (1984)

Boucheron, S., Bousquet, O., Lugosi, G.: Theory of classification: a survey of some recent advances. ESAIM Probab. Stat. 9, 323–375 (2005)

Boucheron, S., Lugosi, G., Massart, P.: Concentration Inequalities. Oxford University Press, Oxford (2013). A nonasymptotic theory of independence, with a foreword by Michel Ledoux

Bubeck, S.: Convex optimization: algorithms and complexity. Found. Trends® Mach. Learn. 8(3–4), 231–357 (2015)

Catoni, O.: Challenging the empirical mean and empirical variance: a deviation study. Ann. Inst. Henri Poincaré Probab. Stat. 48(4), 1148–1185 (2012)

Devroye, L., Lerasle, M., Lugosi, G., Oliveira, R.I., et al.: Sub-Gaussian mean estimators. Ann. Stat. 44(6), 2695–2725 (2016)

Elsener, A., van de Geer, S.: Robust low-rank matrix estimation (2016). arXiv:1603.09071

Han, Q., Wellner, J.A.: Convergence rates of least squares regression estimators with heavy-tailed errors. Ann. Stat. 47(4), 2286–2319 (2019)

Huber, P.J., Ronchetti, E.: Robust statistics. In: International Encyclopedia of Statistical Science, pp. 1248–1251. Springer, New York (2011)

Jerrum, M.R., Valiant, L.G., Vazirani, V.V.: Random generation of combinatorial structures from a uniform distribution. Theor. Comput. Sci. 43(2–3), 169–188 (1986)

Koltchinskii, V.: Local Rademacher complexities and oracle inequalities in risk minimization. Ann. Stat. 34(6), 2593–2656 (2006)

Koltchinskii, V.: Empirical and Rademacher processes. In: Oracle Inequalities in Empirical Risk Minimization and Sparse Recovery Problems, pp. 17–32. Springer, New York (2011)

Koltchinskii, V.: Oracle inequalities in empirical risk minimization and sparse recovery problems, volume 2033 of Lecture Notes in Mathematics. Springer, Heidelberg (2011). Lectures from the 38th Probability Summer School held in Saint-Flour, 2008, École d’Été de Probabilités de Saint-Flour (Saint-Flour Probability Summer School)

Koltchinskii, V., Mendelson, S.: Bounding the smallest singular value of a random matrix without concentration. Int. Math. Res. Not. IMRN 23, 12991–13008 (2015)

Lecué, G., Lerasle, M.: Learning from MOM’s principles: Le Cam’s approach. Stochast. Process. Appl. (2018). arXiv:1701.01961

Lecué, G., Lerasle, M.: Robust machine learning by median-of-means: theory and practice. Ann. Stat. (2017). arXiv:1711.10306

Lecué, G., Mendelson, S.: Performance of empirical risk minimization in linear aggregation. Bernoulli 22(3), 1520–1534 (2016)

Lecué, G., Lerasle, M., Mathieu, T.: Robust classification via MOM minimization (2018). arXiv:1808.03106

Ledoux, M.: The Concentration of Measure Phenomenon, Volume 89 of Mathematical Surveys and Monographs. American Mathematical Society, Providence (2001)

Ledoux, M., Talagrand, M.: Probability in Banach Spaces: Isoperimetry and Processes. Springer, New York (2013)

Lugosi, G., Mendelson, S.: Risk minimization by median-of-means tournaments. J. Eur. Math. Soc. (2019). arXiv:1608.00757

Lugosi, G., Mendelson, S.: Regularization, sparse recovery, and median-of-means tournaments (2017). arXiv:1701.04112

Lugosi, G., Mendelson, S.: Sub-gaussian estimators of the mean of a random vector (2017). To appear in Ann. Stat. arXiv:1702.00482

Mammen, E., Tsybakov, A.B.: Smooth discrimination analysis. Ann. Stat. 27(6), 1808–1829 (1999)

Mendelson, S.: Learning without concentration. In: Conference on Learning Theory, pp. 25–39 (2014)

Mendelson, S.: Learning without concentration. J. ACM 62(3), Art. 21, 25 (2015)

Mendelson, S.: On multiplier processes under weak moment assumptions. In: Geometric Aspects of Functional Analysis, Volume 2169 of Lecture Notes in Math., pp. 301–318. Springer, Cham (2017)

Mendelson, S., Pajor, A., Tomczak-Jaegermann, N.: Reconstruction and subgaussian operators in asymptotic geometric analysis. Geom. Funct. Anal. 17(4), 1248–1282 (2007)

Nemirovsky, A.S., Yudin, D.B.: Problem Complexity and Method Efficiency in Optimization. A Wiley-Interscience Publication. Wiley, New York (1983). Translated from the Russian and with a preface by E. R. Dawson, Wiley-Interscience Series in Discrete Mathematics

Pedregosa, F., Varoquaux, G., Gramfort, A., Michel, V., Thirion, B., Grisel, O., Blondel, M., Prettenhofer, P., Weiss, R., Dubourg, V., Vanderplas, J., Passos, A., Cournapeau, D., Brucher, M., Perrot, M., Duchesnay, E.: Scikit-learn: machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011)

Saumard, A.: On optimality of empirical risk minimization in linear aggregation. Bernoulli 24(3), 2176–2203 (2018)

Talagrand, M.: Upper and lower bounds for stochastic processes, volume 60 of Ergebnisse der Mathematik und ihrer Grenzgebiete. 3. Folge. A Series of Modern Surveys in Mathematics (Results in Mathematics and Related Areas. 3rd Series. A Series of Modern Surveys in Mathematics). Springer, Heidelberg (2014). Modern methods and classical problems

Tsybakov, A.B.: Optimal aggregation of classifiers in statistical learning. Ann. Stat. 32(1), 135–166 (2004)

van de Geer, S.: Estimation and Testing Under Sparsity, Volume 2159 of Lecture Notes in Mathematics. Springer, Cham (2016). Lecture notes from the 45th Probability Summer School held in Saint-Flour, 2015, École d’Été de Probabilités de Saint-Flour (Saint-Flour Probability Summer School)

Vapnik, V.: The Nature of Statistical Learning Theory. Springer, New York (1995)

Vapnik, V.: Statistical Learning Theory, vol. 1. Wiley, New York (1998)

Zhou, W.-X., Bose, K., Fan, J., Liu, H.: A new perspective on robust M-estimation: finite sample theory and applications to dependence-adjusted multiple testing. Ann. Stat. 46(5), 1904–1931 (2018). https://doi.org/10.1214/17-AOS1606


Appendices

Appendix A: Proof of Theorems 1, 2, 3 and 4

A.1 Proof of Theorem 1

The proof is split into two parts. First, we identify an event on which the statistical behavior of the estimator \(\hat{f}^{ERM}\) can be controlled. Then, we prove that this event holds with probability at least (3). Introduce \(\theta =1/(2A)\) and define the following event:

\[ \Omega := \Big\{ \forall f \in F\cap (f^{*} + r_2(\theta )B_{L_2}): \ \big |(P-P_N){{\mathcal {L}}}_f\big | \le \theta r_2^2(\theta ) \Big\}, \]

where \(\theta \) is a parameter appearing in the definition of \(r_2\) in Definition 3.

Proposition 3

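On the event \(\Omega \), one has

\[ \Vert \hat{f}^{ERM}-f^{*}\Vert _{L_2} \le r_2(\theta ) \quad \text {and} \quad P{{\mathcal {L}}}_{\hat{f}^{ERM}} \le \theta r_2^2(\theta ). \]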

Proof

By construction, \(\hat{f}^{ERM}\) satisfies \(P_N{{\mathcal {L}}}_{\hat{f}^{ERM}} \le 0 \). Therefore, it is sufficient to show that, on \(\Omega \), if \(\Vert f-f^{*}\Vert _{L_2} > r_2(\theta )\), then \(P_N {{\mathcal {L}}}_f >0\). Let \(f\in F\) be such that \(\Vert f-f^{*}\Vert _{L_2 } > r_2(\theta )\). By convexity of F, there exists \(f_0 \in F \cap (f^{*} + r_2(\theta )S_{L_2})\) and \(\alpha > 1\) such that

\[ f = f^{*} + \alpha (f_0 - f^{*}). \]

For all \(i \in \{1,\ldots ,N \}\), let \(\psi _i: {\mathbb {R}} \rightarrow {\mathbb {R}} \) be defined for all \(u\in \mathbb {R}\) by

\[ \psi _i(u) = \overline{\ell } (u + f^{*}(X_i), Y_i) - \overline{\ell } (f^{*}(X_i), Y_i). \]

The functions \(\psi _i\) satisfy \(\psi _i(0) = 0\) and are convex because \(\overline{\ell }\) is; in particular, \(\alpha \psi _i(u) \le \psi _i(\alpha u)\) for all \(u\in {\mathbb {R}}\) and \(\alpha \ge 1\). Since \(\psi _i(f(X_i) - f^{*}(X_i) )= \overline{\ell } (f(X_i), Y_i) - \overline{\ell } (f^{*}(X_i), Y_i) \), the following holds:

\[ P_N {{\mathcal {L}}}_f = \frac{1}{N}\sum _{i=1}^N \psi _i\big (\alpha (f_0(X_i) - f^{*}(X_i))\big ) \ge \frac{\alpha }{N}\sum _{i=1}^N \psi _i\big (f_0(X_i) - f^{*}(X_i)\big ) = \alpha P_N {{\mathcal {L}}}_{f_0}. \tag{21} \]

Until the end of the proof, the event \(\Omega \) is assumed to hold. Since \(f_0 \in F \cap (f^{*}+ r_2(\theta ) S_{L_2})\), \(P_N {{\mathcal {L}}}_{f_0} \ge P{{\mathcal {L}}}_{f_0} - \theta r_2^2(\theta )\). Moreover, by Assumption 4, \(P{{\mathcal {L}}}_{f_0} \ge A^{-1} \Vert f_0-f^*\Vert _{L_2 }^2 = A^{-1}r_2^2(\theta ) \), thus

\[ P_N {{\mathcal {L}}}_{f_0} \ge (A^{-1} - \theta ) r_2^2(\theta ). \tag{22} \]

From Eqs. (21) and (22), \(P_N {{\mathcal {L}}}_f > 0\) since \(A^{-1}>\theta \). Therefore, \(\Vert \hat{f}^{ERM}-f^{*}\Vert _{L_2 } \le r_2(\theta )\). This proves the \(L_2\)-bound.
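The scaling inequality \(\alpha \psi _i(u) \le \psi _i(\alpha u)\) used in this argument can be checked numerically. The sketch below is only an illustration: it assumes the absolute loss as one example of a convex Lipschitz loss, and the names `abs_loss`, `psi`, `scaling_holds` as well as the sample values are hypothetical, not taken from the paper.

```python
def abs_loss(u, y):
    """Absolute loss |y - u|: convex and 1-Lipschitz in u (an illustrative choice)."""
    return abs(y - u)


def psi(u, f_star_x, y, loss=abs_loss):
    """psi(u) = loss(u + f*(x), y) - loss(f*(x), y), so that psi(0) = 0."""
    return loss(u + f_star_x, y) - loss(f_star_x, y)


def scaling_holds(u, alpha, f_star_x, y, tol=1e-12):
    """Check alpha * psi(u) <= psi(alpha * u) for one pair (u, alpha) with alpha >= 1."""
    return alpha * psi(u, f_star_x, y) <= psi(alpha * u, f_star_x, y) + tol


# Hypothetical values: f*(x) = 0.5 and y = 2.0.
all_hold = all(
    scaling_holds(u, alpha, f_star_x=0.5, y=2.0)
    for u in (-3.0, -0.2, 0.0, 0.7, 4.0)
    for alpha in (1.0, 1.5, 10.0)
)
```

The inequality follows from convexity and \(\psi _i(0)=0\), so any other convex loss could be passed through the `loss` argument.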

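Now, as \(\Vert \hat{f}^{ERM}-f^{*}\Vert _{L_2 } \le r_2(\theta )\), \(|(P-P_N){{\mathcal {L}}}_{\hat{f}^{ERM}}|\le \theta r_2^2(\theta )\). Since \(P_N{{\mathcal {L}}}_{\hat{f}^{ERM}}\le 0\),

\[ P{{\mathcal {L}}}_{\hat{f}^{ERM}} = P_N{{\mathcal {L}}}_{\hat{f}^{ERM}} + (P-P_N){{\mathcal {L}}}_{\hat{f}^{ERM}} \le \theta r_2^2(\theta ). \]

This shows the excess risk bound. \(\square \)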

Proposition 3 shows that \(\hat{f}^{ERM}\) has the risk bounds given in Theorem 1 on the event \(\Omega \). To show that \(\Omega \) holds with probability (3), recall the following results from [2].

Lemma 2

[2, Lemma 8.1] Grant Assumptions 1 and 3. Let \(F^\prime \subset F\) with finite \(L_2\)-diameter \(d_{L_2}(F^\prime )\). For every \(u>0\), with probability at least \(1-2\exp (-u^2)\),

It follows from Lemma 2 that for any \(u>0\), with probability larger than \(1-2\exp (-u^2)\),

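where \(d_{L_2} ((F-f^*)\cap r_2(\theta )B_{L_2}) \le r_2(\theta )\). By definition of the complexity parameter (see Eq. (3)), for \(u = \theta \sqrt{N} r_2(\theta )/(64L) \), with probability at least

\[ 1-2\exp \big (-\theta ^2 N r_2^2(\theta )/(64L)^2\big ), \]

for every f in \(F\cap (f^*+ r_2(\theta )B_{L_2} )\),

\[ \big |(P-P_N){{\mathcal {L}}}_f\big | \le \theta r_2^2(\theta ). \]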

Together with Proposition 3, this concludes the proof of Theorem 1.

A.2 Proof of Theorem 2

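The proof is split into two parts. First, we identify an event \(\Omega _K\) where the statistical properties of \({\hat{f}}\) from Theorem 2 can be established. Next, we prove that this event holds with probability at least (8). Let \(\alpha , \theta \) and \(\gamma \) be positive numbers to be chosen later. Define

\[ C_{K,r} = \max \Big ( \tilde{r}_2^2(\gamma ), \ \frac{4L^2K}{\alpha \theta ^2 N} \Big ), \]

where the exact values of \(\alpha , \theta \) and \(\gamma \) are given in Eq. (33). Set the event \(\Omega _K\) to be such that

\[ \Omega _K = \Big\{ \forall f \in F \cap \big (f^{*} + \sqrt{C_{K,r}}B_{L_2}\big ): \ \sum _{k=1}^K I\big \{ |(P_{B_k}-P) {{\mathcal {L}}}_f | \le \theta C_{K,r} \big \} > \frac{K}{2} \Big\}. \tag{25} \]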

A.2.1 Deterministic argument

The goal of this section is to show that, on the event \(\Omega _K\), \(\Vert {\hat{f}} - f^{*}\Vert _{L_2}^2 \le C_{K,r}\) and \(P{{\mathcal {L}}}_{{\hat{f}}}\le 2 \theta C_{K,r}\).

Lemma 3

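If there exists \(\eta >0\) such that

\[ \sup _{f\in F \backslash ( f^{*} + \sqrt{C_{K,r}}B_{L_2})} \text {MOM}_K[\ell _{f^*}-\ell _f] < -\eta \quad \text {and} \quad \sup _{f\in F \cap ( f^{*} + \sqrt{C_{K,r}}B_{L_2})} \text {MOM}_K[\ell _{f^*}-\ell _f] \le \eta , \tag{26} \]

then \(\Vert {\hat{f}} - f^{*} \Vert _{L_2 }^2 \le C_{K,r}\).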

Proof

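Assume that (26) holds, then

\[ \inf _{f\in F \backslash ( f^{*} + \sqrt{C_{K,r}}B_{L_2})} \text {MOM}_K[\ell _f-\ell _{f^*}] > \eta . \tag{27} \]

Moreover, if \(T_K(f)=\sup _{g\in F}\text {MOM}_K[\ell _f-\ell _g]\) for all \(f\in F\), then

\[ T_K(f^*) = \max \Big ( \sup _{f\in F \cap ( f^{*} + \sqrt{C_{K,r}}B_{L_2})} \text {MOM}_K[\ell _{f^*}-\ell _f], \ \sup _{f\in F \backslash ( f^{*} + \sqrt{C_{K,r}}B_{L_2})} \text {MOM}_K[\ell _{f^*}-\ell _f] \Big ) \le \eta . \tag{28} \]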

By definition of \(\hat{f}\) and (28), \(T_K(\hat{f})\leqslant T_K(f^*)\leqslant \eta \). Moreover, by (27), any \(f\in F \backslash \left( f^{*} + \sqrt{C_{K,r}}B_{L_2}\right) \) satisfies \(T_K(f)\geqslant \text {MOM}_K[\ell _f-\ell _{f^*}]> \eta \). Therefore \(\hat{f} \in F\cap (f^{*} + \sqrt{C_{K,r}} B_{L_2})\). \(\square \)
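To make the objects in Lemma 3 concrete, here is a minimal sketch of \(\text {MOM}_K\), of the criterion \(T_K\) and of the minmax MOM estimator over a finite class. It is an illustration under assumptions that are not in the paper: the class is a grid of constant functions, the loss is the absolute loss, blocks are consecutive and of equal size, and all function names are hypothetical.

```python
import random
import statistics


def split_blocks(n, K):
    """K consecutive blocks of size n // K (trailing leftover indices are dropped)."""
    size = n // K
    return [range(k * size, (k + 1) * size) for k in range(K)]


def mom(values, K):
    """Median of the K block means of `values`."""
    blocks = split_blocks(len(values), K)
    return statistics.median(sum(values[i] for i in b) / len(b) for b in blocks)


def T_K(f, F, losses, K):
    """T_K(f) = max over g in F of MOM_K[loss_f - loss_g] for a finite class F."""
    return max(mom([lf - lg for lf, lg in zip(losses[f], losses[g])], K) for g in F)


def minmax_mom(F, losses, K):
    """Minimizer of T_K over the finite class F."""
    return min(F, key=lambda f: T_K(f, F, losses, K))


# Toy data: location 1.0 with Gaussian noise, five gross outliers in the outputs.
random.seed(0)
y = [random.gauss(1.0, 1.0) for _ in range(300)]
y[:5] = [1e6] * 5
F = [c / 4 for c in range(-8, 17)]                      # constants from -2 to 4
losses = {c: [abs(yi - c) for yi in y] for c in F}      # absolute loss of each constant
f_hat = minmax_mom(F, losses, K=15)
```

In this toy setting the selected constant stays close to the true location despite the corrupted outputs; the choice `K=15` is arbitrary and only needs to exceed twice the number of corrupted blocks.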

Lemma 4

Grant Assumption 6 and assume that \(\theta -A^{-1}<-\theta \). On the event \(\Omega _K\), (26) holds with \(\eta = \theta C_{K,r}\).

Proof

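Let \(f\in F\) be such that \(\Vert f-f^{*}\Vert _{L_2 } > \sqrt{C_{K,r}}\). By convexity of F, there exists \(f_0 \in F \cap \left( f^{*} + \sqrt{C_{K,r}} S_{L_2}\right) \) and \(\alpha > 1\) such that \(f = f^{*} + \alpha (f_0 - f^{*})\). For all \(i \in \{1,\ldots ,N \}\), let \(\psi _i: {\mathbb {R}} \rightarrow {\mathbb {R}} \) be defined for all \(u\in \mathbb {R}\) by

\[ \psi _i(u) = \overline{\ell } (u + f^{*}(X_i), Y_i) - \overline{\ell } (f^{*}(X_i), Y_i). \]

The functions \(\psi _i\) are convex because \(\overline{\ell }\) is and such that \(\psi _i(0) = 0\), so \(\alpha \psi _i(u) \le \psi _i(\alpha u)\) for all \(u\in {\mathbb {R}}\) and \(\alpha \ge 1\). As \(\psi _i(f(X_i) - f^{*}(X_i) )= \overline{\ell } (f(X_i), Y_i) - \overline{\ell } (f^{*}(X_i), Y_i)\), for any block \(B_k\),

\[ P_{B_k} {{\mathcal {L}}}_f = \frac{1}{|B_k|}\sum _{i\in B_k} \psi _i\big (\alpha (f_0(X_i) - f^{*}(X_i))\big ) \ge \frac{\alpha }{|B_k|}\sum _{i\in B_k} \psi _i\big (f_0(X_i) - f^{*}(X_i)\big ) = \alpha P_{B_k} {{\mathcal {L}}}_{f_0}. \tag{30} \]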

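As \(f_0 \in F \cap (f^* + \sqrt{C_{K,r}} S_{L_2})\), on \(\Omega _K\), there are strictly more than K / 2 blocks \(B_k\) where \(P_{B_k} {{\mathcal {L}}}_{f_0} \ge P{{\mathcal {L}}}_{f_0} - \theta C_{K,r}\). Moreover, from Assumption 6, \(P{{\mathcal {L}}}_{f_0} \ge A^{-1} \Vert f_0-f^*\Vert _{L_2 }^2 = A^{-1}C_{K,r} \). Therefore, on strictly more than K / 2 blocks \(B_k\),

\[ P_{B_k} {{\mathcal {L}}}_{f_0} \ge (A^{-1}- \theta ) C_{K,r}. \tag{31} \]

From Eqs. (30) and (31), there are strictly more than K / 2 blocks \(B_k\) where \(P_{B_k} {{\mathcal {L}}}_f \ge (A^{-1}- \theta ) C_{K,r} \). Therefore, on \(\Omega _K\), as \((\theta - A^{-1}) < - \theta \),

\[ \sup _{f\in F \backslash ( f^{*} + \sqrt{C_{K,r}}B_{L_2})} \text {MOM}_K[\ell _{f^*}-\ell _f] \le (\theta - A^{-1}) C_{K,r} < -\theta C_{K,r}. \]

In addition, on the event \(\Omega _K\), for all \(f \in F \cap (f^{*} + \sqrt{C_{K,r}}B_{L_2})\), there are strictly more than K / 2 blocks \(B_k\) where \(|(P_{B_k}-P) {{\mathcal {L}}}_f | \le \theta C_{K,r} \). Since \(P{{\mathcal {L}}}_f \ge 0\), on these blocks \(P_{B_k}(\ell _{f^*}-\ell _f) \le \theta C_{K,r} - P{{\mathcal {L}}}_f \le \theta C_{K,r}\). Therefore

\[ \sup _{f\in F \cap ( f^{*} + \sqrt{C_{K,r}}B_{L_2})} \text {MOM}_K[\ell _{f^*}-\ell _f] \le \theta C_{K,r}. \]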

\(\square \)

Lemma 5

Grant Assumption 6 and assume that \(\theta - A^{-1}<-\theta \). On the event \(\Omega _K\), \(P{{\mathcal {L}}}_{\hat{f}} \le 2\theta C_{K,r}\).

Proof

Assume that \(\Omega _K\) holds. From Lemmas 3 and 4, \(\Vert \hat{f}-f^{*}\Vert _{L_2 } \le \sqrt{C_{K,r}}\). Therefore, on strictly more than K / 2 blocks \(B_k\), \(P {{\mathcal {L}}}_{\hat{f}} \le P_{B_k} {{\mathcal {L}}}_{\hat{f}} + \theta C_{K,r}\). In addition, by definition of \({\hat{f}}\) and (28) (for \(\eta = \theta C_{K,r}\)),

\[ \text {MOM}_K[\ell _{\hat{f}}-\ell _{f^*}] \le T_K(\hat{f}) \le T_K(f^*) \le \theta C_{K,r}. \]

As a consequence, there exist at least K / 2 blocks \(B_k\) where \(P_{B_k} {{\mathcal {L}}}_{\hat{f}} \le \theta C_{K,r}\). Therefore, there exists at least one block \(B_k\) where both \(P {{\mathcal {L}}}_{\hat{f}} \le P_{B_k} {{\mathcal {L}}}_{\hat{f}} + \theta C_{K,r}\) and \(P_{B_k} {{\mathcal {L}}}_{\hat{f}} \le \theta C_{K,r}\). Hence \(P{{\mathcal {L}}}_{\hat{f}} \le 2\theta C_{K,r}\). \(\square \)
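The last step of this proof is a counting argument: a set of strictly more than K / 2 blocks and a set of at least K / 2 blocks must share a block, because their sizes add up to more than K. A minimal sketch, with the hypothetical helper `common_block` and made-up index sets:

```python
def common_block(blocks_a, blocks_b, K):
    """Smallest block index in both sets; one exists when |A| > K/2 and |B| >= K/2."""
    if not (len(blocks_a) > K / 2 and len(blocks_b) >= K / 2):
        raise ValueError("need |A| > K/2 and |B| >= K/2")
    return min(set(blocks_a) & set(blocks_b))


K = 7
blocks_a = {0, 1, 2, 3}   # blocks where P L <= P_{B_k} L + theta * C (more than K/2 of them)
blocks_b = {3, 4, 5, 6}   # blocks where P_{B_k} L <= theta * C (at least K/2 of them)
k = common_block(blocks_a, blocks_b, K)
```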

A.2.2 Stochastic argument

This section shows that \(\Omega _K\) holds with probability at least (8).

Proposition 4

Grant Assumptions 1, 2, 5 and 6 and assume that \((1-\beta )K\ge |{{\mathcal {O}}}|\). Let \(x>0\) and assume that \(\beta (1-\alpha -x-8\gamma L/\theta )>1/2\). Then \(\Omega _K\) holds with probability larger than \(1-\exp (-x^2 \beta K/2)\).
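The two numerical conditions of Proposition 4 and the resulting probability bound are easy to evaluate. The sketch below assumes consecutive blocks of equal size; the helper names and all numerical values are hypothetical and are not the constants chosen in the paper.

```python
import math


def clean_blocks(N, K, outliers):
    """Indices k of the blocks B_k (consecutive, size N // K) containing no outlier."""
    size = N // K
    hit = {i // size for i in outliers}
    return [k for k in range(K) if k not in hit]


def omega_K_bound(beta, alpha, x, gamma, L, theta, K, n_outliers):
    """Return 1 - exp(-x^2 beta K / 2) when both conditions of Proposition 4 hold, else None."""
    if (1 - beta) * K < n_outliers:
        return None
    if beta * (1 - alpha - x - 8 * gamma * L / theta) <= 0.5:
        return None
    return 1 - math.exp(-x ** 2 * beta * K / 2)


outliers = {3, 4, 500, 999}                       # indices of corrupted data points
clean = clean_blocks(N=1000, K=50, outliers=outliers)
bound = omega_K_bound(beta=0.8, alpha=0.05, x=0.05, gamma=0.01, L=1.0, theta=1.0,
                      K=50, n_outliers=len(outliers))
```

Each outlier can corrupt at most one block, so the number of clean blocks is at least \(K-|{{\mathcal {O}}}|\), which is the quantity used in the proof below.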

Proof

Let \({{\mathcal {F}}}= F \cap \left( f^{*} + \sqrt{C_{K,r}}B_{L_2}\right) \) and set \(\phi :t\in \mathbb {R}\rightarrow I \{ t\ge 2 \} + (t-1) I \{1 \le t < 2 \}\) so, for all \(t \in \mathbb {R}\), \(I \{ t\ge 2 \} \le \phi (t) \le I \{ t\ge 1 \}\). Let \(W_k = ((X_i,Y_i))_{i \in B_k}\), \(G_f(W_k) = (P_{B_k} - P){{\mathcal {L}}}_f\). Let

Let \(\mathcal {K}\) denote the set of indices of blocks which have not been corrupted by outliers, \(\mathcal {K} = \{k \in \{1,\ldots ,K \} : B_k \subset \mathcal {I}\}\) and let \(f \in {{\mathcal {F}}}\). Basic algebraic manipulations show that

By Assumptions 1 and 5, using that \(C_{K,r}^2\ge \left\| f-f^*\right\| ^2_{L_2 }[(4L^2K)/(\theta ^2\alpha N)]\),

Therefore,

Using McDiarmid’s inequality [11, Theorem 6.2], for all \(x>0\), with probability larger than \(1-\exp (-x^2 |{{\mathcal {K}}}| /2)\),

Let \(\epsilon _1, \ldots , \epsilon _K\) denote independent Rademacher variables independent of the \((X_i, Y_i), i\in {{\mathcal {I}}}\). By the Giné-Zinn symmetrization argument,

As \(\phi \) is 1-Lipschitz with \(\phi (0)=0\), using the contraction lemma [28, Chapter 4],

Let \((\sigma _i: i \in \cup _{k\in {{\mathcal {K}}}}B_k)\) be a family of independent Rademacher variables independent of \((\epsilon _k)_{k \in \mathcal {K}}\) and \((X_i, Y_i)_{i \in {{\mathcal {I}}}}\). It follows from the Giné-Zinn symmetrization argument that

By the Lipschitz property of the loss, the contraction principle applies and

To bound from above the right-hand side in the last inequality, consider two cases 1) \(C_{K,r}= \tilde{r}_2^2(\gamma )\) or 2) \(C_{K,r} = 4L^2K/(\alpha \theta ^2 N)\). In the first case, by definition of the complexity parameter \(\tilde{r}_2(\gamma )\) in (6),

In the second case,

Let \(f\in F\) be such that \(\tilde{r}_2(\gamma ) \le \left\| f-f^*\right\| _{L_2 }\le \sqrt{[4L^2K]/[\alpha \theta ^2 N]}\); by convexity of F, there exists \(f_0\in F\) such that \(\left\| f_0-f^*\right\| _{L_2 } = \tilde{r}_2(\gamma )\) and \(f = f^*+\alpha (f_0-f^*)\) with \(\alpha = \left\| f-f^*\right\| _{L_2 }/\tilde{r}_2(\gamma )\ge 1\). Therefore,

and so

By definition of \(\tilde{r}_2(\gamma )\), it follows that

Therefore, as \(|\mathcal {K}| \ge K-|\mathcal {O}| \ge \beta K\), with probability larger than \(1-\exp (-x^2 \beta K/2)\), for all \(f\in F\) such that \(\left\| f-f^*\right\| _{L_2 }\le \sqrt{C_{K,r}}\),

\(\square \)
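The function \(\phi \) used in the proof of Proposition 4 is a continuous, piecewise-linear surrogate for an indicator. The following sketch (the grid and tolerance are arbitrary choices) checks its three properties used above: the sandwich \(I \{ t\ge 2 \} \le \phi (t) \le I \{ t\ge 1 \}\), the 1-Lipschitz property, and \(\phi (0)=0\).

```python
def phi(t):
    """Piecewise-linear phi: 0 for t < 1, t - 1 on [1, 2], 1 for t > 2."""
    return min(1.0, max(0.0, t - 1.0))


grid = [k / 100 for k in range(-100, 401)]          # points in [-1, 4]

# I{t >= 2} <= phi(t) <= I{t >= 1}
sandwich_ok = all((t >= 2) <= phi(t) <= (t >= 1) for t in grid)

# |phi(s) - phi(t)| <= |s - t| on neighbouring grid points
lipschitz_ok = all(abs(phi(s) - phi(t)) <= abs(s - t) + 1e-12
                   for s, t in zip(grid, grid[1:]))
```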

A.2.3 End of the proof of Theorem 2

Theorem 2 follows from Lemmas 3, 4, 5 and Proposition 4 for the choice of constants

A.3 Proof of Theorem 3

Let \(K \in \big [ 7|{{\mathcal {O}}}|/3 , N \big ]\) and consider the event \(\Omega _K\) defined in (25). It follows from the proof of Lemmas 3 and 4 that \(T_K(f^*)\le \theta C_{K,r}\) on \(\Omega _K\). Setting \(\theta =1/(3A)\), on \(\cap _{J=K}^N \Omega _J\), \(f^*\in {\hat{R}}_J\) for all \(J=K,\ldots , N\), so \(\cap _{J=K}^N {\hat{R}}_J\ne \emptyset \). By definition of \({\hat{K}}\), it follows that \({\hat{K}}\le K\) and by definition of \({\tilde{f}}\), \({\tilde{f}} \in {\hat{R}}_K\) which means that \(T_K({\tilde{f}})\le \theta C_{K,r}\). It is proved in Lemmas 3 and 4 that on \(\Omega _K\), if \(f\in F\) satisfies \(\left\| f-f^*\right\| _{L_2 }\ge \sqrt{C_{K,r}}\) then \(T_K(f)> \theta C_{K,r}\). Therefore, \(\left\| {\tilde{f}}-f^*\right\| _{L_2 }\le \sqrt{C_{K,r}}\). On \(\Omega _K\), since \(\left\| {\tilde{f}}-f^*\right\| _{L_2 }\le \sqrt{C_{K,r}}\), \(P{{\mathcal {L}}}_{{\tilde{f}}}\le 2 \theta C_{K,r}\). Hence, on \(\cap _{J=K}^N \Omega _J\), the conclusions of Theorem 3 hold. Finally, by Proposition 4,

A.4 Proof of Theorem 4

The proof of Theorem 4 follows the same path as the one of Theorem 2. We only sketch the different arguments needed because of the localization by the excess loss and the lack of Bernstein condition.

Define the event \(\Omega _K^\prime \) in the same way as \(\Omega _K\) in (25), where \(C_{K,r}\) is replaced by \(\bar{r}_2^2(\gamma )\) and the \(L_2\) localization is replaced by the “excess loss localization”:

\[ \Omega _K^\prime = \Big\{ \forall f \in ({{\mathcal {L}}}_F)_{\bar{r}_2^2(\gamma )}: \ \sum _{k=1}^K I\big \{ |(P_{B_k}-P) {{\mathcal {L}}}_f | \le (1/4) \bar{r}_2^2(\gamma ) \big \} > \frac{K}{2} \Big\}, \]

where \(({{\mathcal {L}}}_F)_{\bar{r}_2^2(\gamma )} =\{f\in F: P{{\mathcal {L}}}_{f}\le \bar{r}_2^2(\gamma )\}\). Our first goal is to show that on the event \(\Omega _K^\prime \), \(P{{\mathcal {L}}}_{{\hat{f}}}\le \bar{r}_2^2(\gamma )\). We will then handle \({\mathbb {P}}[\Omega _K^\prime ]\).

Lemma 6

Grant Assumptions 1 and 2. For every \(r\ge 0\), the set \(({{\mathcal {L}}}_F)_r:=\{f\in F:P{{\mathcal {L}}}_f\le r\}\) is convex and relatively closed to F in \(L_1(\mu )\). Moreover, if \(f\in F\) is such that \(P{{\mathcal {L}}}_f>r\) then there exists \(f_0\in F\) and \((P{{\mathcal {L}}}_f/r)\ge \alpha >1\) such that \((f-f^*)=\alpha (f_0-f^*)\) and \(P{{\mathcal {L}}}_{f_0} = r\).

Proof

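Let f and g be in \(({{\mathcal {L}}}_F)_r\) and \(0\le \alpha \le 1\). We have \(\alpha f + (1-\alpha )g\in F\) because F is convex and for all \(x\in {{\mathcal {X}}}\) and \(y\in \mathbb {R}\), using the convexity of \(u\rightarrow {\bar{\ell }}(u+f^*(x), y)\), we have

\[ {\bar{\ell }}\big (\alpha f(x) + (1-\alpha )g(x), y\big ) - {\bar{\ell }}(f^*(x), y) \le \alpha \big [{\bar{\ell }}(f(x), y) - {\bar{\ell }}(f^*(x), y)\big ] + (1-\alpha )\big [{\bar{\ell }}(g(x), y) - {\bar{\ell }}(f^*(x), y)\big ] \]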

and so \(P{{\mathcal {L}}}_{\alpha f + (1-\alpha )g}\le \alpha P{{\mathcal {L}}}_f + (1-\alpha )P{{\mathcal {L}}}_g\). Given that \(P{{\mathcal {L}}}_f, P{{\mathcal {L}}}_g\le r\) we also have \(P{{\mathcal {L}}}_{\alpha f + (1-\alpha )g}\le r\). Therefore, \(\alpha f + (1-\alpha )g\in ({{\mathcal {L}}}_F)_r\) and \(({{\mathcal {L}}}_F)_r\) is convex.

By the Lipschitz property of the loss, for all \(f,g\in F\), \(|P{{\mathcal {L}}}_f - P{{\mathcal {L}}}_g|\le L\left\| f-g\right\| _{L_1(\mu )}\) so that \(f\in F\rightarrow P{{\mathcal {L}}}_f\) is continuous on F in \(L_1(\mu )\) and therefore its sublevel sets, such as \(({{\mathcal {L}}}_F)_r\), are relatively closed to F in \(L_1(\mu )\).

Finally, let \(f\in F\) be such that \(P{{\mathcal {L}}}_f >r\). Define \(\alpha _0 = \sup \{\alpha \ge 0: f^*+\alpha (f-f^*)\in ({{\mathcal {L}}}_F)_r\}\). Note that \(P{{\mathcal {L}}}_{f^*+\alpha (f-f^*)}\le \alpha P{{\mathcal {L}}}_f= r\) for \(\alpha = r/P{{\mathcal {L}}}_f\) so that \(\alpha _0\ge r/P{{\mathcal {L}}}_f\). Since \(({{\mathcal {L}}}_F)_r\) is relatively closed to F in \(L_1(\mu )\), we have \(f^*+\alpha _0(f-f^*)\in ({{\mathcal {L}}}_F)_r\) and in particular \(\alpha _0<1\); otherwise, by convexity of \(({{\mathcal {L}}}_F)_r\), we would have \(f\in ({{\mathcal {L}}}_F)_r\). Moreover, by maximality of \(\alpha _0\), \(f_0 = f^*+\alpha _0(f-f^*)\) is such that \(P{{\mathcal {L}}}_{f_0}=r\) and the result follows for \(\alpha = \alpha _0^{-1}\). \(\square \)
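The construction of \(\alpha _0\) in the last part of this proof can be mimicked numerically. Along the segment from \(f^*\) to f, the excess risk \(h(t) = P{{\mathcal {L}}}_{f^*+t(f-f^*)}\) is convex with \(h(0)=0\), so its sublevel set in [0, 1] is an interval and bisection locates its right end. The sketch below is an illustration only: the function `alpha_0` and the quadratic excess risk are hypothetical.

```python
def alpha_0(h, r, tol=1e-12):
    """sup{t in [0, 1] : h(t) <= r} for convex h with h(0) = 0 <= r < h(1), by bisection."""
    lo, hi = 0.0, 1.0          # invariant: h(lo) <= r < h(hi)
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if h(mid) <= r:
            lo = mid
        else:
            hi = mid
    return lo


def h(t):
    """Hypothetical excess risk along the segment, with h(1) = P L_f = 4."""
    return 4.0 * t * t


r = 1.0
a0 = alpha_0(h, r)             # here the level r is reached at t = 1/2
alpha = 1.0 / a0               # the dilation factor of Lemma 6
```

In this example \(\alpha = 2\), which lies in the interval \((1, P{{\mathcal {L}}}_f/r] = (1, 4]\) as the lemma states.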

Lemma 7

Grant Assumptions 1 and 2. On the event \(\Omega _K^\prime \), \(P{{\mathcal {L}}}_{{\hat{f}}}\le \bar{r}_2^2(\gamma )\).

Proof

Let \(f\in F\) be such that \(P{{\mathcal {L}}}_f > \bar{r}_2^2(\gamma )\). It follows from Lemma 6 that there exists \(\alpha \ge 1\) and \(f_0\in F\) such that \(P{{\mathcal {L}}}_{f_0} = \bar{r}_2^2(\gamma )\) and \(f-f^* = \alpha (f_0-f^*)\). According to (30), we have for every \(k\in \{1, \ldots , K\}\), \(P_{B_k} {{\mathcal {L}}}_f\ge \alpha P_{B_k}{{\mathcal {L}}}_{f_0}\). Since \(f_0\in ({{\mathcal {L}}}_F)_{\bar{r}_2^2(\gamma )}\), on the event \(\Omega _K^\prime \), there are strictly more than K / 2 blocks \(B_k\) such that \(P_{B_k}{{\mathcal {L}}}_{f_0}\ge P{{\mathcal {L}}}_{f_0}- (1/4) \bar{r}_2^2(\gamma ) = (3/4)\bar{r}_2^2(\gamma )\) and so \(P_{B_k}{{\mathcal {L}}}_{f}\ge (3/4)\bar{r}_2^2(\gamma )\). As a consequence, we have

\[ \sup _{f\in F \backslash ({{\mathcal {L}}}_F)_{\bar{r}_2^2(\gamma )}} \text {MOM}_{K}\big (\ell _{f^{*}}-\ell _f\big ) \le -(3/4)\bar{r}_2^2(\gamma ). \tag{35} \]

Moreover, on the event \(\Omega _K^\prime \), for all \(f\in ({{\mathcal {L}}}_F)_{\bar{r}_2^2(\gamma )}\), there are strictly more than K / 2 blocks \(B_k\) such that \(P_{B_k}(-{{\mathcal {L}}}_f)\le (1/4) \bar{r}_2^2(\gamma ) - P{{\mathcal {L}}}_f\le (1/4) \bar{r}_2^2(\gamma )\). Therefore,

\[ \sup _{f\in ({{\mathcal {L}}}_F)_{\bar{r}_2^2(\gamma )}} \text {MOM}_{K}\big (\ell _{f^{*}}-\ell _f\big ) \le (1/4) \bar{r}_2^2(\gamma ). \tag{36} \]

We conclude from (35) and (36) that \(\sup _{f\in F} \text {MOM}_{K}\big (\ell _{f^{*}}-\ell _f\big ) \le (1/4) \bar{r}_2^2(\gamma )\) and that every \(f\in F\) such that \(P{{\mathcal {L}}}_{f}> \bar{r}_2^2(\gamma )\) satisfies \(\text {MOM}_{K}\big (\ell _{f}-\ell _{f^*}\big )\ge (3/4)\bar{r}_2^2(\gamma )\). But, by definition of \({\hat{f}}\), we have

\[ \text {MOM}_{K}\big (\ell _{{\hat{f}}}-\ell _{f^*}\big ) \le \sup _{f\in F} \text {MOM}_{K}\big (\ell _{{\hat{f}}}-\ell _f\big ) \le \sup _{f\in F} \text {MOM}_{K}\big (\ell _{f^{*}}-\ell _f\big ) \le (1/4) \bar{r}_2^2(\gamma ). \]

Therefore, we necessarily have \(P{{\mathcal {L}}}_{{\hat{f}}}\le \bar{r}_2^2(\gamma )\). \(\square \)

Now, we prove that \(\Omega _K^\prime \) is an exponentially large event using an argument similar to that of Proposition 4.

Proposition 5

Grant Assumptions 1, 2 and 7 and assume that \((1-\beta )K\ge |{{\mathcal {O}}}|\) and \(\beta (1-1/12-32\gamma L)>1/2\). Then \(\Omega _K^\prime \) holds with probability larger than \(1-\exp (-\beta K/1152)\).

Sketch of proof

The proof of Proposition 5 follows the same lines as that of Proposition 4. Let us specify the main differences. We set \({{\mathcal {F}}}^\prime = ({{\mathcal {L}}}_F)_{\bar{r}_2^2(\gamma )}\) and for all \(f\in {{\mathcal {F}}}^\prime \), \(z^\prime (f) = \sum _{k=1}^K I\{|G_f(W_k)|\le (1/4) \bar{r}_2^2(\gamma )\}\) where \(G_f(W_k)\) is the same quantity as in the proof of Proposition 4. Let us consider the contraction \(\phi \) introduced in the proof of Proposition 4. By definition of \(\bar{r}_2^2(\gamma )\) and \(V_K(\cdot )\), we have

Using McDiarmid’s inequality, the Giné-Zinn symmetrization argument and the contraction lemma twice and the Lipschitz property of the loss function, as in the proof of Proposition 4, we obtain with probability larger than \(1-\exp (-|{{\mathcal {K}}}|/1152)\), for all \(f\in {{\mathcal {F}}}^\prime \),

Now, it remains to use the definition of \(\bar{r}_2^2(\gamma )\) to bound the expected supremum in the right-hand side of (37) to get

\(\square \)

Proof of Theorem 4

The proof of Theorem 4 follows from Lemma 7 and Proposition 5 for \(\beta =4/7\) and \(\gamma = 1/(768L)\). \(\square \)

Appendix B: Proof of Lemma 1

Proof

We have

Let \(\Sigma =\mathbb {E} X^TX\) denote the covariance matrix of X and consider its SVD, \(\Sigma = QDQ^T\) where \(Q = [Q_1|\ldots |Q_d]\in {\mathbb {R}}^{d\times d}\) is an orthogonal matrix and D is a diagonal \(d\times d\) matrix with non-negative entries. For all \(t\in \mathbb {R}^d\), we have \(\mathbb {E}\langle X,t \rangle ^2 = t^T \Sigma t = \sum _{j=1}^d d_j \langle t,Q_j \rangle ^2\). Then

Moreover, for any j such that \(d_j\ne 0\),

By orthonormality, \(Q^TQ_j = e_j\) and \(Q_j^TQ = e_j^T\), then, for any j such that \(d_j\ne 0\),

Finally, we obtain

and therefore the fixed point \(\tilde{r}_2(\gamma )\) is such that

\(\square \)
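The identity \(t^T \Sigma t = \sum _{j=1}^d d_j \langle t,Q_j \rangle ^2\) used in the proof of Lemma 1 can be verified on a small example. The sketch below assumes dimension 2 and builds \(\Sigma = QDQ^T\) from a rotation matrix, so no numerical eigensolver is needed; the function name and the input values are hypothetical.

```python
import math


def quadratic_form_two_ways(t, angle, d):
    """Return (t^T Sigma t, sum_j d_j <t, Q_j>^2) for Sigma = Q diag(d) Q^T in dimension 2."""
    c, s = math.cos(angle), math.sin(angle)
    Q = [(c, s), (-s, c)]      # Q[j] is the j-th column of a rotation matrix (orthonormal)
    # Sigma = sum_j d_j Q_j Q_j^T
    sigma = [[sum(d[j] * Q[j][a] * Q[j][b] for j in range(2)) for b in range(2)]
             for a in range(2)]
    direct = sum(t[a] * sigma[a][b] * t[b] for a in range(2) for b in range(2))
    spectral = sum(d[j] * (t[0] * Q[j][0] + t[1] * Q[j][1]) ** 2 for j in range(2))
    return direct, spectral


direct, spectral = quadratic_form_two_ways(t=(1.0, 2.0), angle=0.3, d=(2.0, 0.5))
```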

Appendix C: Proofs of the results of Sect. 5

We begin this section with a simple lemma coming from the convexity of F.

Lemma 8

For any \(f \in F\),

\[ \lim _{t\rightarrow 0^+} \frac{R(f^*+t(f-f^*)) - R(f^*)}{t} \ge 0, \]

where we recall that \(R(f) = \mathbb {E}_{(X,Y) \sim P} [\ell _f(X,Y)]\).

Proof

Let \(t \in (0,1)\). By convexity of F, \(f^* + t(f-f^*) \in F\) and \(R(f^*+t(f-f^*)) - R(f^*) \ge 0\) because \(f^*\) minimizes the risk over F. \(\square \)

C.1 Proof of Theorem 6

Let \(r >0\). Let \(f\in F\) be such that \(\left\| f-f^*\right\| _{L_2}\le r\). For all \(x\in {{\mathcal {X}}}\) denote by \(F_{Y|X=x}\) the conditional c.d.f. of Y given \(X=x\). We have

By Fubini’s theorem,

Therefore,

where \(g:(x,a)\in {{\mathcal {X}}}\times {\mathbb {R}}\rightarrow \int \mathbf{1}_{y \ge a} (1 - F_{Y|X=x}(y))\text {d}y + (1-\tau )a\). It follows that

Since for all \(x \in {{\mathcal {X}}}\), \(a \mapsto g(x,a)\) is twice differentiable, from a second order Taylor expansion we get

where for all \(x\in {{\mathcal {X}}}\), \(z_x\) is some point in \(\big [\min (f(x), f^{*}(x)),\max (f(x), f^{*}(x)) \big ]\). For the first order term, we have

For all \(x \in {{\mathcal {X}}}\), we have \([g(x,f^*(x)+t(f(x)-f^*(x))) - g(x,f^*(x))]/t \le (2-\tau ) |f^*(x)- f(x)|\) which is integrable with respect to \(P_X\). Thus, by the dominated convergence theorem, it is possible to interchange integral and limit and therefore using Lemma 8, we obtain

Given that for all \(x\in {{\mathcal {X}}}\), \(\frac{\partial ^2 g (x,a)}{\partial a^2} (z)= f_{Y|X=x}(z)\) for all \(z\in {\mathbb {R}}\) it follows that

Consider \(A = \{ x \in \mathcal {X}, |f(x)-f^{*}(x)| \le (\sqrt{2}C')^{(2+\varepsilon )/\varepsilon } r \}\). Given that \(\Vert f-f^{*}\Vert _{L_2 }\le r\), by Markov’s inequality, \(P(X \in A) \geqslant 1-1/(\sqrt{2}C')^{(4+2\varepsilon )/\varepsilon }\). From Assumption 10 we get

By Hölder’s and Markov’s inequalities,

By Assumption 9, it follows that \(\mathbb {E} [ I_{A^c}(X) (f(X)-f^{*}(X))^2 ]\leqslant \Vert f-f^{*}\Vert _{L_2}^2/2\) and we conclude with (40).
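For readers less familiar with the quantile loss treated in this proof, the sketch below assumes the usual parametrization \(\rho _\tau (u) = u(\tau - I\{u\le 0\})\) and checks on a toy sample that the empirical risk is minimized at the empirical \(\tau \)-quantile. The function names and the sample are hypothetical.

```python
def pinball(u, tau):
    """Quantile (pinball) loss rho_tau(u) = u * (tau - 1{u <= 0})."""
    return u * (tau - (1.0 if u <= 0 else 0.0))


def empirical_risk(a, ys, tau):
    """Average pinball loss of the constant prediction a on the sample ys."""
    return sum(pinball(y - a, tau) for y in ys) / len(ys)


ys = list(range(101))          # toy sample 0, 1, ..., 100
tau = 0.25
best = min(range(101), key=lambda a: empirical_risk(a, ys, tau))
```

On this sample the minimizer over the integer grid is 25, the empirical quartile, in line with the first-order condition \(F_{Y|X=x}(a) = \tau \) that comes from differentiating \(g(x,\cdot )\).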

C.2 Proof of Theorem 7

Let \(r>0\). Let \(f\in F\) be such that \(\left\| f-f^*\right\| _{L_2}\le r\). We have

where \(g:(x,a)\in {{\mathcal {X}}}\times {\mathbb {R}}\rightarrow \mathbb {E}[\rho _H(Y-a)|X=x]\). Let \(F_{Y|X=x}\) denote the c.d.f. of Y given \(X=x\). Since for all \(x \in {{\mathcal {X}}}\), \(a \mapsto g(x,a)\) is twice differentiable in its second argument (see Lemma 2.1 in [15]), a second order Taylor expansion yields

where for all \(x\in {{\mathcal {X}}}\), \(z_x\) is some point in \([\min (f(x), f^{*}(x)), \max (f(x), f^{*}(x))]\). By Lemma 8, with the same reasoning as the one in Sect. C.1, we get

Moreover, for all \(z \in {\mathbb {R}}\),

Now, let \(A = \{ x \in {{\mathcal {X}}}: |f(x)-f^{*}(x)| \le (\sqrt{2}C')^{(2+\varepsilon )/\varepsilon } r \} \). It follows from Assumption 10 that \(P{{\mathcal {L}}}_f \ge (\alpha /2) \mathbb {E} [(f(X)-f^{*}(X))^2 I_A(X)]\). Since \(\Vert f-f^{*}\Vert _{L_2 }\le r\), by Markov’s inequality, \(P(X \in A) \geqslant 1-1/(\sqrt{2}C')^{(4+2\varepsilon )/\varepsilon }\). By Hölder’s and Markov’s inequalities,

By Assumption 9, it follows that \(\mathbb {E} [ I_{A^c}(X) (f(X)-f^{*}(X))^2 ]\leqslant \frac{\Vert f-f^{*}\Vert _{L_2}^2}{2}\), which concludes the proof.
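As a reminder of the two structural properties of the Huber loss \(\rho _H\) that the paper relies on, the sketch below assumes the usual parametrization (quadratic on \([-\delta ,\delta ]\), linear outside) and checks on a grid that it is \(\delta \)-Lipschitz and midpoint convex. The parameter name `delta` and its value are hypothetical.

```python
def huber(u, delta=1.0):
    """Huber loss: u^2 / 2 if |u| <= delta, delta * |u| - delta^2 / 2 otherwise."""
    a = abs(u)
    return 0.5 * u * u if a <= delta else delta * a - 0.5 * delta * delta


grid = [k / 10 for k in range(-50, 51)]             # points in [-5, 5]
delta = 1.5

# |rho_H(s) - rho_H(t)| <= delta * |s - t|
lipschitz_ok = all(abs(huber(s, delta) - huber(t, delta)) <= delta * abs(s - t) + 1e-12
                   for s in grid for t in grid)

# rho_H((s + t) / 2) <= (rho_H(s) + rho_H(t)) / 2
convex_ok = all(huber((s + t) / 2, delta) <= (huber(s, delta) + huber(t, delta)) / 2 + 1e-12
                for s in grid for t in grid)
```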

C.3 Proof of Theorem 8

Let \(r>0\). Let \(f\in F\) be such that \(\left\| f-f^*\right\| _{L_2}\le r\). Let \(\eta (x) = P(Y=1|X=x)\). Write first that \(P{{\mathcal {L}}}_f = \mathbb {E} \bigg [ g(X, f(X)) - g(X, f^*(X))\bigg ]\) where for all \(x\in {{\mathcal {X}}}\) and \(a\in {\mathbb {R}}\), \(g(x,a) = \eta (x) \log (1+\exp (-a)) + (1-\eta (x))\log (1+\exp (a))\). From Lemma 8 and the same reasoning as in Sects. C.1 and C.2 we get

for some \(z_x \in [\min (f(x), f^{*}(x)), \max (f(x), f^{*}(x))]\). Now, let

On the event A we have

Using the fact that \(P(X \notin A) \le P(|f^*(X) |> c_0) + P(|f(X)-f^*(X)| > (2C')^{(2+\varepsilon )/\varepsilon } r ) \le 2/(2C')^{(4+\varepsilon )/\varepsilon } \), we conclude with Assumption 9 and the same analysis as in the two previous proofs.
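For the logistic function g defined at the start of this proof, the first derivative in a is \(e^{a}/(1+e^{a}) - \eta (x)\) and the second derivative is \(e^{a}/(1+e^{a})^2\), which does not depend on \(\eta (x)\) and is bounded away from zero when \(|a|\) is bounded. The sketch below checks this closed form against a central finite difference; the step size and test points are arbitrary choices.

```python
import math


def g(a, eta):
    """g(x, a) = eta * log(1 + exp(-a)) + (1 - eta) * log(1 + exp(a)), with eta = eta(x)."""
    return eta * math.log1p(math.exp(-a)) + (1 - eta) * math.log1p(math.exp(a))


def g_second(a):
    """Closed form of the second derivative in a; it does not depend on eta."""
    return math.exp(a) / (1 + math.exp(a)) ** 2


def finite_diff_second(a, eta, h=1e-4):
    """Central finite-difference approximation of the second derivative of g in a."""
    return (g(a + h, eta) - 2 * g(a, eta) + g(a - h, eta)) / h ** 2


max_gap = max(abs(finite_diff_second(a, eta) - g_second(a))
              for a in (-2.0, 0.0, 1.5)
              for eta in (0.1, 0.5, 0.9))
```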

C.4 Proof of Theorem 9

Let \(r>0\) such that \( r(\sqrt{2} C')^{(2+\varepsilon )/\varepsilon } \le 1\). Let f be in F such that \(\Vert f-f^*\Vert _{L_2} \le r\). For all x in \(\mathcal {X}\) let us denote \(\eta (x) = {\mathbb {P}}(Y=1 | X=x) \). It is easy to verify that the Bayes estimator (which is equal to the oracle) is defined as \(f^*(x) = \text{ sign }(2\eta (x)-1)\). Consider the set \(A= \{ x \in {\mathcal {X}}, |f(x)-f^*(x)| \le r(\sqrt{2} C')^{(2+\varepsilon )/\varepsilon } \}\). Since \(\Vert f-f^*\Vert _{L_2} \le r\), by Markov’s inequality \({\mathbb {P}}(X \in A) \ge 1-1/(\sqrt{2} C')^{(4+ 2\varepsilon )/\varepsilon }\). Let x be in A. If \(f^*(x) = -1\) (i.e., \(2\eta (x) \le 1\)) and \(f(x) \le f^*(x) = -1\) we obtain

where we used the fact that on A, \(|f(x)-f^*(x)| \le r(\sqrt{2} C')^{(2+\varepsilon )/\varepsilon } \le 1\). Using the same analysis for the other cases we get that

Therefore,

By Hölder’s and Markov’s inequalities,

By Assumption 9, it follows that \(\mathbb {E} [ I_{A^c}(X) (f(X)-f^{*}(X))^2 ]\leqslant \frac{\Vert f-f^{*}\Vert _{L_2}^2}{2}\) and we conclude with (41).
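The claim that the oracle for the hinge loss is \(f^*(x) = \text{ sign }(2\eta (x)-1)\) can be checked pointwise. The sketch below assumes labels in \(\{-1,1\}\) and the hinge loss \(\max (0, 1-ya)\), and minimizes the conditional risk over a grid of values a; the grid and the values of \(\eta \) are hypothetical.

```python
import math


def conditional_hinge_risk(a, eta):
    """E[max(0, 1 - Y a) | X = x] with eta = P(Y = 1 | X = x) and Y in {-1, 1}."""
    return eta * max(0.0, 1 - a) + (1 - eta) * max(0.0, 1 + a)


def bayes_rule(eta):
    """sign(2 * eta - 1), for eta different from 1/2."""
    return math.copysign(1.0, 2 * eta - 1)


grid = [k / 10 for k in range(-20, 21)]             # candidate values a in [-2, 2]
minimizers = {eta: min(grid, key=lambda a: conditional_hinge_risk(a, eta))
              for eta in (0.1, 0.3, 0.8)}
```

For each tested \(\eta \), the grid minimizer coincides with `bayes_rule(eta)`.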


Cite this article

Chinot, G., Lecué, G. & Lerasle, M. Robust statistical learning with Lipschitz and convex loss functions. Probab. Theory Relat. Fields 176, 897–940 (2020). https://doi.org/10.1007/s00440-019-00931-3


DOI: https://doi.org/10.1007/s00440-019-00931-3