Abstract

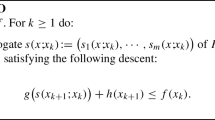

We introduce new optimized first-order methods for smooth unconstrained convex minimization. Drori and Teboulle (Math Program 145(1–2):451–482, 2014. doi:10.1007/s10107-013-0653-0) recently described a numerical method for computing the N-iteration optimal step coefficients in a class of first-order algorithms that includes gradient methods, heavy-ball methods (Polyak in USSR Comput Math Math Phys 4(5):1–17, 1964. doi:10.1016/0041-5553(64)90137-5), and Nesterov’s fast gradient methods (Nesterov in Sov Math Dokl 27(2):372–376, 1983; Math Program 103(1):127–152, 2005. doi:10.1007/s10107-004-0552-5). However, the numerical method in Drori and Teboulle (2014) is computationally expensive for large N, and the corresponding numerically optimized first-order algorithm in Drori and Teboulle (2014) requires impractical memory and computation for large-scale optimization problems. In this paper, we propose optimized first-order algorithms that achieve a convergence bound that is two times smaller than for Nesterov’s fast gradient methods; our bound is found analytically and refines the numerical bound in Drori and Teboulle (2014). Furthermore, the proposed optimized first-order methods have efficient forms that are remarkably similar to Nesterov’s fast gradient methods.

Notes

The problem \(\mathcal {B}_{\mathrm {P}}(\varvec{h},N,d,L,R)\) was shown to be independent of d in [17]; thus this paper’s results are independent of d.

Substituting \(\varvec{x}' = \frac{1}{R}\varvec{x}\) and \(\breve{f} (\varvec{x}') = \frac{1}{LR^2}f(R\varvec{x}')\in \mathcal {F}_1(\mathbb {R} ^d)\) in problem (P), we get \(\mathcal {B}_{\mathrm {P}}(\varvec{h},N,L,R) = LR^2\mathcal {B}_{\mathrm {P}}(\varvec{h},N,1,1)\). This leads to \(\varvec{\hat{h}}_{\mathrm {P}} = \hbox {arg min}_{\varvec{h}} \mathcal {B}_{\mathrm {P}}(\varvec{h},N,L,R) = \hbox {arg min}_{\varvec{h}} \mathcal {B}_{\mathrm {P}}(\varvec{h},N,1,1)\).
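The scaling argument in this note can be checked numerically. The following is a minimal sketch, assuming a hypothetical diagonal quadratic \(f(\varvec{x}) = \frac{1}{2}\sum_i a_i x_i^2\) with \(L = \max_i a_i\) and \(\varvec{x}_* = \varvec{0}\), and assuming the plain gradient method with step \(1/L\) as the first-order method (the names `gradient_method`, `f_breve` and `grad_f_breve` are illustrative, not from the paper). It runs the same method on \(f\) and on the rescaled \(\breve{f}\) and compares the final cost gaps.

```python
import math

def f(x, a):
    """Cost f(x) = 0.5 * sum_i a[i] * x[i]**2; minimizer is 0, L = max(a)."""
    return 0.5 * sum(ai * xi * xi for ai, xi in zip(a, x))

def grad_f(x, a):
    """Gradient of the diagonal quadratic above."""
    return [ai * xi for ai, xi in zip(a, x)]

def gradient_method(grad, x0, L, N):
    """N gradient steps with step size 1/L (illustrative first-order method)."""
    x = list(x0)
    for _ in range(N):
        g = grad(x)
        x = [xi - gi / L for xi, gi in zip(x, g)]
    return x

a = [4.0, 1.0, 0.25]                      # hypothetical curvatures
L = max(a)
x0 = [3.0, -4.0, 12.0]
R = math.sqrt(sum(v * v for v in x0))     # distance from x0 to the minimizer 0
N = 5

# Rescaled problem: x' = x / R and f_breve(x') = f(R x') / (L R^2), which is 1-smooth.
def f_breve(xp):
    return f([R * v for v in xp], a) / (L * R * R)

def grad_f_breve(xp):
    return [g / (L * R) for g in grad_f([R * v for v in xp], a)]

x_N = gradient_method(lambda x: grad_f(x, a), x0, L, N)
xp_N = gradient_method(grad_f_breve, [v / R for v in x0], 1.0, N)

gap = f(x_N, a)                 # f(x_N) - f(x_*), since f(x_*) = 0
gap_scaled = f_breve(xp_N)      # same quantity for the rescaled problem
print(gap, L * R * R * gap_scaled)
```

In this sketch the iterates satisfy \(\varvec{x}_i = R\varvec{x}'_i\), so the two printed values agree up to rounding. This is the per-function version of the identity \(\mathcal {B}_{\mathrm {P}}(\varvec{h},N,L,R) = LR^2\mathcal {B}_{\mathrm {P}}(\varvec{h},N,1,1)\).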

The equivalence of two of Nesterov’s fast gradient methods for smooth unconstrained convex minimization was previously mentioned without details in [18].

The fast gradient method in [12] was originally developed to generalize FGM1 to the constrained case. Here, this second form is introduced for use in later proofs.
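The equivalence of the two forms in the unconstrained case can be illustrated numerically. The sketch below assumes the commonly stated forms of the two methods, which are not reproduced in this excerpt: FGM1 uses the momentum update \(\varvec{x}_{i+1} = \varvec{y}_{i+1} + \frac{t_i - 1}{t_{i+1}}(\varvec{y}_{i+1} - \varvec{y}_i)\), and the second form uses \(\varvec{z}_{i+1} = \varvec{x}_0 - \frac{1}{L}\sum_{k=0}^{i} t_k \nabla f(\varvec{x}_k)\) with \(\varvec{x}_{i+1} = (1 - \frac{1}{t_{i+1}})\varvec{y}_{i+1} + \frac{1}{t_{i+1}}\varvec{z}_{i+1}\). The test function and the names `fgm1` and `fgm2` are hypothetical.

```python
import math

def grad(x, a):
    """Gradient of the illustrative quadratic 0.5 * sum_i a[i] * x[i]**2."""
    return [ai * xi for ai, xi in zip(a, x)]

def next_t(t):
    """Assumed momentum recursion t_{i+1} = (1 + sqrt(1 + 4 t_i^2)) / 2."""
    return (1.0 + math.sqrt(1.0 + 4.0 * t * t)) / 2.0

def fgm1(x0, a, L, N):
    """Assumed first form: gradient step followed by a momentum step."""
    x, y, t = list(x0), list(x0), 1.0
    for _ in range(N):
        g = grad(x, a)
        y_new = [xi - gi / L for xi, gi in zip(x, g)]
        t_new = next_t(t)
        x = [yn + (t - 1.0) / t_new * (yn - yo) for yn, yo in zip(y_new, y)]
        y, t = y_new, t_new
    return y

def fgm2(x0, a, L, N):
    """Assumed second form: average of y and a weighted-gradient sequence z."""
    x, y, t = list(x0), list(x0), 1.0
    acc = [0.0] * len(x0)                 # running sum of t_k * grad f(x_k)
    for _ in range(N):
        g = grad(x, a)
        y = [xi - gi / L for xi, gi in zip(x, g)]
        acc = [s + t * gi for s, gi in zip(acc, g)]
        z = [x0i - s / L for x0i, s in zip(x0, acc)]
        t_new = next_t(t)
        x = [(1.0 - 1.0 / t_new) * yi + zi / t_new for yi, zi in zip(y, z)]
        t = t_new
    return y

a = [4.0, 1.0, 0.25]
x0 = [3.0, -4.0, 12.0]
y1 = fgm1(x0, a, max(a), 10)
y2 = fgm2(x0, a, max(a), 10)
max_diff = max(abs(u - v) for u, v in zip(y1, y2))
print(max_diff)
```

Under these assumed forms, the two routines generate the same primary sequence up to floating-point rounding. This follows from \(\varvec{z}_{i+1} = \varvec{y}_i + t_i(\varvec{y}_{i+1} - \varvec{y}_i)\), which can be verified by induction.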

The vector \(\varvec{e}_{N,i}\) is the \(i\hbox {th}\) standard basis vector in \(\mathbb {R} ^{N}\), having 1 for the \(i\hbox {th}\) entry and zero for all other entries.

References

1. Allen-Zhu, Z., Orecchia, L.: Linear coupling: an ultimate unification of gradient and mirror descent (2015). arXiv:1407.1537

2. Beck, A., Teboulle, M.: A fast iterative shrinkage-thresholding algorithm for linear inverse problems. SIAM J. Imaging Sci. 2(1), 183–202 (2009). doi:10.1137/080716542

3. CVX Research, Inc.: CVX: Matlab software for disciplined convex programming, version 2.0 (2012). http://cvxr.com/cvx

4. Drori, Y.: Contributions to the complexity analysis of optimization algorithms. Ph.D. thesis, Tel-Aviv Univ., Israel (2014)

5. Drori, Y., Teboulle, M.: Performance of first-order methods for smooth convex minimization: a novel approach. Math. Program. 145(1–2), 451–482 (2014). doi:10.1007/s10107-013-0653-0

6. Grant, M., Boyd, S.: Graph implementations for nonsmooth convex programs. In: Blondel, V., Boyd, S., Kimura, H. (eds.) Recent Advances in Learning and Control, Lecture Notes in Control and Information Sciences, pp. 95–110. Springer, Berlin (2008). http://stanford.edu/~boyd/graph_dcp.html

7. Kim, D., Fessler, J.A.: Optimized first-order methods for smooth convex minimization (2014). arXiv:1406.5468v1

8. Kim, D., Fessler, J.A.: Optimized momentum steps for accelerating X-ray CT ordered subsets image reconstruction. In: Proceedings of 3rd International Meeting on Image Formation in X-ray CT, pp. 103–106 (2014)

9. Kim, D., Fessler, J.A.: An optimized first-order method for image restoration. In: Proceedings of IEEE International Conference on Image Processing (2015). (to appear)

10. Nesterov, Y.: A method of solving a convex programming problem with convergence rate \(O(1/k^2)\). Sov. Math. Dokl. 27(2), 372–376 (1983)

11. Nesterov, Y.: Introductory Lectures on Convex Optimization: A Basic Course. Kluwer, Dordrecht (2004)

12. Nesterov, Y.: Smooth minimization of non-smooth functions. Math. Program. 103(1), 127–152 (2005). doi:10.1007/s10107-004-0552-5

13. Nesterov, Y.: Gradient methods for minimizing composite functions. Math. Program. 140(1), 125–161 (2013). doi:10.1007/s10107-012-0629-5

14. O’Donoghue, B., Candès, E.: Adaptive restart for accelerated gradient schemes. Found. Comput. Math. 15(3), 715–732 (2015). doi:10.1007/s10208-013-9150-3

15. Polyak, B.T.: Some methods of speeding up the convergence of iteration methods. USSR Comput. Math. Math. Phys. 4(5), 1–17 (1964). doi:10.1016/0041-5553(64)90137-5

16. Su, W., Boyd, S., Candès, E.J.: A differential equation for modeling Nesterov’s accelerated gradient method: theory and insights (2015). arXiv:1503.01243

17. Taylor, A.B., Hendrickx, J.M., Glineur, F.: Smooth strongly convex interpolation and exact worst-case performance of first-order methods (2015). arXiv:1502.05666

18. Tseng, P.: Approximation accuracy, gradient methods, and error bound for structured convex optimization. Math. Program. 125(2), 263–295 (2010). doi:10.1007/s10107-010-0394-2

Additional information

This research was supported in part by NIH Grants R01-HL-098686 and U01-EB-018753.

Appendix

1.1 Proof of Lemma 2

We prove that the choice \((\varvec{\hat{r}},\varvec{\hat{\lambda }},\varvec{{\hat{\tau }}},\hat{\gamma })\) in (6.9), (6.10), (6.11) and (6.12) satisfies the feasible conditions (6.14) of (RD1).

Using the definition of \(\varvec{\breve{Q}}(\varvec{r},\varvec{\lambda },\varvec{{\tau }})\) in (6.7), and considering the first two conditions of (6.14), we get

where the last equality comes from \((\varvec{\lambda },\varvec{{\tau }})\in {\varLambda }\), and this reduces to the following recursion:

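We use induction to prove that the solution of (10.1) is

\[\lambda _i = \theta _{i-1}^2\lambda _1, \quad i=1,\ldots ,N,\]

which is equivalent to \(\varvec{\hat{\lambda }}\) (6.10). The base case \(\lambda _1 = \theta _0^2\lambda _1\) holds trivially because \(\theta _0 = 1\), and for \(i=2\) in (10.1), we get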

Then, assuming \(\lambda _i = \theta _{i-1}^2\lambda _1\) for \(i=1,\ldots ,n\) and \(n\le N-1\), and using the second equality in (10.1) for \(i=n+1\), we get

where the last equality uses (3.2). Then we use the first equality in (10.1) to find the value of \(\lambda _1\) as

with \(\theta _N\) in (6.13).

So far, we have derived \(\varvec{\hat{\lambda }}\) (6.10) using some of the conditions in (6.14). Using the last two conditions in (6.14) together with (3.2) and (6.15), we can then derive the following:

which are equivalent to \(\varvec{{\hat{\tau }}}\) (6.11) and \(\hat{\gamma } \) (6.12).
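The two identities invoked repeatedly in this proof follow directly from the closed-form recursions for \(\theta _i\). The derivation below is a sketch under the assumption, not verifiable from this excerpt alone, that (3.2) and (6.15) refer to these identities and that \(\theta _i\) obeys the recursions stated in the comments.

```latex
% Assumed recursions (not reproduced in this excerpt):
%   \theta_0 = 1,
%   \theta_i = \tfrac{1}{2}\bigl(1 + \sqrt{1 + 4\theta_{i-1}^2}\bigr), \quad i = 1,\dots,N-1,
%   \theta_N = \tfrac{1}{2}\bigl(1 + \sqrt{1 + 8\theta_{N-1}^2}\bigr).
\begin{align*}
(2\theta_i - 1)^2 = 1 + 4\theta_{i-1}^2
  &\;\Longrightarrow\; \theta_i^2 - \theta_i = \theta_{i-1}^2,
  \qquad i = 1,\dots,N-1, \\
(2\theta_N - 1)^2 = 1 + 8\theta_{N-1}^2
  &\;\Longrightarrow\; \theta_N^2 - \theta_N = 2\theta_{N-1}^2 .
\end{align*}
```

Each implication is obtained by expanding the square, cancelling the constant 1, and dividing by 4.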

Next, we derive \(\varvec{\hat{r}}\) for the given \(\varvec{\hat{\lambda }}\) (6.10) and \(\varvec{{\hat{\tau }}}\) (6.11). Inserting \(\varvec{{\hat{\tau }}}\) (6.11) into the first two conditions of (6.14), we get

for \(i,k=0,\ldots ,N-1\), and considering (6.5) and (10.2), we get

Finally, using the two equivalent forms (6.2) and (10.3) of  , we get

and this can be easily converted to the choice \(\hat{r}_{i,k}\) in (6.9).

For these given  , we can easily notice that

for \(\varvec{\check{\theta }}= \left( \theta _0,\ldots ,\theta _{N-1},\frac{\theta _N}{2}\right) ^\top \), showing that the choice is feasible in both (RD) and (RD1). \(\square \)

1.2 Proof of (8.2)

We prove that (8.2) holds for the coefficients \(\varvec{\hat{h}}\) (7.1) of OGM1 and OGM2.

We first show the following property using induction:

Clearly, \(\hat{h}_{1,0} = 1 + \frac{2\theta _0 - 1}{\theta _1} = \theta _1\) using (3.2). Assuming \(\sum _{k=0}^{j-1}\hat{h}_{j,k} = \theta _j\) for \(j=1,\ldots ,n\) and \(n\le N-1\), we get

where the last equality uses (3.2) and (6.15).

Then, (8.2) can be easily derived using (3.2) and (6.15) as

\(\square \)
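The row-sum property used in this proof can be checked numerically. The sketch below assumes a recursive form for the coefficients \(\varvec{\hat{h}}\) that is not reproduced in this excerpt: \(\hat{h}_{i+1,k} = \frac{1}{\theta _{i+1}}\bigl(2\theta _k - \sum _{j=k+1}^{i}\hat{h}_{j,k}\bigr)\) for \(k<i\), and \(\hat{h}_{i+1,i} = 1 + \frac{2\theta _i - 1}{\theta _{i+1}}\). The second expression agrees with the base case \(\hat{h}_{1,0}\) stated above. The sketch also assumes \(\theta _0 = 1\), with a factor 4 under the square root for \(i<N\) and 8 for \(i=N\). The function names are illustrative.

```python
import math

def thetas(N):
    """Assumed recursion: theta_0 = 1; factor 4 for i < N and 8 for i = N."""
    th = [1.0]
    for i in range(1, N + 1):
        c = 8.0 if i == N else 4.0
        th.append((1.0 + math.sqrt(1.0 + c * th[-1] ** 2)) / 2.0)
    return th

def ogm_coefficients(N):
    """Build h[i][k] for 1 <= i <= N, 0 <= k < i from the assumed recursion."""
    th = thetas(N)
    h = [[0.0] * N for _ in range(N + 1)]   # row 0 is unused
    for i in range(N):
        for k in range(i):
            # Sum of the already computed entries in column k, rows k+1..i.
            s = sum(h[j][k] for j in range(k + 1, i + 1))
            h[i + 1][k] = (2.0 * th[k] - s) / th[i + 1]
        h[i + 1][i] = 1.0 + (2.0 * th[i] - 1.0) / th[i + 1]
    return th, h

N = 8
th, h = ogm_coefficients(N)
row_sums = [sum(h[j][:j]) for j in range(1, N + 1)]

for j in range(1, N):
    print(j, row_sums[j - 1], th[j])        # rows 1..N-1: sum equals theta_j
print(N, row_sums[N - 1], (th[N] + 1.0) / 2.0)
```

Under these assumptions, rows \(j=1,\ldots ,N-1\) sum to \(\theta _j\). For the last row, the same computation with the modified recursion for \(\theta _N\) gives \(\frac{1}{2}(\theta _N + 1)\).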

Cite this article

Kim, D., Fessler, J.A. Optimized first-order methods for smooth convex minimization. Math. Program. 159, 81–107 (2016). https://doi.org/10.1007/s10107-015-0949-3

DOI: https://doi.org/10.1007/s10107-015-0949-3